relieff

Rank importance of predictors using ReliefF or RReliefF algorithm

Description

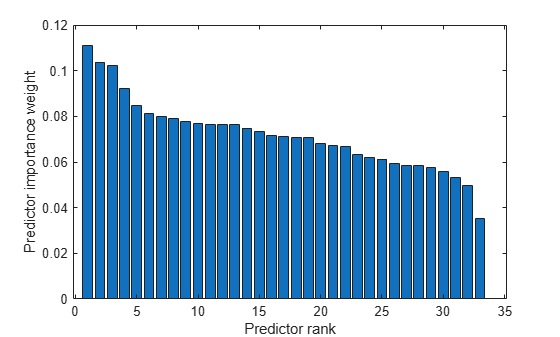

[idx,weights] = relieff(X,y,k) ranks predictors using either the ReliefF or RReliefF algorithm with k nearest neighbors. The input matrix X contains predictor variables, and the vector y contains a response vector. The function returns idx, which contains the indices of the most important predictors, and weights, which contains the weights of the predictors.
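As an illustration of the syntax, the following minimal sketch ranks the four predictors of Fisher's iris data. It assumes the fisheriris sample data set that ships with Statistics and Machine Learning Toolbox is available; the choice of k = 10 is only a starting point, not a recommendation from the function itself.

```matlab
% Load Fisher's iris data: meas is a 150-by-4 numeric matrix of predictors,
% species is a cell array of class labels.
load fisheriris

% Rank the predictors using k = 10 nearest neighbors.
% species is nonnumeric, so relieff performs ReliefF analysis for classification.
[idx,weights] = relieff(meas,species,10);

% idx lists predictor (column) indices, most important first.
disp(idx)

% weights follows the column order of meas, so index it with idx
% to show the weights from most to least important.
disp(weights(idx))
```

Because weights is returned in predictor order rather than rank order, weights(idx) is the form to use when you want the weights sorted by importance.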

If y is numeric, relieff performs RReliefF analysis for regression by default. Otherwise, relieff performs ReliefF analysis for classification using k nearest neighbors per class. For more information on ReliefF and RReliefF, see Algorithms.
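The effect of the response type can be sketched with a numeric response. This example assumes the carsmall sample data set from Statistics and Machine Learning Toolbox; the selection of predictor columns is an arbitrary choice made for illustration.

```matlab
% Load car data. MPG is numeric, so it serves as a regression response.
load carsmall

% Assemble a numeric predictor matrix (columns chosen for illustration only).
X = [Acceleration Cylinders Displacement Horsepower Model_Year Weight];
y = MPG;

% y is numeric, so relieff performs RReliefF analysis for regression by default.
% Rows of the data that contain NaN values are removed by relieff.
[idx,weights] = relieff(X,y,10);

% Predictor indices ordered from most to least important.
disp(idx)
```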

Examples

Input Arguments

Output Arguments

Tips

Predictor ranks and weights usually depend on k. If you set k to 1, then the estimates can be unreliable for noisy data. If you set k to a value comparable with the number of observations (rows) in X, relieff can fail to find important predictors. You can start with k = 10 and investigate the stability and reliability of relieff ranks and weights for various values of k.

relieff removes observations with NaN values.
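One way to investigate how the ranks depend on k is to call relieff in a loop and compare the rankings side by side. The sketch below assumes the fisheriris sample data set; the variable names kValues and ranks, and the particular values of k, are hypothetical choices for illustration.

```matlab
% Load Fisher's iris data (150 observations, 4 predictors, 3 classes).
load fisheriris

% Candidate numbers of nearest neighbors (illustrative values only).
kValues = [1 5 10 20 40];

% Each row of ranks stores the predictor ranking obtained for one value of k.
ranks = zeros(numel(kValues),size(meas,2));
for i = 1:numel(kValues)
    idx = relieff(meas,species,kValues(i));
    ranks(i,:) = idx(:).';
end

% First column is k; the remaining columns are predictor indices,
% most important first.
disp([kValues(:) ranks])
```

If the rows agree over a range of k values, the ranking is stable in that range; rows that change noticeably suggest that the results are sensitive to the choice of k.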

Algorithms

References

[1] Kononenko, I., E. Simec, and M. Robnik-Sikonja. (1997). “Overcoming the myopia of inductive learning algorithms with RELIEFF.” Retrieved from https://link.springer.com/article/10.1023/A:1008280620621

[2] Robnik-Sikonja, M., and I. Kononenko. (1997). “An adaptation of Relief for attribute estimation in regression.” Retrieved from https://www.semanticscholar.org/paper/An-adaptation-of-Relief-for-attribute-estimation-in-Robnik-Sikonja-Kononenko/9548674b6a3c601c13baa9a383d470067d40b896

[3] Robnik-Sikonja, M., and I. Kononenko. (2003). “Theoretical and empirical analysis of ReliefF and RReliefF.” Machine Learning, 53, 23–69.

Version History

Introduced in R2010b

See Also

fscnca | fsrnca | knnsearch | pdist2 | sequentialfs | plotPartialDependence | fsulaplacian | fscmrmr | fsrmrmr