robustfit

Fit robust linear regression

Algorithms

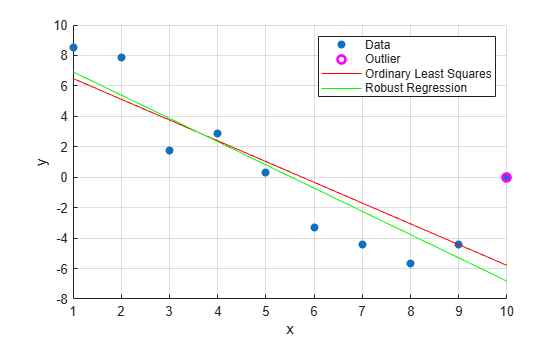

robustfit uses iteratively reweighted least squares to compute the coefficients b. The input wfun specifies the weight function used to compute the weights at each iteration. robustfit estimates the variance-covariance matrix of the coefficient estimates, stats.covb, using the formula inv(X'*X)*stats.s^2. This estimate produces the standard errors stats.se and the coefficient correlations stats.coeffcorr.

In a linear model, observed values of y and their residuals are random variables. Residuals have normal distributions with zero mean but with different variances at different values of the predictors. To put residuals on a comparable scale, robustfit "Studentizes" the residuals. That is, robustfit divides the residuals by an estimate of their standard deviation that is independent of their value. Studentized residuals have t-distributions with known degrees of freedom. robustfit returns the Studentized residuals in stats.rstud.
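The computations described above can be sketched in plain Python for a single predictor with an intercept (X = [1 x]). This is a minimal illustration under stated assumptions, not robustfit's implementation: it assumes Tukey's bisquare weight function with tuning constant 4.685 (assumed here to match robustfit's default wfun), a robust scale of median(|r|)/0.6745, and the common Studentized-residual form r_i/(s*sqrt(1 - h_i)) with leverage h_i taken from inv(X'*X). The helper names (solve_weighted_ls, bisquare, mad_sigma, residuals, irls, fit_stats) are hypothetical, and robustfit's exact stats.s estimate and any internal adjustments may differ.

```python
import statistics


def solve_weighted_ls(x, y, w):
    """Hypothetical helper: weighted least squares for y = b0 + b1*x."""
    sw = sum(w)
    swx = sum(wi * xi for wi, xi in zip(w, x))
    swy = sum(wi * yi for wi, yi in zip(w, y))
    swxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    swxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    # Solve the 2x2 normal equations (X'WX) b = X'Wy by Cramer's rule.
    det = sw * swxx - swx * swx
    b1 = (sw * swxy - swx * swy) / det
    b0 = (swy - b1 * swx) / sw
    return b0, b1


def bisquare(u):
    """Tukey bisquare weight: (1 - u^2)^2 inside |u| < 1, zero outside."""
    return (1.0 - u * u) ** 2 if abs(u) < 1.0 else 0.0


def mad_sigma(r):
    """Assumed robust scale: median absolute residual / 0.6745."""
    return statistics.median(abs(ri) for ri in r) / 0.6745


def residuals(x, y, b):
    return [yi - (b[0] + b[1] * xi) for xi, yi in zip(x, y)]


def irls(x, y, tune=4.685, max_iter=50, tol=1e-10):
    """Iteratively reweighted least squares with bisquare weights."""
    w = [1.0] * len(x)
    b = solve_weighted_ls(x, y, w)  # start from ordinary least squares
    for _ in range(max_iter):
        r = residuals(x, y, b)
        s = mad_sigma(r)
        if s == 0.0:  # exact fit: nothing left to reweight
            break
        # Scale the residuals, recompute the weights, and refit.
        w = [bisquare(ri / (tune * s)) for ri in r]
        b_new = solve_weighted_ls(x, y, w)
        done = max(abs(p - q) for p, q in zip(b_new, b)) < tol
        b = b_new
        if done:
            break
    return b, w


def fit_stats(x, y, b):
    """covb = inv(X'*X)*s^2, standard errors, correlation, Studentized residuals."""
    n = len(x)
    r = residuals(x, y, b)
    s = mad_sigma(r)  # assumption: robustfit's stats.s is computed differently
    sx = sum(x)
    sxx = sum(xi * xi for xi in x)
    det = n * sxx - sx * sx
    xtx_inv = [[sxx / det, -sx / det], [-sx / det, n / det]]
    covb = [[v * s ** 2 for v in row] for row in xtx_inv]
    se = [covb[0][0] ** 0.5, covb[1][1] ** 0.5]
    # Off-diagonal entry of the 2x2 coefficient correlation matrix.
    coeffcorr = covb[0][1] / (se[0] * se[1])
    # Leverage h_i = [1 x_i] * inv(X'*X) * [1 x_i]'.
    h = [xtx_inv[0][0] + 2 * xtx_inv[0][1] * xi + xtx_inv[1][1] * xi * xi
         for xi in x]
    rstud = [ri / (s * (1.0 - hi) ** 0.5) for ri, hi in zip(r, h)]
    return covb, se, coeffcorr, rstud


# Usage: the line y = 2 + 3x with small noise and one gross outlier.
x = [float(i) for i in range(10)]
noise = [0.1, -0.2, 0.15, -0.1, 0.05, -0.05, 0.2, -0.15, 0.1, -0.1]
y = [2.0 + 3.0 * xi + ei for xi, ei in zip(x, noise)]
y[9] += 30.0
b, w = irls(x, y)
covb, se, coeffcorr, rstud = fit_stats(x, y, b)
print("b =", b, "outlier weight =", w[9])
print("se =", se, "coeffcorr =", coeffcorr)
```

In this example the outlier's final bisquare weight drops to zero, so the coefficients stay close to the underlying intercept 2 and slope 3 instead of being pulled toward the outlier as an ordinary least-squares fit would be, and its Studentized residual is far larger than those of the other points.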

Alternative Functionality

robustfit is useful when you simply need the output arguments of the function or when you want to repeat fitting a model multiple times in a loop. If you need to investigate a robust fitted regression model further, create a linear regression model object LinearModel by using fitlm, and set the name-value pair argument 'RobustOpts' to 'on'.

Version History

Introduced before R2006a