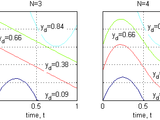

Using cost and output functionals, a continuous function is optimized for varying outputs. Once the parameters are optimal at the current, limited resolution, the dimension of the parameterization is incremented. Locally weighted regression is implemented as a memory model, so previous evaluations need not be repeated. In addition, because nodes are added to the cubic interpolation while the previous nodes are retained, all data from the lower-dimensional parameterization can be transferred to the higher resolution. Results are presented in http://dx.doi.org/10.2316/P.2011.753-031
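Why retaining nodes lets data carry over to the higher resolution can be illustrated with a small sketch. It assumes a natural cubic spline (second derivative zero at both ends); the submission's actual interpolation and boundary conditions may differ. The names `spline_eval` and `add_nodes` are hypothetical. When a node is added and its value is taken from the current interpolant, the refined spline is exactly the same function, because the coarse spline is already a twice-differentiable piecewise cubic on the finer node set and the natural interpolant through those values is unique.

```python
import numpy as np


def natural_spline_second_derivs(x, y):
    """Solve for the second derivatives M_i of a natural cubic spline (M_0 = M_n = 0)."""
    n = len(x) - 1
    h = np.diff(x)
    A = np.zeros((n + 1, n + 1))
    rhs = np.zeros(n + 1)
    A[0, 0] = 1.0  # natural boundary condition at the left end
    A[n, n] = 1.0  # natural boundary condition at the right end
    for i in range(1, n):
        A[i, i - 1] = h[i - 1]
        A[i, i] = 2.0 * (h[i - 1] + h[i])
        A[i, i + 1] = h[i]
        rhs[i] = 6.0 * ((y[i + 1] - y[i]) / h[i] - (y[i] - y[i - 1]) / h[i - 1])
    return np.linalg.solve(A, rhs)


def spline_eval(x, y, t):
    """Evaluate the natural cubic spline through (x, y) at the points t."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    M = natural_spline_second_derivs(x, y)
    t = np.atleast_1d(np.asarray(t, dtype=float))
    # Index of the interval [x_i, x_{i+1}] containing each t (clamped to the ends).
    i = np.clip(np.searchsorted(x, t, side="right") - 1, 0, len(x) - 2)
    h = x[i + 1] - x[i]
    a = x[i + 1] - t
    b = t - x[i]
    return (M[i] * a**3 / (6 * h) + M[i + 1] * b**3 / (6 * h)
            + (y[i] / h - M[i] * h / 6) * a
            + (y[i + 1] / h - M[i + 1] * h / 6) * b)


def add_nodes(x, y, x_add):
    """Increase the resolution: keep the old nodes, add new ones, and take
    the new node values from the current interpolant."""
    x_new = np.union1d(x, x_add)  # sorted, old nodes retained
    y_new = spline_eval(x, y, x_new)
    return x_new, y_new


x = np.linspace(0.0, 1.0, 5)
y = np.array([0.0, 1.0, 0.0, -1.0, 0.5])
x_fine, y_fine = add_nodes(x, y, [0.3])
t = np.linspace(0.0, 1.0, 101)
print(np.max(np.abs(spline_eval(x, y, t) - spline_eval(x_fine, y_fine, t))))
```

The printed difference is at round-off level. In this sketch the higher-dimensional search therefore starts from exactly the function found at the lower resolution, with the old node values unchanged.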

The main file is DimIncreaseSearchOptimal_fmin_Hist_9.m. It is set up for a problem in which the output is the integral of the input function and the cost is the integral of the squared distance to a sine wave. As the number of nodes used to describe the input waveform increases, the cost approaches the ideal cost. Because the amount of data required grows exponentially with the number of parameters describing the input waveform, the 64-bit version of MATLAB may be necessary, depending on the final dimension. The locally weighted regression can change as additional data is gathered, which can upset the Hessian update calculations, so modified nonlinear programming files are included to protect the Hessian.
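How a locally weighted regression memory model produces estimates that shift as data accumulates can be seen in a minimal sketch. It assumes a Gaussian kernel with a fixed bandwidth and a local linear model; these choices and the class name `LWRMemory` are hypothetical and not taken from the submission. Every evaluation is stored, and each query refits a weighted least-squares model centred on the query point, returning a value and a gradient estimate.

```python
import numpy as np


class LWRMemory:
    """Hypothetical memory model: stores every (parameters, value) pair and
    answers queries with a Gaussian-weighted local linear fit."""

    def __init__(self, bandwidth=0.5):
        self.bandwidth = bandwidth
        self.X = []  # stored parameter vectors
        self.y = []  # stored function values

    def add(self, x, value):
        self.X.append(np.asarray(x, dtype=float))
        self.y.append(float(value))

    def predict(self, q):
        """Return (value, gradient) estimated at the query point q."""
        q = np.asarray(q, dtype=float)
        X = np.vstack(self.X)
        y = np.asarray(self.y)
        d = X - q  # offsets of stored points from the query
        w = np.exp(-np.sum(d**2, axis=1) / (2.0 * self.bandwidth**2))
        # Local linear model: value + gradient . (x - q)
        A = np.hstack([np.ones((len(y), 1)), d])
        sw = np.sqrt(w)
        coef, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
        return coef[0], coef[1:]


memory = LWRMemory(bandwidth=0.5)
for a in (-1.0, 0.0, 1.0):
    for b in (-1.0, 0.0, 1.0):
        memory.add([a, b], 3.0 + 2.0 * a - b)  # samples of a plane

value_before, grad_before = memory.predict([0.2, -0.3])
memory.add([0.2, -0.3], 5.0)  # a new evaluation off the plane
value_after, grad_after = memory.predict([0.2, -0.3])
print(value_before, value_after)
```

On the planar samples the fit is exact (value 3.7, gradient [2, -1]); after one more evaluation the estimate at the same point moves. Under these assumptions, this is the kind of change in the underlying model that a quasi-Newton Hessian update does not expect between iterations.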

Cite As

Alan Jennings (2026). Unbounded Resolution for Function Approximation (https://www.mathworks.com/matlabcentral/fileexchange/37650-unbounded-resolution-for-function-approximation), MATLAB Central File Exchange. Retrieved .

General Information

- Version 1.0.0.0 (43.9 KB)

MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux

| Version | Published | Release Notes | Action |
|---|---|---|---|
| 1.0.0.0 | | | |