Automating Image Registration with MATLAB

By Garima Sharma and Andy Thé, MathWorks

Image registration is the process of aligning images from two or more data sets. It involves integrating the images to create a composite view, improving the signal-to-noise ratio, and extracting information that would be impossible to obtain from a single image. Image registration is used in remote sensing, medical imaging, cartography, and other applications that rely on obtaining precise information from images—for example, discovering from satellite images how an area became flooded, or detecting tumors from MRI scans.

Determining an effective image registration approach is situation-dependent and can be a complex, time-consuming process. It requires careful selection of a transformation model that maps points in one image to the corresponding points in the other, and of a method for comparing the images to identify the parameters required to align them.

There are two well-known approaches to the process of automatic image registration: feature-based and intensity-based registration algorithms. In this article we will use a fever-detection example to illustrate an intensity-based automatic image registration workflow based on imregister() and related capabilities in Image Processing Toolbox™. This workflow is a fast and effective way to integrate images from different cameras.

Image Registration Glossary

Reference (fixed) image: Image in the target orientation, specified as a 2D or 3D grayscale image

Target (moving) image: Image to be transformed into alignment with the reference image, specified as a 2D or 3D grayscale image

Intensity-based registration: The alignment of images based on their relative intensity patterns

Feature-based registration: The alignment of images using feature detection, extraction, and matching

Fever-Detection Example: Goals and Challenges

During the outbreak of severe acute respiratory syndrome (SARS) in 2003, Taiwan’s Taoyuan International Airport began screening passengers for fever symptoms to contain the spread of the deadly virus. Since it was impossible to examine each passenger individually, clinicians used infrared thermography, a noninvasive technique for detecting fever by analyzing infrared images of thermal data.

While this approach is effective, it can be challenging to implement. Infrared cameras are extremely sensitive to environmental conditions, and they must be correctly calibrated to account for all the elements that can affect the temperature reading—including ambient room temperature, relative humidity, reflective surfaces, and the distance of the subject from the camera. Effective thermal screening also depends on consistent identification of a body part that can produce reliable thermal information—in our example, the area around the eyes.

We will build a screening thermography prototype using MATLAB®, a FLIR infrared (IR) camera, and a webcam. The IR camera can measure facial temperatures in increments of 100 millikelvins, while the webcam provides more detailed information on the facial features. By registering the images from both sources, we will be able to locate the eye region in the webcam image (Figure 1) and measure the temperature of that region in the IR camera image.

Acquiring the Images and Calibrating the IR Camera

Using Image Acquisition Toolbox™ we capture the images from the webcam and the IR camera and import them into the MATLAB workspace. The IR camera uses the GigE Vision® interface, while the webcam uses the standard DirectShow® interface.

To calibrate the IR camera, we adjust for subject distance, humidity, emissivity (the relative power of a surface to emit heat by radiation), and other characteristics. During image acquisition, the atmospheric temperature was 295.15 K, and the emissivity of the wall and the subjects was 0.98.

Visualizing the Images

We view the IR image using the imshow() function in Image Processing Toolbox. Because we have captured 16-bit data, in which the actual temperature readings were measured in 100 mK increments, we perform a contrast adjustment to scale the data before displaying the image on the monitor (Figure 2).
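The contrast adjustment described above can be sketched as follows. This is a minimal illustration rather than the code used for the article: the synthetic frame irRaw, its size, and its temperature range are assumptions standing in for real camera data.

```matlab
% Synthetic stand-in for a 16-bit IR frame (assumption: one count = 100 mK).
rng(0);                                         % reproducible noise
irRaw = uint16(2950 + 150 * rand(240, 320));    % roughly 295 K to 310 K

% The raw counts occupy only a small part of the uint16 range, so
% displaying them directly would look almost uniformly dark. Rescale the
% observed minimum and maximum to [0, 1] before display.
irScaled = mat2gray(irRaw);

figure;
imshow(irScaled);
title('Contrast-adjusted IR frame (synthetic)');
```

Calling imshow(irRaw, []) applies the same minimum-to-maximum scaling at display time without creating a second array.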

We display the two images in the same figure window using imshowpair().

This function provides several visualization options, including 'falsecolor', for creating a composite RGB image using different color bands, and 'blend', for visualizing alpha blended images (Figure 3).
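As a rough sketch of these options, the snippet below compares a sample image with a shifted copy of itself. The image pair is an assumption chosen so the example runs without camera hardware; it is not the IR and webcam data from the article.

```matlab
fixed  = imread('cameraman.tif');        % sample image shipped with the toolbox
moving = imtranslate(fixed, [10 5]);     % shift 10 px right and 5 px down

% Intensity differences appear in green and magenta; matching areas are gray.
figure; imshowpair(fixed, moving, 'falsecolor');

% Alpha-blended overlay of the two images.
figure; imshowpair(fixed, moving, 'blend');

% imfuse builds the same kind of composite as an array instead of displaying it.
composite = imfuse(fixed, moving, 'falsecolor');
```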

Registering the Images

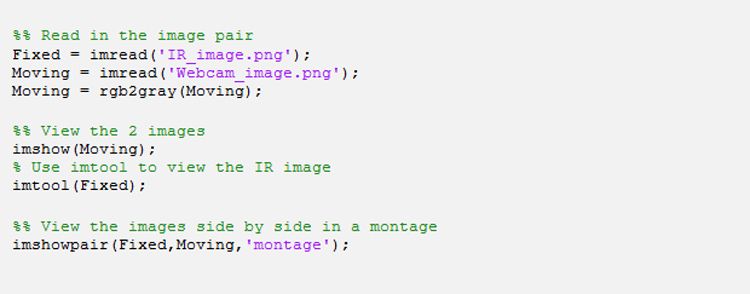

We begin the registration process by designating the IR image as the fixed image and the webcam image as the moving image. The fixed image is the static reference. Our goal is to align the moving image with the fixed image. Since intensity-based image registration algorithms require grayscale images, we convert the color webcam image to grayscale using rgb2gray().

To align the images, we use the Image Processing Toolbox imregister() function. In addition to a pair of images, intensity-based automatic image registration requires a metric, an optimizer, and a transformation type. We obtain the 'metric' and 'optimizer' values using imregconfig() with the 'multimodal' option. We then plug the returned values into imregister() as a starting point for the image registration.
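The configuration and registration calls described above might look like the following sketch. The image pair is an assumption: a sample color photo stands in for the webcam image, and a shifted, inverted grayscale copy stands in for the second modality. The variable names are illustrative.

```matlab
% Stand-in image pair (assumption, not the article's data).
webcamRGB = imread('peppers.png');               % sample image shipped with MATLAB
moving    = rgb2gray(webcamRGB);                 % imregister needs grayscale input
fixed     = imcomplement(imtranslate(moving, [8 -5]));

% 'multimodal' returns a OnePlusOneEvolutionary optimizer and a
% MattesMutualInformation metric with default settings.
[optimizer, metric] = imregconfig('multimodal');

% The transformation type, optimizer, and metric are passed explicitly.
registered = imregister(moving, fixed, 'translation', optimizer, metric);

figure; imshowpair(fixed, registered);
```

Because the multimodal optimizer is stochastic, the quality of the alignment can vary from run to run, so the result is best judged visually.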

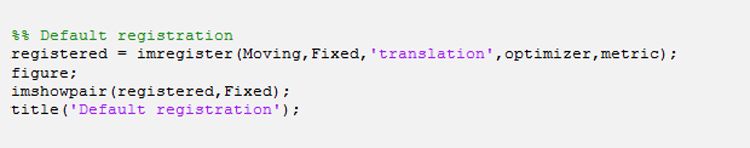

To begin the registration process, we pass the simplest transformation type, 'translation', to imregister() and view the result with a call to imshowpair(). The outlines of the subject in the two images are somewhat misaligned (Figure 4). The gaps between the images around the head and shoulders indicate issues with both scale and rotation.

Note that getting good results from optimization-based image registration frequently involves multiple modifications of optimizer and metric values. Note, too, that while imshowpair() in its default mode works well for the images in our example, it might not work for all image pairs. It is best to explore all the visualization styles in imshowpair(), such as 'falsecolor', 'diff', 'blend', and 'montage', to identify the best one for a specific image pair.

To account for the scale and rotation distortion, we switch the transformation type in imregister() from 'translation' to 'similarity'.
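A minimal sketch of this switch is shown below, using a sample image that has been scaled and rotated to mimic the distortion. The test pair and the optimizer adjustments are assumptions for illustration; suitable values depend on the images being registered.

```matlab
fixed  = imread('cameraman.tif');                 % sample image shipped with the toolbox
% Shrink by 10% and rotate by 5 degrees to create a misaligned copy.
moving = imrotate(imresize(fixed, 0.9), 5, 'bilinear', 'crop');

[optimizer, metric] = imregconfig('multimodal');

% Illustrative tuning: a smaller initial search radius and more iterations
% often help the optimizer converge on harder transformations.
optimizer.InitialRadius     = optimizer.InitialRadius / 3.5;
optimizer.MaximumIterations = 300;

% 'similarity' allows translation, rotation, and uniform scaling.
registered = imregister(moving, fixed, 'similarity', optimizer, metric);

figure; imshowpair(fixed, registered);
```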

We now have a reasonably accurate registered image in which the eyes are closely aligned (Figure 5).

The green and magenta areas are present because the images come from different sources. They do not indicate misregistration.

Detecting the Eyes and Reading the Temperature

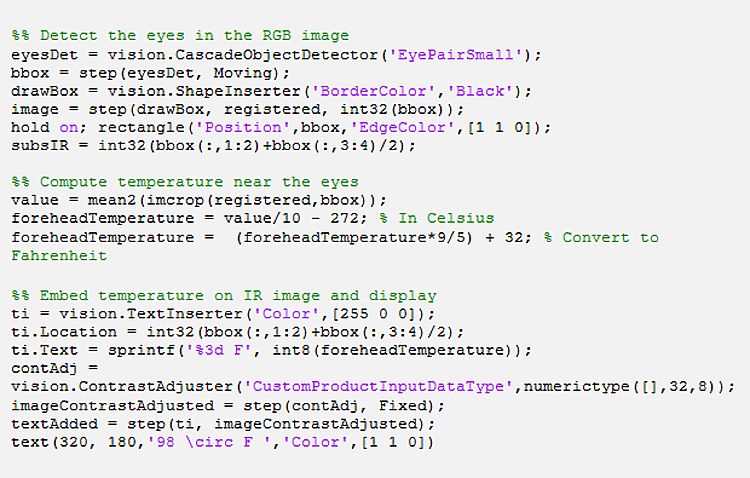

To detect the eyes, we use the cascade object detector in Computer Vision Toolbox™. This object detector uses the Viola-Jones algorithm, which detects the eyes using Haar-like features and a multistage Gentle AdaBoost classifier. We then draw a bounding box around the eyes to highlight the region of interest on the registered image.
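The detection step could be sketched as follows. The sample photo and the choice of the 'EyePairBig' model are assumptions; the article does not state which pretrained model was used.

```matlab
% Sample photo shipped with Computer Vision Toolbox (a stand-in for the
% registered webcam image).
img = imread('visionteam.jpg');

% 'EyePairBig' selects a pretrained model for a pair of eyes.
eyeDetector = vision.CascadeObjectDetector('EyePairBig');

% Each row of bboxes is [x y width height]; the result is empty if no
% eye pair is found. Older releases use step(eyeDetector, img) instead.
bboxes = eyeDetector(img);

% Draw the bounding boxes only when something was detected.
annotated = img;
if ~isempty(bboxes)
    annotated = insertShape(img, 'Rectangle', bboxes, 'LineWidth', 3);
end
figure; imshow(annotated);
```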

Because we have registered the images, we can use the bounding box enclosing the eyes in the webcam image to sample temperature values near the eyes in the infrared image. We convert this reading from the camera's 100 mK units to degrees Fahrenheit and display it near the eyes on the registered image. We see that the subject does not have a fever (Figure 6).
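The sampling and unit conversion can be sketched as below. The uniform synthetic frame, the bounding box coordinates, and the use of the mean over the region are all assumptions; the article does not specify how the region is summarized.

```matlab
% Synthetic stand-ins (assumptions): a uniform IR frame at 310.0 K stored
% in counts of 100 mK, and an eye bounding box as [x y width height].
irRaw = uint16(3100 * ones(240, 320));
bbox  = [100 80 120 40];

% Extract the pixels inside the bounding box (rows follow y, columns follow x).
rows = bbox(2) : bbox(2) + bbox(4) - 1;
cols = bbox(1) : bbox(1) + bbox(3) - 1;
roi  = double(irRaw(rows, cols));

% Counts -> kelvins -> degrees Celsius -> degrees Fahrenheit.
tempK = mean(roi(:)) / 10;
tempC = tempK - 273.15;
tempF = tempC * 9/5 + 32;

fprintf('Eye-region temperature: %.1f F\n', tempF);
```

For the uniform 310.0 K frame used here, the result is about 98.3 degrees Fahrenheit. Taking the maximum instead of the mean would be a reasonable alternative if the warmest point in the region is of interest.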

Published 2013 - 92121v00