Modeling System Architecture and Resource Constraints Using Discrete-Event Simulation

By Anuja Apte, MathWorks

Optimizing system resource utilization is a key design objective for system engineers in communications, electronics, and other industries. System resources such as processors, memory, or bandwidth on a communication bus are often shared by various components in the system. To understand the utilization of a shared resource, system engineers must identify constraints on the resource, such as number of processors and memory size, and analyze the effect of input traffic or load on the shared resource.

The time-varying nature of the input traffic makes it difficult to analyze behaviors such as congestion, overload, end-to-end latencies, or packet loss. The challenge is to efficiently model the system's response to changing input traffic patterns. These systems are best modeled as event-driven or activity-based systems, since the input traffic activity places the load on the shared resource. Discrete-event simulation is the simulation paradigm best suited to modeling such systems.

SimEvents extends the Simulink environment for Model-Based Design into the discrete-event simulation domain. In this article, we use SimEvents to analyze resource constraints on three typical systems:

- An Ethernet communication bus

- A real-time operating system (RTOS) with prioritized task execution

- A shared memory management system

In each case, the simulation results will enable us to understand the effects of resource constraints on the system and to determine next steps.

Ethernet Communication Bus

The system in this example is a LAN consisting of three network interface cards (NICs) that share bandwidth on an Ethernet bus. We want to add another NIC and a printer to the bus—but will the bus have enough bandwidth to support them?

To answer this question, we must determine the channel utilization by evaluating the current load on the bus. As utilization increases, the end-to-end latency experienced by a packet increases in a non-linear fashion.
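The nonlinear growth of latency with utilization can be illustrated with a textbook queueing formula. The sketch below uses the M/M/1 result, in which the mean time in the system is the service time divided by (1 - utilization). This is only an assumption chosen for illustration: a CSMA/CD bus is not an M/M/1 queue, and the function name and the 0.5 ms service time are hypothetical.

```python
def mm1_mean_latency(service_time_s: float, utilization: float) -> float:
    """Mean time in system for an M/M/1 queue: T = S / (1 - rho).

    Illustrative only; the SimEvents model in this article is not M/M/1.
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in the range [0, 1)")
    return service_time_s / (1.0 - utilization)


if __name__ == "__main__":
    service_time_s = 0.0005  # hypothetical mean transmission time (0.5 ms)
    for rho in (0.1, 0.5, 0.8, 0.9, 0.95):
        latency_ms = 1000.0 * mm1_mean_latency(service_time_s, rho)
        print(f"utilization {rho:.2f} -> mean latency {latency_ms:.2f} ms")
```

Under this simplified model, raising utilization from 0.5 to 0.9 multiplies the mean latency by five, which is why a heavily used bus leaves little room for additional devices.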

If the Ethernet bus is heavily utilized by the current load, it cannot support additional devices; connecting new devices will increase communication latencies and reduce throughput for all the devices.

Figure 1 shows the SimEvents model of the system. The model includes the application that sends and receives data over the Ethernet bus, a medium-access control (MAC) controller that governs each computer's use of the shared channel, and a T-junction that connects the computer to the network.

The terminator, T-junction, and cable blocks at the bottom of the model represent physical components of the bus. The blocks labeled “Cable” model the communication latency based on the cable length.

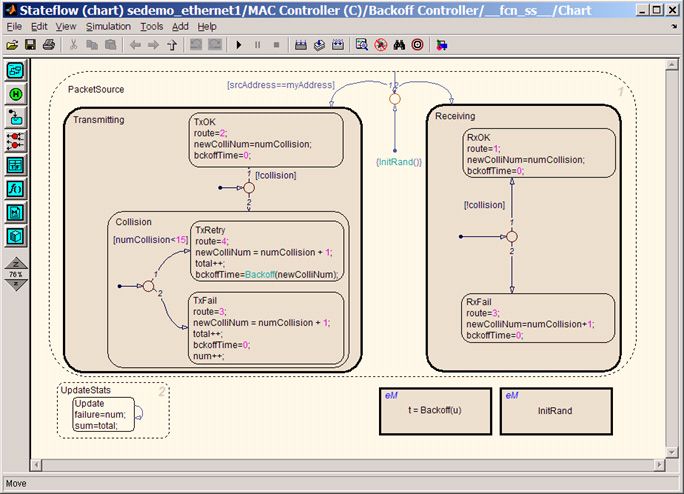

Standard Ethernet networks use a Carrier Sense Multiple Access with Collision Detection (CSMA/CD) protocol to manage use of the shared channel. Within this protocol, a collision occurs if transmissions from two computers compete for use of the channel. The colliding packets can make additional attempts to access the channel. The number of attempts and the time intervals between them are determined by a binary exponential backoff algorithm—implemented in the MAC Controller subsystem in our example. The Stateflow chart in Figure 2 shows the implementation of the binary exponential backoff algorithm.

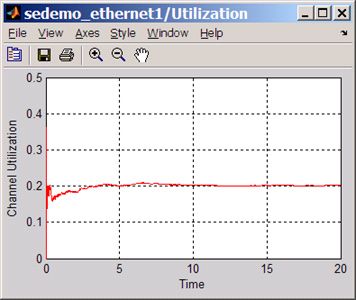

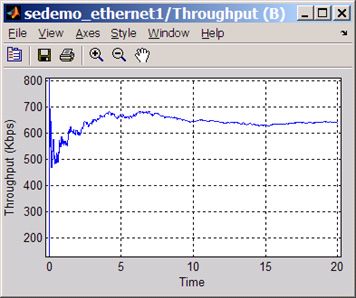
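For readers who prefer code to a state chart, the binary exponential backoff rule can be sketched in a few lines of Python. The constants below follow the commonly cited values for 10 Mbps Ethernet (a 51.2 microsecond slot time, a contention window that stops growing after 10 collisions, and a frame discarded after 16 failed attempts). Whether the Stateflow chart in Figure 2 uses exactly these values is an assumption, and the function name is hypothetical.

```python
import random

SLOT_TIME_S = 51.2e-6  # slot time for 10 Mbps Ethernet (512 bit times)
BACKOFF_LIMIT = 10     # window stops doubling after this many collisions
MAX_ATTEMPTS = 16      # the frame is discarded after this many failed attempts


def backoff_delay(collision_count, rng=random):
    """Return the wait in seconds after the n-th consecutive collision.

    Returns None when the frame should be discarded instead of retried.
    """
    if collision_count < 1:
        raise ValueError("collision_count must be at least 1")
    if collision_count >= MAX_ATTEMPTS:
        return None
    k = min(collision_count, BACKOFF_LIMIT)
    # Pick a whole number of slot times uniformly from 0 .. 2^k - 1.
    slots = rng.randint(0, 2 ** k - 1)
    return slots * SLOT_TIME_S


if __name__ == "__main__":
    rng = random.Random(1)
    for n in (1, 2, 3, 10, 15, 16):
        print(n, backoff_delay(n, rng))
```

Because the window doubles with each collision, stations that collide repeatedly spread their retries over a longer interval, which lowers the chance of colliding again.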

In this setup, all applications transmit at an average rate of 100 packets per second, and the packet size varies from 64 bytes to 1500 bytes. Simulation of this system enables us to visualize characteristics such as the utilization of the Ethernet bus, the throughput for a particular computer, and the average latency for message transmission. Figure 3a shows the overall channel utilization, or the proportion of time that the channel is in use. We can also visualize other characteristics, such as the throughput of node B (Figure 3b).

Channel utilization is low, indicating that the bus might be able to support additional traffic. We can now incorporate the traffic from additional devices into the model and study their effect on overall channel utilization and the throughput of individual devices.
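A quick back-of-the-envelope check of the offered load is a useful complement to the simulation. The sketch below rests on several assumptions that the article does not state: a 10 Mbps bus, packet sizes uniformly distributed between 64 and 1500 bytes, new devices that transmit at the same rate as the existing ones, and no framing overhead or retransmissions. The function name is hypothetical.

```python
BUS_RATE_BPS = 10e6                  # assumed bus rate (not given in the article)
PACKETS_PER_S = 100                  # average rate per application, from the text
MEAN_PACKET_BYTES = (64 + 1500) / 2  # assumes a uniform size distribution


def offered_utilization(num_nodes, packets_per_s, mean_packet_bytes, bus_rate_bps):
    """Fraction of the bus rate consumed by the offered traffic."""
    offered_bps = num_nodes * packets_per_s * mean_packet_bytes * 8
    return offered_bps / bus_rate_bps


if __name__ == "__main__":
    for nodes in (3, 5):  # current NICs, then with one more NIC and a printer
        u = offered_utilization(nodes, PACKETS_PER_S, MEAN_PACKET_BYTES, BUS_RATE_BPS)
        print(f"{nodes} nodes -> offered utilization {u:.1%}")
```

Under these assumptions the offered load rises from roughly 19% to roughly 31% of the bus rate. The estimate ignores collisions and backoff, so the simulation remains the reference for actual channel utilization and latency.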

Next steps could include testing the model with different parameter sets and traffic patterns by using SimEvents with SystemTest to perform Monte Carlo analysis on the model.

Real-Time Operating System with Prioritized Task Execution

This example models the prioritized task execution in an RTOS. Tasks with different priority levels share the processor in such a system. Typical tasks include low-priority application tasks in the main loop of the RTOS and high-priority interrupt tasks that invoke interrupt service routines (ISRs). Execution of an ISR delays the processing of application tasks, since the application tasks wait in the task queue while the ISR is executing.

Ideally, the number of tasks waiting in the task queue should not increase and the RTOS should return to the idle task to avoid excessive processing delays. How can we verify that the RTOS satisfies this requirement?

To answer this question we must model the shared processor and its interaction with application tasks and interrupts. Specifically, the model should demonstrate priority-based task execution and preemption. Figure 4 shows the SimEvents model of such a system.

The Task Token Generators section contains subsystems that generate high-priority tokens for interrupts and low-priority tokens for application tasks. The tokens carry an attribute (TaskExecTime) that indicates the execution time for that task. The Task Token Manager section contains the task queue and the processor shared by the input tasks. The Priority-Based Task Queue sorts the currently queued tasks according to priority. Interrupt task tokens entering this queue preempt the application task token being served in the processor. Upon preemption, the application task returns to the Priority-Based Task Queue. This task resumes its processing when the interrupt service routine is complete. The dashed function-call signal lines are used to generate tasks whose execution depends on the completion of another task.
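The preempt-and-resume behavior described above can be sketched outside SimEvents as a small event-driven loop. The following Python is a minimal illustration, not the model in Figure 4: the `Task` class, the `simulate` function, and the example task values are hypothetical, and a lower `priority` number is assumed to mean higher priority.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Task:
    priority: int                            # lower value = higher priority
    arrival: float                           # arrival time in seconds
    name: str = field(compare=False)
    remaining: float = field(compare=False)  # execution time still needed


def simulate(tasks):
    """Preemptive-resume priority scheduling on one processor.

    Returns {task name: completion time}. Mutates each task's `remaining`.
    """
    pending = sorted(tasks, key=lambda t: t.arrival)
    ready = []      # heap ordered by (priority, arrival)
    finish = {}
    running = None
    now = 0.0
    i = 0
    while i < len(pending) or ready or running is not None:
        if running is None and not ready:
            now = max(now, pending[i].arrival)  # processor idle: jump ahead
        while i < len(pending) and pending[i].arrival <= now:
            heapq.heappush(ready, pending[i])   # admit tasks that have arrived
            i += 1
        if running is not None:
            heapq.heappush(ready, running)      # let new arrivals compete
        running = heapq.heappop(ready)          # highest priority wins
        next_arrival = pending[i].arrival if i < len(pending) else float("inf")
        if running.remaining <= next_arrival - now:
            now += running.remaining            # task runs to completion
            finish[running.name] = now
            running = None
        else:
            running.remaining -= next_arrival - now  # interrupted by an arrival
            now = next_arrival
    return finish


if __name__ == "__main__":
    demo = [
        Task(priority=2, arrival=0.0, name="app", remaining=5.0),
        Task(priority=1, arrival=2.0, name="isr", remaining=1.0),
    ]
    print(simulate(demo))
```

In the demo, the application task is preempted at time 2, waits while the interrupt task runs for 1 time unit, and then resumes, so it completes at time 6 instead of time 5.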

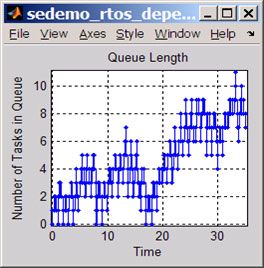

We simulate this model and generate a plot showing the number of tasks in the task queue changing over simulation time (Figure 5).

The plot indicates that the number of tasks waiting to be processed increases over time and that the task queue does not return to an empty state. It seems that the processor is not fast enough to support the high input rate of tasks.

Since we cannot control the input rate of tasks, next steps might include exploring the use of a faster processor to reduce the number of tasks waiting in the task queue.
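A rough way to reason about how much faster the processor would need to be is to compare the offered processor load with the available capacity. The sketch below sums arrival rate times mean execution time over the task classes. A load of 1 or more means the queue cannot drain on average. All numbers and function names here are hypothetical, since the article does not list the task rates or execution times used in the model.

```python
def processor_load(task_classes):
    """Sum of (arrival rate per second * mean execution time in seconds)."""
    return sum(rate * exec_time for rate, exec_time in task_classes)


def min_speedup(task_classes, target_load=0.8):
    """Speed factor needed to bring the load down to target_load (at least 1)."""
    if not 0.0 < target_load < 1.0:
        raise ValueError("target_load must be between 0 and 1")
    return max(1.0, processor_load(task_classes) / target_load)


if __name__ == "__main__":
    # Hypothetical workload: (arrivals per second, mean execution time in seconds)
    workload = [
        (200, 0.001),  # interrupt tasks
        (50, 0.018),   # application tasks
    ]
    print(f"load = {processor_load(workload):.2f}")
    print(f"speedup needed for 80% load = {min_speedup(workload):.3f}x")
```

With this hypothetical workload the load is 1.1, so the queue grows without bound, and a processor about 1.4 times faster would bring the load down to 80%. A check like this only addresses average behavior. The simulation is still needed to see bursts and preemption delays.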

Shared Memory Management

The system in this example is a communication device on a custom hardware board or a system on a chip that manages a buffer shared by the transmit buffering and receive buffering functions. Messages are dropped when the buffer is full. A low packet drop rate matters more for the receive buffer than for the transmit buffer, because packets dropped from the transmit buffer can be retransmitted.

The quality of service (QoS) requirements limit the probability of losing received packets to 1%. How do we determine whether the design has enough memory to satisfy this requirement? To answer this question we need to model behaviors such as packet loss from the receive buffer and its relation to the size of the shared memory.

Figure 6 shows the SimEvents model of this system. The upper part of the model describes a receive buffer, where messages arrive from an I/O device such as a universal asynchronous receiver transmitter (UART). The lower part of the model describes a transmit buffer, where messages wait before transmission.

Both receive and transmit buffers model the following processes:

- Generation of variable-size messages. The traffic-generation process generates messages with variable size. Each message consumes a certain space from the shared memory, depending on its size.

- Regulation of the messages that cannot be stored in the buffer. When a buffer size crosses a user-defined threshold, the Message Flow Regulation subsystems begin to drop incoming messages.

- Storage of messages in a buffer. The receive and transmit buffers share memory, and the receive buffer is given a preference. The memory available for transmitting messages is limited to that which is unused by the receive buffer. The Transmit Buffer and Receive Buffer subsystems both have input signals labeled "cap" that represent the capacity of each buffer.

- Service of messages. The block labeled Rx Message Server models the delay in forwarding messages over the I/O bus that connects to other components. The block labeled Tx Message Server models the delay in transmitting messages.
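The regulation and storage processes can be sketched as a small class that accepts or drops each message against a capacity supplied by the caller, much like the "cap" input signal. This is a simplified illustration under stated assumptions: the class name, the `threshold_fraction` parameter, and the message sizes are hypothetical, the receive buffer is assumed to have the whole memory as its capacity, and the sketch does not model what happens when transmit data already occupies memory that the receive buffer later needs.

```python
class RegulatedBuffer:
    """Buffer that drops incoming messages once occupancy would cross a threshold."""

    def __init__(self, threshold_fraction=1.0):
        self.threshold_fraction = threshold_fraction  # hypothetical user setting
        self.used = 0      # bytes currently stored
        self.offered = 0   # messages offered so far
        self.dropped = 0   # messages dropped so far

    def offer(self, size, cap):
        """Try to store a message of `size` bytes given the current capacity `cap`."""
        self.offered += 1
        if self.used + size > self.threshold_fraction * cap:
            self.dropped += 1
            return False
        self.used += size
        return True

    def release(self, size):
        """Free the memory of a message that has been served."""
        self.used = max(0, self.used - size)

    def loss_rate(self):
        return self.dropped / self.offered if self.offered else 0.0


if __name__ == "__main__":
    TOTAL_BYTES = 8192
    rx, tx = RegulatedBuffer(), RegulatedBuffer()
    rx.offer(6000, cap=TOTAL_BYTES)            # assumption: rx may use all memory
    tx.offer(3000, cap=TOTAL_BYTES - rx.used)  # tx gets what rx leaves unused
    tx.offer(2000, cap=TOTAL_BYTES - rx.used)
    print(f"rx loss {rx.loss_rate():.0%}, tx loss {tx.loss_rate():.0%}")
```

Passing the capacity on every call keeps the transmit buffer's limit tied to the current receive occupancy, which mirrors the statement that transmit memory is limited to what the receive buffer leaves unused.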

During simulation, the Rx Message Loss Rate block displays the packet loss rate for the receive buffer. As Figure 6 shows, the packet loss rate at the receive buffer is 6%, which does not satisfy the QoS requirement of 1%.

To study the effect of memory size on this packet loss rate, we simulate this system with increased memory size. The following packet loss rates are observed for these simulation runs:

| Memory Size | Rx Packet Loss Without Priority-Based QoS |
|---|---|
| 8192 | 6.6% |
| 10240 | 4.4% |
| 16384 | 0% |
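The comparison against the QoS requirement can be automated when many memory sizes are simulated. The short sketch below uses only the three results reported in the table and the 1% limit. The function name is hypothetical, and the result says nothing about memory sizes that were not simulated.

```python
# Receive packet loss (percent) by memory size, taken from the table above.
RX_LOSS_PERCENT = {8192: 6.6, 10240: 4.4, 16384: 0.0}
QOS_LIMIT_PERCENT = 1.0


def smallest_compliant_size(loss_by_size, limit_percent):
    """Return the smallest simulated memory size that meets the loss limit."""
    compliant = [size for size, loss in loss_by_size.items() if loss <= limit_percent]
    return min(compliant) if compliant else None


if __name__ == "__main__":
    print(smallest_compliant_size(RX_LOSS_PERCENT, QOS_LIMIT_PERCENT))
```

Among the simulated sizes, only 16384 meets the requirement. Sizes between 10240 and 16384 would need their own simulation runs before any conclusion could be drawn about them.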

These results indicate that packet loss rates decrease as memory size increases. As a system engineer, however, you might want to explore other techniques to reduce the packet loss rate, such as implementing prioritized regulation of messages (dropping low-priority messages before high-priority messages) and clearing the oldest messages from a buffer when the buffer size crosses the threshold. An advanced version of this model shows that the packet loss rate can be reduced using these techniques. The following table shows the packet loss rates with this advanced model.

| Memory Size | Rx Packet Loss With Priority-Based QoS (Low Priority) | Rx Packet Loss With Priority-Based QoS (High Priority) |
|---|---|---|
| 8192 | 1.13% | 0% |
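The two techniques mentioned above, dropping low-priority messages first and clearing the oldest messages, can be combined in a single admission rule. The sketch below is one possible interpretation and not the advanced SimEvents model: the class name, the capacity and threshold values, and the exact eviction policy are assumptions made for illustration.

```python
from collections import deque


class PriorityRegulatedBuffer:
    """Drops low-priority arrivals above a threshold and evicts the oldest
    stored messages to make room for high-priority arrivals."""

    def __init__(self, capacity, threshold):
        self.capacity = capacity    # total bytes available
        self.threshold = threshold  # occupancy above which low priority is dropped
        self.queue = deque()        # (size, high_priority), oldest first
        self.used = 0
        self.dropped = {"low": 0, "high": 0}

    def _count_drop(self, high_priority):
        self.dropped["high" if high_priority else "low"] += 1

    def offer(self, size, high_priority):
        if size > self.capacity:
            self._count_drop(high_priority)  # can never fit
            return False
        if not high_priority and self.used + size > self.threshold:
            self._count_drop(False)          # regulate low-priority traffic
            return False
        while self.used + size > self.capacity:
            old_size, old_high = self.queue.popleft()  # clear the oldest message
            self.used -= old_size
            self._count_drop(old_high)
        self.queue.append((size, high_priority))
        self.used += size
        return True


if __name__ == "__main__":
    buf = PriorityRegulatedBuffer(capacity=100, threshold=60)
    buf.offer(50, high_priority=False)
    buf.offer(20, high_priority=False)  # dropped: would cross the threshold
    buf.offer(40, high_priority=True)
    buf.offer(30, high_priority=True)   # evicts the oldest stored message
    print(buf.dropped, buf.used)
```

In the demo, both losses fall on low-priority messages while every high-priority message is stored, which is the qualitative pattern the table reports for the advanced model.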

Benefits of this Approach

This article illustrates the value of discrete-event simulation using SimEvents to perform the following design tasks:

- Identify constraints on shared resources and bottlenecks in a system

- Simulate the effects of variable input traffic, such as congestion, packet loss, and increased end-to-end latencies

- Explore different design techniques and algorithms to optimize resource allocation

The real strength of this approach comes from the integration of SimEvents with MATLAB and Simulink. In addition, integration with tools such as SystemTest and Stateflow enables many other design tasks, such as Monte Carlo analysis or implementing control logic in event-driven systems for Model-Based Design.

Published 2008 - 91564v00
