What Is Reinforcement Learning?

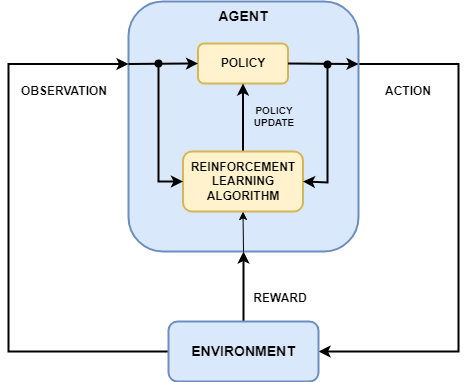

Reinforcement learning is a goal-directed computational approach where a computer learns to perform a task by interacting with an unknown dynamic environment. This learning approach enables a computer to make a series of decisions to maximize the cumulative reward for the task without human intervention and without being explicitly programmed to achieve the task. The following diagram shows a general representation of a reinforcement learning scenario.

The goal of reinforcement learning is to train an agent to complete a task within an unknown environment. The agent receives observations and a reward from the environment and sends actions to the environment. The reward is a measure of how successful an action is with respect to completing the task goal.

The agent contains two components: a policy and a learning algorithm.

The policy is a mapping that selects actions based on the observations from the environment. Typically, the policy is a function approximator with tunable parameters, such as a deep neural network.

The learning algorithm continuously updates the policy parameters based on the actions, observations, and reward. The goal of the learning algorithm is to find an optimal policy that maximizes the cumulative reward received during the task.

In other words, reinforcement learning involves an agent learning the optimal behavior through repeated trial-and-error interactions with the environment without human involvement.

As an example, consider the task of parking a vehicle using an automated driving system. The goal of this task is for the vehicle computer (agent) to park the vehicle in the correct position and orientation. To do so, the controller uses readings from cameras, accelerometers, gyroscopes, a GPS receiver, and lidar (observations) to generate steering, braking, and acceleration commands (actions). The action commands are sent to the actuators that control the vehicle. The resulting observations depend on the actuators, sensors, vehicle dynamics, road surface, wind, and many other less-important factors. All these factors, that is, everything that is not the agent, make up the environment in reinforcement learning.

To learn how to generate the correct actions from the observations, the computer repeatedly tries to park the vehicle using a trial-and-error process. To guide the learning process, you provide a signal that is one when the car successfully reaches the desired position and orientation and zero otherwise (reward). During each trial, the computer selects actions using a mapping (policy) initialized with some default values. After each trial, the computer updates the mapping to maximize the reward (learning algorithm). This process continues until the computer learns an optimal mapping that successfully parks the car.

Reinforcement Learning Workflow

The general workflow for training an agent using reinforcement learning includes the following steps.

Formulate problem — Define the task for the agent to learn, including how the agent interacts with the environment and any primary and secondary goals the agent must achieve.

Create environment — Define the environment within which the agent operates, including the interface between agent and environment and the environment dynamic model. For more information, see Reinforcement Learning Environments.

Define reward — Specify the reward signal that the agent uses to measure its performance against the task goals and how to calculate this signal from the environment. For more information, see Define Reward and Observation Signals in Custom Environments.

Create agent — Create the agent, which includes defining a policy approximator (actor) an value function approximator (critic) and configuring the agent learning algorithm. For more information, see Create Policies and Value Functions and Reinforcement Learning Agents.

Train agent — Train the agent approximators using the defined environment, reward, and agent learning algorithm. For more information, see Train Reinforcement Learning Agents.

Simulate agent — Evaluate the performance of the trained agent by simulating the agent and environment together. For more information, see Train Reinforcement Learning Agents.

Deploy policy — Deploy the trained policy approximator using, for example, generated GPU code. For more information, see Deploy Trained Reinforcement Learning Policies.

Training an agent using reinforcement learning is an iterative process. Decisions and results in later stages can require you to return to an earlier stage in the learning workflow. For example, if the training process does not converge to an optimal policy within a reasonable amount of time, you might have to update some of the following before retraining the agent:

Training settings

Learning algorithm configuration

Policy and value function (actor and critic) approximators

Reward signal definition

Action and observation signals

Environment dynamics

Related Examples

- Train Reinforcement Learning Agent in MDP Environment

- Train Reinforcement Learning Agent in Basic Grid World

- Create Simulink Environment and Train Agent