Fairness Metrics in Modelscape

This example shows how to detect bias in a credit card data set using a suite of metrics in Modelscape™ software. The metrics are built on the fairnessMetrics object.

You use Modelscape tools to set thresholds for these metrics and to produce reports whose appearance is consistent with other Modelscape validation reports.

You then assess this data based on fifteen metrics using a risk fairness metrics handler object and document the results in a Microsoft® Word document.

Load Data and Create Risk Fairness Metrics Handler Object

Load a Modelscape bias detection object that is constructed from fairnessMetrics objects. This object contains metrics for model predictions. The object contains four bias metrics and eleven group metrics, including the false negative rate and the rate of positive prediction. These metrics are based on data with the attributes "AgeGroup", "ResStatus", and "OtherCC". To evaluate data without model predictions, you need only the disparate impact and statistical parity difference metrics.

load FairnessEvaluator.mat

disp(fairnessData)

fairnessMetrics with properties:

SensitiveAttributeNames: {'AgeGroup' 'ResStatus' 'OtherCC'}

ReferenceGroup: {'45 < Age <= 60' 'Home Owner' 'Yes'}

ResponseName: 'Y'

PositiveClass: 1

BiasMetrics: [9×7 table]

GroupMetrics: [9×20 table]

ModelNames: 'Model1'

Properties, Methods
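The two data-level bias metrics named above can be illustrated with a minimal sketch in base MATLAB. The toy predictions and group labels are hypothetical and are not taken from FairnessEvaluator.mat. The sketch assumes the common convention in which the statistical parity difference is the group rate of positive predictions minus the reference-group rate, and the disparate impact is the ratio of the two rates. It does not call any Modelscape function.

```matlab
% Hypothetical toy data (not from FairnessEvaluator.mat).
% pred: 1 = positive prediction; group: 1 = reference group, 2 = comparison group.
pred  = [1 0 0 0 1 1 1 0]';
group = [1 1 1 1 2 2 2 2]';

% Rate of positive predictions within each group.
pRef   = mean(pred(group == 1));
pGroup = mean(pred(group == 2));

% Assumed convention: difference and ratio relative to the reference group.
spd = pGroup - pRef;    % statistical parity difference
di  = pGroup / pRef;    % disparate impact

fprintf('SPD = %.2f, DI = %.2f\n', spd, di);
```

Under this convention, the reference group always has a statistical parity difference of 0 and a disparate impact of 1.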

Construct a Modelscape fairness metrics handler that holds one metric object for every bias and group metric. RiskFairnessMetricsHandler is a Modelscape MetricsHandler object. For more examples and information about the properties of these objects, see Metrics Handlers.

riskFairnessMetrics(fairnessData)

ans =

RiskFairnessMetricsHandler with properties:

Accuracy: [1×1 mrm.data.validation.fairness.Accuracy]

RateOfPositivePredictions: [1×1 mrm.data.validation.fairness.RateOfPositivePredictions]

StatisticalParityDifference: [1×1 mrm.data.validation.fairness.StatisticalParityDifference]

EqualOpportunityDifference: [1×1 mrm.data.validation.fairness.EqualOpportunityDifference]

DisparateImpact: [1×1 mrm.data.validation.fairness.DisparateImpact]

AverageAbsoluteOddsDifference: [1×1 mrm.data.validation.fairness.AverageAbsoluteOddsDifference]

FalseNegativeRate: [1×1 mrm.data.validation.fairness.FalseNegativeRate]

FalsePositiveRate: [1×1 mrm.data.validation.fairness.FalsePositiveRate]

TruePositiveRate: [1×1 mrm.data.validation.fairness.TruePositiveRate]

TrueNegativeRate: [1×1 mrm.data.validation.fairness.TrueNegativeRate]

FalseDiscoveryRate: [1×1 mrm.data.validation.fairness.FalseDiscoveryRate]

FalseOmissionRate: [1×1 mrm.data.validation.fairness.FalseOmissionRate]

PositivePredictiveValue: [1×1 mrm.data.validation.fairness.PositivePredictiveValue]

NegativePredictiveValue: [1×1 mrm.data.validation.fairness.NegativePredictiveValue]

RateOfNegativePredictions: [1×1 mrm.data.validation.fairness.RateOfNegativePredictions]
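As a reminder of what the group metrics listed above measure, the following sketch computes them from confusion-matrix counts using the standard textbook definitions. The counts are hypothetical, the variable names are illustrative, and the code uses only base MATLAB rather than any Modelscape function.

```matlab
% Hypothetical confusion-matrix counts for a single group.
tp = 30; fn = 10; fp = 20; tn = 40;
n  = tp + fn + fp + tn;

tpr  = tp / (tp + fn);   % true positive rate
fnr  = fn / (tp + fn);   % false negative rate, equal to 1 - tpr
fpr  = fp / (fp + tn);   % false positive rate
tnr  = tn / (fp + tn);   % true negative rate, equal to 1 - fpr
ppv  = tp / (tp + fp);   % positive predictive value
fdr  = fp / (tp + fp);   % false discovery rate, equal to 1 - ppv
npv  = tn / (tn + fn);   % negative predictive value
fomr = fn / (tn + fn);   % false omission rate, equal to 1 - npv
acc  = (tp + tn) / n;    % accuracy
rpp  = (tp + fp) / n;    % rate of positive predictions
rnp  = (tn + fn) / n;    % rate of negative predictions

fprintf('TPR = %.2f, FNR = %.2f, PPV = %.2f, NPV = %.2f, accuracy = %.2f\n', ...
    tpr, fnr, ppv, npv, acc);
```

The metrics come in complementary pairs that sum to 1, which is why a false negative rate of 1 always accompanies a true positive rate of 0.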

Specify Thresholds for Fairness Metrics

You can specify thresholds for the fairness metrics using the riskFairnessThresholds function. Depending on the metric, riskFairnessThresholds requires one or two threshold levels:

The DisparateImpact, StatisticalParityDifference, and EqualOpportunityDifference metrics, as well as the metrics for rates of positive and negative predictive value, require two inputs. The software treats a metric level between the two inputs as a pass and values outside this range as a failure. For the rates of positive and negative predictive value, the software compares the threshold against the deviation of the rate from the true positive or negative rate.

Other metrics require a single threshold value. The riskFairnessThresholds function assigns a pass or failure status to each metric value according to which side of this threshold the value is on.

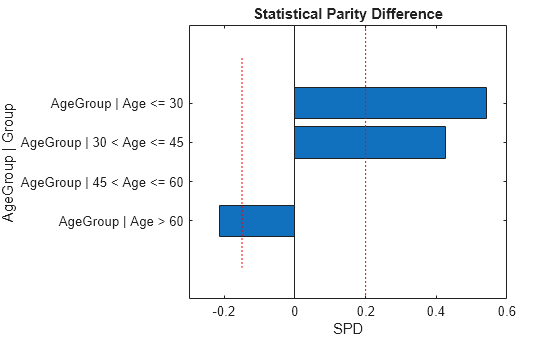

To set the thresholds, specify name-value arguments in the riskFairnessThresholds function, using the metric names as the argument names. For example, set the thresholds for StatisticalParityDifference and FalseNegativeRate. For the statistical parity difference, values in the range (-0.15, 0.2] correspond to a pass. Values outside this range correspond to a failure. For the false negative rate, values of 0.6 or less correspond to a pass. Values above 0.6 correspond to a failure.

fairnessThresholds = riskFairnessThresholds(StatisticalParityDifference=[-0.15 0.2], ...
    FalseNegativeRate=0.6)

fairnessThresholds =

RiskFairnessThresholds with properties:

FalseNegativeRate: 0.6000

StatisticalParityDifference: [-0.1500 0.2000]
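The pass and failure rule behind these two thresholds can be sketched in plain MATLAB. The helpers passInRange and passAtOrBelow are hypothetical names introduced for illustration and are not Modelscape functions. The sketch assumes a half-open pass interval (lower, upper] for two-sided thresholds and, for the false negative rate, that lower values are better. The metric values are illustrative.

```matlab
% Hypothetical helpers for illustration; these are not Modelscape functions.
passInRange   = @(value, range) value > range(1) & value <= range(2);  % pass interval (lo, hi]
passAtOrBelow = @(value, limit) value <= limit;   % assumes lower values are better

% Two-sided threshold, as for StatisticalParityDifference=[-0.15 0.2].
spdValues = [-0.15 0 0.2 0.3];                    % illustrative metric values
spdPass   = passInRange(spdValues, [-0.15 0.2]);  % fails at -0.15 and 0.3

% One-sided threshold, as for FalseNegativeRate=0.6.
fnrValues = [0.6 1];                              % illustrative metric values
fnrPass   = passAtOrBelow(fnrValues, 0.6);        % passes at 0.6, fails at 1

disp(spdPass)
disp(fnrPass)
```

For metrics where higher values are better, such as accuracy, the one-sided comparison would presumably run in the opposite direction.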

Construct a fairness metric handler with these thresholds.

fairnessMetricHandler = riskFairnessMetrics(fairnessData,fairnessThresholds);

Interrogate Fairness Metrics

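Use the report object function to interrogate fairness metrics. This function summarizes all the metrics, sensitive attributes, and attribute groups. Each value in the summary table is the worst level of that metric across all attributes and groups. Because the worst levels of the statistical parity difference and the false negative rate fall outside their thresholds, the model outputs fail both tests. Metrics that have no threshold show a status of <undefined>.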

overallSummary = report(fairnessMetricHandler);
disp(overallSummary)

    Summary Metric                      Value       Status         Diagnostic
    ________________________________    ________    ___________    _______________________________________________________

    Statistical Parity Difference       0.54197     Fail           (0.2, Inf)
    Disparate Impact                    0.050237    <undefined>    <undefined>
    Equal Opportunity Difference        0.39151     <undefined>    <undefined>
    Average Absolute Odds Difference    0.49949     <undefined>    <undefined>
    False Positive Rate                 0.775       <undefined>    <undefined>
    False Negative Rate                 1           Fail           (0.6, Inf)
    True Positive Rate                  0           <undefined>    <undefined>
    True Negative Rate                  0.225       <undefined>    <undefined>
    False Discovery Rate                1           <undefined>    <undefined>
    False Omission Rate                 0.4         <undefined>    <undefined>
    Positive Predictive Value           0           <undefined>    <undefined>
    Negative Predictive Value           0.6         <undefined>    <undefined>
    Rate of Negative Predictions        0.23438     <undefined>    <undefined>
    Rate of Positive Predictions        0.76562     <undefined>    <undefined>
    Accuracy                            0.42188     <undefined>    <undefined>
    Overall                             NaN         Fail           Fails at: Statistical Parity Difference, and 1 other(s)

For more details about these failures, pass extra arguments to report. For example, display detailed data about statistical parity difference. The worst statistical parity difference is in the Age <= 30 group.

spdSummary = report(fairnessMetricHandler,Metrics="StatisticalParityDifference");

disp(spdSummary)

    SensitiveAttribute | Group    StatisticalParityDifference    Status    Diagnostic
    __________________________    ___________________________    ______    ______________________________________________

    AgeGroup | Age <= 30          0.54197                        Fail      (0.2, Inf)
    AgeGroup | 30 < Age <= 45     0.42456                        Fail      (0.2, Inf)
    AgeGroup | 45 < Age <= 60     0                              Pass      (-0.15, 0.2]
    AgeGroup | Age > 60           -0.21242                       Fail      (-Inf, -0.15]
    ResStatus | Home Owner        0                              Pass      (-0.15, 0.2]
    ResStatus | Tenant            0.080908                       Pass      (-0.15, 0.2]
    ResStatus | Other             -0.11961                       Pass      (-0.15, 0.2]
    OtherCC | No                  0.19661                        Pass      (-0.15, 0.2]
    OtherCC | Yes                 0                              Pass      (-0.15, 0.2]
    Overall                       NaN                            Fail      Fails at: AgeGroup | Age <= 30, and 2 other(s)
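The Overall row can be cross-checked by hand. The following sketch copies the nine statistical parity difference values from the table above, applies the (-0.15, 0.2] pass interval, and counts the failing groups. Treating the worst level as the value with the largest absolute deviation from zero is an assumption made for this illustration. It is not a documented Modelscape rule, although it does reproduce the 0.54197 shown in the overall summary.

```matlab
% Group labels and statistical parity difference values copied from the table above.
groups = {'AgeGroup | Age <= 30', 'AgeGroup | 30 < Age <= 45', ...
          'AgeGroup | 45 < Age <= 60', 'AgeGroup | Age > 60', ...
          'ResStatus | Home Owner', 'ResStatus | Tenant', 'ResStatus | Other', ...
          'OtherCC | No', 'OtherCC | Yes'};
spd = [0.54197 0.42456 0 -0.21242 0 0.080908 -0.11961 0.19661 0];

% Pass interval (-0.15, 0.2] from the thresholds set earlier.
isPass  = spd > -0.15 & spd <= 0.2;
failing = groups(~isPass);

% Assumption: the worst level is the largest absolute deviation from zero.
[~, worstIdx] = max(abs(spd));

fprintf('%d failing group(s); worst is %s (%.5f)\n', ...
    numel(failing), groups{worstIdx}, spd(worstIdx));
```

The three failing groups are all in AgeGroup, which matches the diagnostic "Fails at: AgeGroup | Age <= 30, and 2 other(s)".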

For data sets with many predictors or groups, you can focus on a single attribute or attribute group. Alternatively, by omitting the Metrics argument, you can view a single attribute or attribute group and see how this attribute or group performs with respect to all the metrics.

spdAgeGroupReport = report(fairnessMetricHandler, ...
    Metrics="StatisticalParityDifference", ...
    SensitiveAttribute="AgeGroup");
disp(spdAgeGroupReport)

    AgeGroup | Group             StatisticalParityDifference    Status    Diagnostic
    _________________________    ___________________________    ______    ______________________________________________

    AgeGroup | Age <= 30         0.54197                        Fail      (0.2, Inf)
    AgeGroup | 30 < Age <= 45    0.42456                        Fail      (0.2, Inf)
    AgeGroup | 45 < Age <= 60    0                              Pass      (-0.15, 0.2]
    AgeGroup | Age > 60          -0.21242                       Fail      (-Inf, -0.15]
    Overall                      NaN                            Fail      Fails at: AgeGroup | Age <= 30, and 2 other(s)

Visualize Fairness Metrics

The fairness metrics handler supports different visualizations that are specific to bias detection and are not inherited from the generic MetricsHandler functionality. For individual metrics, visualize returns a bar chart across all sensitive attributes and groups, with vertical dotted lines indicating the thresholds. You can also restrict the view to a specific attribute.

visualize(fairnessMetricHandler,Metric="StatisticalParityDifference",SensitiveAttribute="AgeGroup");