kfoldLoss

Regression loss for observations not used in training

Description

L = kfoldLoss(CVMdl) returns the cross-validated regression loss (by default, the mean squared error) obtained by the cross-validated, linear regression model CVMdl. That is, for every fold, kfoldLoss estimates the regression loss for observations that it holds out when it trains using all other observations.

L contains a regression loss for each regularization strength in the linear regression models that compose CVMdl.

L = kfoldLoss(CVMdl,Name,Value) uses additional options specified by one or more Name,Value pair arguments. For example, indicate which folds to use for the loss calculation or specify the regression-loss function.

Input Arguments

CVMdl — Cross-validated, linear regression model

RegressionPartitionedLinear model object

Cross-validated, linear regression model, specified as a RegressionPartitionedLinear model object. You can create a RegressionPartitionedLinear model using fitrlinear and specifying any one of the cross-validation name-value pair arguments, for example, CrossVal.

To obtain estimates, kfoldLoss applies the same data used to cross-validate the linear

regression model (X and Y).

Name-Value Arguments

Specify optional pairs of arguments as

Name1=Value1,...,NameN=ValueN, where Name is

the argument name and Value is the corresponding value.

Name-value arguments must appear after other arguments, but the order of the

pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose

Name in quotes.

Folds — Fold indices to use for response prediction

1:CVMdl.KFold (default) | numeric vector of positive integers

Fold indices to use for response prediction, specified as the comma-separated pair consisting of 'Folds' and a numeric vector of positive integers. The elements of Folds must range from 1 through CVMdl.KFold.

Example: 'Folds',[1 4 10]

Data Types: single | double
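As a minimal sketch of the Folds argument, the following code compares the loss over all folds with the loss over a subset of folds. The small synthetic data set and the variable names lossAll and lossSubset are assumptions made for illustration; they do not come from the examples on this page.

```matlab
rng(1) % For reproducibility
n = 500;
X = randn(n,5);                          % small synthetic predictor matrix (assumption)
Y = X(:,1) + 2*X(:,2) + 0.3*randn(n,1);  % response depends on two predictors

CVMdl = fitrlinear(X,Y,'KFold',5);       % 5-fold cross-validated linear model

lossAll = kfoldLoss(CVMdl);                     % uses folds 1 through CVMdl.KFold
lossSubset = kfoldLoss(CVMdl,'Folds',[1 3 5]);  % uses folds 1, 3, and 5 only
```

Every index in Folds must lie between 1 and CVMdl.KFold, so [1 3 5] is valid here because the model has five folds.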

LossFun — Loss function

'mse' (default) | 'epsiloninsensitive' | function handle

Loss function, specified as the comma-separated pair consisting of 'LossFun' and a built-in loss function name or function handle.

The following table lists the available loss functions. Specify one using its corresponding character vector or string scalar. Also, in the table, $f(x) = x\beta + b$, where:

- β is a vector of p coefficients.
- x is an observation from p predictor variables.
- b is the scalar bias.

| Value | Description |
|---|---|
| 'epsiloninsensitive' | Epsilon-insensitive loss: $\ell[y,f(x)] = \max[0, \lvert y - f(x)\rvert - \varepsilon]$ |
| 'mse' | MSE: $\ell[y,f(x)] = [y - f(x)]^2$ |

'epsiloninsensitive' is appropriate for SVM learners only.

Specify your own function using function handle notation.

Let n be the number of observations in X. Your function must have this signature:

lossvalue = lossfun(Y,Yhat,W)

where:

- The output argument lossvalue is a scalar.
- You choose the function name (lossfun).
- Y is an n-dimensional vector of observed responses. kfoldLoss passes the input argument Y in for Y.
- Yhat is an n-dimensional vector of predicted responses, which is similar to the output of predict.
- W is an n-by-1 numeric vector of observation weights.

Specify your function using 'LossFun',@lossfun.

Data Types: char | string | function_handle
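The following sketch shows both ways of setting LossFun: by built-in name and by function handle. The synthetic data and the names epsLoss, maeLoss, and mae are hypothetical. The weighted mean absolute error is used only as an illustration of the required lossfun(Y,Yhat,W) signature and is not a loss that this page defines.

```matlab
rng(1) % For reproducibility
n = 500;
X = randn(n,5);                          % small synthetic predictor matrix (assumption)
Y = X(:,1) + 2*X(:,2) + 0.3*randn(n,1);

CVMdl = fitrlinear(X,Y,'KFold',5);       % default learner is SVM

% Built-in loss selected by name (appropriate because the learner is SVM)
epsLoss = kfoldLoss(CVMdl,'LossFun','epsiloninsensitive');

% Custom loss: weighted mean absolute error (hypothetical example)
% Y = observed responses, Yhat = predicted responses, W = observation weights
maeLoss = @(Y,Yhat,W) sum(W.*abs(Y - Yhat))/sum(W);
mae = kfoldLoss(CVMdl,'LossFun',maeLoss);
```

The handle returns a scalar for each call, which is what the signature rules above require.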

Mode — Loss aggregation level

'average' (default) | 'individual'

Loss aggregation level, specified as the comma-separated pair

consisting of 'Mode' and 'average' or 'individual'.

| Value | Description |
|---|---|
| 'average' | Returns losses averaged over all folds |
| 'individual' | Returns losses for each fold |

Example: 'Mode','individual'
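A short sketch of the two aggregation levels follows, assuming a small synthetic data set and a single regularization strength. The names avgLoss, foldLoss, and foldSpread are hypothetical. The standard deviation at the end is just one possible way to summarize how much the loss varies between folds.

```matlab
rng(1) % For reproducibility
n = 500;
X = randn(n,5);                          % small synthetic predictor matrix (assumption)
Y = X(:,1) + 2*X(:,2) + 0.3*randn(n,1);

CVMdl = fitrlinear(X,Y,'KFold',5,'Learner','leastsquares');

avgLoss = kfoldLoss(CVMdl);                       % default Mode is 'average': one value
foldLoss = kfoldLoss(CVMdl,'Mode','individual');  % one loss per fold (5-by-1 here)

foldSpread = std(foldLoss);                       % variability of the loss across folds
```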

PredictionForMissingValue — Predicted response value to use for observations with missing predictor values

"median" (default) | "mean" | "omitted" | numeric scalar

Since R2023b

Predicted response value to use for observations with missing

predictor values, specified as "median",

"mean", "omitted", or a

numeric scalar.

| Value | Description |
|---|---|
| "median" | kfoldLoss uses the median of the observed response values in the training-fold data as the predicted response value for observations with missing predictor values. |
| "mean" | kfoldLoss uses the mean of the observed response values in the training-fold data as the predicted response value for observations with missing predictor values. |
| "omitted" | kfoldLoss excludes observations with missing predictor values from the loss computation. |
| Numeric scalar | kfoldLoss uses this value as the predicted response value for observations with missing predictor values. |

If an observation is missing an observed response value or an

observation weight, then kfoldLoss does not use

the observation in the loss computation.

Example: "PredictionForMissingValue","omitted"

Data Types: single | double | char | string

Output Arguments

L — Cross-validated regression losses

numeric scalar | numeric vector | numeric matrix

Cross-validated regression losses, returned as a numeric scalar,

vector, or matrix. The interpretation of L depends

on LossFun.

Let R be the number of regularization strengths in the cross-validated models (stored in numel(CVMdl.Trained{1}.Lambda)) and F be the number of folds (stored in CVMdl.KFold).

- If Mode is 'average', then L is a 1-by-R vector. L(j) is the average regression loss over all folds of the cross-validated model that uses regularization strength j.
- Otherwise, L is an F-by-R matrix. L(i,j) is the regression loss for fold i of the cross-validated model that uses regularization strength j.

To estimate L,

kfoldLoss uses the data that created

CVMdl (see X and Y).
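The size rules above can be checked with a small sketch. The synthetic data and the four-element Lambda vector are assumptions chosen to keep the run short, and LAvg and LInd are hypothetical variable names.

```matlab
rng(1) % For reproducibility
n = 500;
X = randn(n,5);                          % small synthetic predictor matrix (assumption)
Y = X(:,1) + 2*X(:,2) + 0.3*randn(n,1);

Lambda = logspace(-4,-1,4);              % four regularization strengths
CVMdl = fitrlinear(X,Y,'KFold',5,'Lambda',Lambda,'Learner','leastsquares');

R = numel(CVMdl.Trained{1}.Lambda);      % number of regularization strengths
F = CVMdl.KFold;                         % number of folds

LAvg = kfoldLoss(CVMdl);                      % 1-by-R vector
LInd = kfoldLoss(CVMdl,'Mode','individual');  % F-by-R matrix
```

Here LInd(i,j) is the loss for fold i at regularization strength j, and LAvg(j) aggregates column j over the folds.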

Examples

Estimate k-Fold Mean Squared Error

Simulate 10000 observations from this model

$$y = x_{100} + 2x_{200} + e.$$

- $X = \{x_1,\ldots,x_{1000}\}$ is a 10000-by-1000 sparse matrix with 10% nonzero standard normal elements.
- e is random normal error with mean 0 and standard deviation 0.3.

rng(1) % For reproducibility

n = 1e4;

d = 1e3;

nz = 0.1;

X = sprandn(n,d,nz);

Y = X(:,100) + 2*X(:,200) + 0.3*randn(n,1);

Cross-validate a linear regression model using SVM learners.

rng(1); % For reproducibility

CVMdl = fitrlinear(X,Y,'CrossVal','on');

CVMdl is a RegressionPartitionedLinear model. By default, the software implements 10-fold cross validation. You can alter the number of folds using the 'KFold' name-value pair argument.

Estimate the average of the test-sample MSEs.

mse = kfoldLoss(CVMdl)

mse = 0.1735

Alternatively, you can obtain the per-fold MSEs by specifying the name-value pair 'Mode','individual' in kfoldLoss.

Specify Custom Regression Loss

Simulate data as in Estimate k-Fold Mean Squared Error.

rng(1) % For reproducibility

n = 1e4;

d = 1e3;

nz = 0.1;

X = sprandn(n,d,nz);

Y = X(:,100) + 2*X(:,200) + 0.3*randn(n,1);

X = X'; % Put observations in columns for faster training

Cross-validate a linear regression model using 10-fold cross-validation. Optimize the objective function using SpaRSA.

CVMdl = fitrlinear(X,Y,'CrossVal','on','ObservationsIn','columns',...
'Solver','sparsa');

CVMdl is a RegressionPartitionedLinear model. It contains the property Trained, which is a 10-by-1 cell array holding RegressionLinear models that the software trained using the training set.

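Create an anonymous function that measures Huber loss ($\delta$ = 1), that is,

$$L = \frac{1}{\sum_j w_j}\sum_{j=1}^{n} w_j \ell_j,$$

where

$$\ell_j = \begin{cases} 0.5\,\hat{e}_j^{2}, & |\hat{e}_j| \le 1 \\ |\hat{e}_j| - 0.5, & |\hat{e}_j| > 1 \end{cases}$$

and $\hat{e}_j$ is the residual for observation j. Custom loss functions must be written in a particular form. For rules on writing a custom loss function, see the 'LossFun' name-value pair argument.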

huberloss = @(Y,Yhat,W)sum(W.*((0.5*(abs(Y-Yhat)<=1).*(Y-Yhat).^2) + ...
((abs(Y-Yhat)>1).*(abs(Y-Yhat)-0.5))))/sum(W);

The 0.5 offset applies only to residuals with absolute value greater than 1, so the parentheses group abs(Y-Yhat)-0.5 before the multiplication by the indicator. With this grouping, every term is nonnegative.

Estimate the average Huber loss over the folds. Also, obtain the Huber loss for each fold.

mseAve = kfoldLoss(CVMdl,'LossFun',huberloss)

mseFold = kfoldLoss(CVMdl,'LossFun',huberloss,'Mode','individual')

mseAve is a scalar, and mseFold is a 10-by-1 vector that contains one Huber loss per fold.

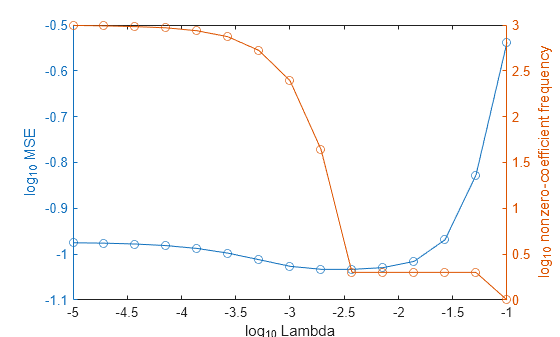

Find Good Lasso Penalty Using Cross-Validation

To determine a good lasso-penalty strength for a linear regression model that uses least squares, implement 5-fold cross-validation.

Simulate 10000 observations from this model

$$y = x_{100} + 2x_{200} + e.$$

- $X = \{x_1,\ldots,x_{1000}\}$ is a 10000-by-1000 sparse matrix with 10% nonzero standard normal elements.
- e is random normal error with mean 0 and standard deviation 0.3.

rng(1) % For reproducibility

n = 1e4;

d = 1e3;

nz = 0.1;

X = sprandn(n,d,nz);

Y = X(:,100) + 2*X(:,200) + 0.3*randn(n,1);

Create a set of 15 logarithmically spaced regularization strengths from $10^{-5}$ through $10^{-1}$.

Lambda = logspace(-5,-1,15);

Cross-validate the models. To increase execution speed, transpose the predictor data and specify that the observations are in columns. Optimize the objective function using SpaRSA.

X = X';

CVMdl = fitrlinear(X,Y,'ObservationsIn','columns','KFold',5,'Lambda',Lambda,...
'Learner','leastsquares','Solver','sparsa','Regularization','lasso');

numCLModels = numel(CVMdl.Trained)

numCLModels = 5

CVMdl is a RegressionPartitionedLinear model. Because fitrlinear implements 5-fold cross-validation, CVMdl contains 5 RegressionLinear models that the software trains on each fold.

Display the first trained linear regression model.

Mdl1 = CVMdl.Trained{1}

Mdl1 =

RegressionLinear

ResponseName: 'Y'

ResponseTransform: 'none'

Beta: [1000x15 double]

Bias: [-0.0049 -0.0049 -0.0049 -0.0049 -0.0049 -0.0048 -0.0044 -0.0037 -0.0030 -0.0031 -0.0033 -0.0036 -0.0041 -0.0051 -0.0071]

Lambda: [1.0000e-05 1.9307e-05 3.7276e-05 7.1969e-05 1.3895e-04 2.6827e-04 5.1795e-04 1.0000e-03 0.0019 0.0037 0.0072 0.0139 0.0268 0.0518 0.1000]

Learner: 'leastsquares'

Mdl1 is a RegressionLinear model object. fitrlinear constructed Mdl1 by training on four of the five folds and holding out the remaining fold for validation. Because Lambda is a sequence of regularization strengths, you can think of Mdl1 as 15 models, one for each regularization strength in Lambda.

Estimate the cross-validated MSE.

mse = kfoldLoss(CVMdl);

Higher values of Lambda lead to predictor variable sparsity, which is a good quality of a regression model. For each regularization strength, train a linear regression model using the entire data set and the same options as when you cross-validated the models. Determine the number of nonzero coefficients per model.

Mdl = fitrlinear(X,Y,'ObservationsIn','columns','Lambda',Lambda,...
'Learner','leastsquares','Solver','sparsa','Regularization','lasso');

numNZCoeff = sum(Mdl.Beta~=0);

In the same figure, plot the cross-validated MSE and frequency of nonzero coefficients for each regularization strength. Plot all variables on the log scale.

figure

[h,hL1,hL2] = plotyy(log10(Lambda),log10(mse),...
log10(Lambda),log10(numNZCoeff));

hL1.Marker = 'o';

hL2.Marker = 'o';

ylabel(h(1),'log_{10} MSE')

ylabel(h(2),'log_{10} nonzero-coefficient frequency')

xlabel('log_{10} Lambda')

hold off

Choose the index of the regularization strength that balances predictor variable sparsity and low MSE (for example, Lambda(10)).

idxFinal = 10;

Extract the model that corresponds to the selected regularization strength.

MdlFinal = selectModels(Mdl,idxFinal)

MdlFinal =

RegressionLinear

ResponseName: 'Y'

ResponseTransform: 'none'

Beta: [1000x1 double]

Bias: -0.0050

Lambda: 0.0037

Learner: 'leastsquares'

idxNZCoeff = find(MdlFinal.Beta~=0)

idxNZCoeff = 2×1

100

200

EstCoeff = Mdl.Beta(idxNZCoeff)

EstCoeff = 2×1

1.0051

1.9965

MdlFinal is a RegressionLinear model with one regularization strength. The nonzero coefficients EstCoeff are close to the coefficients that simulated the data.
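The index in this example was chosen by inspecting the plot. As a rough programmatic starting point, the sketch below locates the regularization strength with the smallest cross-validated MSE and then the largest strength whose MSE stays within a tolerance of that minimum. The smaller dense data set, the 5% tolerance, and the names idxMin, idxSparse, and tol are assumptions for illustration and are not part of the example above.

```matlab
rng(1) % For reproducibility
n = 1000;
d = 20;
X = randn(n,d);                          % small dense data set (assumption)
Y = X(:,1) + 2*X(:,2) + 0.3*randn(n,1);

Lambda = logspace(-5,-1,15);             % ascending regularization strengths
CVMdl = fitrlinear(X,Y,'KFold',5,'Lambda',Lambda, ...
    'Learner','leastsquares','Solver','sparsa','Regularization','lasso');

mse = kfoldLoss(CVMdl);                  % 1-by-15 vector, one MSE per strength

[minMSE,idxMin] = min(mse);              % strength with the lowest MSE

tol = 0.05;                              % hypothetical tolerance: 5% above the minimum
idxSparse = find(mse <= (1 + tol)*minMSE,1,'last');  % largest strength within tolerance

lambdaSparse = CVMdl.Trained{1}.Lambda(idxSparse);
```

Because Lambda is in ascending order, idxSparse is never smaller than idxMin, so this rule trades a small increase in MSE for a stronger penalty. Checking the result against a plot of MSE and nonzero-coefficient counts is still advisable.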

Extended Capabilities

GPU Arrays

Accelerate code by running on a graphics processing unit (GPU) using Parallel Computing Toolbox™.

This function fully supports GPU arrays. For more information, see Run MATLAB Functions on a GPU (Parallel Computing Toolbox).

Version History

Introduced in R2016a

R2024a: Specify GPU arrays (requires Parallel Computing Toolbox)

kfoldLoss fully supports GPU arrays.

R2023b: Specify predicted response value to use for observations with missing predictor values

Starting in R2023b, when you predict or compute the loss, some regression models allow you to specify the predicted response value for observations with missing predictor values. Specify the PredictionForMissingValue name-value argument to use a numeric scalar, the training set median, or the training set mean as the predicted value. When computing the loss, you can also specify to omit observations with missing predictor values.

This table lists the object functions that support the

PredictionForMissingValue name-value argument. By default, the

functions use the training set median as the predicted response value for observations with

missing predictor values.

| Model Type | Model Objects | Object Functions |
|---|---|---|
| Gaussian process regression (GPR) model | RegressionGP, CompactRegressionGP | loss, predict, resubLoss, resubPredict |
| Gaussian process regression (GPR) model | RegressionPartitionedGP | kfoldLoss, kfoldPredict |
| Gaussian kernel regression model | RegressionKernel | loss, predict |
| Gaussian kernel regression model | RegressionPartitionedKernel | kfoldLoss, kfoldPredict |
| Linear regression model | RegressionLinear | loss, predict |
| Linear regression model | RegressionPartitionedLinear | kfoldLoss, kfoldPredict |
| Neural network regression model | RegressionNeuralNetwork, CompactRegressionNeuralNetwork | loss, predict, resubLoss, resubPredict |
| Neural network regression model | RegressionPartitionedNeuralNetwork | kfoldLoss, kfoldPredict |
| Support vector machine (SVM) regression model | RegressionSVM, CompactRegressionSVM | loss, predict, resubLoss, resubPredict |
| Support vector machine (SVM) regression model | RegressionPartitionedSVM | kfoldLoss, kfoldPredict |

In previous releases, the regression model loss and predict functions listed above used NaN predicted response values for observations with missing predictor values. The software omitted observations with missing predictor values from the resubstitution ("resub") and cross-validation ("kfold") computations for prediction and loss.

See Also

RegressionPartitionedLinear | RegressionLinear | kfoldPredict | loss
