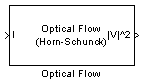

Optical Flow

Estimate object velocities

Libraries: Computer Vision Toolbox / Analysis & Enhancement

Description

The Optical Flow block estimates the direction and speed of object motion between two images, or between one video frame and another, by using either the Horn-Schunck or the Lucas-Kanade method.

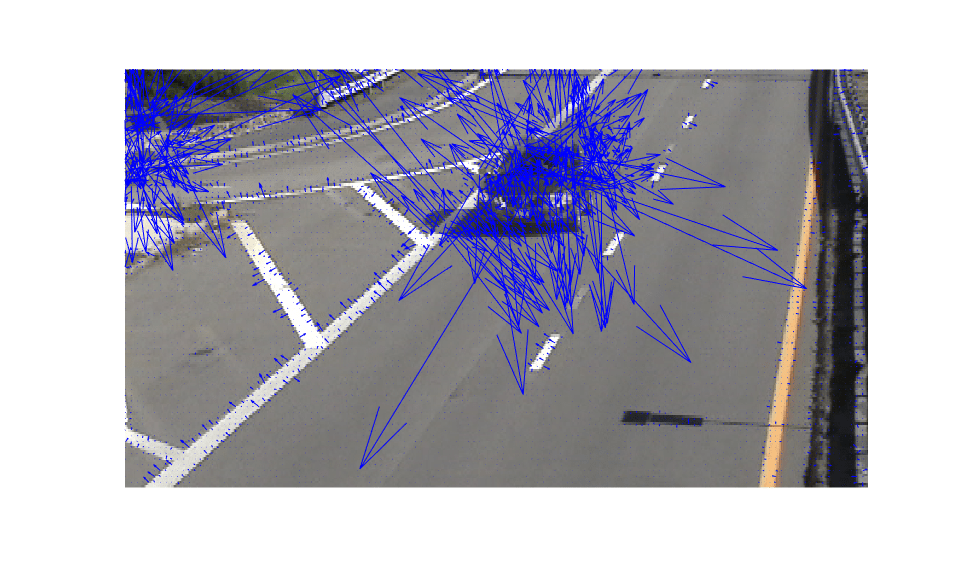

Ports

Input

I/I1 — Image or video frame

scalar | vector | matrix

Image or video frame, specified as a scalar, vector, or matrix. If the Compute optical flow between parameter is set to Two images, the name of this port changes to I1.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64 | fixed point

I2 — Image

scalar | vector | matrix

Image or video frame, specified as a scalar, vector, or matrix.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64 | fixed point

Output

|V|^2 — Velocity magnitudes

scalar | vector | matrix

Velocity magnitudes, returned as a scalar, vector, or matrix.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64 | fixed point

V — Velocity components in complex form

scalar | vector | matrix

Velocity components in complex form, returned as a scalar, vector, or matrix.

Dependencies

To enable this port, set the Velocity output parameter to Horizontal and vertical components in complex form.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64 | fixed point

Parameters

Main Tab

Method — Optical flow calculation method

Horn-Schunck (default) | Lucas-Kanade

Select the method to use to calculate the optical flow: Horn-Schunck or Lucas-Kanade.

Compute optical flow between — Compute optical flow

Current frame and N-th frame back (default) | Two images

Select how to compute the optical flow. Select Two images to compute the optical flow between two images. Select Current frame and N-th frame back to compute the optical flow between two video frames that are N frames apart.

Dependencies

To enable this parameter, use one of these settings:

- Set the Method parameter to Horn-Schunck.

- Set the Method parameter to Lucas-Kanade and the Temporal gradient filter parameter to Difference filter [-1 1].

N — Number of frames

1 (default) | scalar

Enter a scalar value that represents the number of frames between the reference frame and the current frame.

Dependencies

To enable this parameter, set the Compute optical flow between parameter to Current frame and N-th frame back.
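As a rough illustration of what "N frames apart" means, the sketch below pairs each incoming frame with the frame that arrived N steps earlier. The function name `pair_with_nth_frame_back` is hypothetical, and the choice to emit nothing until N earlier frames are available is an assumption; the page does not say how the block treats the first N frames.

```python
from collections import deque


def pair_with_nth_frame_back(frames, n=1):
    """Yield (reference, current) pairs that are n frames apart.

    Hypothetical helper for illustration only; it does not model the
    block's start-up behavior, which the documentation does not describe.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    history = deque(maxlen=n)  # the n most recent earlier frames
    for current in frames:
        if len(history) == n:
            # history[0] is the frame that arrived n steps before `current`
            yield history[0], current
        history.append(current)


pairs = list(pair_with_nth_frame_back(["f0", "f1", "f2", "f3"], n=2))
print(pairs)
```

With N = 2 and four frames, only the last two frames have a reference frame two steps back, so two pairs are produced.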

Smoothness factor — Smoothness factor

1 (default) | positive scalar

Specify the smoothness factor. Enter a large positive scalar value for high relative motion between the two images or video frames. Enter a small positive scalar value for low relative motion.

Dependencies

To enable this parameter, set the Method parameter to Horn-Schunck.

Stop iterative solution — Stop iterative solution

When maximum number of iterations is reached (default) | When velocity difference falls below threshold | Whichever comes first

Specify the method to control when the block's iterative solution process stops. To stop the process when the velocity difference falls below a certain threshold value, select When velocity difference falls below threshold. To stop the process after a certain number of iterations, select When maximum number of iterations is reached. To stop as soon as either condition is met, select Whichever comes first.

Dependencies

To enable this parameter, set the Method parameter to Horn-Schunck.
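The three stopping options can be read as a small decision rule. The following is a minimal sketch of that rule, not the block's implementation: the function `should_stop`, the mode strings, and the `velocity_change` and `threshold` arguments are all hypothetical names introduced for illustration.

```python
def should_stop(mode, iteration, max_iterations, velocity_change, threshold):
    """Return True when an iterative solver should stop (illustrative only).

    mode is one of "max_iterations", "threshold", or "whichever_first";
    these strings are hypothetical stand-ins for the three menu options.
    """
    hit_max = iteration >= max_iterations
    converged = velocity_change < threshold
    if mode == "max_iterations":
        return hit_max
    if mode == "threshold":
        return converged
    if mode == "whichever_first":
        return hit_max or converged
    raise ValueError("unknown mode: " + mode)


# After 3 of 10 iterations, with a velocity change already below the threshold:
print(should_stop("max_iterations", 3, 10, 1e-4, 1e-3))
print(should_stop("whichever_first", 3, 10, 1e-4, 1e-3))
```

In this reading, the "Whichever comes first" option is the logical OR of the other two conditions.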

Maximum number of iterations — Maximum number of iterations

10 (default) | scalar

Specify the maximum number of iterations for the block to perform.

Dependencies

To enable this parameter, set the Method parameter to Horn-Schunck and the Stop iterative solution parameter to When maximum number of iterations is reached or Whichever comes first.

Velocity output — Optical flow output

Magnitude-squared (default) | Horizontal and vertical components in complex form

Specify how to output the optical flow. If you select Magnitude-squared, the block outputs an optical flow matrix where each element is in the form $u^2 + v^2$. If you select Horizontal and vertical components in complex form, the block outputs the optical flow matrix where each element is in the form $u + jv$.
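To make the relationship between the two output forms concrete, here is a small sketch that assumes the magnitude-squared element is $u^2 + v^2$ and the complex element is $u + jv$, with u the horizontal and v the vertical component. The helper names are hypothetical.

```python
def magnitude_squared(u, v):
    """Squared speed of one flow vector."""
    return u * u + v * v


def complex_form(u, v):
    """Horizontal component in the real part, vertical in the imaginary part."""
    return complex(u, v)


u, v = 3.0, -4.0
m = magnitude_squared(u, v)
c = complex_form(u, v)

# The magnitude-squared output can be recovered from the complex output.
print(m, c, abs(c) ** 2)
```

The complex form keeps the direction of motion, while the magnitude-squared form keeps only the speed.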

Temporal gradient filter — Filter used for temporal gradient

Difference filter [-1 1] (default) | Derivative of Gaussian

Specify whether the block solves for u and v using a difference filter or a derivative of a Gaussian filter.

Dependencies

To enable this parameter, set the Method parameter to Lucas-Kanade.

Number of input frames to buffer — Number of input frames to buffer for smoothing

3 (default) | scalar

Specify the number of input frames to buffer for smoothing. This value determines the temporal filter characteristics, such as the standard deviation and the number of filter coefficients.

Dependencies

To enable this parameter, set the Temporal gradient filter parameter to Derivative of Gaussian.

Standard deviation for image smoothing filter — Standard deviation for image smoothing filter

1.5 (default) | scalar

Specify the standard deviation for the image smoothing filter.

Dependencies

To enable this parameter, set the Temporal gradient filter parameter to Derivative of Gaussian.

Standard deviation for gradient smoothing filter — Standard deviation for gradient smoothing filter

1 (default) | scalar

Specify the standard deviation for the gradient smoothing filter.

Dependencies

To enable this parameter, set the Temporal gradient filter parameter to Derivative of Gaussian.

Discard normal flow estimates when constraint equation is ill-conditioned — Discard normal flow estimates

off (default) | on

Select this parameter to set the motion vector to zero when the optical flow constraint equation is ill-conditioned.

Dependencies

To enable this parameter, set the Temporal gradient filter parameter to Derivative of Gaussian.

Output image corresponding to motion vectors (accounts for block delay) — Output image corresponding to motion vectors

off (default) | on

Select this parameter to output the image that corresponds to the motion vectors that the block outputs.

Dependencies

To enable this parameter, set the Temporal gradient filter parameter to Derivative of Gaussian.

Threshold for noise reduction — Threshold for noise reduction

0.0039 (default) | scalar

Specify a scalar value that determines the motion threshold between each image or video frame. The higher the number, the less small movements impact the optical flow calculation.

Dependencies

To enable this parameter, set the Method parameter to Lucas-Kanade.

Data Types Tab

For details on the fixed-point block parameters, see Specify Fixed-Point Attributes for Blocks (DSP System Toolbox).

Block Characteristics

| Characteristic | Value |
| --- | --- |
| Data Types | |
| Multidimensional Signals | |
| Variable-Size Signals | |

Algorithms

Optical Flow Equation

To compute the optical flow between two images, you must solve this optical flow constraint equation:

$$I_x u + I_y v + I_t = 0$$

- $I_x$, $I_y$, and $I_t$ are the spatiotemporal image brightness derivatives.

- $u$ is the horizontal optical flow.

- $v$ is the vertical optical flow.
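A quick numerical check can make the constraint equation concrete. The sketch below assumes the constraint has the usual form $I_x u + I_y v + I_t = 0$ and uses a synthetic brightness ramp that translates with a known velocity; the `brightness` function and the sample point are made up for illustration. The derivatives are estimated with central differences, which are exact for a linear ramp.

```python
U_TRUE, V_TRUE = 1.5, -0.5  # assumed ground-truth velocity in pixels per frame


def brightness(x, y, t):
    """Linear brightness ramp that translates with velocity (U_TRUE, V_TRUE)."""
    return 2.0 * (x - U_TRUE * t) + 3.0 * (y - V_TRUE * t)


def central_difference(f, x, y, t, axis):
    """Central-difference estimate of the derivative of f along one axis."""
    step = {"x": (1, 0, 0), "y": (0, 1, 0), "t": (0, 0, 1)}[axis]
    dx, dy, dt = step
    return (f(x + dx, y + dy, t + dt) - f(x - dx, y - dy, t - dt)) / 2.0


x, y, t = 4, 7, 2
Ix = central_difference(brightness, x, y, t, "x")
Iy = central_difference(brightness, x, y, t, "y")
It = central_difference(brightness, x, y, t, "t")

# The true velocity should satisfy the constraint equation at this point.
residual = Ix * U_TRUE + Iy * V_TRUE + It
print(Ix, Iy, It, residual)
```

Note that a single pixel gives one equation in two unknowns, which is why both methods described next add a further assumption (global smoothness or local constancy).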

Horn-Schunck Method

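By assuming that the optical flow is smooth across the entire image, the Horn-Schunck method estimates a velocity field, $[u\ v]^T$, that minimizes this equation:

$$E = \iint (I_x u + I_y v + I_t)^2 \, dx\,dy + \alpha \iint \left\{ \left(\frac{\partial u}{\partial x}\right)^2 + \left(\frac{\partial u}{\partial y}\right)^2 + \left(\frac{\partial v}{\partial x}\right)^2 + \left(\frac{\partial v}{\partial y}\right)^2 \right\} dx\,dy$$

In this equation, $\frac{\partial u}{\partial x}$ and $\frac{\partial u}{\partial y}$ are the spatial derivatives of the optical velocity component, $u$, and $\alpha$ scales the global smoothness term. The Horn-Schunck method minimizes the previous equation to obtain the velocity field, $[u\ v]$, for each pixel in the image. This method is given by the following equations:

$$u^{k+1}_{x,y} = \bar{u}^{k}_{x,y} - \frac{I_x \left[ I_x \bar{u}^{k}_{x,y} + I_y \bar{v}^{k}_{x,y} + I_t \right]}{\alpha^2 + I_x^2 + I_y^2}$$

$$v^{k+1}_{x,y} = \bar{v}^{k}_{x,y} - \frac{I_y \left[ I_x \bar{u}^{k}_{x,y} + I_y \bar{v}^{k}_{x,y} + I_t \right]}{\alpha^2 + I_x^2 + I_y^2}$$

In these equations, $[u^{k}_{x,y}\ v^{k}_{x,y}]$ is the velocity estimate for the pixel at $(x,y)$, and $[\bar{u}^{k}_{x,y}\ \bar{v}^{k}_{x,y}]$ is the neighborhood average of $[u^{k}_{x,y}\ v^{k}_{x,y}]$. For $k = 0$, the initial velocity is 0.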

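To solve for u and v using the Horn-Schunck method:

1. Compute $I_x$ and $I_y$ by using the Sobel convolution kernel, $\begin{bmatrix} -1 & -2 & -1 \\ 0 & 0 & 0 \\ 1 & 2 & 1 \end{bmatrix}$, and its transposed form for each pixel in the first image.

2. Compute $I_t$ between images 1 and 2 using the $[-1\ 1]$ kernel.

3. Assume the previous velocity to be 0, and compute the average velocity for each pixel using $\begin{bmatrix} 0 & 1 & 0 \\ 1 & 0 & 1 \\ 0 & 1 & 0 \end{bmatrix}$ as a convolution kernel.

4. Iteratively solve for u and v.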
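The iteration in the last step can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the block's implementation: it takes precomputed derivative images as nested lists, uses the update $\bar{u} - I_x (I_x \bar{u} + I_y \bar{v} + I_t) / (\alpha^2 + I_x^2 + I_y^2)$ (and likewise for v), averages the four edge-adjacent neighbors with a divide by 4, and replicates values at the image border. The border handling and the function names are assumptions.

```python
def neighbor_average(f):
    """Mean of the four edge-adjacent neighbors, replicating border values."""
    rows, cols = len(f), len(f[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            up = f[max(r - 1, 0)][c]
            down = f[min(r + 1, rows - 1)][c]
            left = f[r][max(c - 1, 0)]
            right = f[r][min(c + 1, cols - 1)]
            out[r][c] = (up + down + left + right) / 4.0
    return out


def horn_schunck(Ix, Iy, It, alpha=1.0, iterations=10):
    """Run a fixed number of Horn-Schunck updates, starting from zero velocity."""
    rows, cols = len(Ix), len(Ix[0])
    u = [[0.0] * cols for _ in range(rows)]
    v = [[0.0] * cols for _ in range(rows)]
    for _ in range(iterations):
        # Averages are taken from the previous iterate before any update.
        u_bar = neighbor_average(u)
        v_bar = neighbor_average(v)
        for r in range(rows):
            for c in range(cols):
                ix, iy, it = Ix[r][c], Iy[r][c], It[r][c]
                common = (ix * u_bar[r][c] + iy * v_bar[r][c] + it) / (
                    alpha ** 2 + ix * ix + iy * iy
                )
                u[r][c] = u_bar[r][c] - ix * common
                v[r][c] = v_bar[r][c] - iy * common
    return u, v


# Synthetic derivatives for a horizontal ramp shifting right by one pixel:
# Ix = 1, Iy = 0, It = -1 at every pixel.
rows, cols = 3, 4
Ix = [[1.0] * cols for _ in range(rows)]
Iy = [[0.0] * cols for _ in range(rows)]
It = [[-1.0] * cols for _ in range(rows)]
u, v = horn_schunck(Ix, Iy, It, alpha=1.0, iterations=10)
print(u[1][1], v[1][1])
```

For this uniform input, each iteration halves the remaining error, so the estimate moves toward u = 1 and v = 0. A larger `alpha` slows that approach, because the update leans more heavily on the neighborhood average.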

Lucas-Kanade Method

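To solve the optical flow constraint equation for u and v, the Lucas-Kanade method divides the original image into smaller sections and assumes a constant velocity in each section. Then it performs a weighted, least-squares fit of the optical flow constraint equation to a constant model for $[u\ v]^T$ in each section $\Omega$. The method achieves this fit by minimizing this equation:

$$\sum_{x \in \Omega} W^2 \left[ I_x u + I_y v + I_t \right]^2$$

$W$ is a window function that emphasizes the constraints at the center of each section. The solution to the minimization problem is

$$\begin{bmatrix} \sum W^2 I_x^2 & \sum W^2 I_x I_y \\ \sum W^2 I_y I_x & \sum W^2 I_y^2 \end{bmatrix} \begin{bmatrix} u \\ v \end{bmatrix} = - \begin{bmatrix} \sum W^2 I_x I_t \\ \sum W^2 I_y I_t \end{bmatrix}$$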
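The weighted least-squares fit for a single section reduces to a 2-by-2 linear system. The sketch below assumes that system has the standard form, with sums of $W^2 I_x^2$, $W^2 I_x I_y$, and $W^2 I_y^2$ on the left and the negated sums of $W^2 I_x I_t$ and $W^2 I_y I_t$ on the right. The function name and the flat-list input layout are hypothetical, and the sample gradients are synthetic.

```python
def lucas_kanade_section(Ix, Iy, It, W):
    """Solve the weighted least-squares flow for one section.

    Ix, Iy, It, and W are flat lists with one entry per pixel in the section.
    Raises ValueError when the 2-by-2 system is singular.
    """
    a = sum(w * w * ix * ix for w, ix in zip(W, Ix))
    b = sum(w * w * ix * iy for w, ix, iy in zip(W, Ix, Iy))
    c = sum(w * w * iy * iy for w, iy in zip(W, Iy))
    p = -sum(w * w * ix * it for w, ix, it in zip(W, Ix, It))
    q = -sum(w * w * iy * it for w, iy, it in zip(W, Iy, It))

    det = a * c - b * b
    if det == 0:
        raise ValueError("singular system: gradients do not constrain both u and v")
    # Cramer's rule for [[a, b], [b, c]] [u, v]^T = [p, q]^T
    u = (p * c - b * q) / det
    v = (a * q - b * p) / det
    return u, v


# Synthetic gradients that are consistent with a flow of (u, v) = (2, -1).
Ix = [1.0, 0.0, 1.0, 2.0]
Iy = [0.0, 1.0, 1.0, 1.0]
It = [-(ix * 2.0 + iy * -1.0) for ix, iy in zip(Ix, Iy)]
W = [1.0, 1.0, 1.0, 1.0]  # uniform window, chosen only for simplicity

u, v = lucas_kanade_section(Ix, Iy, It, W)
print(u, v)
```

Because the synthetic data satisfies the constraint equation exactly at every pixel, the fit recovers the flow used to generate it, whatever positive weights are chosen.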

When you set the Temporal gradient filter to Difference filter [-1 1], u and v are solved as follows:

1. Compute $I_x$ and $I_y$ using the kernel $[-1\ 8\ 0\ -8\ 1]/12$ and its transposed form.

   If you are working with fixed-point data types, the kernel values are signed fixed-point values with a word length equal to 16 and a fraction length equal to 15.

2. Compute $I_t$ between images 1 and 2 by using the $[-1\ 1]$ kernel.

3. Smooth the gradient components, $I_x$, $I_y$, and $I_t$, by using a separable and isotropic 5-by-5 element kernel whose effective 1-D coefficients are $[1\ 4\ 6\ 4\ 1]/16$. If you are working with fixed-point data types, the kernel values are unsigned fixed-point values with a word length equal to 8 and a fraction length equal to 7.

4. Solve the 2-by-2 linear equations for each pixel using the following method:

   If

   $$A = \begin{bmatrix} a & b \\ b & c \end{bmatrix} = \begin{bmatrix} \sum W^2 I_x^2 & \sum W^2 I_x I_y \\ \sum W^2 I_y I_x & \sum W^2 I_y^2 \end{bmatrix}$$

   then the eigenvalues of A are

   $$\lambda_i = \frac{a + c}{2} \pm \frac{\sqrt{4b^2 + (a - c)^2}}{2}; \quad i = 1, 2$$

   In the fixed-point diagrams, $P = \frac{a + c}{2}$ and $Q = \frac{\sqrt{4b^2 + (a - c)^2}}{2}$.

   The eigenvalues are compared to the threshold, $\tau$, that corresponds to the value you enter for the Threshold for noise reduction parameter. The results fall into one of the following cases.

   Case 1: $\lambda_1 \geq \tau$ and $\lambda_2 \geq \tau$

   A is nonsingular, and the system of equations is solved using Cramer's rule.

   Case 2: $\lambda_1 \geq \tau$ and $\lambda_2 < \tau$

   A is singular (noninvertible), and the gradient flow is normalized to calculate u and v.

   Case 3: $\lambda_1 < \tau$ and $\lambda_2 < \tau$

   The optical flow, u and v, is 0.
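The eigenvalue test can be sketched as a small classifier. This assumes the symmetric matrix A = [a b; b c], the closed-form eigenvalues $(a + c)/2 \pm \sqrt{4b^2 + (a - c)^2}/2$, and a threshold `tau` that is compared to the eigenvalues directly; how the block maps the parameter value to its internal threshold is not stated on this page. The sketch only classifies: it does not compute the normalized gradient flow of Case 2, because the page gives no formula for it. The function names are hypothetical.

```python
import math


def eigenvalues(a, b, c):
    """Eigenvalues of the symmetric matrix [[a, b], [b, c]], largest first."""
    p = (a + c) / 2.0
    q = math.sqrt(4.0 * b * b + (a - c) ** 2) / 2.0
    return p + q, p - q


def classify(a, b, c, tau):
    """Return 1, 2, or 3 according to how the eigenvalues compare to tau."""
    lam1, lam2 = eigenvalues(a, b, c)  # lam1 >= lam2 by construction
    if lam2 >= tau:
        return 1  # both eigenvalues pass: solve with Cramer's rule
    if lam1 >= tau:
        return 2  # only the larger passes: normalized gradient flow
    return 3      # neither passes: flow is set to zero


tau = 0.0039  # default Threshold for noise reduction value, used directly here
print(classify(6.0, 3.0, 3.0, tau))      # well-conditioned gradients
print(classify(4.0, 0.0, 0.0, tau))      # gradient along one direction only
print(classify(0.001, 0.0, 0.002, tau))  # almost no texture
```

Raising `tau` moves more pixels into Case 2 and Case 3, which is consistent with the parameter description: the higher the threshold, the less small movements affect the result.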

Derivative of Gaussian

If you set the Temporal gradient filter parameter to Derivative of Gaussian, u and v are solved using these steps.

1. Compute $I_x$ and $I_y$.

   a. Use a Gaussian filter to perform temporal filtering. Specify the temporal filter characteristics, such as the standard deviation and number of filter coefficients, by using the Number of frames to buffer for temporal smoothing parameter.

   b. Use a Gaussian filter and the derivative of a Gaussian filter to smooth the image by using spatial filtering. Specify the standard deviation and length of the image smoothing filter by using the Standard deviation for image smoothing filter parameter.

2. Compute $I_t$ between images 1 and 2.

   a. Use the derivative of a Gaussian filter to perform temporal filtering. Specify the temporal filter characteristics, such as the standard deviation and number of filter coefficients, by using the Number of frames to buffer for temporal smoothing parameter.

   b. Use the filter described in step 1b to perform spatial filtering on the output of the temporal filter.

3. Smooth the gradient components, $I_x$, $I_y$, and $I_t$, by using a gradient smoothing filter. Use the Standard deviation for gradient smoothing filter parameter to specify the standard deviation and the number of filter coefficients for the gradient smoothing filter.

4. Solve the 2-by-2 linear equations for each pixel using this method:

   If

   $$A = \begin{bmatrix} a & b \\ b & c \end{bmatrix} = \begin{bmatrix} \sum W^2 I_x^2 & \sum W^2 I_x I_y \\ \sum W^2 I_y I_x & \sum W^2 I_y^2 \end{bmatrix}$$

   then the eigenvalues of A are

   $$\lambda_i = \frac{a + c}{2} \pm \frac{\sqrt{4b^2 + (a - c)^2}}{2}; \quad i = 1, 2$$

   When the block finds the eigenvalues, it compares them to the threshold, $\tau$, that corresponds to the value you enter for the Threshold for noise reduction parameter. The results fall into one of the following cases.

   Case 1: $\lambda_1 \geq \tau$ and $\lambda_2 \geq \tau$

   A is nonsingular, so the block solves the system of equations by using Cramer's rule.

   Case 2: $\lambda_1 \geq \tau$ and $\lambda_2 < \tau$

   A is singular (noninvertible), so the block normalizes the gradient flow to calculate u and v.

   Case 3: $\lambda_1 < \tau$ and $\lambda_2 < \tau$

   The optical flow, u and v, is 0.
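The Gaussian and derivative-of-Gaussian filters used in these steps can be illustrated with sampled 1-D kernels. This is a sketch under assumptions: the page does not give the block's filter lengths or normalization, so the `radius` argument, the unit-sum normalization, and the function names are all hypothetical. The derivative kernel uses the identity $g'(x) = -\frac{x}{\sigma^2} g(x)$.

```python
import math


def gaussian_kernel(sigma, radius):
    """Sampled 1-D Gaussian with 2 * radius + 1 taps, normalized to sum to 1."""
    taps = [math.exp(-(x * x) / (2.0 * sigma * sigma))
            for x in range(-radius, radius + 1)]
    total = sum(taps)
    return [t / total for t in taps]


def gaussian_derivative_kernel(sigma, radius):
    """Sampled derivative of the Gaussian above: g'(x) = -(x / sigma^2) * g(x)."""
    g = gaussian_kernel(sigma, radius)
    return [-(x / (sigma * sigma)) * gx
            for x, gx in zip(range(-radius, radius + 1), g)]


sigma = 1.5                          # default image smoothing standard deviation
radius = math.ceil(3 * sigma)        # assumed truncation at about 3 sigma
g = gaussian_kernel(sigma, radius)
dg = gaussian_derivative_kernel(sigma, radius)
print(len(g), sum(g), sum(dg))
```

The smoothing kernel is symmetric and preserves mean brightness, while the derivative kernel is antisymmetric and sums to zero, so it responds only to changes in brightness. A larger standard deviation widens both kernels, which trades spatial or temporal resolution for noise suppression.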

Extended Capabilities

C/C++ Code Generation

Generate C and C++ code using Simulink® Coder™.

Fixed-Point Conversion

Design and simulate fixed-point systems using Fixed-Point Designer™.

Version History

Introduced before R2006a

See Also

Block Matching | Gaussian Pyramid | opticalFlow | opticalFlowHS | opticalFlowLK | opticalFlowLKDoG
