elmannet

Elman neural network

Syntax

elmannet(layerdelays,hiddenSizes,trainFcn)

Description

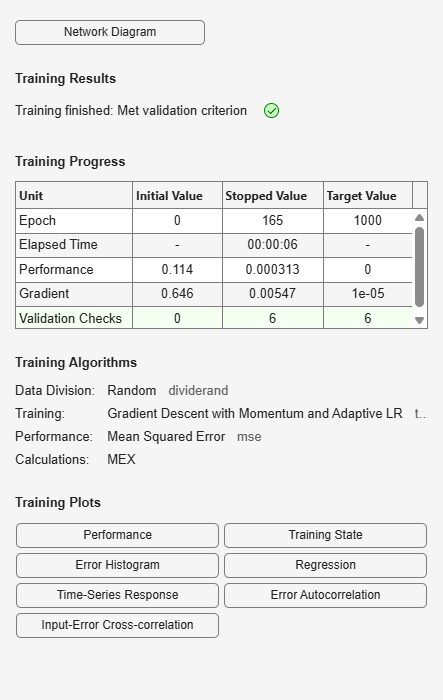

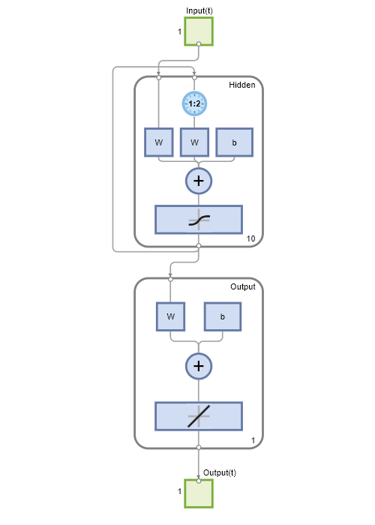

Elman networks are feedforward networks (feedforwardnet) with the addition of layer recurrent connections with tap delays.

With the availability of full dynamic derivative calculations (fpderiv and bttderiv), the Elman network is no longer recommended except for historical and research purposes. For more accurate learning, try time delay (timedelaynet), layer recurrent (layrecnet), NARX (narxnet), or NAR (narnet) neural networks.

Elman networks with one or more hidden layers can learn any dynamic input-output relationship arbitrarily well, given enough neurons in the hidden layers. However, Elman networks use simplified derivative calculations (staticderiv, which ignores delayed connections), and this makes learning less reliable.

elmannet(layerdelays,hiddenSizes,trainFcn) takes these arguments:

| Argument | Description |
| --- | --- |
| layerdelays | Row vector of increasing 0 or positive delays (default = 1:2) |
| hiddenSizes | Row vector of one or more hidden layer sizes (default = 10) |
| trainFcn | Training function (default = |

and returns an Elman neural network.
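The argument list above can be illustrated with a few constructor calls. This is a minimal sketch, assuming Deep Learning Toolbox is installed; the variable names and the choice of traingdx as the training function are illustrative assumptions, not values taken from this page.

```matlab
% Sketch only: requires Deep Learning Toolbox.

% All defaults: layer delays 1:2 and one hidden layer of 10 neurons.
netDefault = elmannet;

% Layer delays 1 through 3 and two hidden layers (10 and 5 neurons).
netTwoHidden = elmannet(1:3,[10 5]);

% Explicit training function. traingdx is an arbitrary choice made
% for illustration; any valid training function name can be passed.
netCustom = elmannet(1:2,10,'traingdx');

% Inspect the properties that the arguments control.
disp(netTwoHidden.numLayers)
disp(netCustom.trainFcn)
```

Omitted trailing arguments fall back to their defaults, so the first call takes no arguments at all.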

Examples
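The following sketch shows one way an Elman network might be trained on a simple time-series problem. It assumes Deep Learning Toolbox and its bundled simpleseries_dataset are available; the delay and hidden layer settings are simply the defaults stated above.

```matlab
% Sketch only: requires Deep Learning Toolbox.

% Load a sample time-series problem (cell arrays of inputs and targets).
[X,T] = simpleseries_dataset;

% Create an Elman network with layer delays 1:2 and 10 hidden neurons.
net = elmannet(1:2,10);

% Shift the series to fill the initial delay states:
% Xs/Ts are the shifted inputs and targets, Xi/Ai the initial
% input and layer delay states.
[Xs,Xi,Ai,Ts] = preparets(net,X,T);

% Train, then simulate the trained network on the same data.
net = train(net,Xs,Ts,Xi,Ai);
view(net)
Y = net(Xs,Xi,Ai);

% Evaluate performance using the network's performance function.
perf = perform(net,Ts,Y)
```

preparets is used here so that the initial delay states do not have to be built by hand. Because training starts from random initial weights, the resulting perf value differs from run to run.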

Version History

Introduced in R2010b

See Also

preparets | removedelay | timedelaynet | layrecnet | narnet | narxnet