cudaconv - Performs 2D convolution using an NVIDIA graphics chipset.

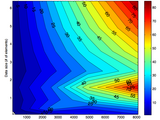

For large datasets (~1 million elements), and especially for large kernels (cudaconv's run time changes little with kernel size), cudaconv can outperform conv2 by as much as 5000%.

I did not create this algorithm; it is adapted from an example included in the CUDA SDK and wrapped in MATLAB-compatible C code.

With very large data matrices, it can *completely* crash your computer (or possibly just the graphics driver), so beware. In testing, I found an upper limit on convolution size of roughly 2^20 elements, set either by the size the CUDA FFT function can accept or by the maximum size of a 2D texture. Above that limit, the code breaks the convolution into smaller pieces. If you are feeling adventurous, feel free to raise the limit, but be aware that at those sizes cudaconv is already roughly 50-100x faster than conv2.
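The description above does not say how the convolution is split into smaller pieces, so the following is only a minimal sketch of one common approach (overlap-add over row bands), not the code shipped with cudaconv. The function names `conv2_full` and `conv2_tiled` and the parameter `max_elems` are hypothetical, and a plain CPU loop stands in for each GPU call. It shows that convolving the bands separately and summing the overlapping results gives the same answer as one full convolution.

```python
def conv2_full(a, k):
    """Direct 'full' 2D convolution of nested lists a (H x W) and k (kh x kw)."""
    H, W = len(a), len(a[0])
    kh, kw = len(k), len(k[0])
    out = [[0] * (W + kw - 1) for _ in range(H + kh - 1)]
    for i in range(H):
        for j in range(W):
            for u in range(kh):
                for v in range(kw):
                    out[i + u][j + v] += a[i][j] * k[u][v]
    return out


def conv2_tiled(a, k, max_elems):
    """Overlap-add: convolve row bands of at most ~max_elems elements, then sum.

    max_elems is a hypothetical stand-in for the ~2^20 element limit.
    """
    H, W = len(a), len(a[0])
    kh, kw = len(k), len(k[0])
    rows_per_tile = max(1, max_elems // W)  # at least one row per band
    out = [[0] * (W + kw - 1) for _ in range(H + kh - 1)]
    for r0 in range(0, H, rows_per_tile):
        tile = a[r0:r0 + rows_per_tile]
        part = conv2_full(tile, k)  # stand-in for one GPU convolution call
        # Each partial result extends kh - 1 rows past its band; those rows
        # overlap the next band's output and are simply added together.
        for i, row in enumerate(part):
            for j, val in enumerate(row):
                out[r0 + i][j] += val
    return out


# Small integer test data so the comparison is exact.
a = [[(3 * i + 5 * j) % 7 for j in range(6)] for i in range(8)]
k = [[1, 2, 1], [0, 1, 0], [-1, 0, 1]]
full = conv2_full(a, k)
tiled = conv2_tiled(a, k, max_elems=12)
print(tiled == full)
```

Because convolution is linear, the band size only affects how much work each call does, not the result, which is why raising or lowering the limit should not change the output.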

Cite As

Alexander Huth (2026). Fast 2D GPU-based convolution (https://www.mathworks.com/matlabcentral/fileexchange/20220-fast-2d-gpu-based-convolution), MATLAB Central File Exchange. Retrieved .

General Information

- Version 1.0.0.0 (49 KB)

MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux

| Version | Release Notes |
|---|---|
| 1.0.0.0 | Updated help, included testing script and image of benchmarks. |