Particle Swarm Optimization Research Toolbox

The Particle Swarm Optimization Research Toolbox was written to assist with thesis research combating the premature convergence problem of particle swarm optimization (PSO). The control panel offers ample flexibility to accommodate various research directions; after specifying your intentions, the toolbox will automate several tasks to free up time for conceptual planning.

EXAMPLE FEATURES

+ Choose from Gbest PSO, Lbest PSO, RegPSO, GCPSO, MPSO, OPSO, Cauchy mutation of global best, and hybrid combinations.

+ The benchmark suite consists of Ackley, Griewangk, Quadric, noisy Quartic, Rastrigin, Rosenbrock, Schaffer's f6, Schwefel, Sphere, and Weighted Sphere.

+ Each trial uses its own sequence of pseudo-random numbers to ensure both replicability and uniqueness of data.

+ Choose a maximum number of function evaluations or iterations. Terminate early if the threshold for success is reached or premature convergence is detected.

+ Choose a static or linearly varying inertia weight.

+ Activate velocity clamping and specify the percentage.

+ Choose symmetric or asymmetric initialization.

+ A suite of pre-made graph types facilitates understanding of swarm behavior.

-- Automated Graph Features --

> Specify where on the screen to generate figures.

> Automatically generate titles, legends, and labels.

> Automatically save figures to any supported format.

-- Graph Types --

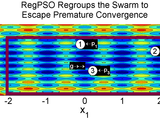

> Phase plots trace each particle's path across a contour map of the search space with iteration numbers overlaid.*

> Swarm trajectory snapshots capture the swarm state at intervals, with optional tags marking global and personal bests.*

> The global best's function value vs iteration shows how solution quality progresses and stagnates over the course of the search.

> The global best vs iteration shows how each decision variable progresses and stagnates with time.

> Each particle's function value vs iteration shows how its own solution quality oscillates with time.

> Each particle's position vector vs iteration shows how its decision variables oscillate toward the local or global minimizer.

> Each particle's velocity vector vs iteration shows how velocity components diminish with time.

> Each particle's personal best vs iteration shows how regularly and significantly each personal best updates.

* Note: Graph types marked with an asterisk are for 2D optimization problems by nature of the contour map.

+ Confine particles to the initialization space when physical limitations or a priori knowledge mandate doing so. If the initialization space is merely an educated guess for an unfamiliar application problem, particles can instead be allowed to roam outside it.

+ Activate or de-activate the following histories to control execution speed and the size of automatically saved workspaces.

ITERATIVE HISTORIES

> Global bests

> Function values of global bests

> Personal bests

> Function values of personal bests

> Positions

> Function values of positions

> Velocities

> Cognitive velocity components

> Social velocity components

Note: Disabling lengthy histories is recommended except when generating data to be published or verifying proper toolbox functioning, in which case histories should be analyzed.
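The trade-off behind these switches can be sketched in a few lines of Python. This is only an illustration of the idea, not the toolbox's MATLAB implementation: the class name `Histories` and the switch names are hypothetical. A history that is switched off costs neither time nor memory, because nothing is copied or stored for it.

```python
import copy


class Histories:
    """Optional per-iteration logs, each controlled by an on/off switch.

    Hypothetical helper for illustration; the names are not the toolbox's.
    """

    def __init__(self, **switches):
        self.switches = switches
        # Allocate storage only for the histories that are switched on.
        self.data = {name: [] for name, on in switches.items() if on}

    def record(self, name, value):
        # Disabled histories are skipped entirely: no copy, no storage.
        if self.switches.get(name, False):
            # Copy so that later in-place updates do not alter the log.
            self.data[name].append(copy.deepcopy(value))


if __name__ == "__main__":
    log = Histories(positions=False, global_best_values=True)
    log.record("positions", [[0.1, 0.2], [0.3, 0.4]])  # ignored
    log.record("global_best_values", 0.05)             # stored
    print(sorted(log.data))
```

Keeping only the short histories (such as the function values of the global bests) is usually enough for routine runs; the per-particle histories grow with swarm size, dimensionality, and iteration count.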

+ Automatic input validation assertively corrects conflicting settings and displays changes made.

+ Automatically save the workspace after each trial and set of trials.

+ Automatically generate statistics.

+ Free yourself from the computer with a progress meter estimating completion time. A "choo choo" sound conveniently signals completion.

+ An Introductory Walk-through in the documentation teaches the basic functionalities of the toolbox, including how to analyze workspace variables.
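Several of the features above (one pseudo-random sequence per trial, a linearly varying inertia weight, velocity clamping, an iteration limit, and early termination at a success threshold) can be seen together in a minimal Gbest PSO sketch. It is written in Python purely for illustration and is not the toolbox's code. The function names, the parameter values, and the choice to express the velocity clamp as a fraction of the search range per dimension are all assumptions. The global best is also updated as soon as a particle improves on it, which is only one of several common variants.

```python
import random


def sphere(x):
    """Sphere benchmark: sum of squares, with minimum 0 at the origin."""
    return sum(xi * xi for xi in x)


def gbest_pso(f, dim, lo, hi, seed, swarm_size=20, max_iters=200,
              w_start=0.9, w_end=0.4, c1=1.49618, c2=1.49618,
              vmax_fraction=0.5, success_threshold=1e-6):
    """Minimal Gbest PSO; all defaults are illustrative assumptions."""
    rng = random.Random(seed)          # private generator: one sequence per trial
    vmax = vmax_fraction * (hi - lo)   # clamp as a fraction of the range (assumed)
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(swarm_size)]
    vel = [[rng.uniform(-vmax, vmax) for _ in range(dim)] for _ in range(swarm_size)]
    pbest = [p[:] for p in pos]
    pbest_f = [f(p) for p in pos]
    g = min(range(swarm_size), key=pbest_f.__getitem__)
    gbest, gbest_f = pbest[g][:], pbest_f[g]
    fg_history = [gbest_f]             # function value of the global best

    for k in range(max_iters):
        if gbest_f <= success_threshold:
            break                      # early termination: success reached
        # Inertia weight varies linearly from w_start to w_end.
        w = w_start + (w_end - w_start) * k / max(max_iters - 1, 1)
        for i in range(swarm_size):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v = (w * vel[i][d]
                     + c1 * r1 * (pbest[i][d] - pos[i][d])   # cognitive component
                     + c2 * r2 * (gbest[d] - pos[i][d]))     # social component
                vel[i][d] = max(-vmax, min(vmax, v))         # velocity clamping
                pos[i][d] += vel[i][d]
            fx = f(pos[i])
            if fx < pbest_f[i]:
                pbest[i], pbest_f[i] = pos[i][:], fx
                if fx < gbest_f:
                    gbest, gbest_f = pos[i][:], fx
        fg_history.append(gbest_f)
    return gbest, gbest_f, fg_history


if __name__ == "__main__":
    # Each trial gets its own seed: runs are repeatable, yet trials differ.
    for trial_seed in range(3):
        _, best_f, history = gbest_pso(sphere, 2, -5.0, 5.0, seed=trial_seed)
        print(trial_seed, len(history) - 1, best_f)
```

Rerunning a trial with the same seed reproduces its history exactly, while different seeds give distinct trials. That is the property the per-trial random sequences are meant to provide.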

ADD-IN

+ ANN Training Add-in by Tricia Rambharose

http://www.mathworks.com/matlabcentral/fileexchange/29565-neural-network-add-in-for-psort

HELPFUL LINKS

+ A history of toolbox updates is available at www.georgeevers.org/pso_research_toolbox.htm, where you can subscribe to be notified of future updates.

+ An introduction to the particle swarm algorithm is available at www.georgeevers.org/particle_swarm_optimization.htm.

+ A well-maintained list of PSO toolboxes is available at www.particleswarm.info/Programs.html.

+ My research on (i) regrouping the swarm to liberate particles from premature convergence so that progress can continue and (ii) empirically searching for high-quality PSO parameters is available at www.georgeevers.org/publications.htm.

Cite As

George Evers (2024). Particle Swarm Optimization Research Toolbox (https://www.mathworks.com/matlabcentral/fileexchange/28291-particle-swarm-optimization-research-toolbox), MATLAB Central File Exchange. Retrieved .

Platform Compatibility

Windows macOS Linux

PSORT20110515i/

| Version | Published | Release Notes |
|---|---|---|
| 1.22.0.0 | | Comments added to control panel by user request, definitions added to Appendix A by user request, documentation linked to online to ensure that users have the most recent information |
| 1.21.0.0 | | Fixed bug causing axes to be overlaid for some swarm trajectory snapshots, improved readability of code related to 3D graphs, improved clarity in certain sections of the documentation |
| 1.20.0.0 | | Minor improvements to documentation |
| 1.19.0.0 | | Compatibility has been added to accommodate calls from the neural net toolbox through Tricia Rambharose's wrapper. |
| 1.15.0.0 | | "Load_trial_data_for_stats.m" no longer attempts to access "fghist" when it does not exist for a previously problematic input combination. |
| 1.13.0.0 | | Corrected errors resulting from unorthodox input combinations, which caused graph generation to terminate unexpectedly: details are available at http://www.georgeevers.org/toolbox_updates.rtf |
| 1.12.0.0 | | Removed toolbox use agreement from the free version of the toolbox posted here, and merged or converted to appendices the shortest sections of the README |
| 1.11.0.0 | | Fixed reported bugs related to switch "OnOff_graphs"; improved README chapter "Conducting Your Own Research," improved Appendix A's definitions of variables in response to questions, improved Appendix A's definitions of benchmarks (work in progress) |
| 1.10.0.0 | | Velocity clamping is now set identically for symmetric and asymmetric initialization, graphing switches are only created when switch OnOff_graphs is active, and the walk through has been iterated. |
| 1.6.0.0 | | Modified "User Input Validation" phase to reflect MPSO termination criteria, completed section IX of documentation |