In this example, you transfer the learning in the VGGish regression model to an audio classification task.

Download and unzip the environmental sound classification data set. This data set consists of recordings labeled as one of 10 different audio sound classes (ESC-10).

Create an audioDatastore object to manage the data. Call countEachLabel to display the distribution of sound classes and the number of unique labels.
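A minimal sketch of this step is shown here. It assumes the archive was unzipped so that a folder named ESC-10 under tempdir contains one subfolder per sound class; the variable name datasetFolder and that location are illustrative assumptions, not taken from the original example.

```matlab
% Assumption: tempdir/ESC-10 holds one subfolder per class (hypothetical location).
datasetFolder = fullfile(tempdir,"ESC-10");

% Use the subfolder names as labels for the audio files.
ads = audioDatastore(datasetFolder, ...
    IncludeSubfolders=true, ...
    LabelSource="foldernames");

% Display the number of files per label.
labelTable = countEachLabel(ads)
```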

labelTable = 10×2 table

| Label | Count |
| --- | --- |
| chainsaw | 40 |
| clock_tick | 40 |
| crackling_fire | 40 |
| crying_baby | 40 |
| dog | 40 |
| helicopter | 40 |
| rain | 40 |
| rooster | 38 |
| sea_waves | 40 |
| sneezing | 40 |

Determine the total number of classes and their names.
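One way to do this is to read the class names and count directly from the label table. The sketch below rebuilds the table from the counts listed in this example so that it runs on its own; in the actual workflow, labelTable would come from countEachLabel. The variable names classes and numClasses are illustrative.

```matlab
% Rebuild the label table from the counts reported in this example.
labelTable = table( ...
    categorical(["chainsaw";"clock_tick";"crackling_fire";"crying_baby";"dog"; ...
                 "helicopter";"rain";"rooster";"sea_waves";"sneezing"]), ...
    [40;40;40;40;40;40;40;38;40;40], ...
    VariableNames=["Label","Count"]);

classes = labelTable.Label;        % categorical column of class names
numClasses = height(labelTable);   % one row per class
```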

Call splitEachLabel to split the data set into train and validation sets. Inspect the distribution of labels in the training and validation sets.
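The split might look like the following sketch. The 0.8 proportion is an assumption inferred from the 32 and 8 file counts reported for most classes, and the dataset location is the same hypothetical folder as before.

```matlab
% Assumption: tempdir/ESC-10 holds one subfolder per class (hypothetical location).
datasetFolder = fullfile(tempdir,"ESC-10");
ads = audioDatastore(datasetFolder,IncludeSubfolders=true,LabelSource="foldernames");

% Put 80% of the files of each label in the training set and the rest in validation.
[adsTrain,adsValidation] = splitEachLabel(ads,0.8);

% Inspect the label distribution of each subset.
countEachLabel(adsTrain)
countEachLabel(adsValidation)
```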

ans = 10×2 table

| Label | Count |
| --- | --- |
| chainsaw | 32 |
| clock_tick | 32 |
| crackling_fire | 32 |
| crying_baby | 32 |
| dog | 32 |
| helicopter | 32 |
| rain | 32 |
| rooster | 30 |
| sea_waves | 32 |
| sneezing | 32 |

ans = 10×2 table

| Label | Count |
| --- | --- |
| chainsaw | 8 |
| clock_tick | 8 |
| crackling_fire | 8 |
| crying_baby | 8 |
| dog | 8 |
| helicopter | 8 |
| rain | 8 |
| rooster | 8 |
| sea_waves | 8 |
| sneezing | 8 |

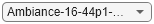

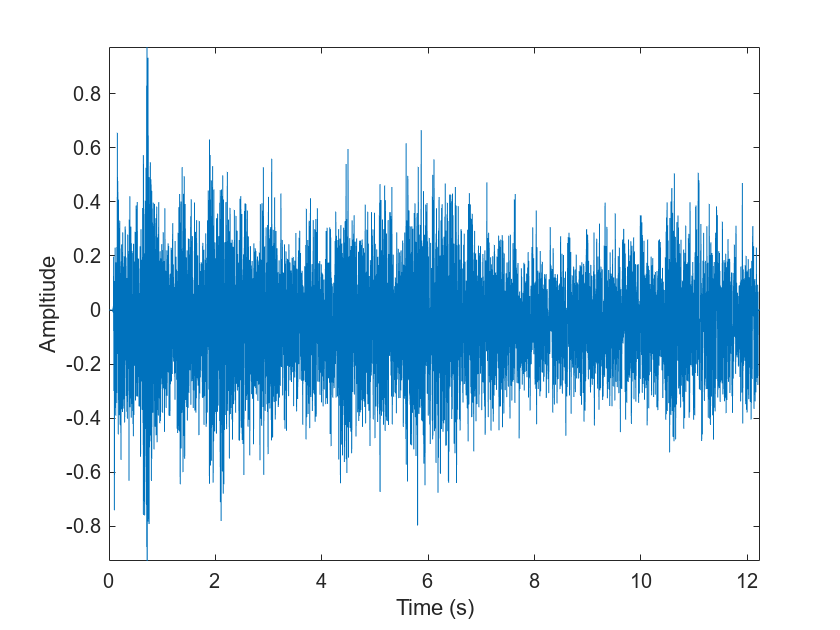

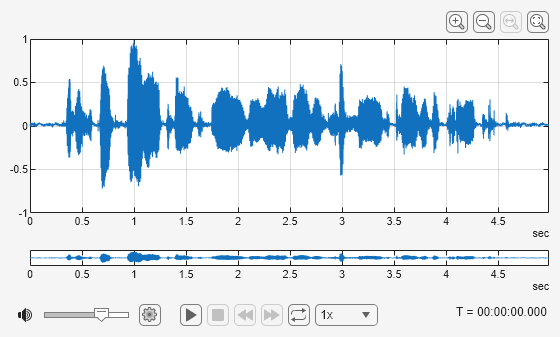

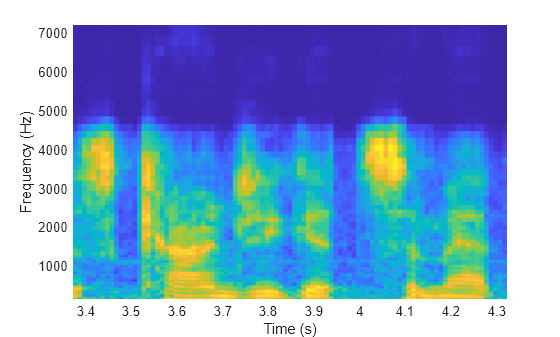

The VGGish network expects audio to be preprocessed into log mel spectrograms. Use vggishPreprocess to extract the spectrograms from the train set. There are multiple spectrograms for each audio signal. Replicate the labels so that they are in one-to-one correspondence with the spectrograms.
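The following sketch illustrates the preprocessing and label replication for a single signal. To stay self-contained it uses five seconds of synthetic noise in place of a recording read from the datastore; the sample rate, the label, and the 75% overlap are illustrative assumptions.

```matlab
fs = 44100;                       % assumed sample rate
audioIn = 0.1*randn(5*fs,1);      % synthetic stand-in for one recording
label = categorical("rain");      % stand-in for the file's label

% Each page along the fourth dimension is one 96-by-64 log mel spectrogram.
features = vggishPreprocess(audioIn,fs,OverlapPercentage=75);
numSpectrograms = size(features,4);

% Repeat the file's label once per spectrogram.
labels = repelem(label,numSpectrograms,1);
```

For a full data set, the same two steps would run inside a loop over the datastore, concatenating features along the fourth dimension. Keeping numSpectrograms for each file is what later allows segment-level predictions to be grouped back into per-file decisions.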

Extract spectrograms from the validation set and replicate the labels.

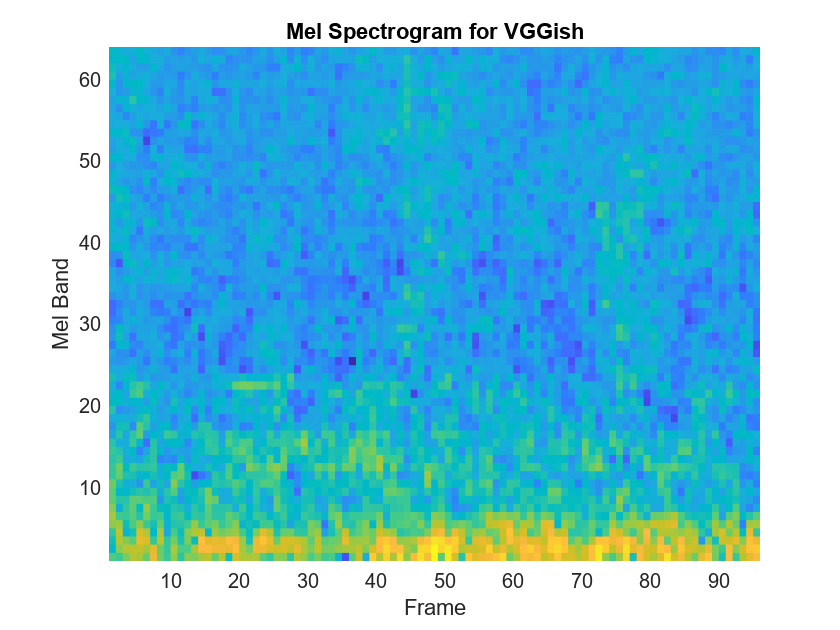

Load the VGGish model using audioPretrainedNetwork.

Use addLayers (Deep Learning Toolbox) to add a fullyConnectedLayer (Deep Learning Toolbox) and a softmaxLayer (Deep Learning Toolbox) to the network. Set the WeightLearnRateFactor and BiasLearnRateFactor of the new fully connected layer to 10 so that learning is faster in the new layer than in the transferred layers.

Use connectLayers (Deep Learning Toolbox) to append the fully connected and softmax layers to the network.
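Taken together, loading the model and attaching the new layers could be sketched as follows. The layer names FCFinal and softmax are hypothetical, and the code assumes the pretrained network has a single output whose name is available through OutputNames, which avoids hard-coding the name of the last transferred layer.

```matlab
numClasses = 10;                          % ESC-10 has 10 sound classes

net = audioPretrainedNetwork("vggish");   % requires the pretrained VGGish model
lastLayerName = net.OutputNames{1};       % assumes a single network output

% New classification head; the learn rate factors make it train faster
% than the transferred layers.
newLayers = [
    fullyConnectedLayer(numClasses,Name="FCFinal", ...
        WeightLearnRateFactor=10,BiasLearnRateFactor=10)
    softmaxLayer(Name="softmax")];

net = addLayers(net,newLayers);
net = connectLayers(net,lastLayerName,"FCFinal");
```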

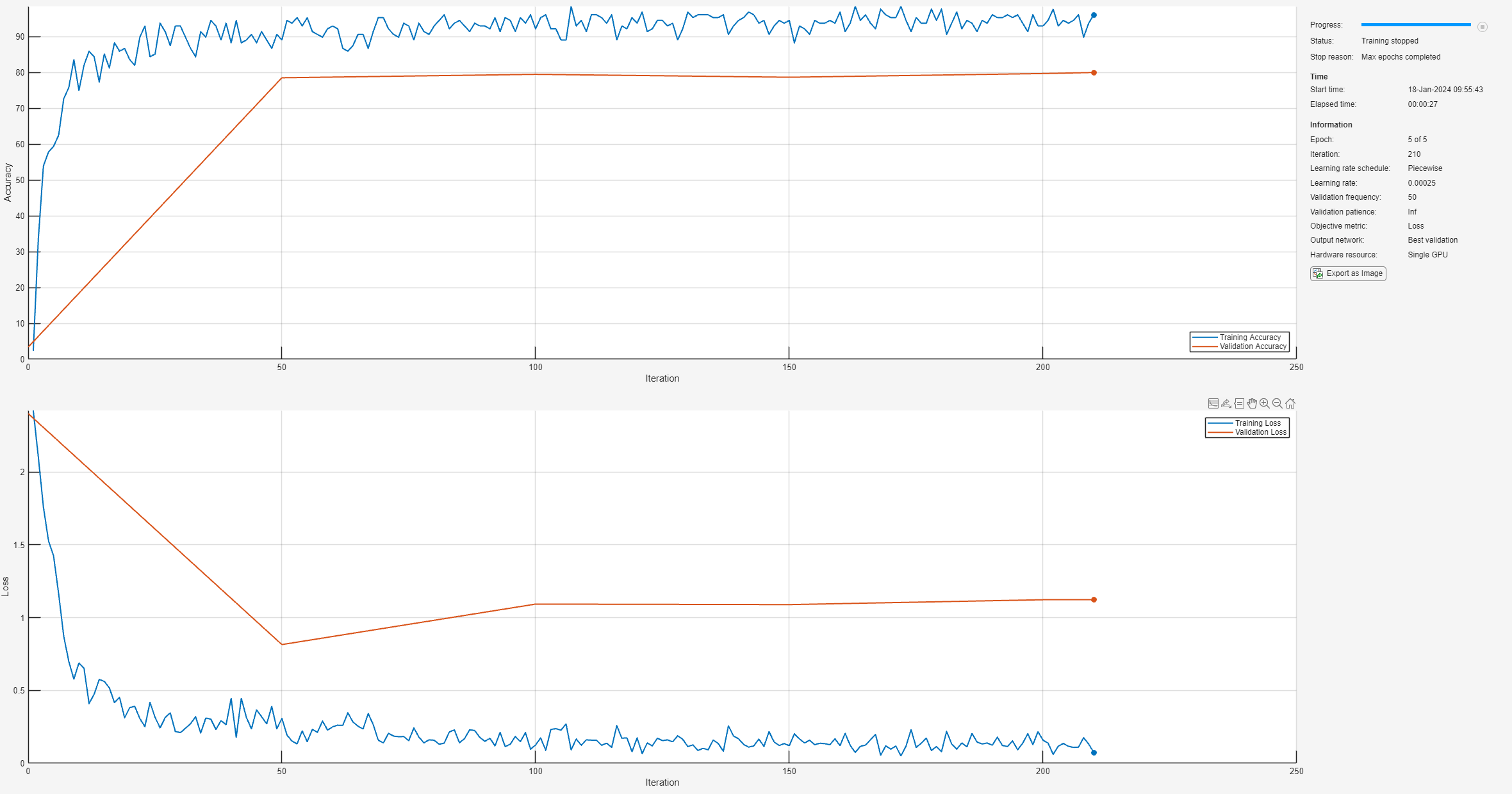

To define training options, use trainingOptions (Deep Learning Toolbox).
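As a rough illustration, the options might be set as in the sketch below. The solver and every numeric value here are assumptions chosen for the sketch, not settings confirmed by this example.

```matlab
% Illustrative settings only; tune them for your data and hardware.
options = trainingOptions("adam", ...
    MaxEpochs=5, ...
    MiniBatchSize=128, ...
    Shuffle="every-epoch", ...
    Metrics="accuracy", ...
    Verbose=false, ...
    Plots="training-progress");
```

Validation spectrograms and their labels can be supplied through the ValidationData option as a cell array of the form {features,labels}.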

To train the network, use trainnet.
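The call pattern is sketched below on stand-in data so that it runs without the pretrained model: random 96-by-64 inputs replace the spectrograms, and a small hypothetical network replaces the modified VGGish network. In the actual workflow, the training spectrograms, the replicated labels, and the modified network take the place of X, Y, and net.

```matlab
numClasses = 3;
numObs = 24;
X = rand(96,64,1,numObs,"single");                  % stand-in spectrograms
Y = categorical(randi(numClasses,numObs,1), ...
    1:numClasses,["a","b","c"]);                    % stand-in labels

% Small stand-in network with the same input size as VGGish.
layers = [
    imageInputLayer([96 64 1],Normalization="none")
    convolution2dLayer(3,4,Padding="same")
    reluLayer
    globalAveragePooling2dLayer
    fullyConnectedLayer(numClasses)
    softmaxLayer];
net = dlnetwork(layers);

options = trainingOptions("adam",MaxEpochs=1,MiniBatchSize=8,Verbose=false);

% Cross-entropy loss suits a softmax classification output.
trainedNet = trainnet(X,Y,net,"crossentropy",options);

% Convert the predicted scores to class labels.
scores = minibatchpredict(trainedNet,X);
predicted = scores2label(scores,categories(Y));
```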

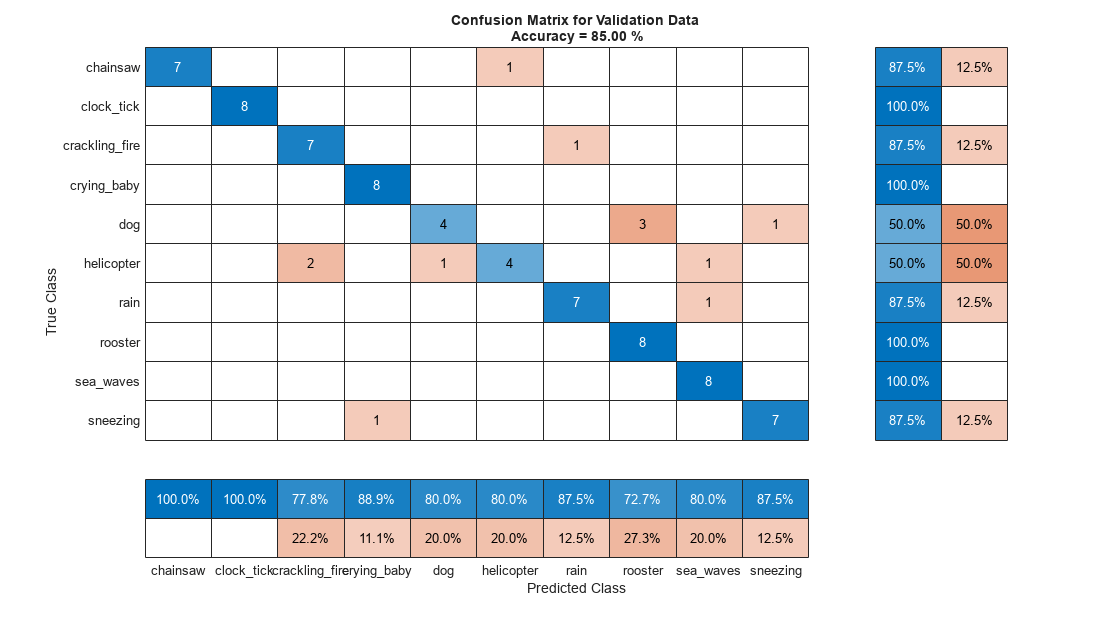

Each audio file was split into several segments to feed into the VGGish network. Combine the predictions for each file in the validation set using a majority-rule decision.
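A majority-rule decision can be sketched with mode applied to each file's block of segment predictions. The toy predictions and the segmentsPerFile counts below are made-up values for illustration; in practice they would come from the network output and from the preprocessing step.

```matlab
% Toy segment-level predictions for three files (illustrative values only).
segmentPredictions = categorical(["dog";"dog";"rain"; ...
                                  "rain";"rain"; ...
                                  "rooster";"dog";"rooster";"rooster"]);
segmentsPerFile = [3;2;4];          % number of spectrograms from each file

numFiles = numel(segmentsPerFile);
filePredictions = repmat(segmentPredictions(1),numFiles,1);   % preallocate

idx = 1;
for ii = 1:numFiles
    range = idx:idx+segmentsPerFile(ii)-1;                    % segments of file ii
    filePredictions(ii) = mode(segmentPredictions(range));    % most frequent label
    idx = idx + segmentsPerFile(ii);
end
```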

Use confusionchart (Deep Learning Toolbox) to evaluate the performance of the network on the validation set.
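As an illustration of the call, the sketch below plots a confusion chart for a handful of made-up true and predicted labels. In the actual workflow, the true labels would be the validation datastore labels and the predictions would be the per-file majority decisions. The title text and summary options are illustrative choices.

```matlab
% Toy labels for illustration only.
trueLabels = categorical(["dog";"dog";"rain";"rain";"rooster";"rooster"]);
predictedLabels = categorical(["dog";"rain";"rain";"rain";"rooster";"rooster"]);

figure
cm = confusionchart(trueLabels,predictedLabels, ...
    Title="Validation confusion matrix (toy data)", ...
    ColumnSummary="column-normalized", ...
    RowSummary="row-normalized");

% Overall accuracy as the fraction of matching labels.
accuracy = mean(predictedLabels == trueLabels);
```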