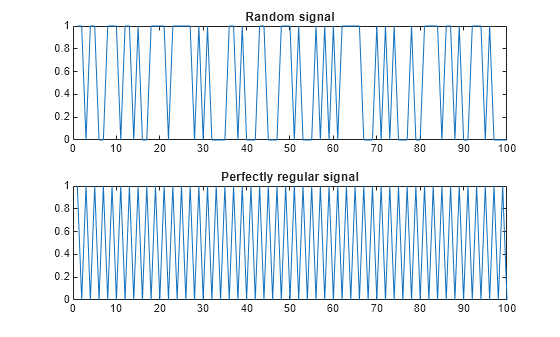

approximateEntropy

Measure of regularity of nonlinear time series

Syntax

Description

approxEnt = approximateEntropy(___,Name,Value) estimates the approximate entropy with additional options specified by one or more Name,Value pair arguments.

Examples

Input Arguments

Name-Value Arguments

Output Arguments

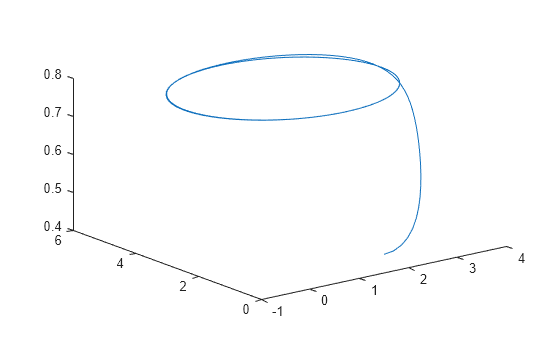

Algorithms

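Approximate entropy is computed as follows.

The approximateEntropy function first generates a delayed reconstruction $Y$ of the $N$ data points $x_1, \dots, x_N$ with embedding dimension $m$ and lag $\tau$, whose $i$-th vector is $Y_i = [x_i, x_{i+\tau}, \dots, x_{i+(m-1)\tau}]$. The software then calculates the number of within-range points at point $i$, given by

$$N_i = \sum_{k} \mathbb{1}\left(\lVert Y_i - Y_k \rVert_\infty < R\right),$$

where $\mathbb{1}$ is the indicator function, $R$ is the radius of similarity, and the sum runs over all reconstructed vectors $Y_k$.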
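The reconstruction and counting steps can be sketched in Python for illustration. This is not the MathWorks implementation, and several details are assumptions:

- `delay_embed` and `within_range_count` are hypothetical helper names.
- Indices are 0-based, unlike the 1-based equations.
- Distance is the infinity (Chebyshev) norm.
- $Y_i$ counts as its own match.

```python
from typing import List, Sequence


def delay_embed(x: Sequence[float], m: int, tau: int) -> List[List[float]]:
    """Delay reconstruction: row i is [x[i], x[i+tau], ..., x[i+(m-1)*tau]]."""
    count = len(x) - (m - 1) * tau  # number of reconstructed vectors
    if count <= 0:
        raise ValueError("signal too short for this m and tau")
    return [[x[i + j * tau] for j in range(m)] for i in range(count)]


def within_range_count(Y: List[List[float]], i: int, R: float) -> int:
    """Count the vectors Y[k] whose infinity-norm distance to Y[i] is below R.

    Assumption: Y[i] itself is included (distance 0), so the count is
    at least 1 whenever R > 0.
    """
    return sum(
        1 for yk in Y if max(abs(a - b) for a, b in zip(Y[i], yk)) < R
    )


# Usage example on a short, hand-checkable signal.
Y = delay_embed([1, 2, 1, 2, 1, 5], m=2, tau=1)
print(Y)                              # [[1, 2], [2, 1], [1, 2], [2, 1], [1, 5]]
print(within_range_count(Y, 0, 0.5))  # 2: Y[0] and Y[2]
```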

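The approximate entropy is then calculated as

$$\mathrm{ApEn} = \Phi_m - \Phi_{m+1},$$

where

$$\Phi_m = \frac{1}{M} \sum_{i=1}^{M} \log\left(\frac{N_i}{M}\right),$$

$M = N - (m-1)\tau$ is the number of reconstructed vectors, and $N_i$ and $M$ are evaluated at embedding dimension $m$ (at $m+1$ for $\Phi_{m+1}$).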
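The full computation, $\mathrm{ApEn} = \Phi_m - \Phi_{m+1}$, can be sketched end to end in Python. This is a minimal illustration, assuming Pincus-style normalization of each count by the number of reconstructed vectors. It is not the toolbox code, whose defaults for `m`, `tau`, and `R` may differ. The function name `approximate_entropy` is hypothetical.

```python
import math
from typing import List, Sequence


def approximate_entropy(x: Sequence[float], m: int, tau: int, R: float) -> float:
    """Illustrative ApEn = Phi_m - Phi_{m+1} (Pincus-style normalization).

    Assumptions: Chebyshev (infinity-norm) distance, strict "< R" test,
    and each vector counted as its own match so every N_i >= 1.
    """
    if R <= 0:
        raise ValueError("R must be positive so that log(N_i / M) is defined")

    def phi(dim: int) -> float:
        M = len(x) - (dim - 1) * tau  # number of reconstructed vectors
        if M <= 0:
            raise ValueError("signal too short for this dimension and lag")
        # Delay vectors Y_i = [x_i, x_{i+tau}, ..., x_{i+(dim-1)tau}]
        Y: List[List[float]] = [[x[i + j * tau] for j in range(dim)] for i in range(M)]
        total = 0.0
        for yi in Y:
            # N_i: number of Y_k within Chebyshev distance R of Y_i (Y_i included)
            n_i = sum(1 for yk in Y if max(abs(a - b) for a, b in zip(yi, yk)) < R)
            total += math.log(n_i / M)
        return total / M

    return phi(m) - phi(m + 1)
```

For a perfectly repeating signal the two $\Phi$ terms nearly cancel, so ApEn is close to 0. For example, `[0.0, 1.0] * 20` with `m=2`, `tau=1`, `R=0.5` gives about 3e-4. Less regular signals give larger values. The quadratic pairwise loop keeps the sketch readable; long signals would call for a faster neighbour search.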


Extended Capabilities

Version History

Introduced in R2018a