integralKernel

Define filter for use with integral images

Description

An integralKernel object describes box filters for use with integral images.

Creation

Description

intKernel = integralKernel(bbox,weights) creates an upright box filter from bounding boxes, bbox, and their corresponding weights, weights. The bounding boxes set the BoundingBoxes property and the weights set the Weights property.

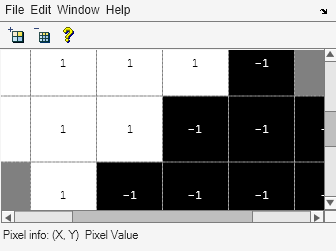

For example, a conventional filter with the coefficients:

and two regions:

| region 1: x=1, y=1, width = 4, height = 2 |

| region 2: x=1, y=3, width = 4, height = 2 |

boxH = integralKernel([1 1 4 2; 1 3 4 2],[1, -1])
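To make the link between the two regions and the conventional filter concrete, the short sketch below builds the same filter and inspects it. It assumes the object exposes a read-only Coefficients property alongside BoundingBoxes and Weights, as in recent Computer Vision Toolbox releases.

```matlab
% Two stacked 4-by-2 regions: the top one weighted +1, the bottom one -1.
boxH = integralKernel([1 1 4 2; 1 3 4 2], [1, -1]);

% Properties set by the constructor arguments.
bboxes  = boxH.BoundingBoxes;   % [1 1 4 2; 1 3 4 2]
weights = boxH.Weights;         % [1 -1]

% Equivalent conventional filter (Coefficients is assumed to be available):
%    1  1  1  1
%    1  1  1  1
%   -1 -1 -1 -1
%   -1 -1 -1 -1
coeffs = boxH.Coefficients;
```

Each row of BoundingBoxes is one [x y width height] region, so a filter with many coefficients is described by only a few rectangles and weights.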

intKernel = integralKernel(bbox,weights,orientation) creates a box filter with an upright or rotated orientation. The specified orientation sets the Orientation property.
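As a minimal sketch of the three-argument syntax, the example below creates a rotated filter. The bounding box values are illustrative, and the orientation is assumed to be given as 'upright' (the default) or 'rotated'.

```matlab
% Two rotated regions with opposite weights (illustrative values).
rotH = integralKernel([3 1 3 3; 6 4 3 3], [1 -1], 'rotated');

% The third argument is stored in the Orientation property.
orientation = rotH.Orientation;
```

A rotated filter is expected to be paired with a rotated integral image, for example one computed with integralImage(I,'rotated').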

Properties

Usage

Computing an Integral Image and Using it for Filtering with Box Filters

The integralImage function, the integralKernel object, and the integralFilter function together form the workflow for box filtering based on integral images.

Use the integralImage function to compute the integral images.

Use the integralFilter function for filtering.

Use the integralKernel object to define box filters.

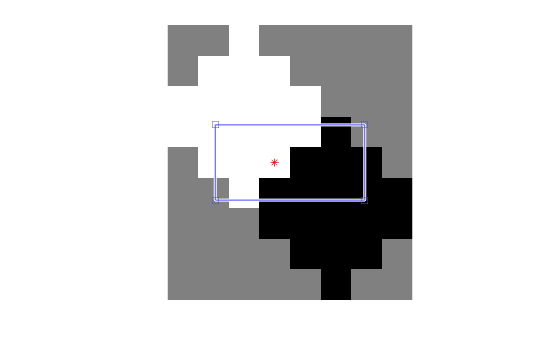
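The three steps of the workflow can be sketched as follows. The random test image and the 7-by-7 averaging filter are assumptions chosen for illustration; any grayscale image and box filter could be substituted.

```matlab
% Synthetic 20-by-20 test image (an assumption; any grayscale image works).
I = rand(20);

% Step 1: compute the integral image.
intImage = integralImage(I);

% Step 2: define a 7-by-7 averaging box filter as one weighted region.
avgH = integralKernel([1 1 7 7], 1/49);

% Step 3: apply the filter. Only positions where the box fits entirely
% inside the image are returned, so J is smaller than I.
J = integralFilter(intImage, avgH);
```

Because each box sum is read from the integral image with a handful of lookups, the cost of step 3 does not grow with the size of the box.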

The integralKernel object allows you to transpose the filter. You can use this to aim a directional filter. For example, you can turn a horizontal edge detector into a vertical edge detector.
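A brief sketch of transposing a filter, reusing the boxH definition from the Creation section. It assumes the transpose operator (.') is supported on integralKernel objects and that the Coefficients property reflects the transposed layout.

```matlab
% Horizontal edge detector: top half weighted +1, bottom half -1.
horiH = integralKernel([1 1 4 2; 1 3 4 2], [1, -1]);

% Transposing swaps rows and columns, giving a vertical edge detector
% whose left half is weighted +1 and right half -1.
vertH = horiH.';

% Compare the equivalent conventional filters (Coefficients is assumed).
horiCoeffs = horiH.Coefficients;
vertCoeffs = vertH.Coefficients;
```

The same integral image can then be filtered with both horiH and vertH to obtain responses in the two directions.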

Object Functions

Examples

References

[1] Viola, Paul, and Michael J. Jones. “Rapid Object Detection using a Boosted Cascade of Simple Features”. Proceedings of the 2001 IEEE® Computer Society Conference on Computer Vision and Pattern Recognition. Vol. 1, 2001, pp. 511–518.

Version History

Introduced in R2012a

See Also

detectMSERFeatures | integralImage | integralFilter | detectSURFFeatures | SURFPoints