Accelerate Radar Simulations on NVIDIA GPUs Using GPU Coder

Learn how GPU Coder™ enables you to accelerate high-compute applications in signal and image processing on NVIDIA® GPUs. Using a SAR processing example, we demonstrate how you can reduce simulation time by orders of magnitude.

Published: 27 Dec 2019

The massively parallel computational capacity of GPUs is enabling and accelerating a wide range of high-performance computing tasks. In this video, we will consider how this applies to radar applications and how we can accelerate such applications on GPUs using GPU Coder.

We will use a synthetic aperture radar (SAR) processing example for this illustration, since SAR is commonly used in airborne radars and is increasingly used in automotive radar applications.

SAR uses the motion of the radar antenna over a target region to provide finer spatial resolution than conventional beam-scanning radars.

Here we have our raw echo data for three point targets, and the plot indicates the motion of the antenna.

You synthesize the SAR image from the successive recorded radar echoes of the pulses used to illuminate the target from a moving antenna.

Here we have our algorithm, which uses time-domain backprojection to construct the SAR image for the three points. Note that the algorithm is highly parallel: it consists of three nested for loops.
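
The algorithm shown in the video is MATLAB code and is not reproduced in this transcript. As a rough illustration of the three-loop structure, here is a minimal pure-Python sketch of a time-domain reconstruction (commonly called backprojection in the SAR literature). Everything in it is an assumption made for illustration: the geometry (antenna moving along the x axis at y = 0), the constants `WAVELENGTH`, `R0`, `DR`, and `N_BINS`, the idealized echo model in `simulate_echoes`, and the nearest-bin lookup. It is not the video's implementation.

```python
import cmath
import math

# All constants below are illustrative assumptions, not values from the video.
WAVELENGTH = 0.03   # carrier wavelength in metres
R0 = 990.0          # range of the first echo sample in metres
DR = 0.5            # spacing between range bins in metres
N_BINS = 64         # number of range bins recorded per pulse


def range_bin(r):
    """Index of the range bin nearest to range r (metres)."""
    return int(round((r - R0) / DR))


def simulate_echoes(antenna_xs, targets):
    """Idealized raw data: one unit-amplitude sample per pulse and point target."""
    echoes = [[0j] * N_BINS for _ in antenna_xs]
    for p, u in enumerate(antenna_xs):
        for tx, ty in targets:
            r = math.hypot(tx - u, ty)
            # Two-way propagation phase for a target at range r.
            echoes[p][range_bin(r)] += cmath.exp(-4j * math.pi * r / WAVELENGTH)
    return echoes


def backproject(echoes, antenna_xs, xs, ys):
    """Form an image with three nested loops: rows, columns, pulses."""
    image = [[0j] * len(xs) for _ in ys]
    for iy, y in enumerate(ys):                   # loop 1: image rows
        for ix, x in enumerate(xs):               # loop 2: image columns
            acc = 0j
            for p, u in enumerate(antenna_xs):    # loop 3: pulses
                r = math.hypot(x - u, y)
                b = range_bin(r)
                if 0 <= b < N_BINS:
                    # Undo the propagation phase so echoes from this pixel add coherently.
                    acc += echoes[p][b] * cmath.exp(4j * math.pi * r / WAVELENGTH)
            image[iy][ix] = acc
    return image
```

Each pixel's value depends only on the recorded echoes, never on another pixel, which is why the two pixel loops are natural candidates for parallel execution. Calling `backproject(simulate_echoes(us, targets), us, xs, ys)` with a small pixel grid around the targets gives an image whose magnitude peaks at the target positions.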

So we get the expected SAR image of the three points, but let’s take note of the processing time. The algorithm took about 3000 seconds on my desktop CPU for input data of size 2000x10000.

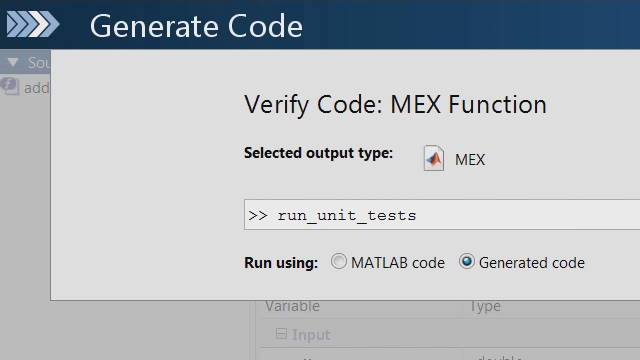

To leverage the parallelism of this algorithm and accelerate it on the GPU, we are going to use the MEX workflow, where we generate CUDA code from this algorithm and run the compiled CUDA code from MATLAB.

You can see that the compiled CUDA code runs about two orders of magnitude faster.

The code generation report gives you additional details and insights on the generated code.

For instance, the green highlights here indicate which parts of the generated code are mapped to the GPU. In this example, the entire function was mapped to the GPU.

The metrics report shows additional details about the kernels, as well as the thread and block allocation. Here the generated code has a single kernel, and we are only using four blocks.

But we have three loops in our algorithm, and there is potential to tweak the algorithm to make it more data parallel.

By simply moving these three lines of code into the inner loop, we make the two outer loops perfectly nested without altering the behavior of the code. If we run the newly generated MEX function, we see an additional 10x speedup. You can further control the parallelism by passing additional parameters, such as the block size, to the kernel pragma.
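
The three lines that were moved are visible only in the video, so the following Python sketch uses hypothetical stand-ins (`y`, `y_sq`, `row`) and a toy per-pixel computation (`pixel_value`) to show the idea. Loops are perfectly nested when no statements sit between the outer loop header and the inner one. Once that holds, the two loops can be treated as a single flat iteration space, as `image_flattened` shows. That is presumably what lets a code generator spread the work across many more GPU threads.

```python
import math


def pixel_value(x, y_sq, us):
    """Innermost (third) loop: accumulate one term per antenna position."""
    acc = 0.0
    for u in us:
        acc += math.cos(math.sqrt((x - u) ** 2 + y_sq))
    return acc


def image_imperfect(xs, ys, us):
    """Outer loops are NOT perfectly nested: three setup lines sit between them."""
    image = [[0.0] * len(xs) for _ in ys]
    for iy in range(len(ys)):
        y = ys[iy]            # hypothetical setup line 1
        y_sq = y * y          # hypothetical setup line 2
        row = image[iy]       # hypothetical setup line 3
        for ix in range(len(xs)):
            row[ix] = pixel_value(xs[ix], y_sq, us)
    return image


def image_perfect(xs, ys, us):
    """Same result, with the setup lines moved into the inner loop body."""
    image = [[0.0] * len(xs) for _ in ys]
    for iy in range(len(ys)):
        for ix in range(len(xs)):
            y = ys[iy]
            y_sq = y * y
            row = image[iy]
            row[ix] = pixel_value(xs[ix], y_sq, us)
    return image


def image_flattened(xs, ys, us):
    """Perfect nesting lets both loops collapse into one index k over all pixels."""
    nx = len(xs)
    image = [[0.0] * nx for _ in ys]
    for k in range(len(ys) * nx):
        iy, ix = divmod(k, nx)   # recover the 2-D pixel index from the flat index
        y = ys[iy]
        image[iy][ix] = pixel_value(xs[ix], y * y, us)
    return image
```

The restructured versions recompute the setup values once per pixel, which is a small amount of extra arithmetic in exchange for a loop nest that exposes every pixel as an independent unit of work.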

Taking this a step further, we can use gpucoder.profile to get timing information that shows the relative time spent executing the kernel versus the time spent moving data to and from the GPU.

This can help you identify further ways to update your application and improve its performance.

These same principles can be used to accelerate other signal processing and radar applications as well as computer vision and image processing applications.

Please refer to the documentation links posted below the video to learn more.