Deep Learning on Jetson AGX Xavier Using MATLAB, GPU Coder, and TensorRT

Designing and deploying deep learning and computer vision applications to embedded GPUs is challenging because of the resource constraints inherent in embedded devices. A MATLAB®-based workflow facilitates the design of these applications, and automatically generated CUDA® code can be deployed to boards such as the Jetson AGX Xavier to achieve fast inference.

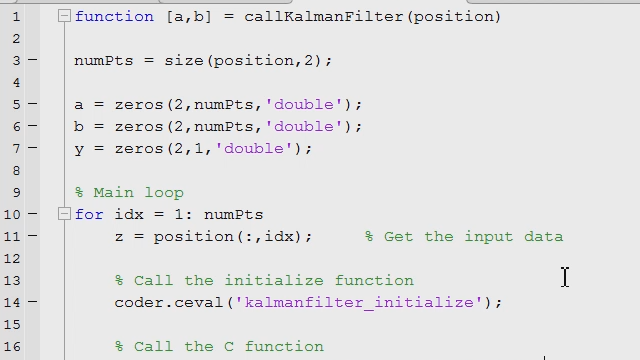

The presentation illustrates how MATLAB supports all major phases of this workflow: acquiring data, designing and training deep learning networks augmented with traditional computer vision techniques, and deploying them with GPU Coder™, which generates portable and optimized CUDA® code from the complete MATLAB algorithm. The generated code is then cross-compiled and deployed to the Jetson AGX Xavier board.

To illustrate the workflow, we will use a defective product detection example from a machine vision context. The example combines a deep neural network with traditional computer vision techniques to identify defective hex screws.
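The general shape of such a hybrid pipeline can be sketched in a few lines. The sketch below is not the code from the talk, which is written in MATLAB; it is a minimal pure-Python illustration under stated assumptions: a traditional thresholding step locates the part in a grayscale image, and the cropped region is handed to a classifier. The names `find_bounding_box`, `crop`, `inspect_part`, and `placeholder_classifier` are hypothetical, and the classifier is a trivial stand-in for a trained network.

```python
from typing import Callable, List, Optional, Tuple

Image = List[List[int]]          # grayscale image as rows of 0-255 pixel values
Box = Tuple[int, int, int, int]  # (top, left, bottom, right), all inclusive


def find_bounding_box(image: Image, threshold: int = 128) -> Optional[Box]:
    """Traditional CV step: threshold the image and box the bright pixels."""
    rows = [r for r, row in enumerate(image) if any(p >= threshold for p in row)]
    cols = [c for row in image for c, p in enumerate(row) if p >= threshold]
    if not rows:
        return None  # nothing brighter than the threshold was found
    return (min(rows), min(cols), max(rows), max(cols))


def crop(image: Image, box: Box) -> Image:
    """Cut the boxed region out of the image."""
    top, left, bottom, right = box
    return [row[left:right + 1] for row in image[top:bottom + 1]]


def inspect_part(image: Image, classifier: Callable[[Image], str]) -> str:
    """Locate the part with thresholding, then classify the cropped region."""
    box = find_bounding_box(image)
    if box is None:
        return "no part found"
    return classifier(crop(image, box))


def placeholder_classifier(patch: Image) -> str:
    """Stand-in for a trained network (assumption): a dark pixel inside
    the cropped region is treated as a defect."""
    has_dark_pixel = any(p < 128 for row in patch for p in row)
    return "defective" if has_dark_pixel else "good"


if __name__ == "__main__":
    part = [
        [0, 0, 0, 0],
        [0, 255, 255, 0],
        [0, 255, 255, 0],
        [0, 0, 0, 0],
    ]
    print(inspect_part(part, placeholder_classifier))
```

In a real application the placeholder would be replaced by inference on the trained network, and the localization step would typically be more robust than a single global threshold.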

Highlights

Watch this talk to learn how to:

- Access and manage image sets used for training the deep learning network.

- Visualize networks and gain insight into the training process.

- Exchange networks with other deep learning frameworks through ONNX import and export, and work with reference networks such as ResNet-50 and GoogLeNet.

- Automatically generate portable and optimized CUDA code from the MATLAB algorithm for NVIDIA GPUs such as the Jetson AGX Xavier.

Published: 1 Feb 2019