Developing an Underwater 3D Camera with Range-Gated Imaging

By Jens Thielemann, Petter Risholm, and Karl H. Haugholt, SINTEF

Underwater optical imaging can provide much higher resolution images than sonar. The clarity of these images, however, depends on water quality. In turbid water, active illumination (used in low-light situations) causes backscatter: light reflects off particles in the water back toward the camera, the same effect that makes it difficult to drive in fog.

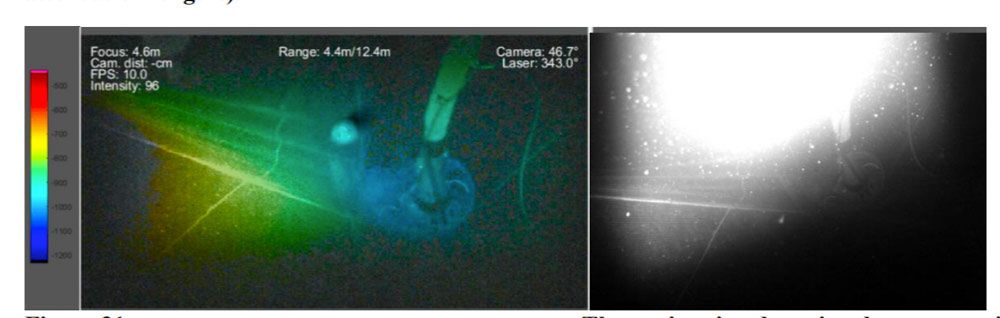

To address this challenge, SINTEF worked with partners across the EU to develop UTOFIA, an imaging system for turbid environments (Figure 1).

Figure 1. The UTOFIA camera system.

The UTOFIA camera delivers 3D images at 10–20 frames per second, with a range of up to 15 meters and a resolution of one centimeter, and it operates at depths of up to 300 meters. It uses range-gated imaging (see sidebar) to minimize the effects of backscatter and to obtain range information for objects in its field of view (Figure 2).

We developed algorithms to process raw data from the camera and produce 3D, backscatter-free images. Because we were working in a new domain, we needed to test new ideas quickly. Thanks to the integrated environment of MATLAB®, with its strong visualization support, we were able to try more than 40 different approaches and techniques. In Python® or C++, each implementation and test would have taken much longer, and we would likely have had time to test only a handful.

Range-Gated Imaging

Instead of illuminating a target with a constant stream of light, range-gated imaging uses nanosecond-long pulses of light produced by a strobed laser. Light reflected by particles in front of the target returns to the camera slightly earlier than light reflected by the target itself. By timing the camera shutter to open only when light from the target's distance arrives, we capture the light reflected by the target and very little of the light reflected by particles in the water (Figure 3). In addition, we can accurately determine the distance to the target from the time-of-flight of the light pulses: because each pulse travels to the target and back, the distance is half the round-trip time multiplied by the speed of light in water.
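The time-of-flight relation can be sketched in a few lines of Python. This is a minimal illustration, not the UTOFIA software: the refractive index of 1.33 for seawater is an assumption (the real value varies with salinity, temperature, and wavelength), and the function names are hypothetical. Read in the other direction, the same relation gives the delay after each laser pulse at which the shutter should open to image a chosen range.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
C_VACUUM = 299_792_458.0
# Assumed refractive index of seawater; the real value depends on salinity,
# temperature, and wavelength.
N_WATER = 1.33


def speed_in_water(n: float = N_WATER) -> float:
    """Speed of light in a medium with refractive index n (m/s)."""
    return C_VACUUM / n


def distance_from_tof(round_trip_s: float, n: float = N_WATER) -> float:
    """Distance to the target: the pulse travels out and back, so halve the path."""
    return speed_in_water(n) * round_trip_s / 2.0


def gate_delay_for_range(range_m: float, n: float = N_WATER) -> float:
    """Delay after the laser pulse at which light from range_m returns (s)."""
    return 2.0 * range_m / speed_in_water(n)


if __name__ == "__main__":
    delay = gate_delay_for_range(10.0)
    print(f"Gate delay for a target at 10 m: {delay * 1e9:.1f} ns")
    print(f"Round-trip time per centimeter: {gate_delay_for_range(0.01) * 1e12:.1f} ps")
```

Under these assumptions, a target 10 meters away corresponds to a gate delay of about 89 ns, and each centimeter of range corresponds to roughly 89 ps of round-trip time, which shows how fine the timing differences behind centimeter-scale range measurements are.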

Initial Data Analysis and Peak Detection

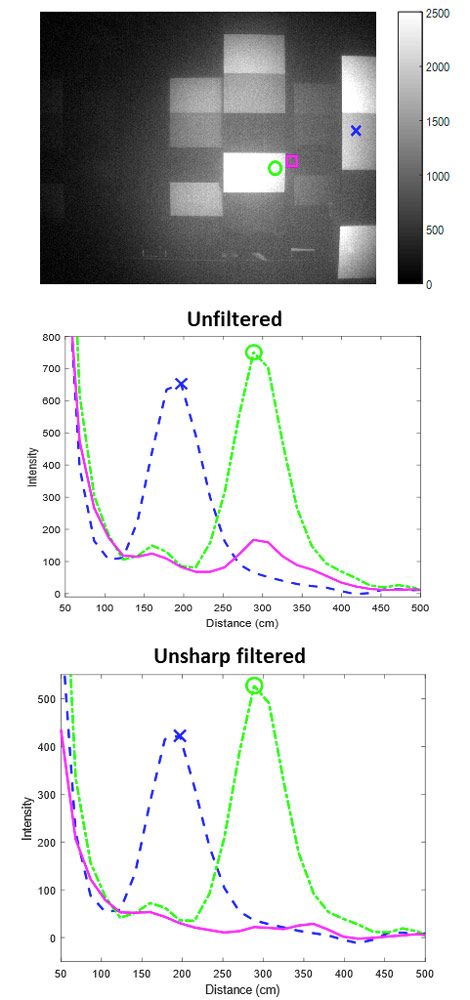

Unlike a standard digital camera, which produces 2D arrays of pixels, our camera produces 3D arrays of voxels: the value recorded in each voxel is the intensity of light reflected from a specific location in the field of view at a specific distance from the camera. To extract useful images from the multiple gigabytes of data generated by the camera, our algorithms must identify the peaks in these intensity values along the distance axis (Figure 4). External factors shift the peak positions, and scattering in the water introduces false peaks; both reduce the clarity of the resulting image and the quality of the 3D reconstruction.

Figure 4. Peaks in intensity as a function of distance (middle and bottom) for points in a captured image (top).
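One simple way to find these peaks is to take, for each pixel, the range bin with the highest intensity and refine its position to a fraction of a bin by fitting a parabola through that bin and its two neighbors. The Python sketch below illustrates that idea under stated assumptions: the data cube is a nested list indexed as `cube[y][x][k]` with `k` the range bin, and `min_intensity` is a hypothetical threshold for rejecting pixels with no usable return. It is not the peak detection or peak fitting the UTOFIA team developed.

```python
from typing import List, Optional, Sequence


def refine_peak(profile: Sequence[float], k: int) -> float:
    """Sub-bin peak position from a parabola through bins k-1, k, k+1."""
    if k == 0 or k == len(profile) - 1:
        return float(k)  # a neighbor is missing, so keep the integer bin
    a, b, c = profile[k - 1], profile[k], profile[k + 1]
    denom = a - 2.0 * b + c
    if denom == 0.0:
        return float(k)  # flat top: no unique vertex
    # Vertex of the parabola through (-1, a), (0, b), (1, c), offset from bin k.
    return k + 0.5 * (a - c) / denom


def detect_peak(profile: Sequence[float], min_intensity: float) -> Optional[float]:
    """Strongest bin of one pixel's range profile, or None if it is too weak."""
    k = max(range(len(profile)), key=lambda i: profile[i])
    if profile[k] < min_intensity:
        return None
    return refine_peak(profile, k)


def peak_map(cube: Sequence[Sequence[Sequence[float]]],
             min_intensity: float) -> List[List[Optional[float]]]:
    """Fractional peak bin for every pixel; cube[y][x][k] is intensity at bin k."""
    return [[detect_peak(profile, min_intensity) for profile in row] for row in cube]
```

Multiplying the fractional bin index by the (assumed known) bin spacing converts it to a distance. Taking the strongest bin is the weak point of this sketch: near the camera, backscatter can produce a peak stronger than a distant target's, which is exactly the kind of false peak described above.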

To understand the mechanisms at work, we performed extensive statistical analyses on the data for various water turbidities and camera settings. These analyses involved building empirical models of backscatter, investigating the properties of forward scattering, and modeling detector response properties.
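As an illustration of what an empirical backscatter model can look like, the sketch below assumes that backscatter intensity decays exponentially with range, I(r) = A * exp(-beta * r), and estimates A and beta by ordinary least squares on ln I(r). The exponential form, the fitting method, and the function names are assumptions for illustration; the article does not state which model the team used.

```python
import math
from typing import Sequence, Tuple


def fit_exponential_backscatter(ranges: Sequence[float],
                                intensities: Sequence[float]) -> Tuple[float, float]:
    """Fit I(r) = A * exp(-beta * r) by least squares on ln I; returns (A, beta)."""
    # The logarithm is only defined for positive samples, so skip the rest.
    pts = [(r, math.log(i)) for r, i in zip(ranges, intensities) if i > 0]
    if len(pts) < 2:
        raise ValueError("need at least two positive samples")
    n = len(pts)
    mean_r = sum(r for r, _ in pts) / n
    mean_y = sum(y for _, y in pts) / n
    sxx = sum((r - mean_r) ** 2 for r, _ in pts)
    if sxx == 0.0:
        raise ValueError("samples must span more than one range")
    sxy = sum((r - mean_r) * (y - mean_y) for r, y in pts)
    slope = sxy / sxx                    # slope of ln I versus r, equal to -beta
    intercept = mean_y - slope * mean_r  # ln A
    return math.exp(intercept), -slope


def backscatter_model(r: float, a: float, beta: float) -> float:
    """Predicted backscatter intensity at range r (same units as the fit)."""
    return a * math.exp(-beta * r)
```

Fitting such a model to gated frames with no target in view, once per turbidity level, would give a decay rate per turbidity that can be compared across conditions or subtracted from new measurements.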

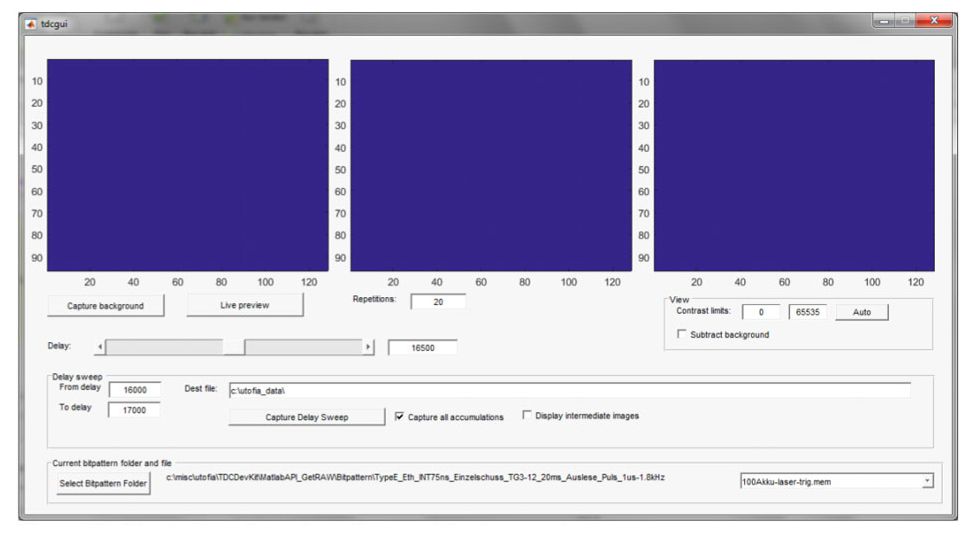

We also developed a MATLAB app to automate and control the data capture process (Figure 5). The app includes interface elements to control the pulse sweeps and a .NET interface that we used to configure capture settings and other camera components.

Developing Algorithms for 3D Reconstruction

The camera hardware diminishes backscatter significantly, but we knew that we could reduce its effects still further in software. We developed a model of backscatter response across turbidities and implemented several algorithms for reducing backscatter effects. We explored many alternatives, including homomorphic filtering and variations of histogram equalization, before settling on unsharp filtering, which also improved our 3D results. In addition, we developed algorithms for camera calibration, 3D estimation, peak detection, and peak fitting.
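Unsharp filtering (also called unsharp masking) sharpens an image by adding back a scaled copy of the difference between the image and a blurred version of it: out = img + amount * (img - blur(img)). The sketch below uses a box blur with clamped edges so that it needs only the Python standard library; a Gaussian blur is the more common choice. The `amount` and `radius` parameters are illustrative, and this is not the team's implementation.

```python
from typing import List, Sequence

Image = List[List[float]]


def box_blur(img: Sequence[Sequence[float]], radius: int = 1) -> Image:
    """Mean over a (2*radius+1)^2 window, clamping coordinates at the borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def unsharp_mask(img: Sequence[Sequence[float]], amount: float = 1.0,
                 radius: int = 1) -> Image:
    """Sharpen by adding amount * (img - blurred) back to the image."""
    blurred = box_blur(img, radius)
    return [[p + amount * (p - b) for p, b in zip(row, brow)]
            for row, brow in zip(img, blurred)]
```

With `amount=1.0`, a single bright pixel on a dark background becomes brighter while its immediate neighbors dip below zero; this overshoot at edges is what makes contours look sharper, and in practice the result is usually clipped back to the valid intensity range.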

Visualizing Image Data

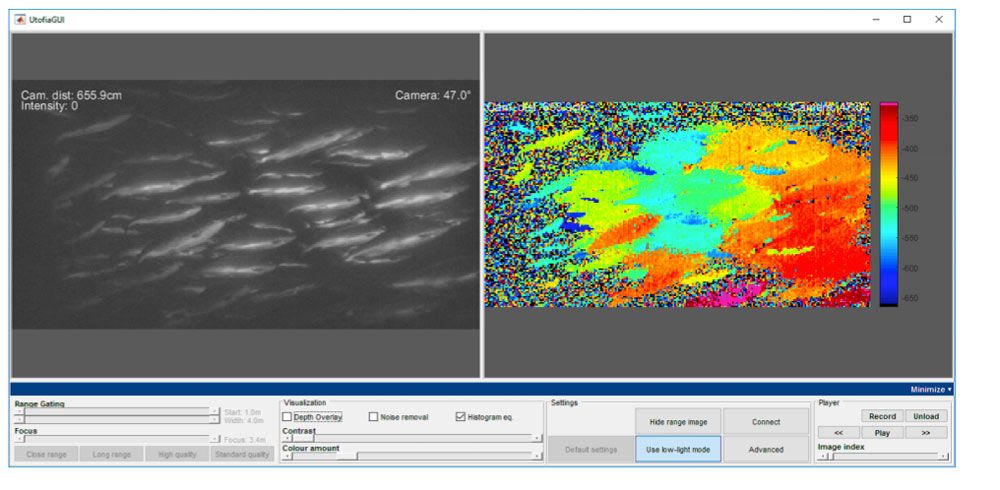

Once we had analyzed the data and developed 3D reconstruction algorithms, we needed to share the results they produced with other organizations in the UTOFIA consortium. To do this, we built a second MATLAB app for visualizing UTOFIA image data (Figure 6). This app includes controls for adjusting options and algorithm parameters, including contrast, focus, noise removal, and histogram equalization. Users can set these parameters and immediately see the effects on screen.

We packaged a standalone version with MATLAB Compiler™ and distributed it to our partners, who provided us with feedback and enhancement requests. Using MATLAB and MATLAB Compiler, we could implement the changes they requested in a few days. Implementing these changes in C/C++ or a similar language would have taken weeks, if not months.
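Histogram equalization, one of the options the visualization app exposes, spreads an image's intensity values over the full display range by mapping each gray level through the cumulative histogram. The sketch below shows the textbook global version for integer images with `levels` gray levels (256 for 8-bit data); the article does not describe how the app implements it, so treat this as an illustrative assumption.

```python
from typing import List, Sequence


def equalize_histogram(img: Sequence[Sequence[int]], levels: int = 256) -> List[List[int]]:
    """Global histogram equalization for an integer image with values in [0, levels)."""
    hist = [0] * levels
    for row in img:
        for v in row:
            hist[v] += 1
    # Cumulative histogram: cdf[v] = number of pixels with value <= v.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    total = running
    if total == 0:
        return []  # empty image
    cdf_min = next(c for c in cdf if c > 0)  # count at the darkest occupied level
    if total == cdf_min:
        return [list(row) for row in img]  # a single gray level: nothing to spread
    # Lookup table stretching the occupied range of the CDF to [0, levels - 1].
    lut = [max(0, round((c - cdf_min) / (total - cdf_min) * (levels - 1))) for c in cdf]
    return [[lut[v] for v in row] for row in img]
```

Global equalization can over-amplify noise in large, uniform regions such as open water; adaptive (tile-based) variants limit that effect.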

Continued Development

We have completed the first phase of the UTOFIA project, the development of the camera and its core software. We are now performing additional processing on the image and 3D data for industry-specific applications and looking into the second phase of the project: applying machine learning and deep learning to the images to recognize objects and other phenomena.

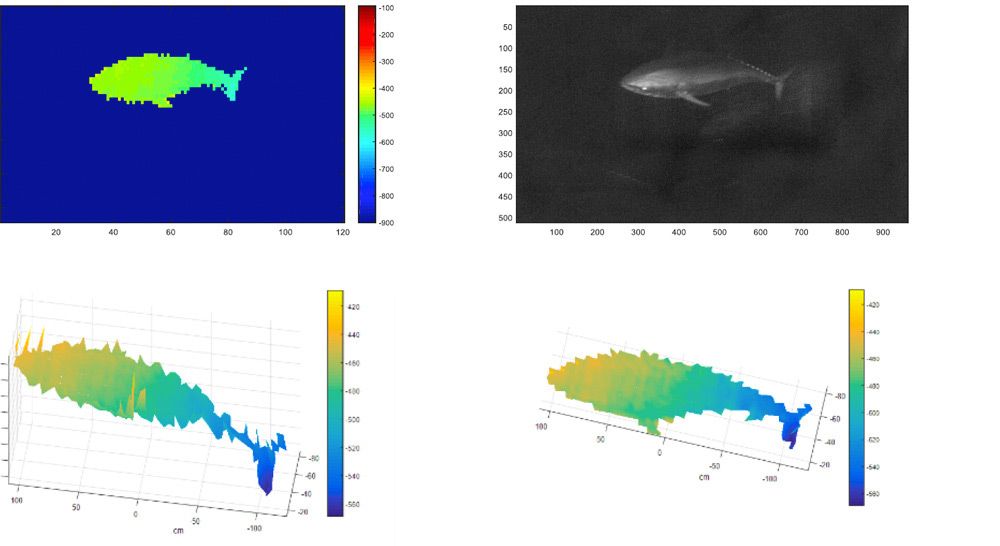

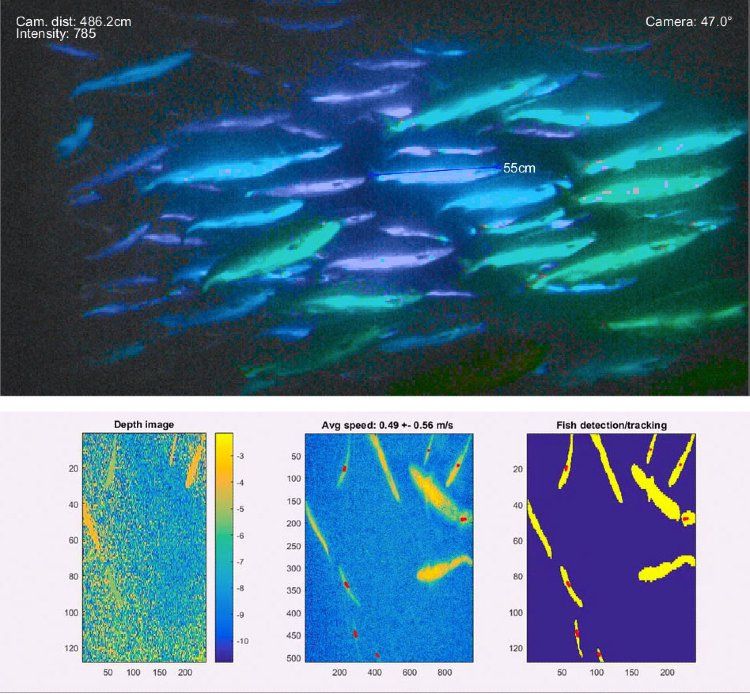

The availability of real-time 3D data has opened new possibilities for improving processes in the fishery and aquaculture industries, particularly in the area of automated, quantitative analysis. For example, at an aquaculture facility in Spain, we used the camera to identify and measure the length of red tuna (Figure 7).
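With a depth value at every pixel, a fish's length can be estimated by back-projecting two image points, for example the snout and the tail fork, into 3D with a pinhole camera model and measuring the distance between them. In the sketch below, the intrinsic parameters (`fx`, `fy`, `cx`, `cy`), the choice of endpoints, and the assumption that each depth `z` is measured along the optical axis are all hypothetical; this is not the measurement procedure used in the trial.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Point3 = Tuple[float, float, float]
PixelDepth = Tuple[float, float, float]  # (u, v) in pixels, z in meters


@dataclass
class Intrinsics:
    """Hypothetical pinhole intrinsics: focal lengths and principal point, in pixels."""
    fx: float
    fy: float
    cx: float
    cy: float


def back_project(u: float, v: float, z: float, k: Intrinsics) -> Point3:
    """3D point (meters) for pixel (u, v) with depth z along the optical axis."""
    return ((u - k.cx) * z / k.fx, (v - k.cy) * z / k.fy, z)


def straight_length(snout: PixelDepth, tail: PixelDepth, k: Intrinsics) -> float:
    """Straight-line distance between two back-projected image points, in meters."""
    return math.dist(back_project(*snout, k), back_project(*tail, k))
```

A straight-line distance underestimates the length of a fish that is bending as it swims, so a practical measurement would pick frames where the fish is straight or sum distances along its midline.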

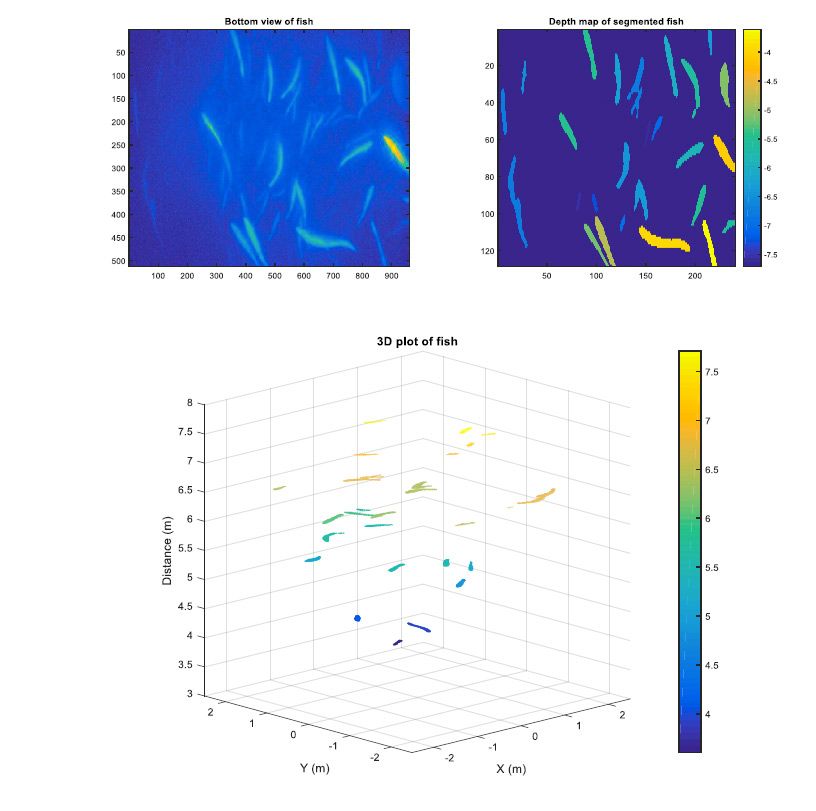

At a research facility in Norway, we used UTOFIA for behavior analysis, tracking individual fish over time to estimate swimming speed and patterns (Figure 8).
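Once each fish has been tracked across frames (the tracking step itself is not shown), its swimming speed follows from the distance between consecutive 3D positions multiplied by the frame rate, which for this camera is 10 to 20 frames per second. The sketch assumes a track with one (x, y, z) position in meters for every frame and no missed detections; the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def frame_speeds(track: Sequence[Point3], fps: float) -> List[float]:
    """Speed (m/s) between each pair of consecutive positions in one fish's track."""
    # Distance moved in one frame interval, divided by the interval 1 / fps.
    return [math.dist(a, b) * fps for a, b in zip(track, track[1:])]


def mean_speed(track: Sequence[Point3], fps: float) -> float:
    """Average of the per-frame speeds; 0.0 for tracks shorter than two frames."""
    speeds = frame_speeds(track, fps)
    return sum(speeds) / len(speeds) if speeds else 0.0
```

Per-frame position noise inflates speeds estimated this way, so smoothing the track before differencing it is usually worthwhile.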

Meanwhile, in aquaculture trials of the camera, fish and other marine life are being observed in low light and high turbidity conditions for biomass estimation (Figure 9).

These conditions would have been impenetrable with a traditional underwater camera.

Published 2019


Learn More

- Petter Risholm, Jostein Thorstensen, Jens T. Thielemann, Kristin Kaspersen, Jon Tschudi, Chris Yates, Chris Softley, Igor Abrosimov, Jonathan Alexander, and Karl Henrik Haugholt, "Real-time super-resolved 3D in turbid water using a fast range-gated CMOS camera," Appl. Opt. 57, 3927-3937 (2018).