Battelle Neural Bypass Technology Restores Movement to a Paralyzed Man’s Arm and Hand

“The algorithms we developed using MATLAB gave the participant back basic control of his arm and hand. By the end of the study, he could grip a bottle, pour out its contents, and set it down, as well as pick up a stir stick and execute a stirring motion.”

Challenge

Restore arm and hand control to a quadriplegic man by processing signals from an electrode array implanted in his brain

Solution

Use MATLAB to analyze signal samples, apply machine learning to classify patterns mapped to movements, and generate actuation signals for a neuromuscular electrical stimulator

Results

- Control over paralyzed hand and arm restored

- Real-time processing performance achieved

- Interdisciplinary collaboration enabled

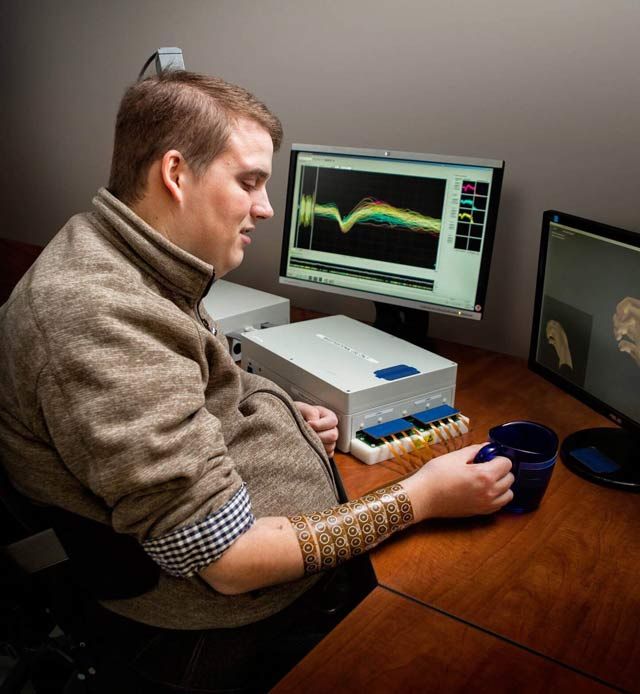

Patient using the Battelle NeuroLife system.

When disease or injury disrupts the neural pathways connecting the brain’s motor cortex to muscles, the result is often permanent paralysis. A team of engineers, scientists, and statisticians at Battelle has developed a technology that bypasses damaged neural pathways. Known as Battelle NeuroLife™, the system is the first ever to successfully restore muscle control using intracortically recorded neural signals in a human. It has enabled a quadriplegic man to regain control of his right forearm, hand, and fingers.

NeuroLife includes signal processing and machine learning algorithms developed in MATLAB®. These algorithms process and interpret signals from a microelectrode array implanted in the study participant’s brain. When the participant thinks of a specific hand movement, the algorithms decode the resultant brain signals, identify the intended movement, and generate signals that stimulate the patient’s arm to perform the movement.

Challenge

Neurosurgeons from The Ohio State University Wexner Medical Center implanted a microelectrode array into the volunteer participant’s left primary motor cortex. The array uses 96 individual electrodes to record neural activity. Each electrode is sampled at 30,000 samples per second, so the array as a whole produces almost 300,000 samples every 100 milliseconds.
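The data volume follows directly from the channel count and the sampling rate. The short Python sketch below works through that arithmetic; the function name is illustrative, and it assumes the 30,000 samples per second figure applies to each electrode.

```python
def samples_per_window(channels: int, rate_hz: int, window_ms: int) -> int:
    """Total samples produced by all channels in one processing window."""
    return channels * rate_hz * window_ms // 1000


# Figures from the text: 96 electrodes, 30,000 samples/s, 100 ms windows.
per_channel = samples_per_window(1, 30_000, 100)
whole_array = samples_per_window(96, 30_000, 100)
print(per_channel, whole_array)  # 3000 288000
```

The 288,000 total is the "almost 300,000" cited above.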

To translate this data into specific hand movements, Battelle engineers needed to extract meaningful features, apply classification algorithms to identify patterns in those features, and map the patterns to the participant’s intended hand movements. The engineers then needed to control 130 channels of a neuromuscular electrical stimulator (NMES) sleeve on the participant’s right arm. Even a seconds-long delay between thought and movement would have made the movement too unnatural, rendering the entire system impractical. As a result, all data processing, classification, and decoding had to be done in real time. To achieve performance approaching natural motion, the system had to update 10 times per second, which meant completing all processing steps in less than 100 milliseconds.
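One way to picture the real-time constraint is as a per-update time budget: at 10 updates per second, each pass through the processing chain has to finish in under 100 milliseconds. The Python sketch below times a single update against that budget. The `timed_update` helper and the placeholder step are hypothetical and do not represent Battelle's implementation.

```python
import time
from typing import Callable, Tuple

UPDATE_BUDGET_S = 0.100  # 10 updates per second leaves 100 ms per update


def timed_update(step: Callable[[], None],
                 budget_s: float = UPDATE_BUDGET_S,
                 clock: Callable[[], float] = time.perf_counter) -> Tuple[float, bool]:
    """Run one processing step; return (elapsed seconds, finished within budget)."""
    start = clock()
    step()
    elapsed = clock() - start
    return elapsed, elapsed < budget_s


def placeholder_step() -> None:
    """Stand-in for feature extraction, classification, and stimulator output."""
    sum(i * i for i in range(10_000))


elapsed_s, within_budget = timed_update(placeholder_step)
print(f"update took {elapsed_s * 1000:.2f} ms, within budget: {within_budget}")
```

Passing the clock in as a parameter keeps the budget check testable without depending on real elapsed time.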

Solution

Battelle used MATLAB to develop signal processing and machine learning algorithms and run the algorithms in real time.

The participant was shown a computer-generated virtual hand performing movements such as wrist flexion and extension, thumb flexion and extension, and hand opening and closing, and instructed to think about making the same movements with his own hand.

Working in MATLAB, the team developed algorithms to analyze data from the 96 channels in the implanted electrode array. Using Wavelet Toolbox™, they performed wavelet decomposition to isolate the frequency ranges of the brain signals that govern movement.

They then transformed the results of the decomposition in MATLAB to calculate mean wavelet power (MWP), reducing the 3000 features captured for a single channel during each 100-millisecond window to a single value.
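The idea of collapsing a window of wavelet coefficients into one power value per channel can be sketched in a few lines. The Python example below is a simplified stand-in, not the team's MATLAB code: it substitutes a hand-written Haar transform for Wavelet Toolbox, and the choice of levels and the exact MWP definition (mean squared detail coefficient per level, averaged across levels) are assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple


def haar_step(signal: Sequence[float]) -> Tuple[List[float], List[float]]:
    """One level of the orthonormal Haar transform: (approximation, detail)."""
    n = len(signal) - len(signal) % 2  # ignore a trailing unpaired sample
    s = 1.0 / math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) * s for i in range(0, n, 2)]
    detail = [(signal[i] - signal[i + 1]) * s for i in range(0, n, 2)]
    return approx, detail


def mean_wavelet_power(window: Sequence[float],
                       levels: Sequence[int] = (1, 2, 3)) -> float:
    """Collapse one channel's window into a single number.

    Assumed definition: the mean squared detail coefficient at each
    selected level, averaged across those levels.
    """
    approx = list(window)
    level_powers = []
    for level in range(1, max(levels) + 1):
        approx, detail = haar_step(approx)
        if not detail:
            raise ValueError("window too short for the requested levels")
        if level in levels:
            level_powers.append(sum(c * c for c in detail) / len(detail))
    return sum(level_powers) / len(level_powers)


# Synthetic stand-in for one 100 ms window: 96 channels x 3000 samples.
window = [[math.sin(0.01 * (ch + 1) * t) for t in range(3000)]
          for ch in range(96)]
features = [mean_wavelet_power(channel) for channel in window]
print(len(features))  # 96
```

Each channel contributes one number, so a full window yields a 96-element feature vector.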

The resulting 96 MWP values were used as feature vectors for machine learning algorithms that translate the features into individual movements.

The team used MATLAB to test several machine learning techniques, including discriminant analysis and support vector machines (SVM), settling on a custom SVM optimized for performance.
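To make the classification step concrete, the Python sketch below trains a small linear SVM by subgradient descent on the hinge loss and combines one binary classifier per movement in a one-vs-rest scheme. This is not the custom SVM described above, whose details are not given here. The kernel, training method, hyperparameters, movement labels, and two-dimensional toy features (the real feature vectors have 96 values) are all assumptions.

```python
from typing import Dict, List, Sequence, Tuple

Model = Tuple[List[float], float]  # (weights, bias)


def train_linear_svm(X: Sequence[Sequence[float]], y: Sequence[int],
                     lam: float = 0.01, lr: float = 0.01,
                     epochs: int = 500) -> Model:
    """Binary linear SVM (labels +1/-1) via subgradient descent on hinge loss."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            w = [wj * (1.0 - lr * lam) for wj in w]  # L2 regularization shrink
            if margin < 1.0:  # sample is inside the margin or misclassified
                w = [wj + lr * yi * xj for wj, xj in zip(w, xi)]
                b += lr * yi
    return w, b


def decision(model: Model, x: Sequence[float]) -> float:
    """Signed score of x under one binary model."""
    w, b = model
    return sum(wj * xj for wj, xj in zip(w, x)) + b


def train_one_vs_rest(X: Sequence[Sequence[float]],
                      labels: Sequence[str]) -> Dict[str, Model]:
    """Train one binary SVM per movement label."""
    return {c: train_linear_svm(X, [1 if l == c else -1 for l in labels])
            for c in sorted(set(labels))}


def predict(models: Dict[str, Model], x: Sequence[float]) -> str:
    """Pick the movement whose classifier gives the highest score."""
    return max(models, key=lambda c: decision(models[c], x))


# Toy, well-separated 2-D features standing in for 96-D MWP vectors.
X = [(0.1, 0.2), (0.3, 0.1), (0.2, 0.3),
     (3.0, 0.2), (2.8, 0.1), (3.2, 0.4),
     (0.2, 3.1), (0.1, 2.9), (0.4, 3.0)]
labels = ["rest"] * 3 + ["wrist_flexion"] * 3 + ["hand_open"] * 3

models = train_one_vs_rest(X, labels)
print(predict(models, (3.0, 0.2)))
```

Once training is done, a linear decoder of this kind needs only one dot product per movement for each prediction.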

During testing sessions, the team trained the SVM by having the participant attempt the movements shown in the videos. They used the trained SVM’s output to animate a computer-generated virtual hand that the participant could manipulate on screen. The same SVM output was scaled and used to control the 130 channels of the NMES sleeve.
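The text states only that the SVM output was scaled before driving the sleeve, so the mapping in the Python sketch below is hypothetical. It clamps a decoder score to the range 0 to 1 and multiplies it into a per-movement pattern of 130 normalized channel amplitudes. The pattern values, the clamping rule, and the function name are illustrative assumptions, not stimulation parameters from the study.

```python
from typing import List

N_CHANNELS = 130  # stimulator channel count stated in the text


def scale_to_stimulation(score: float, pattern: List[float]) -> List[float]:
    """Scale a normalized per-channel pattern by a decoder score clamped to [0, 1]."""
    if len(pattern) != N_CHANNELS:
        raise ValueError(f"expected {N_CHANNELS} channel values, got {len(pattern)}")
    gain = min(max(score, 0.0), 1.0)
    return [gain * p for p in pattern]


# Hypothetical pattern: only the first 10 channels are active for this movement.
hand_open_pattern = [1.0] * 10 + [0.0] * (N_CHANNELS - 10)

output = scale_to_stimulation(0.5, hand_open_pattern)
print(output[:3], output[-1])  # [0.5, 0.5, 0.5] 0.0
```

Clamping keeps a large or negative score from producing an out-of-range command.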

While the participant moved his arm and hand to perform simple movements, all signal processing, decoding, and machine learning algorithms were run in MATLAB in real time on a desktop computer.

Battelle engineers are currently using MATLAB to develop algorithms for the second-generation NeuroLife system, which will incorporate accelerometers and other sensors to enable the control algorithms to monitor the position of the arm and detect fatigue.

Results

- Control over paralyzed hand and arm restored. “The algorithms we developed using MATLAB to decode signals from an implanted microelectrode array and actuate an NMES sleeve gave the participant back basic control of his arm and hand,” says David Friedenberg, principal research statistician at Battelle. “By the end of the study, he could grip a bottle, pour out its contents, and set it down, as well as pick up a stir stick and execute a stirring motion.”

- Real-time processing performance achieved. “Our algorithms performed all the necessary wavelet decomposition, decoding, and other processing within 60–70 milliseconds running in MATLAB,” says Nick Annetta, research scientist at Battelle.

- Interdisciplinary collaboration enabled. “I’m a statistician, Nick is an electrical engineer, and lots of other engineers and interns worked on the project,” says Friedenberg. “The whole team was comfortable with MATLAB—it was the language that we all had in common.”