Deploy Simple Adder Network by Using MATLAB Deployment Script and Deployment Instructions File

This example shows how to create a .dln file for deploying a pretrained adder network. Deploy and initialize the generated deep learning processor IP core and adder network by using a MATLAB® deployment utility script to parse the generated .dln file.

Prerequisites

Intel® Arria® 10 SoC development kit

Introduction

Generate a file that has instructions to communicate with the deployed deep learning processor IP core by using Deep Learning HDL Toolbox™. Initialize the deployed deep learning processor IP core without a MATLAB® connection by using a utility to parse and execute the instructions in the generated file. Use the example MATLAB® utility, MATLABDeploymentUtility.m, as a starting point to create your own custom utility. To deploy and initialize the generated deep learning processor IP core:

Create a .dln binary file.

Deploy the .dln file by using the MATLAB® utility script file.

Retrieve the prediction results by using MATLAB and the predict method.

Create Binary File

Create a dlhdl.Target object to deploy to a file. Provide the file name with the '.dln' extension. Filename is an optional parameter. If you do not provide Filename, the generated file name is the same as the name of the object in the Bitstream argument of the dlhdl.Workflow object.

hTargetFile = dlhdl.Target('Intel','Interface','File','Filename','AdderNWdeploymentData.dln');

Create a simple adder network and an object of the dlhdl.Workflow class.

image = randi(255, [3,3,4]);

% Create an adder-only network

inLayer = imageInputLayer(size(image), 'Name', 'data', 'Normalization', 'none');

addLayer = additionLayer(2, 'Name', 'add');

lgraph = layerGraph([inLayer, addLayer]);

lgraph = connectLayers(lgraph, 'data', 'add/in2');

snet = dlnetwork(lgraph);

hW = dlhdl.Workflow('network', snet, 'Bitstream', 'arria10soc_single','Target',hTargetFile);

Generate the network weights, biases, and deployment instructions by using the compile method of the dlhdl.Workflow object.

hW.compile

### Compiling network for Deep Learning FPGA prototyping ...

### Targeting FPGA bitstream arria10soc_single.

### The network includes the following layers:

1 'data' Image Input 3×3×4 images (SW Layer)

2 'add' Addition Element-wise addition of 2 inputs (HW Layer)

3 'output' Regression Output mean-squared-error (SW Layer)

### Notice: The layer 'data' with type 'nnet.cnn.layer.ImageInputLayer' is implemented in software.

### Notice: The layer 'output' with type 'nnet.cnn.layer.RegressionOutputLayer' is implemented in software.

### Allocating external memory buffers:

offset_name offset_address allocated_space

_______________________ ______________ ________________

"InputDataOffset" "0x00000000" "4.0 MB"

"OutputResultOffset" "0x00400000" "4.0 MB"

"SchedulerDataOffset" "0x00800000" "0.0 MB"

"SystemBufferOffset" "0x00800000" "20.0 MB"

"InstructionDataOffset" "0x01c00000" "4.0 MB"

"EndOffset" "0x02000000" "Total: 32.0 MB"

### Network compilation complete.

ans = struct with fields:

weights: []

instructions: [1×1 struct]

registers: []

syncInstructions: [1×1 struct]

constantData: {}
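The offsets in the external memory buffer table above are consistent with the buffers being packed back to back, each one starting where the previous one ends. The following is a minimal sketch of that arithmetic, assuming contiguous packing and 1 MB = 2^20 bytes. The variable names are illustrative and are not part of the toolbox.

```matlab
% Buffer names and sizes (in MB) copied from the compile output above.
names   = ["InputDataOffset", "OutputResultOffset", "SchedulerDataOffset", ...
           "SystemBufferOffset", "InstructionDataOffset"];
sizesMB = [4 4 0 20 4];
bytesPerMB = 2^20;

% Assumption: each buffer starts where the previous one ends.
offsets   = [0, cumsum(sizesMB(1:end-1))] * bytesPerMB;
endOffset = sum(sizesMB) * bytesPerMB;

for k = 1:numel(names)
    fprintf('%-24s 0x%08x\n', names(k), offsets(k));
end
fprintf('%-24s 0x%08x\n', "EndOffset", endOffset);
```

Under this assumption, the computed InstructionDataOffset and EndOffset agree with the 0x01c00000 and 0x02000000 values reported in the table.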

To generate the .dln file, use the deploy method of the dlhdl.Workflow object.

hW.deploy

WR@ADDR: 0x00000000 Len: 1: 0x00000001 WR@ADDR: 0x00000008 Len: 1: 0x80000000 WR@ADDR: 0x0000000c Len: 1: 0x80000000 WR@ADDR: 0x00000010 Len: 1: 0x80000000 WR@ADDR: 0x00000014 Len: 1: 0x80000000 WR@ADDR: 0x00000018 Len: 1: 0x80000000 WR@ADDR: 0x00000340 Len: 1: 0x01C00000 WR@ADDR: 0x00000348 Len: 1: 0x00000006 WR@ADDR: 0x00000338 Len: 1: 0x00000001 WR@ADDR: 0x0000033c Len: 1: 0x00C00000 WR@ADDR: 0x00000308 Len: 1: 0x01C00018 WR@ADDR: 0x0000030c Len: 1: 0x00000070 WR@ADDR: 0x00000224 Len: 1: 0x00000000 WR@ADDR: 0x81c00000 Len: 118: 0x00000001 WR@ADDR: 0x00000228 Len: 1: 0x00000000 WR@ADDR: 0x00000228 Len: 1: 0x00000001 WR@ADDR: 0x00000228 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0000000B WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x5EECE9BF WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0001000B WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0xA6607FD1 WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0002000B WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0xE69958D6 WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0003000B WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0xCE9B0C98 WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0000000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0xE306BC8E WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0001000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x6D1D3062 WR@ADDR: 
0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0002000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x5E0BE35F WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0003000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x8E5097FB WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0004000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0xE9C840AC WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0005000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x742F745C WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0006000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x725F612A WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000 WR@ADDR: 0x00000140 Len: 1: 0x00000001 WR@ADDR: 0x0000014c Len: 1: 0x0007000A WR@ADDR: 0x00000164 Len: 1: 0x00000000 WR@ADDR: 0x00000168 Len: 1: 0x7014FDA9 WR@ADDR: 0x00000160 Len: 1: 0x00000001 WR@ADDR: 0x00000160 Len: 1: 0x00000000

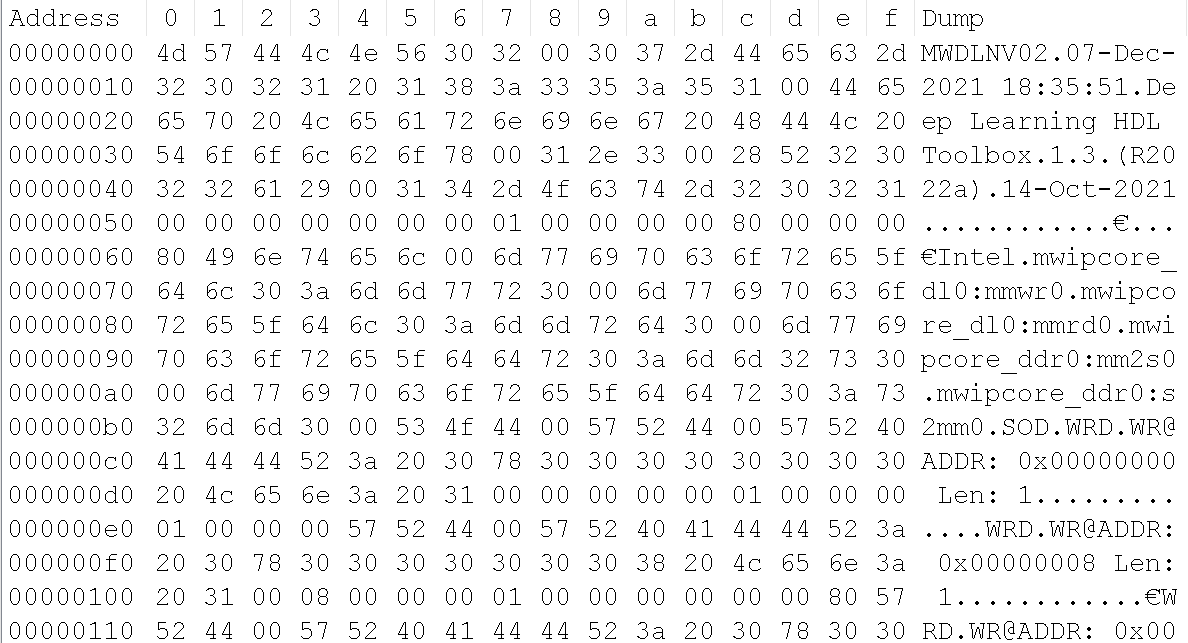

The generated .dln file is a binary file. All the data inside the file is in hex format.

Structure of Generated .dln File

The data inside the binary file consists of strings and uint32 values. All strings are null-terminated. The image above shows a section of the generated .dln file.

The binary file consists of:

A header section, which contains the date and time the file was generated and key information such as the DL IP base address, DL IP address range, DDR base address, and DDR address range.

A start of data (SOD) section, which indicates the start of the instructions to read and write data.

A data section with AXI read and write transactions.

An end of data (EOD) command, which indicates the end of the file.

For more information about the binary file structure, see Initialize Deployed Deep Learning Processor Without Using a MATLAB Connection.
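As a rough illustration of reading a file that mixes null-terminated strings and uint32 values, the sketch below writes a small stand-in file and parses it back. The layout used here (a tag, two words, an end tag) is hypothetical and is not the actual .dln format. For the real field order, refer to the topic mentioned above.

```matlab
% Stand-in file with a HYPOTHETICAL layout (not the real .dln format):
% a null-terminated tag, two uint32 words, and a null-terminated end tag.
fname = [tempname '.bin'];
fid = fopen(fname, 'w');
fwrite(fid, ['SOD' 0], 'uint8');
fwrite(fid, [hex2dec('00000340') hex2dec('01C00000')], 'uint32');
fwrite(fid, ['EOD' 0], 'uint8');
fclose(fid);

% Read the whole file back as a row of bytes and walk through it.
fid = fopen(fname, 'r');
bytes = fread(fid, Inf, 'uint8=>uint8')';
fclose(fid);
delete(fname);

pos = 1;
nul = pos + find(bytes(pos:end) == 0, 1) - 1;   % index of the terminating NULL
startTag = char(bytes(pos:nul-1));
pos = nul + 1;

words = typecast(bytes(pos:pos+7), 'uint32');   % two uint32 values, host byte order
pos = pos + 8;

nul = pos + find(bytes(pos:end) == 0, 1) - 1;
endTag = char(bytes(pos:nul-1));

fprintf('%s  0x%08X  0x%08X  %s\n', startTag, words(1), words(2), endTag);
```

Reading the file into a byte vector first keeps the string and integer handling in one place. A parser for the real file would need the documented field order and byte order.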

Deploy Bitstream and .dln File Using MATLAB Deployment Utility

Set up the Intel® Quartus® tool path before programming the bitstream.

% hdlsetuptoolpath('ToolName', 'Altera Quartus II','ToolPath', 'C:\altera\22.1\quartus\bin64');

hTarget1 = dlhdl.Target('Intel','Interface','JTAG');

hW1 = dlhdl.Workflow('network', snet, 'Bitstream', 'arria10soc_single','Target',hTarget1);

% Program the bitstream at this point. The FPGA must be programmed before any data can be written or read through FPGAIO.

hW1.deploy('ProgramBitstream',true,'ProgramNetwork',false);

### Programming FPGA Bitstream using JTAG...

### Programming the FPGA bitstream has been completed successfully.

Deploy .dln file using MATLAB deployment utility:

Use the MATLAB deployment utility script to extract the instructions from the binary file (the generated .dln file) and program the FPGA. The deployment utility script:

Reads the header details of the .dln file until detection of the 'SOD' command. Lines 1 to 35 in the MATLABDeploymentUtility.m script file read in the header information. Once SOD is detected, the script begins the actual read and write instructions of the compiled network.

Reads data by extracting the address, length of data to be read, and data to read information from the read packet structure. Use the extracted address, length of data to be read, and data to read as input arguments to the readmemory function.

Writes data by extracting the write data address and data to write information from the write packet structure. Use the extracted write data address and data to write as input arguments to the writememory function.

Detects the end of data (EOD) command and closes the generated file.

MATLABDeploymentUtility('AdderNWdeploymentData.dln');

WR@ADDR: 0x00000000 Len: 1: 0X00000001 WR@ADDR: 0x00000008 Len: 1: 80000000 WR@ADDR: 0x0000000c Len: 1: 80000000 WR@ADDR: 0x00000010 Len: 1: 80000000 WR@ADDR: 0x00000014 Len: 1: 80000000 WR@ADDR: 0x00000018 Len: 1: 80000000 WR@ADDR: 0x00000340 Len: 1: 0X01C00000 WR@ADDR: 0x00000348 Len: 1: 0X00000006 WR@ADDR: 0x00000338 Len: 1: 0X00000001 WR@ADDR: 0x0000033c Len: 1: 0X00C00000 WR@ADDR: 0x00000308 Len: 1: 0X01C00018 WR@ADDR: 0x0000030c Len: 1: 0X00000070 WR@ADDR: 0x00000224 Len: 1: 0X00000000 WR@ADDR: 0x81c00000 Len: 118: 0X00000001 WR@ADDR: 0x00000228 Len: 1: 0X00000000 WR@ADDR: 0x00000228 Len: 1: 0X00000001 WR@ADDR: 0x00000228 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0000000B WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 5EECE9BF WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0001000B WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: A6607FD1 WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0002000B WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: E69958D6 WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0003000B WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: CE9B0C98 WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0000000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: E306BC8E WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0001000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 
0x00000168 Len: 1: 6D1D3062 WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0002000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 5E0BE35F WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0003000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 8E5097FB WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0004000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: E9C840AC WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0005000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 742F745C WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0006000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 725F612A WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000 WR@ADDR: 0x00000140 Len: 1: 0X00000001 WR@ADDR: 0x0000014c Len: 1: 0X0007000A WR@ADDR: 0x00000164 Len: 1: 0X00000000 WR@ADDR: 0x00000168 Len: 1: 7014FDA9 WR@ADDR: 0x00000160 Len: 1: 0X00000001 WR@ADDR: 0x00000160 Len: 1: 0X00000000
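The utility log above prints data words with an uppercase 0X prefix or with no prefix at all, while the log printed by the deploy method uses a lowercase 0x prefix. One way to compare the two logs regardless of formatting is to parse each entry into numeric address, length, and data fields. The sketch below does this for three entries copied from each log. The pattern and the parseLog helper are illustrative assumptions and are not part of MATLABDeploymentUtility.m.

```matlab
% A few entries copied from the deploy log and from the utility log.
deployLog  = ['WR@ADDR: 0x00000000 Len: 1: 0x00000001 ' ...
              'WR@ADDR: 0x00000008 Len: 1: 0x80000000 ' ...
              'WR@ADDR: 0x00000168 Len: 1: 0x5EECE9BF'];
utilityLog = ['WR@ADDR: 0x00000000 Len: 1: 0X00000001 ' ...
              'WR@ADDR: 0x00000008 Len: 1: 80000000 ' ...
              'WR@ADDR: 0x00000168 Len: 1: 5EECE9BF'];

% Capture address, length, and first data word; the data prefix is optional.
pat = 'WR@ADDR:\s*0[xX]([0-9A-Fa-f]+)\s+Len:\s*(\d+):\s*(?:0[xX])?([0-9A-Fa-f]+)';

% Hypothetical helper: one row per entry, columns [address, length, data].
parseLog = @(txt) cell2mat(cellfun( ...
    @(t) [hex2dec(t{1}), str2double(t{2}), hex2dec(t{3})], ...
    regexp(txt, pat, 'tokens'), 'UniformOutput', false)');

A = parseLog(deployLog);
B = parseLog(utilityLog);
disp(isequal(A, B))
```

Applying the same helper to the full text of both logs would show whether the utility replays the same write transactions that the deploy method reported.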

Retrieve Prediction Results

Deploy the generated deep learning processor IP core and network by using the MATLAB deployment utility. Retrieve the prediction results from the deployed deep learning processor and compare them with the prediction results from the Deep Learning Toolbox™.

hW2 = dlhdl.Workflow('network', snet, 'Bitstream', 'arria10soc_single','Target',hTarget1);

[prediction, ~] = hW2.predict(image,'ProgramBitstream',false,'ProgramNetwork',true);

### Compiling network for Deep Learning FPGA prototyping ...

### Targeting FPGA bitstream arria10soc_single.

### The network includes the following layers:

1 'data' Image Input 3×3×4 images (SW Layer)

2 'add' Addition Element-wise addition of 2 inputs (HW Layer)

3 'output' Regression Output mean-squared-error (SW Layer)

### Notice: The layer 'data' with type 'nnet.cnn.layer.ImageInputLayer' is implemented in software.

### Notice: The layer 'output' with type 'nnet.cnn.layer.RegressionOutputLayer' is implemented in software.

### Allocating external memory buffers:

offset_name offset_address allocated_space

_______________________ ______________ ________________

"InputDataOffset" "0x00000000" "4.0 MB"

"OutputResultOffset" "0x00400000" "4.0 MB"

"SchedulerDataOffset" "0x00800000" "0.0 MB"

"SystemBufferOffset" "0x00800000" "20.0 MB"

"InstructionDataOffset" "0x01c00000" "4.0 MB"

"EndOffset" "0x02000000" "Total: 32.0 MB"

### Network compilation complete.

### Finished writing input activations.

### Running single input activation.

Even though 'ProgramNetwork' is set to true in the preceding predict call, the FPGA remains programmed with the network instructions that were deployed through the MATLAB deployment utility. This is because the network checksum is checked when the network is programmed. If the checksum matches the previous checksum, the network is not reprogrammed.

% Get the Deep Learning Toolbox output.

DLToolboxSimulationOutp = snet.predict(image, 'ExecutionEnvironment', 'cpu');

% Verify the Deep Learning Toolbox prediction against the prediction from the deployment done by using the MATLAB deployment utility script.

isequal(DLToolboxSimulationOutp,prediction)

ans = logical

1
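Because the 'data' layer drives both inputs of the 'add' layer in this network, the expected result is the input added to itself. The following host-only sketch, which does not use the toolbox or the FPGA, shows the value that both predictions should reproduce under that reading of the network. The variable expected is illustrative.

```matlab
% Same input shape and value range as in the example.
image = randi(255, [3,3,4]);

% 'data' feeds both 'add/in1' and 'add/in2', so the adder output is image + image.
expected = image + image;

% Every element is an integer no larger than 510, which single precision
% represents exactly, so an exact comparison is reasonable for this data.
disp(isequal(single(expected), 2*single(image)))
```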

See Also

dlhdl.Target | dlhdl.Workflow | compile | deploy | predict | classify