armafevd

Generate or plot ARMA model forecast error variance decomposition (FEVD)

Syntax

Description

The armafevd function returns or plots the forecast error variance decomposition of the variables in a univariate or vector (multivariate) autoregressive moving average (ARMA or VARMA) model specified by arrays of coefficients or lag operator polynomials.

Alternatively, you can return an FEVD from a fully specified (for example, estimated) model object by using a function in this table.

The FEVD provides information about the relative importance of each innovation in affecting the forecast error variance of all variables in the system. In contrast, the impulse response function (IRF) traces the effects of an innovation shock to one variable on the response of all variables in the system. To estimate IRFs of univariate or multivariate ARMA models, see armairf.

armafevd(

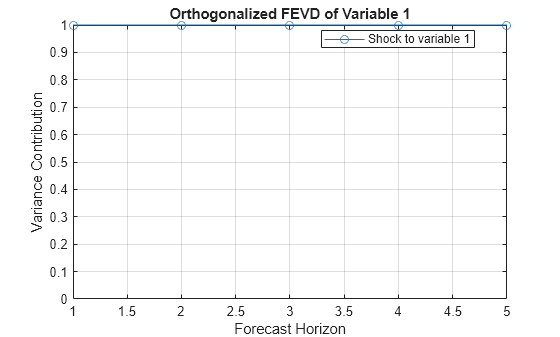

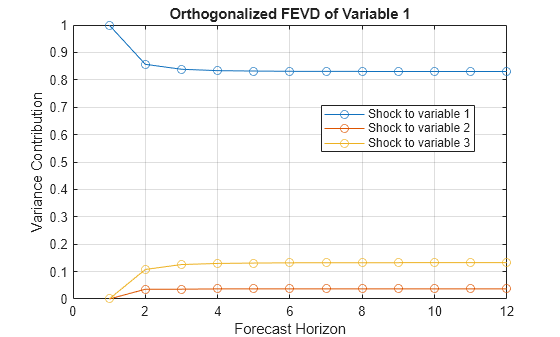

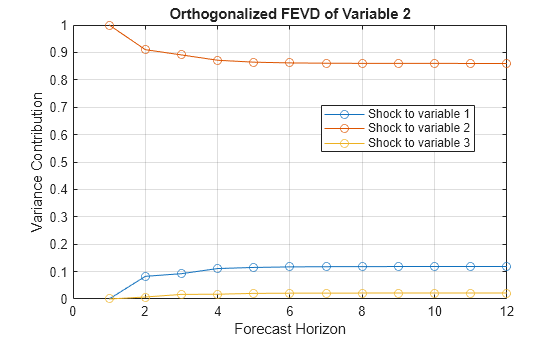

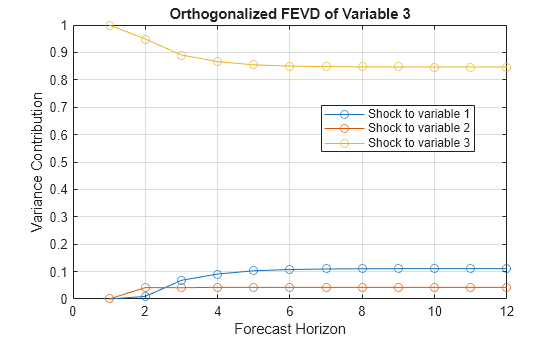

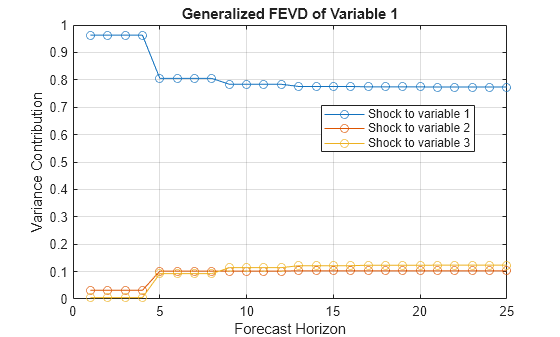

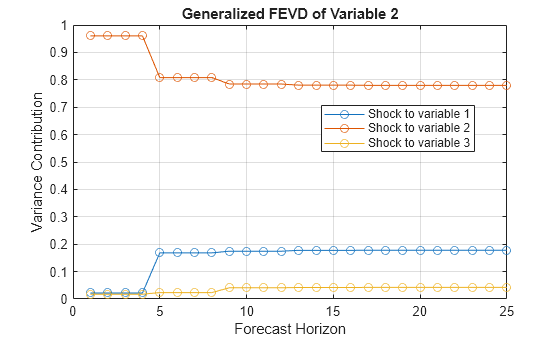

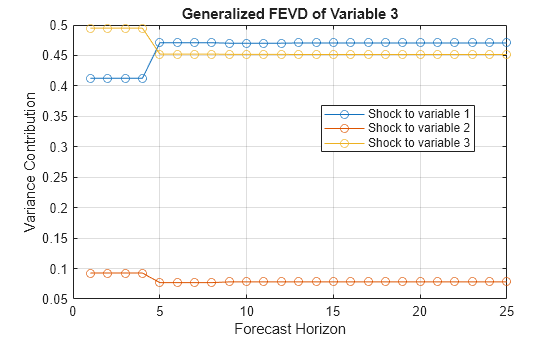

plots, in separate figures, the FEVD of the time series variables that compose an

ARMA(p,q) model, with input autoregressive (AR)

and moving average (MA) coefficients. Each figure corresponds to a variable and contains a

line plot for each time series variable. The line plots are the FEVDs of that variable,

over the forecast horizon, resulting from a one-standard-deviation innovation shock

applied to all variables in the system at time 0.ar0,ma0)

The armafevd function:

Accepts vectors or cell vectors of matrices in difference-equation notation

Accepts LagOp lag operator polynomials corresponding to the AR and MA polynomials in lag operator notation

Accommodates time series models that are univariate or multivariate, stationary or integrated, structural or in reduced form, and invertible or noninvertible

Assumes that the model constant c is 0
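The two input notations listed above can be compared with a minimal sketch. The coefficient matrices Phi1 and Theta1 are hypothetical values chosen only for illustration, and the sketch assumes that the LagOp form of the AR polynomial carries the opposite sign on lagged AR coefficients, that is, (I - Phi1*L)y(t) = (I + Theta1*L)e(t). Because an output argument is requested, no figures are drawn.

```matlab
% Hypothetical 2-D VARMA(1,1) coefficients (illustration only).
Phi1   = [0.5 0.1; 0.2 0.3];   % AR coefficient matrix at lag 1
Theta1 = [0.4 0.0; 0.1 0.2];   % MA coefficient matrix at lag 1

% Difference-equation notation:
%   y(t) = Phi1*y(t-1) + e(t) + Theta1*e(t-1)
ar0 = {Phi1};
ma0 = {Theta1};
Y1 = armafevd(ar0,ma0,NumObs=8);

% Lag operator notation:
%   (I - Phi1*L)*y(t) = (I + Theta1*L)*e(t)
% The lagged AR coefficient enters with the opposite sign.
arLag = LagOp({eye(2), -Phi1});
maLag = LagOp({eye(2),  Theta1});
Y2 = armafevd(arLag,maLag,NumObs=8);

% If both specifications describe the same model, this should be near zero.
maxDiff = max(abs(Y1(:) - Y2(:)));
```

Calling armafevd(ar0,ma0) without an output argument would plot the same decomposition instead of returning it.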

armafevd(ar0,ma0,Name=Value) plots the numVars FEVDs with additional options specified by one or more name-value arguments. For example, NumObs=10,Method="generalized" specifies a 10-period forecast horizon and the estimation of the generalized FEVD.
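As a brief sketch of the name-value syntax, the following call requests a 10-period generalized FEVD. The coefficients and the innovations covariance Sigma are hypothetical, and the sketch assumes the output array is arranged as numObs-by-numVars-by-numVars, with the second dimension indexing the shocked variable.

```matlab
% Hypothetical 2-D VARMA(1,1) model (illustration only).
ar0 = {[0.5 0.1; 0.2 0.3]};
ma0 = {[0.4 0.0; 0.1 0.2]};
Sigma = [1.0 0.3; 0.3 0.5];    % assumed innovations covariance matrix

% Generalized FEVD over a 10-period forecast horizon.
% Requesting an output suppresses the plots.
Y = armafevd(ar0,ma0,NumObs=10,Method="generalized",InnovCov=Sigma);

% Dimensions: periods, shocked variable, response variable (assumed layout).
dims = size(Y);
```

Omitting the output argument (armafevd(ar0,ma0,NumObs=10,Method="generalized",InnovCov=Sigma)) plots the same quantities, one figure per variable.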

armafevd(ax,___) plots to the axes specified in ax instead of the axes in new figures. The option ax can precede any of the input argument combinations in the previous syntaxes.
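A minimal sketch of plotting into existing axes follows. It assumes that ax must contain one Axes object per variable (numVars = 2 here); the model coefficients are hypothetical.

```matlab
% Hypothetical 2-D VARMA(1,1) model (illustration only).
ar0 = {[0.5 0.1; 0.2 0.3]};
ma0 = {[0.4 0.0; 0.1 0.2]};

% Create one axes per variable in a single figure.
% Assumption: ax is a vector of Axes objects of length numVars.
figure
ax(1) = subplot(2,1,1);
ax(2) = subplot(2,1,2);

% Plot the FEVD of each variable into the supplied axes.
armafevd(ax,ar0,ma0,NumObs=10)
```

Stacking the panels in one figure makes it easier to compare how the variance shares evolve across variables over the same horizon.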

Examples

Input Arguments

Name-Value Arguments

Output Arguments

More About

Tips

To accommodate structural ARMA(p,q) models, supply LagOp lag operator polynomials for the input arguments ar0 and ma0. To specify a structural coefficient when you call LagOp, set the corresponding lag to 0 by using the Lags name-value argument.

For orthogonalized multivariate FEVDs, arrange the variables according to Wold causal ordering [3]:

The first variable (corresponding to the first row and column of both ar0 and ma0) is most likely to have an immediate impact (t = 0) on all other variables.

The second variable (corresponding to the second row and column of both ar0 and ma0) is most likely to have an immediate impact on the remaining variables, but not the first variable.

In general, variable j (corresponding to row j and column j of both ar0 and ma0) is the most likely to have an immediate impact on the last numVars – j variables, but not the previous j – 1 variables.
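The structural-model tip can be sketched as follows. The structural matrix Phi0 and the other coefficients are hypothetical, and the sketch assumes the model Phi0*y(t) = Phi1*y(t-1) + e(t) + Theta1*e(t-1), so the AR polynomial in lag operator notation is Phi0 - Phi1*L.

```matlab
% Hypothetical structural 2-D VARMA(1,1) model (illustration only):
%   Phi0*y(t) = Phi1*y(t-1) + e(t) + Theta1*e(t-1)
Phi0   = [1.0 0.0; 0.3 1.0];   % structural (lag 0) coefficient
Phi1   = [0.5 0.1; 0.2 0.3];   % AR coefficient at lag 1
Theta1 = [0.4 0.0; 0.1 0.2];   % MA coefficient at lag 1

% Place the structural coefficient at lag 0 by using the Lags argument.
arLag = LagOp({Phi0, -Phi1},Lags=[0 1]);
maLag = LagOp({eye(2), Theta1},Lags=[0 1]);

% Orthogonalized FEVD (the default method) over 10 periods.
Y = armafevd(arLag,maLag,NumObs=10);
dims = size(Y);
```

In this sketch the first variable is ordered first because Phi0 lets it affect the second variable contemporaneously, which is in the spirit of the Wold causal ordering described above.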

Algorithms

armafevd plots FEVDs only when it returns no output arguments or h.

If Method is "orthogonalized", armafevd orthogonalizes the innovation shocks by applying the Cholesky factorization of the innovations covariance matrix InnovCov. The covariance of the orthogonalized innovation shocks is the identity matrix, and the FEVD of each variable sums to one, that is, the sum along any row of Y is one. Therefore, the orthogonalized FEVD represents the proportion of forecast error variance attributable to various shocks in the system. However, the orthogonalized FEVD generally depends on the order of the variables.

If Method is "generalized":

The resulting FEVD is invariant to the order of the variables.

The resulting FEVD is not based on an orthogonal transformation.

The resulting FEVD of a variable sums to one only when InnovCov is diagonal [4].

Therefore, the generalized FEVD represents the contribution to the forecast error variance of equation-wise shocks to the variables in the system.

If InnovCov is a diagonal matrix, then the resulting generalized and orthogonalized FEVDs are identical. Otherwise, the resulting generalized and orthogonalized FEVDs are identical only when the first variable shocks all variables (in other words, all else being the same, both methods yield the same value of Y(:,1,:)).
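The properties described in this section can be checked numerically with a short sketch. The model coefficients and the non-diagonal covariance Sigma are hypothetical values, and the sketch assumes that the second dimension of Y indexes the shocked variable.

```matlab
% Hypothetical 2-D VARMA(1,1) model with correlated innovations.
ar0 = {[0.5 0.1; 0.2 0.3]};
ma0 = {[0.4 0.0; 0.1 0.2]};
Sigma = [1.0 0.3; 0.3 0.5];    % non-diagonal innovations covariance

Yo = armafevd(ar0,ma0,NumObs=10,InnovCov=Sigma,Method="orthogonalized");
Yg = armafevd(ar0,ma0,NumObs=10,InnovCov=Sigma,Method="generalized");

% Sum over the shocked variables for each period and response variable.
rowSumsO = squeeze(sum(Yo,2));   % orthogonalized: each entry should be one
rowSumsG = squeeze(sum(Yg,2));   % generalized: need not equal one here

% Contributions of a shock to the first variable should agree across methods.
firstShockDiff = max(abs(Yo(:,1,:) - Yg(:,1,:)),[],"all");
```

Replacing Sigma with a diagonal matrix should make Yo and Yg coincide in every element, which is a quick way to confirm the diagonal case stated above.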

References

[1] Hamilton, James D. Time Series Analysis. Princeton, NJ: Princeton University Press, 1994.

[2] Lütkepohl, H. "Asymptotic Distributions of Impulse Response Functions and Forecast Error Variance Decompositions of Vector Autoregressive Models." Review of Economics and Statistics. Vol. 72, 1990, pp. 116–125.

[3] Lütkepohl, Helmut. New Introduction to Multiple Time Series Analysis. New York, NY: Springer-Verlag, 2007.

[4] Pesaran, H. H., and Y. Shin. "Generalized Impulse Response Analysis in Linear Multivariate Models." Economics Letters. Vol. 58, 1998, pp. 17–29.

Version History

Introduced in R2018b