Deep Learning Vehicle Detector from IP Camera Stream on Jetson

This example shows how to develop a CUDA® application from a Simulink® model that performs vehicle detection using a convolutional neural network (CNN). The model takes an IP camera stream as input and detects vehicles in each frame. It uses the pretrained vehicle detection network from the Object Detection Using YOLO v2 Deep Learning example of the Computer Vision Toolbox™. For more information, see Object Detection Using YOLO v2 Deep Learning (Computer Vision Toolbox).

This example illustrates the following concepts:

Model the vehicle detection application in Simulink. The pretrained YOLO v2 detector processes the frames from the IP camera stream. This network detects vehicles in the video and outputs the coordinates of the bounding boxes for these vehicles and their confidence scores.

Configure the model for code generation and deployment for the NVIDIA Jetson TX2 target.

Generate a CUDA executable for the Simulink model on the NVIDIA Jetson TX2 target.

Target Board Requirements

NVIDIA® Jetson® embedded platform.

Ethernet crossover cable to connect the target board and host PC (if you cannot connect the target board to a local network).

An IP camera connected to the same network as the target.

A monitor connected to the display port of the target.

SDL (v1.2) libraries on the target.

GStreamer libraries on the target.

NVIDIA CUDA toolkit and driver.

NVIDIA cuDNN library on the target.

Environment variables on the target for the compilers and libraries. For more information, see Prerequisites for Generating Code for NVIDIA Boards.

Connect to NVIDIA Hardware

The support package uses an SSH connection over TCP/IP to execute commands while building and running the generated CUDA code on the Jetson platforms. Connect the target platform to the same network as the host computer or use an Ethernet crossover cable to connect the board directly to the host computer. For information on how to set up and configure your board, see NVIDIA documentation.

To communicate with the NVIDIA hardware, create a live hardware connection object by using the jetson function. You must know the host name or IP address, user name, and password of the target board to create a live hardware connection object. For example, when connecting to the target board for the first time, create a live object for Jetson hardware by using the command:

hwobj = jetson('jetson-tx2-name','ubuntu','ubuntu');

While creating the live hardware object, the support package performs hardware and software checks, installs the I/O server, and gathers peripheral information on the target. This information is displayed in the Command Window.
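After a connection has succeeded once, later sessions usually do not need the credentials again. The following is a minimal sketch, assuming that calling jetson without arguments reuses the settings of the most recent successful connection and that the hardware object's system method runs a shell command on the board. The command 'uname -a' is only an illustrative choice and is not part of the original example.

```matlab
% Reuse the settings from the most recent successful connection
% (assumes an earlier jetson(...) call with credentials has succeeded).
hwobj = jetson;

% Run a shell command on the target and capture its text output.
% 'uname -a' is an illustrative command; any command on the board can be used.
kernelInfo = system(hwobj,'uname -a');
disp(kernelInfo);
```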

Verify GPU Environment on Target Board

To verify that the compilers and libraries necessary for running this example are set up correctly, use the coder.checkGpuInstall function.

envCfg = coder.gpuEnvConfig('jetson');
envCfg.DeepLibTarget = 'cudnn';
envCfg.DeepCodegen = 1;
envCfg.Quiet = 1;
envCfg.HardwareObject = hwobj;
coder.checkGpuInstall(envCfg);
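Because Quiet is set to 1, the check prints nothing to the Command Window. A minimal sketch for inspecting the outcome, assuming that coder.checkGpuInstall returns a structure of pass/fail flags when called with an output argument. The loop prints whatever scalar flags are present rather than relying on specific field names.

```matlab
% Capture the results of the environment check instead of discarding them.
results = coder.checkGpuInstall(envCfg);

% Print every scalar logical or numeric flag in the results structure.
names = fieldnames(results);
for k = 1:numel(names)
    value = results.(names{k});
    if isscalar(value) && (islogical(value) || isnumeric(value))
        fprintf('%-20s %d\n', names{k}, value);
    end
end
```

A value of 0 for any flag suggests that the corresponding compiler or library is not set up correctly on the target.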

Get Pretrained Vehicle Detection Network

This example uses the yolov2ResNet50VehicleExample MAT-file, which contains the pretrained network. The file is approximately 98 MB in size. Download the file from the MathWorks website.

vehiclenetFile = matlab.internal.examples.downloadSupportFile('vision/data','yolov2ResNet50VehicleExample.mat');
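To confirm that the download succeeded before wiring the file into the model, you can list the contents of the MAT-file without loading it. This is a small sketch that uses only the vehiclenetFile variable from the command above.

```matlab
% Confirm that the downloaded file exists on the host.
assert(isfile(vehiclenetFile), 'Pretrained network file was not downloaded.');

% List the variables stored in the MAT-file without loading them into memory.
info = whos('-file', vehiclenetFile);
for k = 1:numel(info)
    fprintf('%s (%s, %.1f MB)\n', info(k).name, info(k).class, info(k).bytes/1e6);
end
```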

Vehicle Detection Simulink Model

The Simulink model for performing vehicle detection on the IP camera stream is shown. When the model is deployed and run on the target, the SDL Video Display block displays the IP camera stream with vehicle annotations.

open_system('VehicleDetectionOnJetson');

Set the file path of the downloaded network model in the Vehicle Detector block of the Simulink model.

set_param('VehicleDetectionOnJetson/Vehicle Detector','DetectorFilePath',vehiclenetFile)
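A quick way to check that the block picked up the path is to read the parameter back. This sketch assumes the model is open and reuses the block path and parameter name from the command above.

```matlab
% Read the detector file path back from the block and compare it
% with the path of the downloaded MAT-file.
blockPath = 'VehicleDetectionOnJetson/Vehicle Detector';
configuredFile = get_param(blockPath,'DetectorFilePath');
assert(strcmp(configuredFile, vehiclenetFile), ...
    'The Vehicle Detector block does not point to the downloaded network.');
```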

IP Camera

To configure the Simulink model to use the stream from the IP camera, double-click the Network Video Receive block. Select Network device from the Video source list. After you select Network device, set the URL parameter to the IP camera stream's URL.

The URL depends on your network setup. This example uses a CP PLUS IP camera. An example URL for the camera is rtsp://username:password@192.168.0.110:554/Streaming/Channels/1.
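The example URL follows the pattern rtsp://user:password@host:port/path. The sketch below assembles such a URL from its parts so that it can be pasted into the URL parameter. The credentials, address, port, and stream path are the placeholder values from the example URL above and must be replaced with the values for your camera.

```matlab
% Placeholder values taken from the example URL; replace with your own.
userName   = 'username';
password   = 'password';
cameraHost = '192.168.0.110';
rtspPort   = 554;
streamPath = 'Streaming/Channels/1';   % camera-specific path

% Assemble the RTSP URL for the Network Video Receive block.
cameraUrl = sprintf('rtsp://%s:%s@%s:%d/%s', ...
    userName, password, cameraHost, rtspPort, streamPath);
disp(cameraUrl);
```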

Vehicle Detection

A YOLO v2 object detection network is composed of two subnetworks: a feature extraction network followed by a detection network. This pretrained network uses ResNet-50 for feature extraction. The detection subnetwork is a small CNN compared to the feature extraction network and is composed of a few convolutional layers and layers specific to YOLO v2. The Simulink model performs vehicle detection using the Object Detector block from the Computer Vision Toolbox. This block takes an image as input and outputs the bounding box coordinates along with the confidence scores for vehicles in the image.
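Before deploying, it can help to run the same detector on the host to see the kind of output the Object Detector block produces. This is a minimal sketch under two assumptions that are not stated in this example: the MAT-file stores a yolov2ObjectDetector object (the code searches for one rather than assuming a variable name), and the sample image highway.png that ships with Computer Vision Toolbox is available on the path.

```matlab
% Load the MAT-file and pick the first variable that is a YOLO v2 detector.
data = load(vehiclenetFile);
names = fieldnames(data);
isDetector = cellfun(@(n) isa(data.(n),'yolov2ObjectDetector'), names);
assert(any(isDetector), 'No yolov2ObjectDetector found in the MAT-file.');
detector = data.(names{find(isDetector,1)});

% Run detection on a sample image (assumed to be available on the path).
I = imread('highway.png');
[bboxes,scores] = detect(detector,I);

% Each row of bboxes is [x y width height]; scores holds one confidence per row.
if ~isempty(bboxes)
    I = insertObjectAnnotation(I,'rectangle',bboxes,scores);
end
imshow(I)
```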

Annotation of Vehicle Bounding Boxes in IP Camera Stream

The VehicleAnnotation MATLAB function block defines a function, vehicle_annotation, that draws the vehicle bounding boxes on the frame and labels each box with its confidence score.

type vehicle_annotation

function In = vehicle_annotation(bboxes,scores,In)
% Copyright 2022 The MathWorks, Inc.
if ~isempty(bboxes)
    In = insertObjectAnnotation(In, 'rectangle', bboxes, scores, 'color',[255 255 0]);
end
end
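The function can be exercised on the host with synthetic inputs to see how it behaves with and without detections. This sketch assumes vehicle_annotation is on the MATLAB path. The frame size, bounding box, and score are made-up values for illustration only.

```matlab
% Synthetic black frame and one made-up detection: [x y width height].
frame  = zeros(240,320,3,'uint8');
bboxes = [50 60 100 80];
scores = 0.92;

% With a detection, the function draws a yellow labeled rectangle.
annotated = vehicle_annotation(bboxes,scores,frame);

% With no detections, the frame is returned unchanged.
untouched = vehicle_annotation(zeros(0,4),zeros(0,1),frame);

fprintf('Frame modified with detection: %d\n', ~isequal(annotated,frame));
fprintf('Frame modified without detection: %d\n', ~isequal(untouched,frame));
```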

Configure the Model for Deployment on Jetson

To generate CUDA code, set the target language to C++ and enable GPU code generation for the model:

set_param('VehicleDetectionOnJetson','TargetLang','C++');
set_param('VehicleDetectionOnJetson','GenerateGPUCode','CUDA');

The model in this example is preconfigured to generate CUDA code.

For more information on generating GPU Code in Simulink, see Code Generation from Simulink Models with GPU Coder.

Set the deep learning target library to cuDNN for code generation:

set_param('VehicleDetectionOnJetson', 'DLTargetLibrary','cudnn');

For more information, see GPU Code Generation for Deep Learning Networks Using MATLAB Function Block and GPU Code Generation for Blocks from the Deep Neural Networks Library.
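Since the model is preconfigured, reading the settings back is a simple way to confirm them before building. This sketch uses only the parameter names that appear in the set_param commands above and assumes the model is open.

```matlab
% Read back the code generation settings used by this example.
model = 'VehicleDetectionOnJetson';
params = {'TargetLang','GenerateGPUCode','DLTargetLibrary'};
for k = 1:numel(params)
    fprintf('%-16s %s\n', params{k}, get_param(model, params{k}));
end
```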

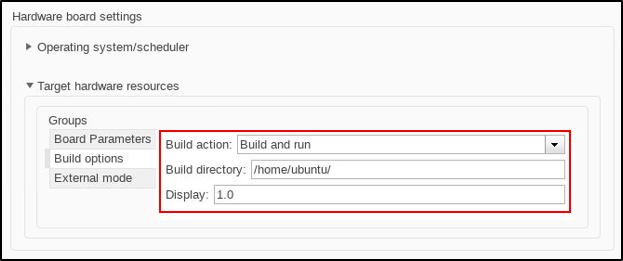

The model configuration parameters provide options for build process and deployment.

1. Open the Configuration Parameters dialog box and go to the Hardware Implementation pane. Set Hardware board to NVIDIA Jetson (or NVIDIA Drive).

2. In the Target hardware resources section, enter the Device Address, Username, and Password of your NVIDIA Jetson target board.

3. Set the Build action, Build directory, and Display as shown. The Display parameter specifies the display environment on the target, which determines the device on which the output is displayed.

4. Click Apply, and then click OK.
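The board selection from step 1 can also be checked from the command line. This is a sketch that assumes the HardwareBoard configuration parameter reflects the choice made in the Hardware Implementation pane. The other fields in the Target hardware resources section are not covered here.

```matlab
% Display the hardware board currently selected for the model.
board = get_param('VehicleDetectionOnJetson','HardwareBoard');
fprintf('Hardware board: %s\n', board);
```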

Generate and Deploy Model on Target Board

1. To generate CUDA code for the model, deploy the code to the target, and run the executable, open the Hardware tab on the Simulink Editor.

2. Select Build, Deploy & Start to generate and deploy the code on the hardware.

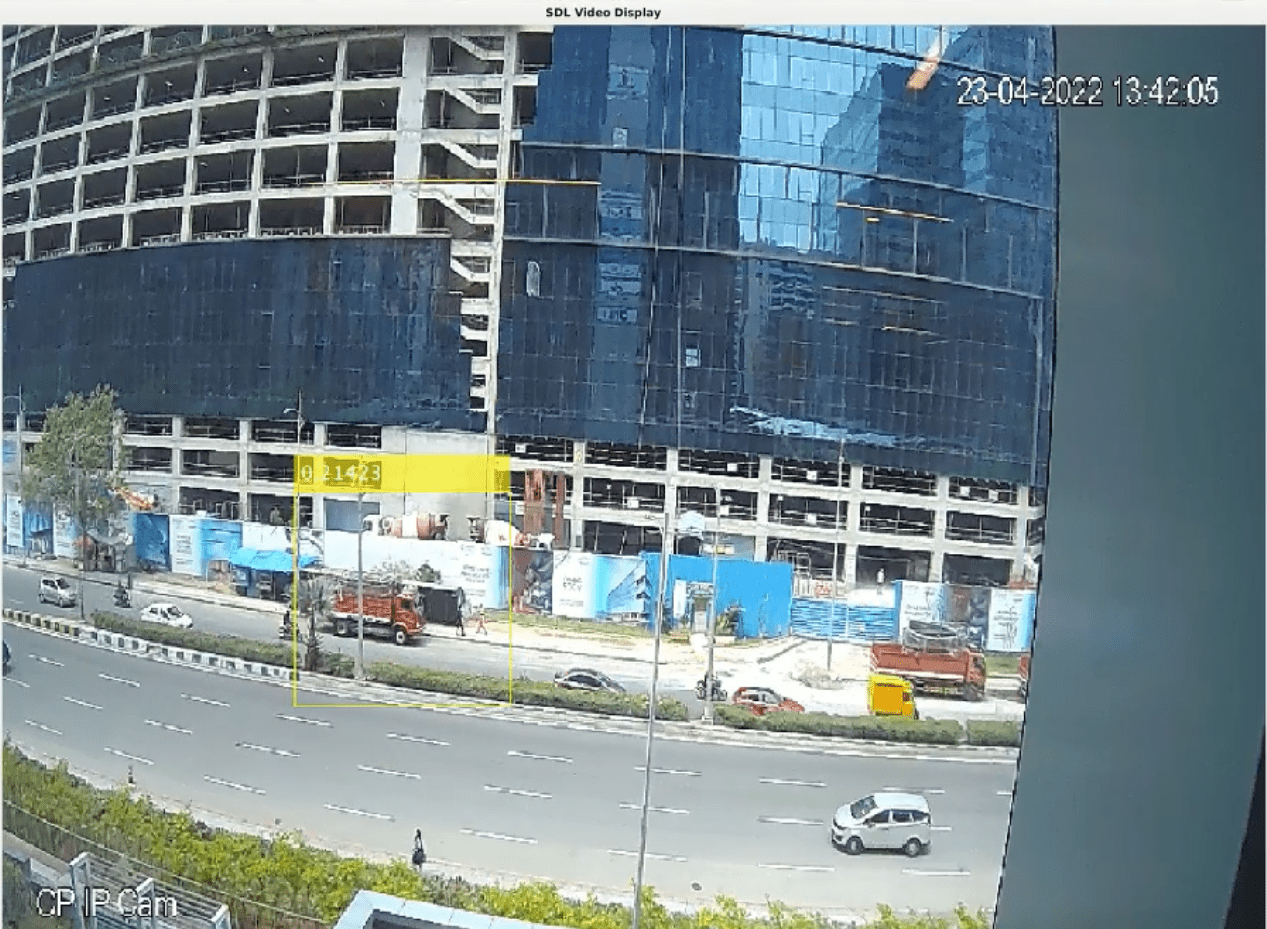

Vehicle Detection on Jetson TX2

When the application starts on the target, an SDL Video window opens showing the vehicle detection results for each frame of the video stream captured by the IP camera.
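For scripted workflows, the build can also be started from MATLAB instead of the Hardware tab. This is a hedged sketch: it assumes that slbuild honors the Build action selected in the configuration parameters, so that a build-and-run setting also deploys and starts the executable on the board. Verify this behavior for your release before relying on it.

```matlab
% Load the model without opening the editor, then start the build.
% Whether the code is also deployed and run depends on the Build action
% configured for the model (assumption, see the note above).
model = 'VehicleDetectionOnJetson';
load_system(model);
slbuild(model);
```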

Stop Application

To stop the application on the target, use the stopModel method of the hardware object.

stopModel(hwobj,'VehicleDetectionOnJetson');