Lidar 3-D Object Detection Using PointPillars Deep Learning

This example shows how to detect objects in lidar point clouds using a PointPillars deep learning network. In this example, you:

Configure a data set for training and testing a PointPillars object detection network. You also perform data augmentation on the training data set to improve the network accuracy.

Compute anchor boxes from the training data to train the PointPillars object detection network.

Create a PointPillars object detector using the pointPillarsObjectDetector function and train the detector using the trainPointPillarsObjectDetector function.

This example also provides a pretrained PointPillars object detector to use for detecting objects in a point cloud. The pretrained model is trained on the PandaSet data set. For information on the PointPillars object detection network, see Get Started with PointPillars.

Download Lidar Data Set

Download a compressed archive (a TAR.GZ file, approximately 5.2 GB in size) that contains a subset of sensor data from the PandaSet data set, and then extract it. The archive includes two main folders, Lidar and Cuboids, which contain the following data:

The Lidar folder contains 2560 preprocessed organized point clouds in PCD format, arranged so that the ego vehicle moves along the positive y-axis. If your point cloud deviates from this orientation, you can use the pctransform function to apply the necessary transformation.

The Cuboids folder contains the corresponding ground truth data in table format, stored in the PandaSetLidarGroundTruth.mat file. This file provides 3-D bounding box information for three categories: car, truck, and pedestrian. Apply any transformation that you apply to the point cloud to the bounding boxes as well by using the bboxwarp function.

Download the PandaSet dataset from the given URL using the helperDownloadPandasetData helper function, defined at the end of this example.

outputFolder = fullfile(tempdir,'Pandaset');
lidarURL = ['https://ssd.mathworks.com/supportfiles/lidar/data/' ...
    'Pandaset_LidarData.tar.gz'];
helperDownloadPandasetData(outputFolder,lidarURL);

Depending on your Internet connection, the download process can take some time. The code suspends MATLAB® execution until the download process is complete. Alternatively, you can download the data set to your local disk using your web browser and extract the file. If you do so, change the outputFolder variable in the code to the location of the downloaded file.

Load Data Set

Create a file datastore to load the PCD files from the specified path using the pcread function.

path = fullfile(outputFolder,'Lidar');
lidarData = fileDatastore(path,'ReadFcn',@(x) pcread(x));

Load the 3-D bounding box labels of the car and truck objects.

gtPath = fullfile(outputFolder,'Cuboids','PandaSetLidarGroundTruth.mat');
data = load(gtPath,'lidarGtLabels');
Labels = timetable2table(data.lidarGtLabels);
boxLabels = Labels(:,2:3);

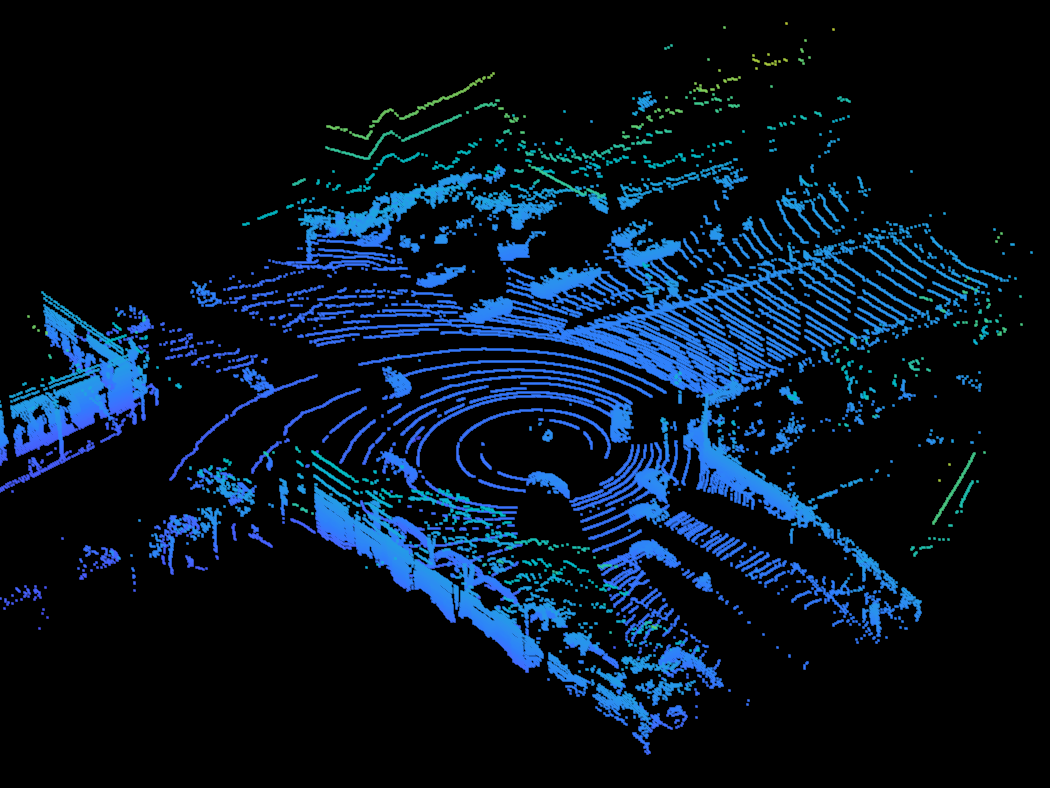

Display the full-view point cloud.

figure
ptCld = preview(lidarData);
ax = pcshow(ptCld.Location);
set(ax,'XLim',[-50 50],'YLim',[-40 40]);
zoom(ax,2.5);
axis off;

Preprocess Data

The PandaSet data consists of full-view point clouds. For this example, crop the full-view point clouds to front-view point clouds using the standard parameters [1]. These parameters determine the size of the input passed to the network. Select a smaller point cloud range along the x-, y-, and z-axes to detect objects closer to the origin. A smaller range also decreases the overall training time of the network.

xMin = 0.0;     % Minimum value along X-axis.
yMin = -39.68;  % Minimum value along Y-axis.
zMin = -5.0;    % Minimum value along Z-axis.
xMax = 69.12;   % Maximum value along X-axis.
yMax = 39.68;   % Maximum value along Y-axis.
zMax = 5.0;     % Maximum value along Z-axis.
xStep = 0.16;   % Resolution along X-axis.
yStep = 0.16;   % Resolution along Y-axis.

% Define point cloud parameters.
pointCloudRange = [xMin xMax yMin yMax zMin zMax];
voxelSize = [xStep yStep];
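The range and step values above fix the size of the pillar grid that the network sees. As a rough worked example (a sketch using only base MATLAB, and assuming that each pillar is a cell of size xStep-by-yStep in the x-y plane, as in [1]), the following code computes the number of pillars along each axis and the pillar that a sample point falls into. The samplePoint value and the gridSize and pillarIndex variables are illustrative and are not part of the example workflow.

```matlab
% Range and resolution values copied from the example.
xMin = 0.0;    xMax = 69.12;
yMin = -39.68; yMax = 39.68;
xStep = 0.16;  yStep = 0.16;

% Number of pillars along x and y. Use round to absorb floating-point error.
gridSize = round([(xMax - xMin)/xStep, (yMax - yMin)/yStep]);

% Hypothetical point (x,y) in meters, used only for illustration.
samplePoint = [10.0 -5.0];

% One-based index of the pillar that contains the sample point.
pillarIndex = floor((samplePoint - [xMin yMin]) ./ [xStep yStep]) + 1;

fprintf('Pillar grid: %d-by-%d\n',gridSize(1),gridSize(2));
fprintf('Sample point falls in pillar (%d,%d)\n',pillarIndex(1),pillarIndex(2));
```

With these settings the grid has 432-by-496 pillars, so halving the range along an axis halves the number of pillars along that axis.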

Use the cropFrontViewFromLidarData helper function, attached to this example as a supporting file, to:

Crop the front view from the input full-view point cloud.

Select the box labels that are inside the ROI specified by pointCloudRange.

[croppedPointCloudObj,processedLabels] = cropFrontViewFromLidarData(...
    lidarData,boxLabels,pointCloudRange);

Processing data 100% complete
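The cropFrontViewFromLidarData helper function is a supporting file, so its implementation is not shown here. As a minimal sketch of the idea only, and not the helper's actual code, the following base MATLAB snippet keeps the points of an N-by-3 array that lie inside the range and keeps the boxes whose centers lie inside the x-y limits. The points and boxes values are made up for illustration, and the center-based box test is an assumption.

```matlab
% Range in the form [xMin xMax yMin yMax zMin zMax], as in the example.
pointCloudRange = [0.0 69.12 -39.68 39.68 -5.0 5.0];

% Hypothetical xyz points in meters. Rows 1 and 4 are inside the range,
% row 2 is behind the sensor, and row 3 is too far to the side.
points = [10   0  0.5;
          -5   2  0.0;
          20  50  1.0;
          30 -10 -1.0];

% Logical mask of the points inside the range on all three axes.
inRange = points(:,1) >= pointCloudRange(1) & points(:,1) <= pointCloudRange(2) & ...
          points(:,2) >= pointCloudRange(3) & points(:,2) <= pointCloudRange(4) & ...
          points(:,3) >= pointCloudRange(5) & points(:,3) <= pointCloudRange(6);
croppedPoints = points(inRange,:);

% Hypothetical cuboids: [xctr yctr zctr xlen ylen zlen xrot yrot zrot].
boxes = [12 1 0 4.5 1.8 1.6 0 0 10;
         -8 3 0 4.5 1.8 1.6 0 0  0];

% Keep the boxes whose centers are inside the x-y limits (an assumption).
boxInRange = boxes(:,1) >= pointCloudRange(1) & boxes(:,1) <= pointCloudRange(2) & ...
             boxes(:,2) >= pointCloudRange(3) & boxes(:,2) <= pointCloudRange(4);
croppedBoxes = boxes(boxInRange,:);

fprintf('Kept %d of %d points and %d of %d boxes\n', ...
    size(croppedPoints,1),size(points,1),size(croppedBoxes,1),size(boxes,1));
```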

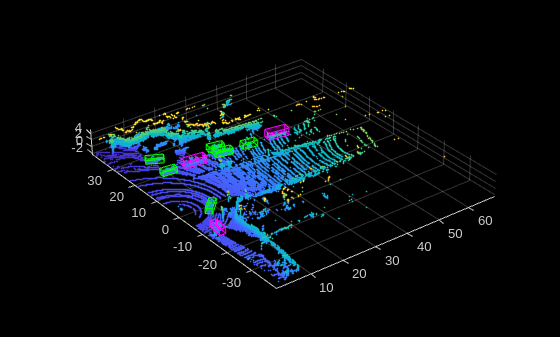

Display the cropped point cloud and the ground truth box labels.

pc = croppedPointCloudObj{1,1};
bboxes = [processedLabels.Car{1};processedLabels.Truck{1}];
ax = pcshow(pc);
showShape('cuboid',bboxes,'Parent',ax,'Opacity',0.1,...
    'Color','green','LineWidth',0.5);
reset(lidarData);

Create Datastore Objects

Split the data set into training and test sets. Select 70% of the data for training the network and the rest for evaluation.

rng(1);
shuffledIndices = randperm(size(processedLabels,1));
idx = floor(0.7 * length(shuffledIndices));

trainData = croppedPointCloudObj(shuffledIndices(1:idx),:);
testData = croppedPointCloudObj(shuffledIndices(idx+1:end),:);

trainLabels = processedLabels(shuffledIndices(1:idx),:);
testLabels = processedLabels(shuffledIndices(idx+1:end),:);
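To see how the shuffled indices partition the data, the following sketch repeats the same indexing pattern on a hypothetical data set of 10 samples and checks that the two sets do not overlap. The numSamples value and the trainIdx and testIdx variables are illustrative only.

```matlab
rng(1);                          % Fix the seed so the split is repeatable.
numSamples = 10;                 % Hypothetical data set size.
shuffledIndices = randperm(numSamples);
idx = floor(0.7 * numSamples);   % Number of training samples.

trainIdx = shuffledIndices(1:idx);
testIdx  = shuffledIndices(idx+1:end);

% Every sample lands in exactly one of the two sets.
assert(isempty(intersect(trainIdx,testIdx)));
assert(isequal(sort([trainIdx testIdx]),1:numSamples));

fprintf('%d training samples, %d test samples\n',numel(trainIdx),numel(testIdx));
```

Because the same index vector selects both the point clouds and the labels, each point cloud stays paired with its own labels after the split.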

So that you can easily access the datastores, save the training data as PCD files by using the saveptCldToPCD helper function, attached to this example as a supporting file. You can set writeFiles to false if your training data is already saved in a folder and is in a format that the pcread function supports.

writeFiles = true;
dataLocation = fullfile(outputFolder,'InputData');
[trainData,trainLabels] = saveptCldToPCD(trainData,trainLabels,...
    dataLocation,writeFiles);

Processing data 100% complete

Create a file datastore using fileDatastore to load PCD files using the pcread function.

lds = fileDatastore(dataLocation,'ReadFcn',@(x) pcread(x));

Create a box label datastore using boxLabelDatastore for loading the 3-D bounding box labels.

bds = boxLabelDatastore(trainLabels);

Use the combine function to combine the point clouds and 3-D bounding box labels into a single datastore for training.

cds = combine(lds,bds);

Perform Data Augmentation

This example uses ground truth data augmentation and several other global data augmentation techniques to add more variety to the training data and corresponding boxes. For more information on typical data augmentation techniques used in 3-D object detection workflows with lidar data, see Data Augmentations for Lidar Object Detection Using Deep Learning.

Read and display a point cloud before augmentation using the helperShowPointCloudWith3DBoxes helper function, defined at the end of the example.

augData = preview(cds);

[ptCld,bboxes,labels] = deal(augData{1},augData{2},augData{3});

% Define the classes for object detection.

classNames = {'Car','Truck'};

% Define colors for each class to plot bounding boxes.

colors = {'green','magenta'};

helperShowPointCloudWith3DBoxes(ptCld,bboxes,labels,classNames,colors)

Use the sampleLidarData function to sample 3-D bounding boxes and their corresponding points from the training data.

sampleLocation = fullfile(outputFolder,'GTsamples');
[ldsSampled,bdsSampled] = sampleLidarData(cds,classNames,'MinPoints',20,...
    'Verbose',false,'WriteLocation',sampleLocation);
cdsSampled = combine(ldsSampled,bdsSampled);

Use the pcBboxOversample function to randomly add a fixed number of car and truck class objects to every point cloud. Use the transform function to apply the ground truth and custom data augmentations to the training data.

numObjects = [10 10];
cdsAugmented = transform(cds,@(x)pcBboxOversample(x,cdsSampled,classNames,numObjects));

Apply the following additional data augmentation techniques to every point cloud using the helperAugmentData helper function, defined at the end of the example:

Random scaling by 5 percent

Random rotation about the z-axis by an angle in the range [-pi/4, pi/4]

Random translation of up to [0.2, 0.2, 0.1] meters along the x-, y-, and z-axes, respectively

cdsAugmented = transform(cdsAugmented,@(x)helperAugmentData(x));
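To make the three augmentations concrete, the following sketch applies one fixed scale, rotation, and translation to a small set of xyz points using only base MATLAB. It is an illustration under stated assumptions, not the code path of the helper function: the parameter values are fixed rather than random so that the result can be checked, the points are made up, and the order in which the operations are composed (scale and rotate, then translate) is an assumption.

```matlab
thetaDeg = 30;                 % Rotation about the z-axis, within [-45, 45] degrees.
scale = 1.05;                  % Scale factor, within [0.95, 1.05].
translation = [0.2 0.2 0.1];   % Translation in meters along x, y, and z.

% Rotation matrix about the z-axis (counterclockwise when viewed from +z).
R = [cosd(thetaDeg) -sind(thetaDeg) 0;
     sind(thetaDeg)  cosd(thetaDeg) 0;
     0               0              1];

% Hypothetical xyz points, one per row.
points = [10 0 0;
           0 5 1];

% Scale and rotate each point, and then translate it.
augmentedPoints = scale * (R * points.').' + translation;
disp(augmentedPoints)
```

The bounding boxes must follow the same transformation as the points, which is why the helper function passes the same transformation object to both pctransform and bboxwarp.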

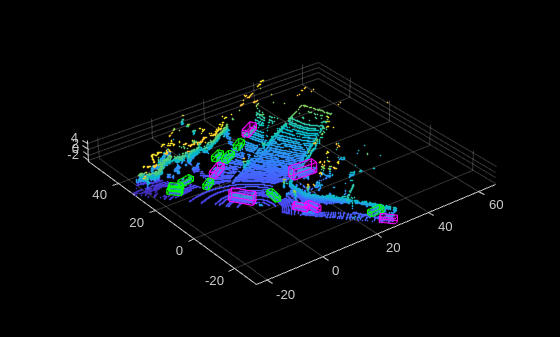

Display an augmented point cloud along with the ground truth augmented boxes using the helperShowPointCloudWith3DBoxes helper function, defined at the end of the example.

augData = preview(cdsAugmented);

[ptCld,bboxes,labels] = deal(augData{1},augData{2},augData{3});

helperShowPointCloudWith3DBoxes(ptCld,bboxes,labels,classNames,colors)

Create PointPillars Object Detector

Use the pointPillarsObjectDetector function to create a PointPillars object detection network. For more information on PointPillars network, see Get Started with PointPillars.

The diagram shows the network architecture of a PointPillars object detector. You can use the Deep Network Designer (Deep Learning Toolbox) app to create a PointPillars network.

Estimate the anchor boxes from the training data using the calculateAnchorsPointPillars helper function, attached to this example as a supporting file.

anchorBoxes = calculateAnchorsPointPillars(trainLabels);

Define the PointPillars detector.

detector = pointPillarsObjectDetector(pointCloudRange,classNames,anchorBoxes,...
    'VoxelSize',voxelSize);

Specify Training Options

Specify the network training parameters using the trainingOptions (Deep Learning Toolbox) function. If training is interrupted, you can resume training from the saved checkpoint.

Train the detector using a CPU or GPU. Using a GPU requires Parallel Computing Toolbox™ and a CUDA® enabled NVIDIA® GPU. For more information, see GPU Computing Requirements (Parallel Computing Toolbox). To automatically detect if you have a GPU available, set executionEnvironment to "auto". If you do not have a GPU, or do not want to use one for training, set executionEnvironment to "cpu". To ensure the use of a GPU for training, set executionEnvironment to "gpu".

executionEnvironment = "auto";
options = trainingOptions('adam',...
    Plots = "training-progress",...
    MaxEpochs = 60,...
    MiniBatchSize = 3,...
    GradientDecayFactor = 0.9,...
    SquaredGradientDecayFactor = 0.999,...
    LearnRateSchedule = "piecewise",...
    InitialLearnRate = 0.0002,...
    LearnRateDropPeriod = 15,...
    LearnRateDropFactor = 0.8,...
    ExecutionEnvironment= executionEnvironment, ...
    PreprocessingEnvironment = 'parallel',...
    BatchNormalizationStatistics = 'moving',...
    ResetInputNormalization = false,...
    CheckpointFrequency = 10, ...
    CheckpointFrequencyUnit = 'epoch', ...
    CheckpointPath = userpath);
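As a worked example of the piecewise schedule specified above, the following base MATLAB sketch computes the learning rate for each of the 60 epochs. It assumes that the rate is multiplied by LearnRateDropFactor once after every LearnRateDropPeriod epochs. The variable names are illustrative and are not read from the options object.

```matlab
initialLearnRate = 0.0002;
learnRateDropFactor = 0.8;
learnRateDropPeriod = 15;
maxEpochs = 60;

epochs = 1:maxEpochs;

% Number of drops that have been applied by the start of each epoch.
numDrops = floor((epochs - 1) / learnRateDropPeriod);
learnRate = initialLearnRate * learnRateDropFactor .^ numDrops;

% Print the learning rate for each period of constant rate.
for e = 1:learnRateDropPeriod:maxEpochs
    fprintf('Epochs %2d-%2d: %.4g\n',e,e + learnRateDropPeriod - 1,learnRate(e));
end
```

Under this assumption the rate falls from 2e-4 in the first 15 epochs to 1.024e-4 in the last 15, which is roughly half of the initial value.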

Train PointPillars Object Detector

Use the trainPointPillarsObjectDetector function to train the PointPillars object detector if doTraining is true. Otherwise, load a pretrained detector.

doTraining = false;
if doTraining
    [detector,info] = trainPointPillarsObjectDetector(cdsAugmented,detector,options);
else
    pretrainedDetector = load('pretrainedPointPillarsDetector.mat','detector');
    detector = pretrainedDetector.detector;
end

Note: The pretrained network pretrainedPointPillarsDetector.mat is trained on point cloud data captured by a Pandar 64 sensor, where the ego vehicle direction is along the positive y-axis.

To generate accurate detections using this pretrained network on a custom data set:

Transform your point cloud data such that the ego vehicle moves along the positive y-axis.

Restructure your data with Pandar 64 parameters by using the pcorganize function.
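As a sketch of the first step, assume a custom sensor whose ego vehicle moves along the positive x-axis (this starting orientation is an assumption for illustration). Rotating the data by 90 degrees counterclockwise about the z-axis then aligns the direction of travel with the positive y-axis. The snippet uses only base MATLAB on a made-up point and cuboid to show the effect on the coordinates. In the actual workflow, you would apply an equivalent transformation with the pctransform and bboxwarp functions, as described earlier in this example.

```matlab
% Rotation of 90 degrees about the z-axis.
thetaDeg = 90;
R = [cosd(thetaDeg) -sind(thetaDeg) 0;
     sind(thetaDeg)  cosd(thetaDeg) 0;
     0               0              1];

% Hypothetical point 20 m ahead of a vehicle that drives along +x.
pointIn = [20 0 0];
pointOut = (R * pointIn.').';

% Hypothetical cuboid: [xctr yctr zctr xlen ylen zlen xrot yrot zrot],
% with rotation angles in degrees.
cuboidIn = [20 0 0 4.5 1.8 1.6 0 0 0];
cuboidOut = cuboidIn;
cuboidOut(1:3) = (R * cuboidIn(1:3).').';   % Rotate the box center.
cuboidOut(9) = cuboidIn(9) + thetaDeg;      % Add the same yaw to the box.

disp(pointOut)    % The point is now 20 m along +y.
disp(cuboidOut)
```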

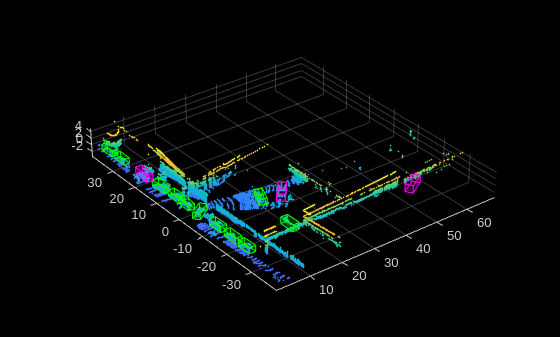

Generate Detections

Use the trained network to detect objects in the test data:

Read the point cloud from the test data.

Run the detector on the test point cloud to get the predicted bounding boxes and confidence scores.

Display the point cloud with bounding boxes using the helperShowPointCloudWith3DBoxes helper function, defined at the end of the example.

ptCloud = testData{1,1};

% Run the detector on the test point cloud.

[bboxes,score,labels] = detect(detector,ptCloud);

% Display the predictions on the point cloud.

helperShowPointCloudWith3DBoxes(ptCloud,bboxes,labels,classNames,colors)
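Each row of bboxes pairs with one entry of score and one entry of labels, so you can post-filter the detections by confidence. The following is a minimal sketch with made-up detection values and a hypothetical minScore threshold. It stands in for the outputs of detect and does not call the detector.

```matlab
% Made-up detections in cuboid format:
% [xctr yctr zctr xlen ylen zlen xrot yrot zrot].
bboxes = [12  1 0 4.5 1.8 1.6 0 0 10;
          30 -4 0 8.0 2.5 3.0 0 0  5;
          18  6 0 4.4 1.7 1.5 0 0 -3];
score  = [0.91; 0.18; 0.47];
labels = categorical({'Car';'Truck';'Car'});

minScore = 0.25;            % Hypothetical confidence threshold.
keep = score >= minScore;   % Logical index of the detections to keep.

bboxesKept = bboxes(keep,:);
scoreKept  = score(keep);
labelsKept = labels(keep);

fprintf('Kept %d of %d detections\n',nnz(keep),numel(keep));
```

Indexing all three outputs with the same logical vector keeps the boxes, scores, and labels aligned row by row.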

Evaluate Detector Using Test Set

Evaluate the trained object detector on a large set of point cloud data to measure the performance.

numInputs = 50;

% Generate rotated rectangles from the cuboid labels.
bds = boxLabelDatastore(testLabels(1:numInputs,:));
groundTruthData = transform(bds,@(x)createRotRect(x));

detectionResults = detect(detector,testData(1:numInputs,:),...
    'Threshold',0.25);

% Convert the bounding boxes to rotated rectangles format and calculate
% the evaluation metrics.
for i = 1:height(detectionResults)
    box = detectionResults.Boxes{i};
    detectionResults.Boxes{i} = box(:,[1,2,4,5,9]);
    detectionResults.Labels{i} = detectionResults.Labels{i}';
end

% Evaluate the object detector using average orientation similarity metric
metrics = evaluateObjectDetection(detectionResults,groundTruthData,"AdditionalMetrics","AOS");
[datasetSummary,classSummary] = summarize(metrics)

datasetSummary=1×5 table

511 0.7056 0.7056 0.6821 0.6821

classSummary=2×5 table

480 0.7627 0.7627 0.7466 0.7466

31 0.6484 0.6484 0.6175 0.6175
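The column selection [1,2,4,5,9] in the evaluation loop drops the z-related entries of each cuboid, which leaves a rotated rectangle in the bird's-eye view. The following small sketch shows the effect on a made-up cuboid, assuming the cuboid layout [xctr yctr zctr xlen ylen zlen xrot yrot zrot].

```matlab
% Made-up cuboid: center (10,2,0.5), size 4.5-by-1.8-by-1.6, yaw 30 degrees.
cuboid = [10 2 0.5 4.5 1.8 1.6 0 0 30];

% Keep x-center, y-center, x-length, y-length, and rotation about z.
rotatedRect = cuboid(:,[1,2,4,5,9]);
disp(rotatedRect)
```

Because the height and the z-position are discarded, the overlap used for these metrics is computed in the ground plane only.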

Supporting Functions

The helperDownloadPandasetData function downloads the PandaSet data.

function helperDownloadPandasetData(outputFolder,lidarURL)
    % Download the data set from the given URL to the output folder.
    lidarDataTarFile = fullfile(outputFolder,'Pandaset_LidarData.tar.gz');

    if ~exist(lidarDataTarFile,'file')
        mkdir(outputFolder);

        disp('Downloading PandaSet Lidar driving data (5.2 GB)...');
        websave(lidarDataTarFile,lidarURL);
        untar(lidarDataTarFile,outputFolder);
    end

    % Extract the file.
    if (~exist(fullfile(outputFolder,'Lidar'),'dir'))...
            &&(~exist(fullfile(outputFolder,'Cuboids'),'dir'))
        untar(lidarDataTarFile,outputFolder);
    end
end

The helperShowPointCloudWith3DBoxes function displays the point cloud alongside its associated bounding boxes, employing distinct colors for different classes to facilitate easy differentiation.

function helperShowPointCloudWith3DBoxes(ptCld,bboxes,labels,classNames,colors)
    % Validate the length of classNames and colors are the same
    assert(numel(classNames) == numel(colors), 'ClassNames and Colors must have the same number of elements.');

    % Get unique categories from labels
    uniqueCategories = categories(labels);

    % Create a mapping from category to color
    colorMap = containers.Map(uniqueCategories, colors);
    labelColor = cell(size(labels));

    % Populate labelColor based on the mapping
    for i = 1:length(labels)
        labelColor{i} = colorMap(char(labels(i)));
    end

    figure;
    ax = pcshow(ptCld);
    showShape('cuboid', bboxes, 'Parent', ax, 'Opacity', 0.1, ...
        'Color', labelColor, 'LineWidth', 0.5);
    zoom(ax,1.5);
end

The helperAugmentData function applies the following augmentations to the data:

Random scaling by 5 percent.

Random rotation about the z-axis by an angle in the range [-pi/4, pi/4].

Random translation of up to [0.2, 0.2, 0.1] meters along the x-, y-, and z-axes, respectively.

function data = helperAugmentData(data)
    % Apply random scaling, rotation and translation.
    pc = data{1};

    minAngle = -45;
    maxAngle = 45;

    % Define outputView based on the grid-size and XYZ limits.
    outView = imref3d([32,32,32],[-100,100],...
        [-100,100],[-100,100]);

    theta = minAngle + rand(1,1)*(maxAngle - minAngle);
    tform = randomAffine3d('Rotation',@() deal([0,0,1],theta),...
        'Scale',[0.95,1.05],...
        'XTranslation',[0,0.2],...
        'YTranslation',[0,0.2],...
        'ZTranslation',[0,0.1]);

    % Apply the transformation to the point cloud.
    ptCloudTransformed = pctransform(pc,tform);

    % Apply the same transformation to the boxes.
    bbox = data{2};
    [bbox,indices] = bboxwarp(bbox,tform,outView);

    if ~isempty(indices)
        data{1} = ptCloudTransformed;
        data{2} = bbox;
        data{3} = data{1,3}(indices,:);
    end
end

References

[1] Lang, Alex H., Sourabh Vora, Holger Caesar, Lubing Zhou, Jiong Yang, and Oscar Beijbom. "PointPillars: Fast Encoders for Object Detection From Point Clouds." In 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 12689-12697. Long Beach, CA, USA: IEEE, 2019. https://doi.org/10.1109/CVPR.2019.01298.

[2] Hesai and Scale. PandaSet. Accessed September 18, 2025. https://pandaset.org/. The PandaSet data set is provided under the CC-BY-4.0 license.

[3] Xiao, Pengchuan, Zhenlei Shao, Steven Hao, et al. "PandaSet: Advanced Sensor Suite Dataset for Autonomous Driving." In 2021 IEEE International Intelligent Transportation Systems Conference (ITSC), 3095-3101. IEEE, 2021. https://doi.org/10.1109/ITSC48978.2021.9565009.

See Also

Objects

Functions

trainPointPillarsObjectDetector | trainVoxelRCNNObjectDetector | pcorganize | sampleLidarData | pcBboxOversample

Apps

- Deep Network Designer (Deep Learning Toolbox) | Lidar Labeler | Point Cloud Analyzer

Topics

- Get Started with PointPillars

- Code Generation for Lidar Object Detection Using PointPillars Deep Learning

- Data Augmentations for Lidar Object Detection Using Deep Learning

- Lidar Object Detection Using Complex-YOLO v4 Network

- Transfer Learning Using Voxel R-CNN for Lidar 3-D Object Detection

- Point Cloud Classification Using PointNet++ Deep Learning