Build and Deploy Visual SLAM Algorithm with ROS in MATLAB

In this example, you implement a visual simultaneous localization and mapping (SLAM) algorithm to estimate the camera poses for the TUM RGB-D Benchmark [1] dataset. You then generate C++ code for the visual SLAM algorithm and deploy it as a ROS node to a remote device using MATLAB®.

This example requires MATLAB Coder™.

Prerequisites for Remote Device

The remote device to which you want to deploy the code must have the following dependencies installed:

OpenCV 4.7.0 — For more information about downloading the OpenCV source and building it on your remote device, see OpenCV linux installation.

Ceres-solver 2.2.0 library — Download the source and build it as a shared library, on your remote device.

Eigen3 library — Install eigen3 library using the command

$sudo apt install libeigen3-dev.

Connect to Remote Device

For this example, download a virtual machine (VM) using the instructions in Get Started with Gazebo and Simulated TurtleBot, and then follow these steps.

Start the Ubuntu® virtual machine.

On the Ubuntu desktop, click the ROS Noetic Core icon to start the ROS core on the virtual machine.

Specify the IP address and port number of the ROS master to MATLAB so that it can communicate with the virtual machine. For this example, the ROS master is at the address

192.168.192.135on port11311.Start the ROS network using

rosinit.

masterIP = '192.168.192.135';

rosinit(masterIP,11311)Initializing global node /matlab_global_node_33565 with NodeURI http://192.168.192.1:60627/ and MasterURI http://192.168.192.135:11311.

Set Up Data on Remote Device

This example uses TUM RGB-D Benchmark [1] dataset. Download the dataset as a ROS bag file on the remote device.

$ wget https://cvg.cit.tum.de/rgbd/dataset/freiburg3/rgbd_dataset_freiburg3_long_office_household.bag

If you encounter issues playing the bag file, decompress it first.

$ rosbag decompress rgbd_dataset_freiburg3_long_office_household.bag

Implement Visual SLAM Algorithm

This example uses the monovslam (Computer Vision Toolbox) object to implement visual SLAM. For each new frame added using its addFrame object function, the monovslam object extracts and tracks features to estimate camera poses, identify key frames and compute the 3-D map points in the world frame. The monovslam object also searches for loop closures using the bag-of-features algorithm and optimizes camera poses using pose graph optimization, once a loop closure is detected. For more information on visual SLAM pipeline, see Performant and Deployable Monocular Visual SLAM (Computer Vision Toolbox).

The helperROSVisualSLAM function implements the visual SLAM algorithm using these steps:

Create a

monovslamobject using known camera intrinsics.Create a ROS subscriber to listen to the TUM RGB-D dataset frames published by the ROS bag file.

Create a publisher to publish map points and camera poses to MATLAB.

For each frame published by the bag file, add it to the

monovslamobject using itsaddFrameobject function. Themonovslamobject then determines whether it is a key frame.For each key frame, obtain the map points in the world frame and camera poses from the

monovslamobject.Publish the map points and camera poses to MATLAB for visualization.

type("helperROSVisualSLAM.m")function helperROSVisualSLAM()

% The helperROSVisualSLAM function implements the visual SLAM pipeline for

% deployment as a ROS node.

% Copyright 2023 The MathWorks, Inc.

% Create a visual SLAM object

intrinsics = cameraIntrinsics([535.4,539.2], [320.1,247.6], [480, 640]);

vslam = monovslam(intrinsics);

% Create a subscriber to read camera images

cameraSub = rossubscriber('/camera/rgb/image_color', 'sensor_msgs/Image', @(varargin)vslamNodeCallback(varargin{:}),DataFormat="struct");

% Create a publisher to publish map points and camera poses to MATLAB

cameraPub = rospublisher('/visualizePoints','std_msgs/Float64MultiArray','DataFormat','struct');

while 1

if hasNewKeyFrame(vslam)

msg = rosmessage('std_msgs/Float64MultiArray', 'DataFormat', 'struct');

% Get map points and camera poses

worldPoints = mapPoints(vslam);

[camPoses] = poses(vslam);

% Pack camera poses for publishing

poseSize = numel(camPoses);

transAndRots = zeros(poseSize*4,3);

for i = 0:poseSize-1

transAndRots(i*4+1,:) = camPoses(i+1).Translation;

transAndRots(i*4+2:i*4+4,:) = camPoses(i+1).R;

end

% Concatenate poses and points into one struct

allData = vertcat(transAndRots, worldPoints);

allDataSize = size(allData,1);

flattenPoints = reshape(allData,[allDataSize*3 1]);

msg.Data = flattenPoints;

msg.Layout.Dim = rosmessage('std_msgs/MultiArrayDimension', 'DataFormat', 'struct');

msg.Layout.Dim(end).Size = uint32(poseSize);

% Send data to MATLAB

send(cameraPub, msg);

end

end

function vslamNodeCallback(~, data)

img = rosReadImage(data);

addFrame(vslam, img);

end

end

Generate and Deploy Visual SLAM Node

Use MATLAB Coder to generate a ROS node for the visual SLAM algorithm defined by the helperROSVisualSLAM function. You can then deploy this node on the remote virtual machine. Create a MATLAB Coder configuration object that uses "Robot Operating System (ROS)" hardware. Before remote deployment, set these configuration parameters for the Linux virtual machine. Note that, if you are deploying to a different remote machine, you must change these to the appropriate parameters for your device.

Note: By default, the "Build and Load" build action deploys the node to the device, but does not automatically run it. If you want the node to run immediately after code generation, use the "Build and Run" build action, instead.

cfg = coder.config('exe'); cfg.Hardware = coder.hardware('Robot Operating System (ROS)'); cfg.Hardware.BuildAction = 'Build and load'; cfg.Hardware.CatkinWorkspace = '~/catkin_ws'; cfg.Hardware.RemoteDeviceAddress = masterIP; cfg.Hardware.RemoteDeviceUsername = 'user'; cfg.Hardware.RemoteDevicePassword = 'password'; cfg.Hardware.DeployTo = 'Remote Device'; codegen helperROSVisualSLAM -args {} -config cfg -std:c++17

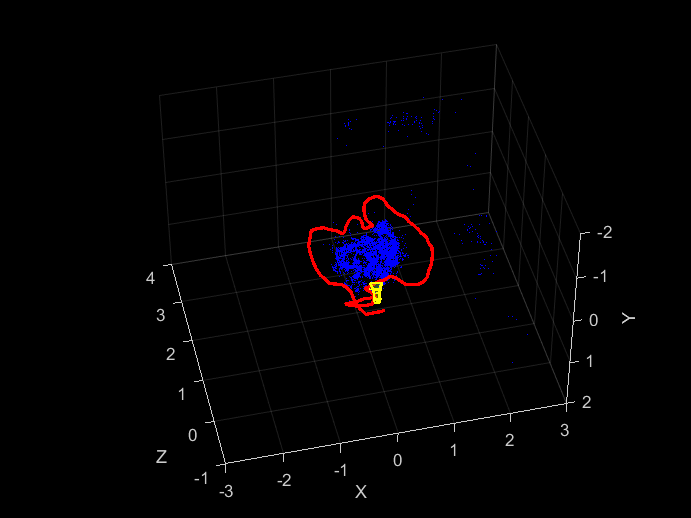

Configure Visualization

Use the helperVisualSLAMViewer object to create a viewer that visualizes map points along with the camera trajectory and the current camera pose.

viewer = helperVisualSLAMViewer(zeros(0,3),rigidtform3d(eye(4)));

Create a ROS subscriber to visualize map points and camera poses published by the deployed visual SLAM node. Assign helperVisualizePointsAndPoses function as a callback to be triggered whenever the subscriber receives a message from the deployed node.

visualizeSub = rossubscriber('/visualizePoints', 'std_msgs/Float64MultiArray', @(varargin)helperVisualizePointsAndPoses(varargin{:}, viewer),'DataFormat','struct');

Run Deployed Visual SLAM Node on Remote Device

On the Ubuntu desktop of the virtual machine, click the ROS Noetic Terminal icon. Source the catkin workspace.

$ source ~/catkin_ws/devel/setup.bash

To help the deployed node access library dependencies, append /usr/local/lib path to the environment variable, LD_LIBRARY_PATH.

$ export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/lib

Navigate to the source directory of the deployed helperrosvisualslam node on the remote device. You must run the node from this directory because the bag of features file used by the deployed node is present in this directory.

$ cd ~/catkin_ws/src/helperrosvisualslam/src/

Run the deployed visual SLAM node on the remote device.

$ rosrun helperrosvisualslam helperROSVisualSLAM

Start playing the ROS bag file in a separate ROS Noetic Terminal.

$ rosbag play rgbd_dataset_freiburg3_long_office_household.bag

Disconnect

Disconnect from ROS Network after the nodes have finished execution.

rosshutdown

References

[1] Sturm, Jürgen, Nikolas Engelhard, Felix Endres, Wolfram Burgard, and Daniel Cremers. "A benchmark for the evaluation of RGB-D SLAM systems". In Proceedings of IEEE/RSJ International Conference on Intelligent Robots and Systems, pp. 573-580, 2012

Helper Functions

The helperVisualizePointsAndPoses function unpacks the std_msgs/Float64MultiArray message received from the deployed node into map points and camera poses. It then calls the updatePlot object function of the helperVisualSLAMViewer object to update the visualization with the new data.

function helperVisualizePointsAndPoses(~, msg,viewer) % Unpack multi-array message based on the packing layout of translation % and rotation values offset = msg.Layout.Dim.Size * 4; nSize = numel(msg.Data) / 3; allData = reshape(msg.Data, [nSize 3]); % Extract camera poses and map points camPosesR = allData(1:offset, :); xyzPoints = allData(offset+1:end, :); % Convert camera poses to an array of rigidtform values camPoses = []; for i=0:msg.Layout.Dim.Size-1 if i == 0 camPoses = rigidtform3d(camPosesR(i*4+2:i*4+4,:),camPosesR(i*4+1,:)); else camPoses(end+1) = rigidtform3d(camPosesR(i*4+2:i*4+4,:),camPosesR(i*4+1,:)); end end % Update the viewer plot viewer.updatePlot(xyzPoints, camPoses); end

See Also

monovslam (Computer Vision Toolbox)