Hide Requirements Metrics in Model Testing Dashboard and in API Results

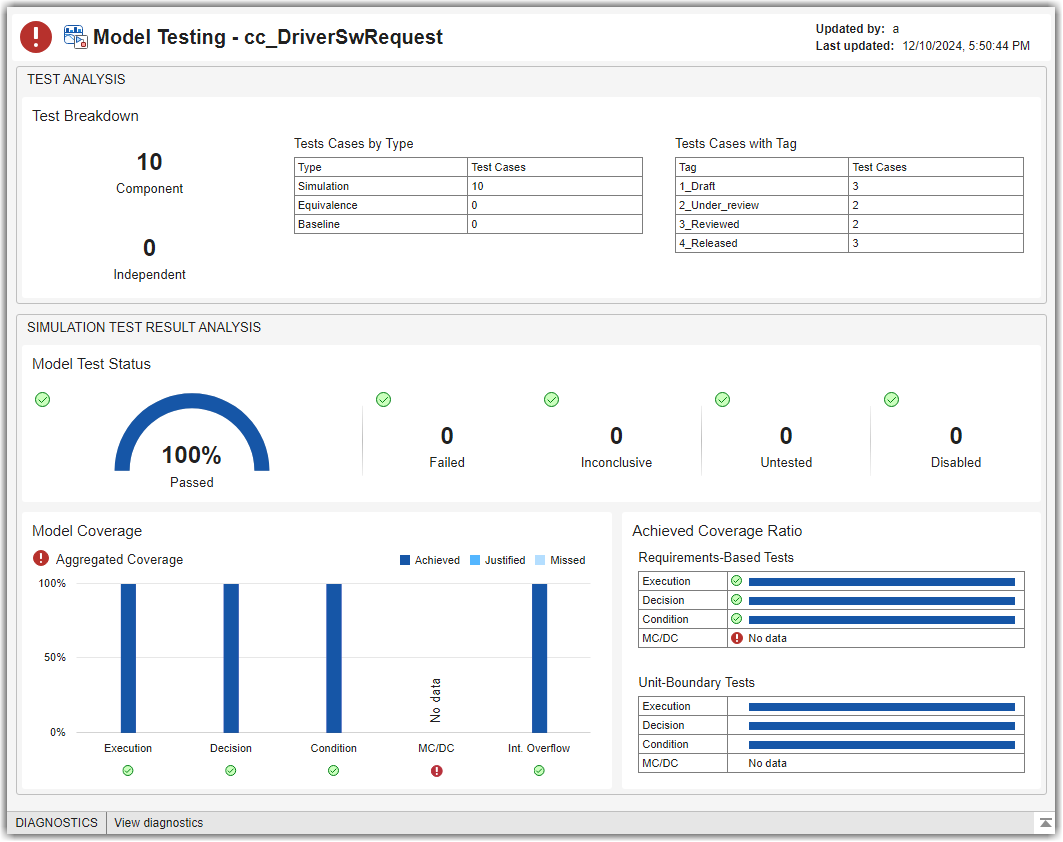

If you do not want to track requirements metrics in the Model Testing Dashboard, or cannot because of a missing Requirements Toolbox™ license, you can hide the requirements metrics. When you hide the requirements metrics, the dashboard displays only the metrics in the Test Case Breakdown, Model Test Status, and Model Coverage sections. This example shows how to hide the requirements metrics when you use the Model Testing Dashboard or when you programmatically collect metrics.

If you want to collect requirements-based metrics, see Explore Status and Quality of Testing Activities Using Model Testing Dashboard.

Open the Dashboard for the Project

Open a project that contains models and testing artifacts. For this example, in the MATLAB® Command Window, enter:

openExample("slcheck/ExploreTestingMetricDataInModelTestingDashboardExample");
openProject("cc_CruiseControl");

Open the Model Testing Dashboard by using one of these approaches:

On the Project tab, click Model Testing Dashboard.

At the command line, enter:

modelTestingDashboard

Hide Requirements Metrics

Open the Project Options dialog box by clicking Options in the toolstrip.

In the Layout section, select Hide requirements metrics and click Apply.

The app closes any open dashboards.

Note

Because the setting of the Hide requirements metrics property is saved in the project data, the dashboard displays or hides requirements-based metrics for any users who view the project.

In the Add Dashboard section of the toolstrip, click Model Testing to re-open the Model Testing Dashboard.

The dashboard shows only the widgets for the Test Case Breakdown, Model Test Status, and Model Coverage sections.

View API Results with Requirements Metrics Hidden

When you select Hide requirements metrics in the Project Options dialog box for the dashboard, the dashboard also hides requirements metrics from the API results.

Create a metric.Engine object for the current project.

metric_engine = metric.Engine();
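Because metric.Engine() called with no arguments operates on the current project, it can help to confirm which project is loaded before you collect metrics. The following is a minimal sketch, assuming the cc_CruiseControl project from the earlier step is still open; the variable name proj is illustrative and does not come from the original example.

```matlab
% Assumption: a project (for example, cc_CruiseControl) is already open.
% currentProject throws an error if no project is loaded.
proj = currentProject;

% Report the project that the metric engine will operate on.
fprintf("Collecting metrics for project: %s\n", proj.Name);
fprintf("Project root folder: %s\n", proj.RootFolder);
```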

Create a list of the available metric identifiers for the Model Testing Dashboard. Use the function getAvailableMetricIds and specify 'App' as 'DashboardApp' and 'Dashboard' as 'ModelUnitTesting'.

nonRequirementMetrics = getAvailableMetricIds(metric_engine,...
    'App','DashboardApp',...
    'Dashboard','ModelUnitTesting');

Because the Hide requirements metrics setting is selected, the API results do not include requirements-based metrics such as RequirementsPerTestCase. The list of metric identifiers still includes metrics such as RequirementsConditionCoverageBreakdown because that metric requires only a Simulink® Coverage™ license.

Use nonRequirementMetrics to collect metric results for the metric identifiers that are not associated with requirements metrics.

execute(metric_engine, nonRequirementMetrics);
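To check which identifiers the list actually contains, you can test for membership directly. This is a minimal sketch, assuming nonRequirementMetrics was created by getAvailableMetricIds as shown above; the variable names idsToCheck and isListed are illustrative.

```matlab
% Assumption: nonRequirementMetrics holds the list returned by
% getAvailableMetricIds in the preceding step.
idsToCheck = ["RequirementsPerTestCase", ...
              "RequirementsConditionCoverageBreakdown"];

% ismember returns one logical value per identifier in idsToCheck.
isListed = ismember(idsToCheck, nonRequirementMetrics);

for k = 1:numel(idsToCheck)
    fprintf("%-45s listed: %d\n", idsToCheck(k), isListed(k));
end
```

With Hide requirements metrics selected, the description above suggests that the first identifier is not listed and the second one is.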

Get the metric results that are not associated with requirements metrics.

results = getMetrics(metric_engine, nonRequirementMetrics)
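One way to inspect the returned results is to count how many results were collected for each metric identifier. The following is a minimal sketch, assuming results is the array returned by getMetrics above and that each element exposes a MetricID property; the variable names ids, uniqueIds, and counts are illustrative.

```matlab
% Assumption: results is the array of metric results returned by getMetrics.
if isempty(results)
    disp("No metric results were returned.");
else
    % Gather the metric identifier of every result as a string array.
    ids = string({results.MetricID});

    % Count the number of results per unique identifier.
    [uniqueIds, ~, idx] = unique(ids);
    counts = accumarray(idx(:), 1);

    for k = 1:numel(uniqueIds)
        fprintf("%-45s %d result(s)\n", uniqueIds(k), counts(k));
    end

    % Flag whether a requirements-based metric slipped into the results.
    fprintf("RequirementsPerTestCase present: %d\n", ...
        any(ids == "RequirementsPerTestCase"));
end
```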

For more information about the metric API, see Collect Metrics on Model Testing Artifacts Programmatically.

See Also

metric.Engine | execute | getAvailableMetricIds | getMetrics