Fix Requirements-Based Testing Issues

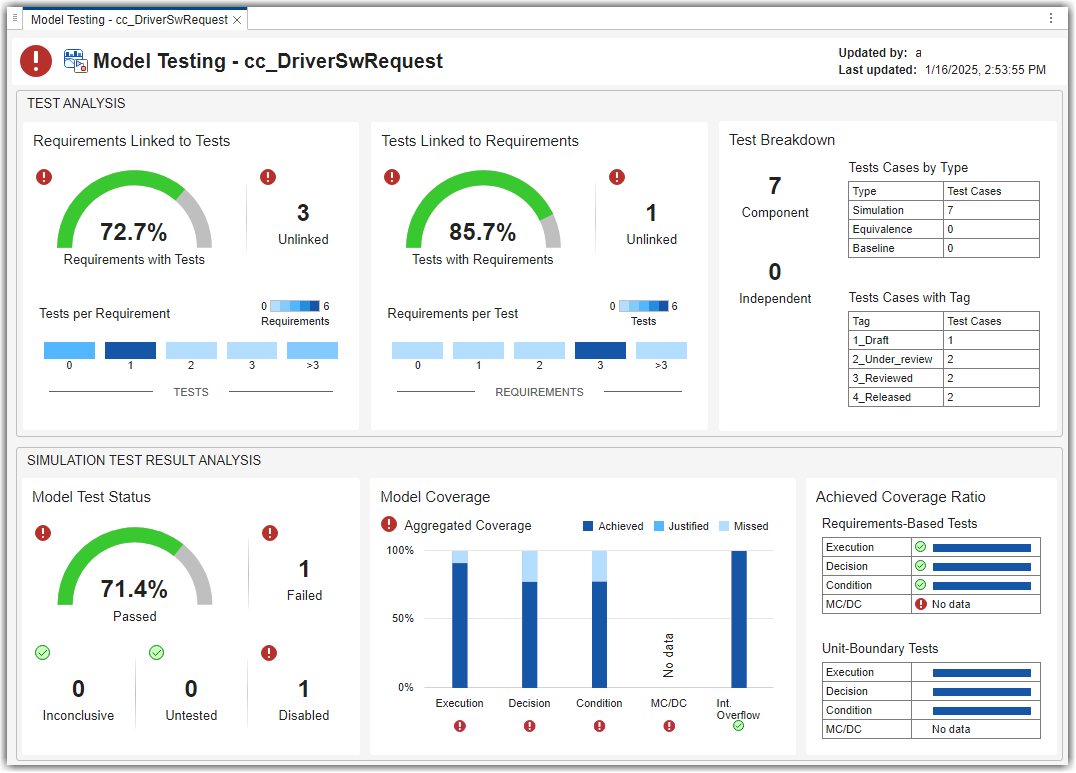

This example shows how to address common traceability issues in model requirements and tests by using the Model Testing Dashboard. The dashboard analyzes the testing artifacts in a project and reports metric data on quality and completeness measurements such as traceability and coverage, which reflect guidelines in industry-recognized software development standards, such as ISO 26262 and DO-178C. The dashboard widgets summarize the data so that you can track your requirements-based testing progress and fix the gaps that the dashboard highlights. You can click the widgets to open tables with detailed information, where you can find and fix the testing artifacts that do not meet the corresponding standards.

Collect Metrics for Testing Artifacts in Project

The dashboard displays testing data for a unit and the artifacts that the unit traces to within a project. For this example, open the project and collect metric data for the artifacts.

1. Open the project that contains models and testing artifacts. For this example, in the MATLAB® Command Window, enter:

prj = openProject("cc_CruiseControl");

2. Open the Dashboard window. On the Project tab, click Model Testing Dashboard.

3. Open the Model Testing Dashboard by clicking Model Testing in the dashboard gallery in the toolstrip.

4. The Project panel displays artifacts from the current project that are compatible with the currently selected dashboard. View the metric results for the unit cc_DriverSwRequest. In the Project panel, click the name of the unit, cc_DriverSwRequest. When you initially select cc_DriverSwRequest, the dashboard collects the metric results for uncollected metrics and populates the widgets with the data for the unit.
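The same collection can also be scripted. The following is a minimal sketch, not the dashboard's own implementation. It assumes that Simulink Check is installed, that the unit model is stored in a `models` folder under the project root, and that the metric identifier `TestCaseWithRequirement` is available in your release. Neither the folder nor the identifier is stated in this example, so check them against your installation (for instance with `getAvailableMetricIds`).

```matlab
% Sketch: collect one dashboard metric for a unit from the command line.
prj = openProject("cc_CruiseControl");

% Assumption: the unit model lives in <project root>/models.
unitFile  = fullfile(prj.RootFolder, "models", "cc_DriverSwRequest.slx");
unitScope = {char(unitFile), 'cc_DriverSwRequest'};

% Assumption: this metric identifier exists in your release.
metricID = 'TestCaseWithRequirement';

% Create a metric engine for the current project and collect the metric
% only for the artifacts that trace to the unit.
engine = metric.Engine();
execute(engine, {metricID}, 'ArtifactScope', unitScope);
results = getMetrics(engine, metricID, 'ArtifactScope', unitScope);

% Each result corresponds to one test; a nonzero Value means the test
% links to at least one requirement.
numLinked = sum(logical([results.Value]));
fprintf("%d of %d tests link to requirements.\n", numLinked, numel(results));
```

Scoping the collection to one unit mirrors selecting the unit in the Project panel and avoids collecting results for every unit in the project.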

Link Requirement to Implementation in Model

The Artifacts panel shows artifacts such as requirements, tests, and test results that trace to the unit selected in the Project panel.

In the Artifacts panel, the Trace Issues folder shows artifacts that do not trace to unit models in the project. The Trace Issues folder contains subfolders for:

Unexpected Implementation Links: Requirement links of Type Implements for a requirement of Type Container or Type Informational. The dashboard does not expect these links to be of Type Implements because container requirements and informational requirements do not contribute to the Implementation and Verification status of the requirement set that they are in. If a requirement is not meant to be implemented, you can change the link type. For example, you can change a requirement of Type Informational to have a link of Type Related to.

Unresolved and Unsupported Links: Requirement links that are broken or not supported by the dashboard. For example, if a model block implements a requirement, but you delete the model block, the requirement link is now unresolved. The Model Testing Dashboard does not support traceability analysis for some artifacts and some links. If you expect a link to trace to a unit and it does not, see the troubleshooting solutions in Resolve Missing Artifacts, Links, and Results (Simulink Check).

Untraced Tests: Tests that execute on models or subsystems that are not on the project path.

Untraced Results: Results that the dashboard can no longer trace to a test. For example, if a test produces results, but you delete the test, the results can no longer be traced to the test.

Address Testing Traceability Issues

The widgets in the Test Analysis section of the Model Testing Dashboard show data about the unit requirements, tests for the unit, and links between them. The widgets indicate if there are gaps in testing and traceability for the implemented requirements.

Link Requirements and Tests

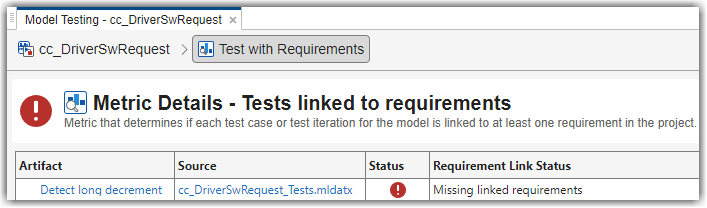

For the unit cc_DriverSwRequest, the Tests Linked to Requirements section shows that some of the tests are missing links to requirements in the model.

To see detailed information about the missing links, in the Tests Linked to Requirements section, click the widget Unlinked. The dashboard opens the Metric Details for the widget with a table of metric values and hyperlinks to each related artifact. The table is filtered to show only the tests for the unit that do not have links to requirements.

The test Detect long decrement is missing linked requirements.

In the Artifact column of the table, point to Detect long decrement. The tooltip shows that the test Detect long decrement is in the test suite Unit test for DriverSwRequest, in the test file cc_DriverSwRequest_Tests.

Click Detect long decrement to open the test in the Test Manager. For this example, the test needs to link to three requirements that already exist in the project. If there were not already requirements, you could add a requirement by using the Requirements Editor.

Open the software requirements in the Requirements Editor. In the Artifacts panel of the Dashboard window, expand the folder Functional Requirements > Implemented and double-click the requirement file cc_SoftwareReqs.slreqx.

View the software requirements in the container with the summary Driver Switch Request Handling. Expand cc_SoftwareReqs > Driver Switch Request Handling.

Select multiple software requirements. Hold down the Ctrl key as you click Output request mode, Avoid repeating commands, and Long Increment/Decrement Switch recognition. Keep these requirements selected in the Requirements Editor.

In the Test Manager, expand the Requirements section for the test Detect long decrement. Click the arrow next to the Add button and select Link to Selected Requirement. The traceability link indicates that the test Detect long decrement verifies the three requirements Output request mode, Avoid repeating commands, and Long Increment/Decrement Switch recognition.

The metric results in the dashboard reflect only the saved artifact files. To save the test suite cc_DriverSwRequest_Tests.mldatx, in the Test Browser, right-click cc_DriverSwRequest_Tests and click Save.
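The linking steps above can also be approximated from the command line. This is a hedged sketch, assuming Simulink Test and Requirements Toolbox are installed, the project is open, and both the test file and the requirement set are on the project path. It also assumes that the requirement summaries match the names shown in the Requirements Editor exactly. Adapt the names if your project differs.

```matlab
% Sketch: link one test to three existing requirements, then save.
tf = sltest.testmanager.load("cc_DriverSwRequest_Tests.mldatx");
ts = getTestSuiteByName(tf, "Unit test for DriverSwRequest");
tc = getTestCaseByName(ts, "Detect long decrement");

rs = slreq.load("cc_SoftwareReqs.slreqx");
summaries = ["Output request mode", ...
             "Avoid repeating commands", ...
             "Long Increment/Decrement Switch recognition"];

for s = summaries
    % Look up the requirement by its summary text.
    req = find(rs, "Type", "Requirement", "Summary", char(s));
    if isempty(req)
        error("Requirement with summary '%s' not found.", s);
    end
    % The test is the link source and the requirement is the destination.
    link = slreq.createLink(tc, req(1));
    link.Type = "Verify";   % state the verification relationship explicitly
end

% The dashboard reads only saved files, so save the links and the test file.
save(linkSet(link));
saveToFile(tf);
```

Saving both the link set and the test file matters here because the metric results reflect only saved artifact files.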

Refresh Metric Results in Dashboard

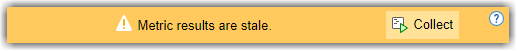

The dashboard detects the changes and warns you to refresh the dashboard.

Click the Collect button on the warning banner to re-collect the metric data so that the Metric Details reflect the traceability link between the test and requirements.

View the updated dashboard widgets by returning to the Model Testing results and collecting the metrics. At the top of the dashboard, there is a breadcrumb trail from the Metric Details back to the Model Testing results. Click the breadcrumb button for cc_DriverSwRequest to return to the Model Testing results for the unit. Then, update the impacted model testing metric results by clicking Collect in the warning banner.

The Tests Linked to Requirements section shows that there are no unlinked tests. The Requirements Linked to Tests section shows that there are 3 unlinked requirements.
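To list the unlinked requirements without opening the Metric Details, you could query the metric results directly. The sketch below assumes Simulink Check is installed, the project is already open, the unit model is in a `models` folder under the project root, and the metric identifier `RequirementWithTestCase` is available in your release. These are assumptions rather than details given in this example.

```matlab
% Sketch: refresh trace data, re-collect one metric, list unlinked requirements.
prj = currentProject;

% Assumption: the unit model lives in <project root>/models.
unitFile  = fullfile(prj.RootFolder, "models", "cc_DriverSwRequest.slx");
unitScope = {char(unitFile), 'cc_DriverSwRequest'};

% Assumption: this metric identifier exists in your release.
metricID = 'RequirementWithTestCase';

engine = metric.Engine();
updateArtifacts(engine);   % pick up saved changes to project artifacts
execute(engine, {metricID}, 'ArtifactScope', unitScope);
results = getMetrics(engine, metricID, 'ArtifactScope', unitScope);

% A zero Value marks a requirement that has no linked test.
isLinked = arrayfun(@(r) logical(r.Value), results);
unlinked = results(~isLinked);

fprintf("%d requirements have no linked test:\n", numel(unlinked));
for n = 1:numel(unlinked)
    fprintf("  %s\n", unlinked(n).Artifacts(1).Name);
end
```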

Typically, before running the tests, you investigate and address these testing traceability issues by adding tests and linking them to the requirements. For this example, leave the unlinked artifacts and continue to the next step of running the tests.

Test Model and Analyze Failures and Gaps

After you create and link unit tests that verify the requirements, run the tests to check that the functionality of the model meets the requirements. To see a summary of the test results and coverage measurements, use the widgets in the Simulation Test Result Analysis section of the dashboard. The widgets help show testing failures and gaps. Use the metric results to analyze the underlying artifacts and to address the issues.

Perform Unit Testing

Run the tests for the model by using the Test Manager. Save the test results in your project and review them in the Model Testing Dashboard.

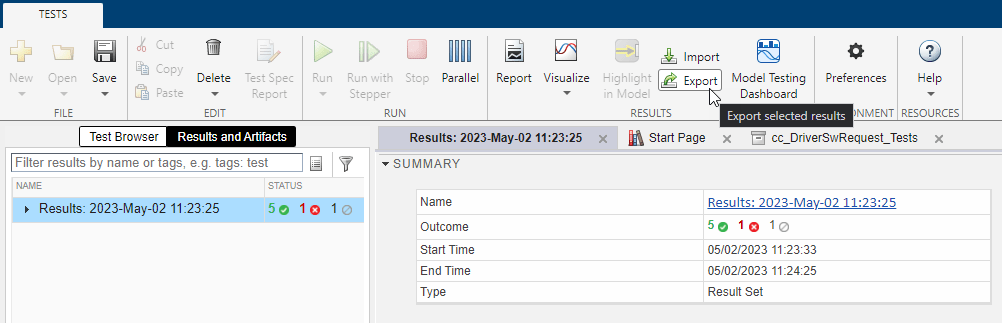

Open the unit tests for the model in the Test Manager. In the Model Testing Dashboard, in the Artifacts panel, expand the folder Tests > Unit Tests and double-click the test file cc_DriverSwRequest_Tests.mldatx.

In the Test Manager, click Run.

Select the results in the Results and Artifacts pane.

Save the test results as a file in the project. On the Tests tab, in the Results section, click Export. Name the results file Results1.mldatx and save the file under the project root folder.

The Model Testing Dashboard detects the results and automatically updates the Artifacts panel to include the new test results for the unit in the subfolder Test Results > Model.

The dashboard also detects that the metric results are now stale and shows a warning banner at the top of the dashboard.

The Stale icon appears on the widgets in the Simulation Test Result Analysis section to indicate that they are showing stale data that does not include the changes.

Click the Collect button on the warning banner to re-collect the metric data and to update the stale widgets with data from the current artifacts.
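Running the tests and exporting the results can also be scripted. The following sketch assumes Simulink Test is installed, the project is open, and the test file is on the project path. The results file name matches the one used in the steps above.

```matlab
% Sketch: run the unit tests and export the results into the project root.
prj = currentProject;

% Load the test file into the Test Manager and run all of its tests.
tf = sltest.testmanager.load("cc_DriverSwRequest_Tests.mldatx");
resultSet = run(tf);

% Export the result set so that the dashboard can detect it as a project file.
resultsFile = fullfile(prj.RootFolder, "Results1.mldatx");
sltest.testmanager.exportResults(resultSet, char(resultsFile));

% Summarize the outcome of the run.
fprintf("Passed: %d, Failed: %d, Disabled: %d\n", ...
    resultSet.NumPassed, resultSet.NumFailed, resultSet.NumDisabled);
```

After the export, the dashboard still needs the metrics re-collected before the widgets reflect the new results.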

Address Testing Failures and Gaps

For the unit cc_DriverSwRequest, the Model Test Status section of the dashboard indicates that one test failed and one test was disabled during the latest test run.

To view the disabled test, in the dashboard, click the Disabled widget. The table shows the disabled tests for the model.

Open the disabled test in the Test Manager. In the table, click the test artifact Detect long decrement.

Enable the test. In the Test Browser, right-click the test and click Enabled.

Re-run the test. In the Test Browser, right-click the test and click Run. Then save the test suite file.

View the updated number of disabled tests. In the dashboard, click the Collect button on the warning banner. Note that there are now zero disabled tests reported in the Model Test Status section of the dashboard.

View the failed test in the dashboard. Click the breadcrumb button for cc_DriverSwRequest to return to the Model Testing results and click the Failed widget.

Open the failed test in the Test Manager. In the table, click the test artifact Detect set.

Examine the test failure in the Test Manager. You can determine if you need to update the test or the model by using the test results and links to the model. For this example, instead of fixing the failure, use the breadcrumbs in the dashboard to return to the Model Testing results and continue on to examine test coverage.
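The earlier steps that enable and re-run the disabled test could be scripted roughly as follows. This is a sketch under the assumption that Simulink Test is installed, the project is open, and the test file is on the project path. The suite and test names are the ones shown in the tooltip for Detect long decrement.

```matlab
% Sketch: enable a disabled test, re-run it, and save the test file.
tf = sltest.testmanager.load("cc_DriverSwRequest_Tests.mldatx");
ts = getTestSuiteByName(tf, "Unit test for DriverSwRequest");
tc = getTestCaseByName(ts, "Detect long decrement");

tc.Enabled = true;      % equivalent to right-click > Enabled in the Test Browser
resultSet = run(tc);    % run only this test

% Save the test file so that the dashboard sees the enabled state.
saveToFile(tf);

fprintf("Passed: %d, Failed: %d\n", resultSet.NumPassed, resultSet.NumFailed);
```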

Check if the tests that you ran fully exercised the model design by using the coverage metrics. For this example, the Model Coverage section of the dashboard indicates that some decisions in the model were not covered. Place your cursor over the Decision bar in the widget to see what percent of decision coverage was achieved.

View details about the decision coverage by clicking one of the Decision bars. For this example, click the Decision bar for Achieved coverage.

In the table, expand the model artifact. The table shows the test results for the model and the results files that contain them. For this example, click the hyperlink to the source file Results1.mldatx to open the results file in the Test Manager.

To see detailed coverage results, use the Test Manager to open the model in the Coverage perspective. In the Test Manager, in the Aggregated Coverage Results section, in the Analyzed Model column, click cc_DriverSwRequest.

Coverage highlighting on the model shows the points that were not covered by the tests. For this example, do not fix the missing coverage. For a point that is not covered in your project, you can add a test to cover it. You can find the requirement that is implemented by the model element or, if there is none, add a requirement for it. Then you can link the new test to the requirement. If the point should not be covered, you can justify the missing coverage by using a filter.

Once you have updated the unit tests to address failures and gaps in your project, run the tests and save the results. Then examine the results by collecting the metrics in the dashboard.

Iterative Requirements-Based Testing with Model Testing Dashboard

In a project with many artifacts and traceability connections, you can monitor the status of the design and testing artifacts whenever there is a change to a file in the project. After you change an artifact, use the dashboard to check if there are downstream testing impacts by updating the tracing data and metric results. Use the Metric Details tables to find and fix the affected artifacts. Track your progress by updating the dashboard widgets until they show that the model testing quality meets the standards for the project.