Set Signal Tolerances

You can specify tolerances in the Baseline Criteria or Equivalence Criteria sections of baseline and equivalence test cases. You can specify relative, absolute, leading, and lagging tolerances for a signal comparison. Leading and lagging tolerances allow you to compensate for differences in time between signals. Absolute tolerances are in the units of the signal, relative tolerances are fractions of the baseline value (for example, 0.01 for 1%), and leading and lagging tolerances are in seconds.
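To make the value tolerances concrete, here is a minimal Python sketch. It assumes that when both tolerances are set, the allowed deviation at each sample is the larger of the absolute tolerance and the relative tolerance times the magnitude of the baseline value. The function names are hypothetical, and the sketch is not the Simulation Data Inspector's implementation.

```python
def allowed_deviation(baseline_value, abs_tol=0.0, rel_tol=0.0):
    # Assumed rule: the larger of the absolute tolerance and the
    # relative tolerance scaled by the baseline magnitude.
    return max(abs_tol, rel_tol * abs(baseline_value))


def within_value_tolerance(baseline, comparison, abs_tol=0.0, rel_tol=0.0):
    # Hypothetical helper: compares two signals sampled at the same times.
    if len(baseline) != len(comparison):
        raise ValueError("signals must have the same number of samples")
    return all(abs(c - b) <= allowed_deviation(b, abs_tol, rel_tol)
               for b, c in zip(baseline, comparison))


# With the default tolerance of 0, any difference fails.
print(within_value_tolerance([1.0, 2.0], [1.0, 2.0000001]))
# A relative tolerance widens the band for larger baseline values.
print(within_value_tolerance([10.0, 20.0], [10.05, 20.3], abs_tol=0.1, rel_tol=0.02))
```

With only abs_tol=0.1, the second sample (a difference of 0.3) fails; adding rel_tol=0.02 allows up to 0.4 at a baseline value of 20, so the comparison passes.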

To learn how tolerances are calculated, see How the Simulation Data Inspector Compares Data.

Modify Criteria Tolerances

To modify a tolerance, select the signal name in the criteria table, double-click the tolerance value, and enter a new value.

If you modify a tolerance after you run a test case, rerun the test case to apply the new tolerance value to the pass/fail results.

Change Leading Tolerance in a Baseline Comparison Test

Specify a tolerance when the difference between results falls within a range you consider acceptable. For example, suppose that your model under test uses a particular solver. Solvers are sometimes updated from one release to the next, and new solvers also become available. If you use an updated solver or change solvers, you can specify an acceptable tolerance for differences between your baseline and later test results. Leading and lagging tolerances let you account for differences in time. For example, if a solver change offsets the data by 0.04 seconds, you can specify a leading or lagging tolerance of 0.04 seconds to allow the signal to shift left or right by that amount.
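One way to picture a time tolerance is as a window around each sample time. If the comparison signal leads the baseline by up to the leading tolerance, its value at time t should match some baseline value between t and t + leading tolerance; a lagging tolerance extends the window backward in the same way. The following Python sketch assumes both signals share one time vector and ignores interpolation and value tolerances. The names are hypothetical, and the Simulation Data Inspector's actual algorithm may differ.

```python
EPS = 1e-9  # guards against floating-point error in sample times


def tolerance_window(times, baseline, t, leading_tol=0.0, lagging_tol=0.0):
    # Min and max of the baseline samples whose times fall in
    # [t - lagging_tol, t + leading_tol]. The window always contains t.
    window = [b for tb, b in zip(times, baseline)
              if t - lagging_tol - EPS <= tb <= t + leading_tol + EPS]
    return min(window), max(window)


def passes_time_tolerance(times, baseline, comparison,
                          leading_tol=0.0, lagging_tol=0.0):
    # Every comparison sample must lie inside the baseline's window.
    for t, c in zip(times, comparison):
        lo, hi = tolerance_window(times, baseline, t, leading_tol, lagging_tol)
        if not lo <= c <= hi:
            return False
    return True


times = [0.0, 0.04, 0.08, 0.12, 0.16]
baseline = [1, 1, 2, 2, 2]
leading = [1, 2, 2, 2, 2]  # changes one 0.04 s step before the baseline
print(passes_time_tolerance(times, baseline, leading))                    # False
print(passes_time_tolerance(times, baseline, leading, leading_tol=0.04))  # True
print(passes_time_tolerance(times, baseline, leading, lagging_tol=0.04))  # False
```

The direction matters: a signal that changes early passes only with a leading tolerance, and a lagging tolerance of the same size does not help it.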

Generate the Baseline

Generate the baseline for the sf_car model, which uses the ode5 solver.

Open the

sf_carmodel by usingopenExample('sf_car').Open the Test Manager and create a test file named

Solver Compare. In the test case, set the system under test tosf_car.Select the signal to log. Under Simulation Outputs, click Add. In the model, select the

shift_logicoutput signal. In the Signal Selection dialog box, select the check box next toshift_logicand click Add.Save the baseline. Under Baseline Criteria, click Capture. Set the file format to

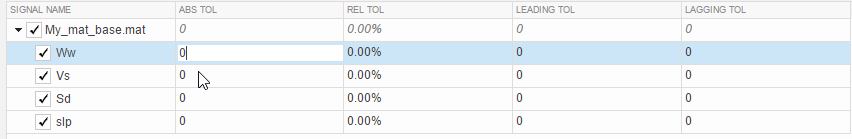

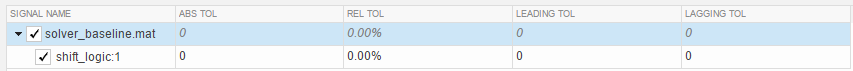

MAT. Name the baselinesolver_baselineand click Capture.After you capture the baseline MAT file, the model runs and the baseline criteria appear in the table. Each default tolerance is 0.

Change Solvers and Run the Test Case

Suppose that you want to use a different solver with your model. You run a test to compare results using the new solver with the baseline.

In the model, change the solver to ode1.

In the Test Manager, with the Solver Compare test file selected, click Run.

In the Results and Artifacts pane, notice that the test failed.

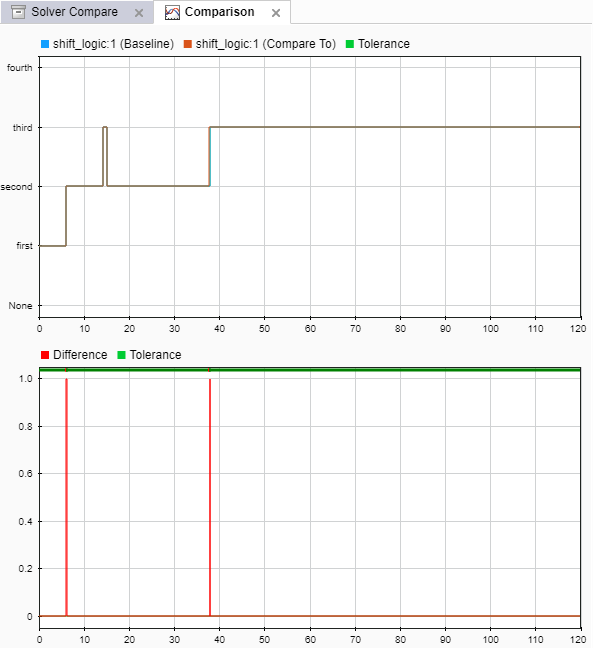

Expand the results of the failed test. Under Baseline Criteria Result, select the shift_logic signal. The Comparison tab shows where the difference occurred.

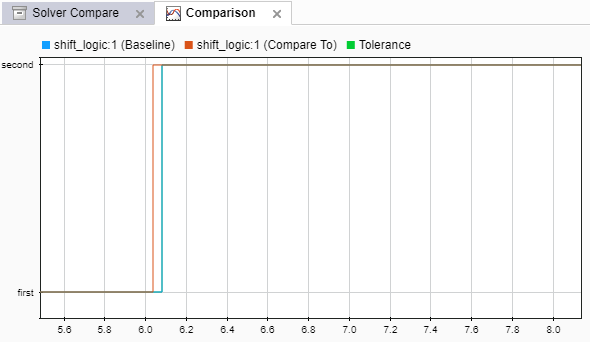

Zoom in on the comparison chart where the results diverge. The comparison signal changes ahead of the baseline signal; that is, it leads the baseline signal.
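Zooming in shows the offset visually. For a step-like signal such as a gear shift, you can also estimate the offset by comparing when each signal first changes value. This Python sketch assumes both signals share one time vector; the function names are hypothetical and not part of Simulink Test.

```python
def first_change_time(times, values):
    # Time of the first sample whose value differs from the previous sample.
    for i in range(1, len(values)):
        if values[i] != values[i - 1]:
            return times[i]
    return None


def lead_time(times, baseline, comparison):
    # Positive: the comparison changes first (it leads the baseline).
    # Negative: the comparison changes later (it lags the baseline).
    t_base = first_change_time(times, baseline)
    t_comp = first_change_time(times, comparison)
    if t_base is None or t_comp is None:
        return None
    return t_base - t_comp


times = [0.0, 0.04, 0.08, 0.12, 0.16]
print(lead_time(times, [1, 1, 2, 2, 2], [1, 2, 2, 2, 2]))
```

A positive result suggests a leading tolerance at least that large; a negative result suggests a lagging tolerance. This only looks at the first transition, so signals with several transitions may need a closer look.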

Preview and Set a Leading Tolerance Value

You can use leading and lagging tolerances to allow for slight offsets in time between the simulation and baseline data. Suppose that your team determines that a tolerance equal to the simulation step size (0.04 seconds in this case) is acceptable. In the Test Manager, set a leading tolerance value. Use a leading tolerance for a signal whose change occurs before the baseline change. Use a lagging tolerance for a signal whose change occurs after the baseline change.

You can preview how the tolerance value affects the test to see if the test passes with the specified tolerance. Then set the tolerance on the baseline criteria and rerun the test.

Preview whether the tolerance you want to use causes the test to pass. With the result signal selected, in the property box, set Leading Tolerance to 0.04.

When you change this value, the status changes to show that the previously failed test now passes.

When you are satisfied with the tolerance value, enter it in the baseline criteria so that you can rerun the test and save the new pass/fail result. In the Test Browser pane, select the test case in the Solver Compare test file.

Under Baseline Criteria, change the Leading Tol value for the solver_baseline.mat file to 0.04. By default, each signal inherits this value from the baseline file. You can override the value for each signal.
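The inherit-or-override behavior can be modeled as a simple lookup: use the signal's own value when one is set, otherwise fall back to the value on the baseline file. This Python sketch is a conceptual model only; the function name and the dictionary of overrides are hypothetical and do not reflect how Simulink Test stores criteria.

```python
from typing import Dict, Optional


def effective_leading_tol(file_leading_tol: float,
                          signal_overrides: Dict[str, Optional[float]],
                          signal_name: str) -> float:
    # A missing entry or None means the signal inherits the file-level value.
    override = signal_overrides.get(signal_name)
    return file_leading_tol if override is None else override


print(effective_leading_tol(0.04, {}, "shift_logic"))                     # inherits 0.04
print(effective_leading_tol(0.04, {"shift_logic": 0.08}, "shift_logic"))  # override 0.08
```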

Run the test again. The test passes.

To store the tolerance value and the passed test with the test file, save the test file.

See Also

sltest.testmanager.BaselineCriteria | sltest.testmanager.SignalCriteria