Tracking Cars Using Foreground Detection

This example shows how to detect and count cars in a video sequence using Gaussian mixture models (GMMs).

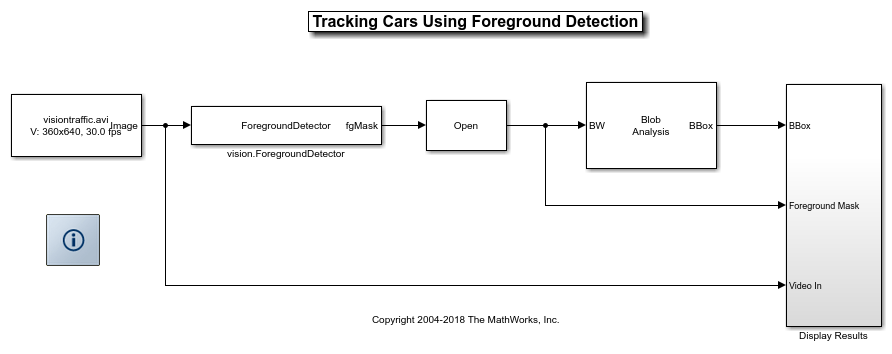

Example Model

The following figure shows the Tracking Cars Using Foreground Detection model:

Detection and Tracking Results

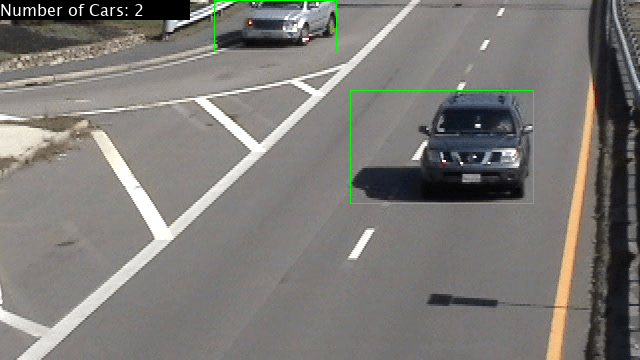

Detecting and counting cars can be used to analyze traffic patterns. Detection is also a first step before more sophisticated tasks, such as tracking vehicles or categorizing them by type.

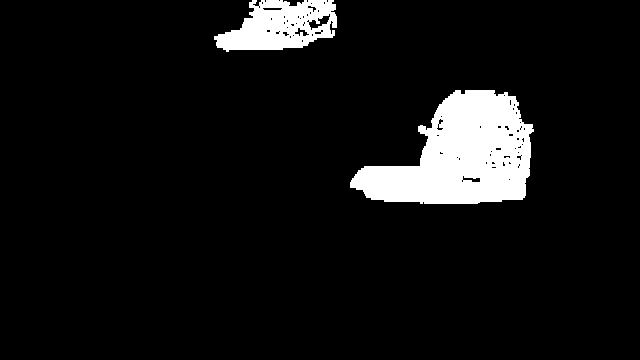

This example uses vision.ForegroundDetector to estimate the foreground pixels of a video sequence captured by a stationary camera. The detector models the background using Gaussian mixture models (GMMs) and produces a foreground mask that highlights the foreground objects, in this case moving cars.
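To make the idea of GMM-based background estimation concrete, the following is a minimal sketch of a per-pixel mixture model in plain Python. It is not the vision.ForegroundDetector implementation: it uses a simplified Stauffer-Grimson style update on grayscale values, and the names `PixelGMM` and `foreground_mask`, as well as every parameter and default value, are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Gaussian:
    weight: float
    mean: float
    var: float


class PixelGMM:
    """Mixture of Gaussians for one grayscale pixel (simplified, illustrative)."""

    def __init__(self, num_gaussians=3, learning_rate=0.05,
                 initial_var=900.0, min_var=4.0, min_background_ratio=0.7):
        self.k = num_gaussians
        self.alpha = learning_rate
        self.initial_var = initial_var
        self.min_var = min_var
        self.ratio = min_background_ratio
        self.modes = []

    def update(self, value):
        """Fold one intensity sample into the model; return True if foreground."""
        # Rank modes: high weight and low variance suggest background.
        self.modes.sort(key=lambda g: g.weight / g.var ** 0.5, reverse=True)
        matched, is_background, cumulative = None, False, 0.0
        for g in self.modes:
            # Top-ranked modes, up to the background ratio, form the background.
            in_background_set = cumulative < self.ratio
            cumulative += g.weight
            # A sample matches a mode if it lies within 2.5 standard deviations.
            if matched is None and (value - g.mean) ** 2 <= 6.25 * g.var:
                matched, is_background = g, in_background_set
        # Decay all weights and reinforce the matched mode.
        for g in self.modes:
            g.weight = (1 - self.alpha) * g.weight + (self.alpha if g is matched else 0.0)
        if matched is not None:
            diff = value - matched.mean
            matched.mean += self.alpha * diff
            matched.var = max(self.min_var,
                              matched.var + self.alpha * (diff * diff - matched.var))
        else:
            # No match: replace the lowest-ranked mode with a new, wide Gaussian.
            if len(self.modes) == self.k:
                self.modes.pop()
            self.modes.append(Gaussian(self.alpha, float(value), self.initial_var))
        total = sum(g.weight for g in self.modes)
        for g in self.modes:
            g.weight /= total
        return not is_background


def foreground_mask(models, frame):
    """Update every pixel model with one frame and return a boolean mask."""
    return [[models[r][c].update(v) for c, v in enumerate(row)]
            for r, row in enumerate(frame)]
```

In this sketch, a pixel that keeps the same intensity over many frames is absorbed into the background, while a sudden change (a car entering the pixel) fails to match any background mode and is flagged as foreground. The first frames act as a training period, so their masks are not meaningful.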

The Blob Analysis block then analyzes the foreground mask and produces bounding boxes around the cars. Finally, the number of cars and the bounding boxes are drawn onto the original video to display the final results.
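The blob analysis step can be sketched in the same way. The example below is an assumption-laden illustration, not the Blob Analysis block itself: the hypothetical function `find_blobs` labels 8-connected regions of a binary mask with a breadth-first search, discards regions smaller than an assumed `min_area`, and returns one bounding box per remaining region as `(x, y, width, height)`.

```python
from collections import deque


def find_blobs(mask, min_area=1):
    """Return bounding boxes (x, y, width, height) of 8-connected blobs."""
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Flood-fill a new blob starting from this unvisited foreground pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            top, bottom, left, right, area = r, r, c, c, 0
            while queue:
                y, x = queue.popleft()
                area += 1
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            # Small blobs are treated as noise and dropped.
            if area >= min_area:
                boxes.append((left, top, right - left + 1, bottom - top + 1))
    return boxes
```

Under these assumptions, the car count for a frame is simply `len(find_blobs(mask, min_area))`, and each returned box can be drawn onto the corresponding video frame. Choosing `min_area` is a trade-off: a larger value suppresses noise but can miss small or distant cars.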

Tracking Results

Prototype on a Xilinx Zynq Board

The algorithm in this example is suitable for an embedded software implementation. You can deploy it to an ARM™ processor using a Xilinx™ Zynq™ video processing reference design. See Tracking Cars with Zynq-Based Hardware (SoC Blockset).