Extract Training Data for Video Classification

This example shows how to extract labeled scenes from a collection of videos where each video contains multiple scene labels. The extracted scenes and their associated labels can be used for training or validating a video classifier. For more information on scene labels, see Get Started with the Video Labeler.

Download Training Videos and Scene Labels

This example uses a small collection of video files that were labeled using the Video Labeler app. Specify a location to store the videos and scene label data.

downloadFolder = fullfile(tempdir,'sceneLabels');
if ~isfolder(downloadFolder)
    mkdir(downloadFolder);
end

Download the training data using websave and unzip the contents to the downloadFolder.

downloadURL = "https://ssd.mathworks.com/supportfiles/vision/data/videoClipsAndSceneLabels.zip";
filename = fullfile(downloadFolder,"videoClipsAndSceneLabels.zip");
if ~exist(filename,'file')
    disp("Downloading the video clips and the corresponding scene labels to " + downloadFolder);
    websave(filename,downloadURL);
end

% Unzip the contents to the download folder.
unzip(filename,downloadFolder);

Create groundTruth objects to represent the labeled video files using the supporting function createGroundTruthForVideoCollection, listed at the end of this example.

gTruth = createGroundTruthForVideoCollection(downloadFolder);

Gather Video Scene Time Ranges and Labels

Gather all the scene time ranges and the corresponding scene labels using the sceneTimeRanges function.

[timeRanges, sceneLabels] = sceneTimeRanges(gTruth);

Here timeRanges and sceneLabels are M-by-1 cell arrays, where M is the number of ground truth objects. Each element of timeRanges is a T-by-2 duration matrix, where T is the number of time ranges in the corresponding ground truth object. Each row of the matrix corresponds to a time range in the ground truth data where a scene label was applied, specified in the form [rangeStart, rangeEnd]. For example, the first ground truth object, which corresponds to the video file video_0001.avi, contains four scenes with the labels "noAction", "wavingHello", "clapping", and "somethingElse".

[~,name,ext] = fileparts(string(gTruth(1).DataSource.Source));
firstVideoFilename = name + ext

firstVideoFilename = "video_0001.avi"

firstTimeRange = timeRanges{1}

firstTimeRange = 4×2 duration array
    15 sec     28.033 sec
    7.3 sec    15.033 sec
    0 sec      7.3333 sec
    28 sec     37.033 sec

firstSceneLabel = sceneLabels{1}

firstSceneLabel = 4×1 categorical array
    noAction
    wavingHello
    clapping
    somethingElse

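The row-wise pairing of time ranges and labels described above can be explored with a small self-contained sketch. It does not call sceneTimeRanges. Instead, it rebuilds the values displayed for video_0001.avi by hand, which is an assumption made so the snippet runs without the downloaded data. The variable names timeRange, sceneLabel, sceneLength, and sceneSummary are illustrative only.

```matlab
% Stand-ins for timeRanges{1} and sceneLabels{1}, copied by hand from the
% output displayed above (assumption: row k of each array describes the same scene).
timeRange = seconds([15 28.033; 7.3 15.033; 0 7.3333; 28 37.033]);
sceneLabel = categorical(["noAction"; "wavingHello"; "clapping"; "somethingElse"]);

% The length of each scene is rangeEnd minus rangeStart.
sceneLength = timeRange(:,2) - timeRange(:,1);

% Sort the rows chronologically by start time and collect them in a table.
[~,order] = sort(timeRange(:,1));
sceneSummary = table(sceneLabel(order),timeRange(order,1),timeRange(order,2), ...
    sceneLength(order),'VariableNames',{'Label','Start','End','Length'});
disp(sceneSummary)
```

With the real data, replacing the first two assignments with timeRange = timeRanges{1} and sceneLabel = sceneLabels{1} should give the same kind of summary for any ground truth object.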
Write Extracted Video Scenes

Use writeVideoScenes to write the extracted video scenes to disk and organize the written files based on the labels. Saving the video files to folders named after the scene labels makes the label information easy to recover when training a video classifier. To learn more about training a video classifier using the extracted video data, see Gesture Recognition using Videos and Deep Learning.

Select a folder in the download folder to write video scenes.

rootFolder = fullfile(downloadFolder,"videoScenes");

Video files are written to the folders specified by the folderNames input. Use the scene label names as folder names.

folderNames = sceneLabels;

Write video scenes to the "videoScenes" folder.

filenames = writeVideoScenes(gTruth,timeRanges,rootFolder,folderNames);

[==================================================] 100% Elapsed time: 00:01:47 Estimated time remaining: 00:00:00

The output filenames is an M-by-1 cell array of character strings that specifies the full path to the saved video scenes in each groundTruth object.

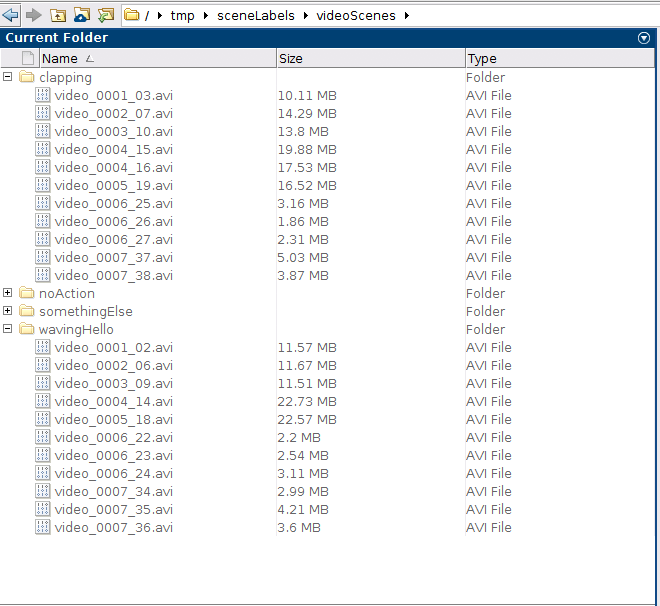

Note that the video files corresponding to a scene label are written to folders named by the scene label. For example, video scenes corresponding to the scene label "clapping" are written to the folder "videoScenes/clapping", and video scenes corresponding to the scene label "wavingHello" are written to the folder "videoScenes/wavingHello".
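Because each scene is saved in a folder named after its label, the labels can be recovered from the folder structure alone. The following is a minimal sketch of that idea, not part of the original example. It assumes that rootFolder points to the same "videoScenes" folder used above and that every file sits directly inside its label folder. The variable names listing, labels, and labelCounts are illustrative.

```matlab
% Assumption: same location as rootFolder in the example above.
rootFolder = fullfile(tempdir,'sceneLabels','videoScenes');

% List all files below rootFolder recursively and drop folder entries.
listing = dir(fullfile(rootFolder,'**','*'));
listing = listing(~[listing.isdir]);

% The name of each file's parent folder is taken as its scene label.
[~,labelNames] = cellfun(@fileparts,{listing.folder},'UniformOutput',false);
labels = categorical(labelNames(:));

% Count the number of written scenes per label.
labelCounts = table(categories(labels),countcats(labels), ...
    'VariableNames',{'Label','NumScenes'});
disp(labelCounts)
```

A count like this is a quick way to spot class imbalance before training. If the folder does not exist yet, the table is simply empty.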

The extracted video scenes can now be used for training and validating a video classifier. For more information about using the extracted data for training a video classifier, see Gesture Recognition using Videos and Deep Learning. For more information about using the extracted data for evaluating a video classifier, see Evaluate a Video Classifier.

Supporting Functions

createGroundTruthForVideoCollection

The createGroundTruthForVideoCollection function creates ground truth data for a given collection of videos and the corresponding label information.

function gTruth = createGroundTruthForVideoCollection(downloadFolder)
labelDataFiles = dir(fullfile(downloadFolder,"*_labelData.mat"));
labelDataFiles = fullfile(downloadFolder,{labelDataFiles.name}');
numGtruth = numel(labelDataFiles);

% Load the label data information and create ground truth objects.
gTruth = groundTruth.empty(numGtruth,0);
for ii = 1:numGtruth
    ld = load(labelDataFiles{ii});
    videoFilename = fullfile(downloadFolder,ld.videoFilename);
    gds = groundTruthDataSource(videoFilename);
    gTruth(ii) = groundTruth(gds,ld.labelDefs,ld.labelData);
end
end
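The supporting function expects each *_labelData.mat file to contain the variables videoFilename, labelDefs, and labelData. As a hedged sketch, the check below inspects the files without loading them and warns about any that lack one of these variables. It assumes the same download folder as the example; the names requiredVars, matFile, info, and missingVars are illustrative.

```matlab
% Assumption: same location as downloadFolder in the example above.
downloadFolder = fullfile(tempdir,'sceneLabels');
labelDataFiles = dir(fullfile(downloadFolder,'*_labelData.mat'));

% Variables that createGroundTruthForVideoCollection reads from each file.
requiredVars = {'videoFilename','labelDefs','labelData'};

for ii = 1:numel(labelDataFiles)
    matFile = fullfile(downloadFolder,labelDataFiles(ii).name);

    % List the variables stored in the MAT-file without loading their contents.
    info = whos('-file',matFile);
    missingVars = setdiff(requiredVars,{info.name});

    if ~isempty(missingVars)
        warning('%s is missing: %s',labelDataFiles(ii).name,strjoin(missingVars,', '));
    end
end
```

Running a check like this before creating the groundTruth objects can make a failure easier to diagnose when a label file is incomplete.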