Pothole Detection

This example extends the Generate Cartoon Images Using Bilateral Filtering example to include calculating a centroid and overlaying a centroid marker and text label on detected potholes.

Road hazard or pothole detection is an important part of any automated driving system. Previous work [1] on automated pothole detection defined a pothole as an elliptical area in the road surface that has a darker brightness level and different texture than the surrounding road surface. Detecting potholes using image processing then becomes the task of finding regions in the image of the road surface that fit the chosen criteria. You can use any or all of the elliptical shape, darker brightness, and texture criteria.

To measure the elliptical shape, you can use a voting algorithm such as Hough circle, a template matching algorithm, or linear algebra-based methods such as a least squares fit. To measure the brightness level, select a brightness segmentation value and compare each pixel against it. To assess the texture, calculate the spatial frequency in a region using techniques such as the FFT.

This example uses brightness segmentation with an area metric so that smaller defects are not detected. To find the center of the defect, this design calculates the centroid. The model overlays a marker on the center of the defect and overlays a text label on the image.

Download Input File

This example uses the potholes2.avi file as an input. The file is approximately 50 MB in size. Download the file from the MathWorks website and unzip the downloaded file.

potholeZipFile = matlab.internal.examples.downloadSupportFile('visionhdl','potholes2.zip');
[outputFolder,~,~] = fileparts(potholeZipFile);
unzip(potholeZipFile,outputFolder);
potholeVideoFile = fullfile(outputFolder,'potholes2');
addpath(potholeVideoFile);

Introduction

The PotHoleHDLDetector.slx system is shown. The PotHoleHDL subsystem contains the pothole detector and overlay algorithms and supports HDL code generation. There are four input parameters that control the algorithm. The ProcessorBehavioral subsystem writes character maps into a RAM for use as overlay labels.

modelname = 'PotHoleHDLDetector';
open_system(modelname);
set_param(modelname,'SampleTimeColors','on');
set_param(modelname,'SimulationCommand','Update');
set_param(modelname,'Open','on');
set(allchild(0),'Visible','off');

Overview of the FPGA Subsystem

The PotHoleHDL subsystem converts the RGB input video to intensity, then performs bilateral filtering and edge detection. The TrapezoidalMask subsystem selects the roadway area. Then the design applies a morphological close and calculates centroid coordinates for all potential potholes. The detector selects the largest pothole in each frame and saves the center coordinates. The Pixel Stream Aligner block matches the timing of the coordinates with the input stream. Finally, the Fiducial31x31 and the Overlay32x32 subsystems apply alpha channel overlays on the frame to add a pothole center marker and a text label.

open_system([modelname '/PotHoleHDL'],'force');

Input Parameter Values

The subsystem has four input parameters that can change while the system is running.

The gradient intensity parameter, Gradient Threshold, controls the edge detection part of the algorithm.

The Cartoon RGB parameter changes the color of the overlays, that is, the fiducial marker and the text.

The Area Threshold parameter sets the minimum number of marked pixels that the detection window must contain to be classified as a pothole. If this value is too low, the detector reports linear cracks and other defects that are not road hazards. If it is too high, the detector reports only the largest hazards.

The final parameter, Show Raw, allows you to debug the system more easily. It toggles the displayed image on which the overlays are drawn between the RGB input video and the binary image that the detector sees. Set this parameter to 1 to see how the detector is working.

All of these parameters work best if changes are only allowed on video frame boundaries. The FrameBoundary subsystem registers the parameters only on a valid start of frame.
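The effect of registering a parameter only at a frame boundary can be sketched in a few lines of MATLAB. This is a behavioral illustration, not the FrameBoundary implementation; the signal names and values are hypothetical.

```matlab
% Behavioral sketch: hold a parameter steady until the next start of frame.
vStart    = logical([1 0 0 0 1 0 0 0 1 0]);   % hypothetical start-of-frame flags, one per pixel
requested = [5 5 9 9 9 9 7 7 7 7];            % value requested by the user at each pixel time
held      = zeros(size(requested));           % value the algorithm actually sees
current   = 0;                                % register contents before the first frame
for k = 1:numel(requested)
    if vStart(k)
        current = requested(k);               % update only on a frame boundary
    end
    held(k) = current;
end
disp(held)
```

A change requested in the middle of a frame takes effect at the start of the next frame, so every pixel in a frame is processed with the same parameter value.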

open_system([modelname '/PotHoleHDL/FrameBoundary'],'force');

RGB to Intensity

The model splits the input RGB pixel stream so that a copy of the RGB stream continues toward the overlay blocks. The first step for the detector is to convert from RGB to intensity. Since the input data type for the RGB is uint8, the RGB to Intensity block automatically selects uint8 as the output data type.
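As a software reference, the conversion can be approximated with a weighted sum of the color channels. This sketch assumes the Rec. 601 luma weights; the block's internal fixed-point arithmetic and rounding may differ.

```matlab
% Tiny 2x2 test image: red, green, blue, and mid-gray pixels.
rgb = uint8(cat(3, [255 0; 0 128], [0 255; 0 128], [0 0; 255 128]));
w = [0.299 0.587 0.114];                      % assumed Rec. 601 luma weights
intensity = uint8(w(1)*double(rgb(:,:,1)) + ...
                  w(2)*double(rgb(:,:,2)) + ...
                  w(3)*double(rgb(:,:,3)));   % uint8 conversion rounds to nearest
disp(intensity)
```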

Bilateral Filter

The next step in the algorithm is to reduce high visual frequency noise and smaller road defects. There are many ways this can be accomplished but using a bilateral filter has the advantage of preserving edges while reducing the noise and smaller areas.

The Bilateral Filter block has parameters for the neighborhood size and two standard deviations, one for the spatial part of the filter and one for the intensity part of the filter. For this application a relatively large neighborhood of 9x9 works well. This model uses 3 and 0.75 for the standard deviations. You can experiment with these values later.
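To see why the filter preserves edges, consider the weights for a single 9x9 neighborhood. This sketch uses the standard bilateral formulation and assumes that intensities are scaled to [0,1]; the block's scaling and fixed-point details may differ, and the test window is hypothetical.

```matlab
N = 9; sigmaS = 3; sigmaI = 0.75;
[dx, dy] = meshgrid(-(N-1)/2:(N-1)/2);
spatialW = exp(-(dx.^2 + dy.^2) / (2*sigmaS^2));        % closeness in position

patch = 0.2*ones(N);                                    % hypothetical window: dark surface ...
patch(:, 6:end) = 0.9;                                  % ... next to a bright region
center = patch(5,5);
intensityW = exp(-(patch - center).^2 / (2*sigmaI^2));  % closeness in brightness

w = spatialW .* intensityW;                             % combined weight per pixel
filtered = sum(w(:) .* patch(:)) / sum(w(:));           % normalized weighted average
fprintf('center %.2f -> filtered %.3f\n', center, filtered);
```

Pixels on the far side of the edge receive a lower weight, so the result stays closer to the center value than a purely spatial Gaussian blur would. A smaller intensity standard deviation strengthens this effect.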

Sobel Edge Detection

The filtered image is then sent to the Sobel Edge Detector block which finds the edges in the image and returns those edges that are stronger than the gradient threshold parameter. The output is a binary image. In your final application, this threshold can be set based on variables such as road conditions, weather, image brightness, etc. For this model, the threshold is an input parameter to the PotHoleHDL subsystem.
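A minimal software sketch of thresholded Sobel edge detection follows. The test image and threshold value are hypothetical, and the block may compute the gradient magnitude differently.

```matlab
img = zeros(8); img(:, 5:end) = 200;          % hypothetical image with one vertical edge
sx = [-1 0 1; -2 0 2; -1 0 1];                % Sobel kernel, horizontal gradient
sy = sx';                                     % Sobel kernel, vertical gradient
gx = conv2(img, sx, 'valid');                 % 'valid' skips the image border
gy = conv2(img, sy, 'valid');
gradThreshold = 400;                          % hypothetical threshold value
edges = (gx.^2 + gy.^2) > gradThreshold^2;    % compare squared magnitudes, no square root needed
disp(edges)
```

The output is a 6x6 binary image in which only the two columns that straddle the step are marked. Raising the threshold above the step response (800 for this image) removes the edge entirely.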

Trapezoidal Mask

From the binary edge image, you need to remove any edges that are not relevant to pothole detection. A good strategy is to use a mask that selects a polygonal region of interest and makes the area outside of that black. The model does not use a normal ROI block since that would remove the location context that you need later for the centroid calculation and labeling.

The order of operations also matters here because if you used the mask before edge detection, the edges of the mask would become strong lines that would result in false positives at the detector.

In the input video, the area in which the vehicle might encounter a pothole is limited to the roadway immediately in front of it and a trapezoidal section of roadway ahead. The exact coordinates depend on the camera mounting and lens. This example uses fixed coordinates for left-side top, right-side top, left-side bottom, and right-side bottom corners of the area. For this video, the top and bottom of the trapezoidal area are not parallel so this is not a true trapezoid.

The mask consists of straight lines between the corners, connecting left,right and top,bottom.

      ltc---rtc
     /         \
    /           \
   /             \
lbc-------------rbc

This example uses polyfit to determine a straight-line fit from corner to corner. For ease of implementation, the design calls polyfit with the vertical direction as the independent variable. This usage calculates x = f(y) instead of the more usual y = f(x). Using polyfit this way allows you to use a y-direction line counter as the input address of a lookup table of x-coordinates of the start (left) and end (right) of the area of interest on each line.

The lookup table is typically implemented in a BRAM in an FPGA, so it should be addressed with 0-based addressing. The model converts from MATLAB 1-based addressing to 0-based addressing just before the LUTs. To further reduce the size of the lookup table, the address is offset by the starting line of the trapezoid. In order to get good synthesis results, match typical block RAM registering in FPGAs by using a register after the lookup table. This register also adds some modest pipelining to the design.

For the 320x180 image:

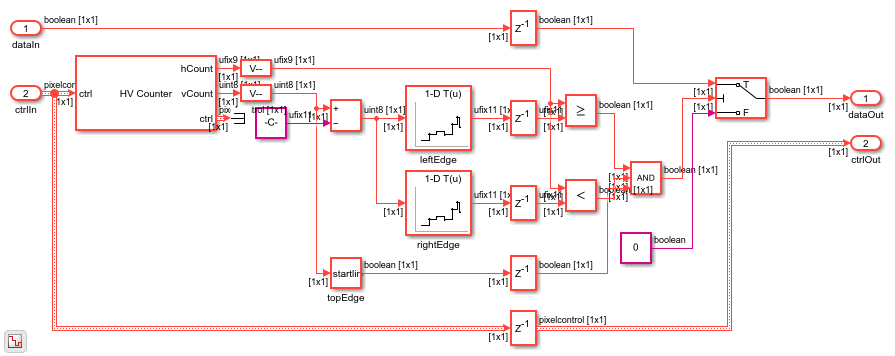

raster = [320,180];
ltc = [155, 66];
lbc = [  1,140];
rtc = [155, 66];
rbc = [285,179];
% fit to x = f(y) for convenient LUT indexing
abl = polyfit([lbc(2),ltc(2)],[lbc(1),ltc(1)],1); % left side
abr = polyfit([rbc(2),rtc(2)],[rbc(1),rtc(1)],1); % right side
leftxstart = max(1,round((ltc(2):rbc(2))*abl(1)+abl(2)));
rightxend = min(raster(1),round((ltc(2):rbc(2))*abr(1)+abr(2)));
startline = min(ltc(2),rtc(2));
endline = max(lbc(2),rbc(2));
% correct to zero-based addressing
leftxstart = leftxstart - 1;
rightxend = rightxend - 1;
startline = startline - 1;
endline = endline - 1;
open_system([modelname '/PotHoleHDL/TrapezoidalMask'],'force');
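The following behavioral sketch shows how a line counter and the two lookup tables can produce the mask. It recomputes the tables from the corner values above so that it runs on its own. The loop and the mask variable are illustrative and are not taken from the TrapezoidalMask subsystem.

```matlab
raster = [320,180];
ltc = [155, 66]; lbc = [1,140]; rtc = [155, 66]; rbc = [285,179];
abl = polyfit([lbc(2),ltc(2)],[lbc(1),ltc(1)],1);
abr = polyfit([rbc(2),rtc(2)],[rbc(1),rtc(1)],1);
leftxstart = max(1,round((ltc(2):rbc(2))*abl(1)+abl(2))) - 1;          % zero-based
rightxend  = min(raster(1),round((ltc(2):rbc(2))*abr(1)+abr(2))) - 1;  % zero-based
startline  = min(ltc(2),rtc(2)) - 1;
endline    = max(lbc(2),rbc(2)) - 1;

mask = false(raster(2), raster(1));       % rows are lines, columns are pixels
for y = 0:raster(2)-1                     % zero-based line counter
    if y >= startline && y <= endline
        addr = y - startline;             % LUT address, offset by the first masked line
        x0 = leftxstart(addr+1);          % +1 only because MATLAB indexing is one-based
        x1 = rightxend(addr+1);
        mask(y+1, (x0:x1)+1) = true;      % pixels between left start and right end pass
    end
end
fprintf('%d of %d pixels are inside the mask\n', nnz(mask), numel(mask));
```

Combining this mask with the binary edge image using a logical AND keeps only the edges inside the roadway region while preserving their coordinates in the frame.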

Morphological Closing

Next the design uses the morphological Closing block to remove or close in small features. Closing works by first doing dilation and then erosion, and helps to remove small features that are not likely to be potholes. Specify a neighborhood on the block mask that determines how small or large a feature you want to remove. This model uses a 5x5 neighborhood, similar to a disk, so that small features are closed in.

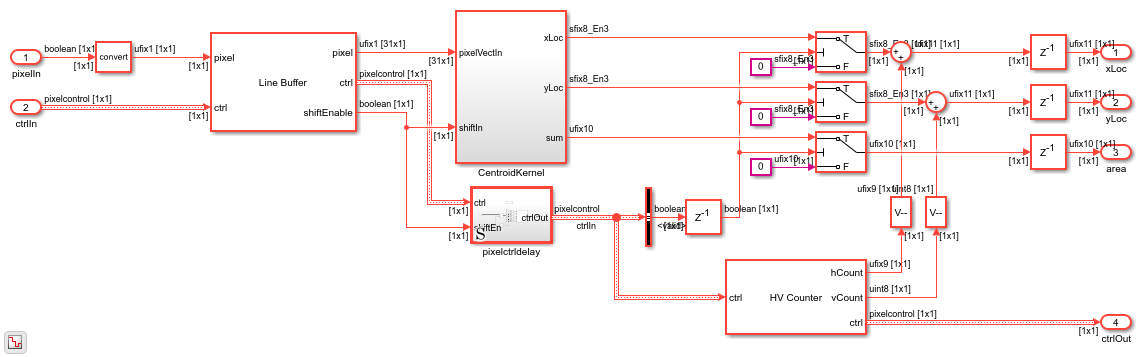
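The dilate-then-erode sequence can be sketched for a binary image using conv2. The disk-like neighborhood and the test image here are hypothetical; the neighborhood configured in the model may differ.

```matlab
% Hypothetical disk-like 5x5 neighborhood (symmetric, so kernel flipping has no effect).
nhood = [0 1 1 1 0; 1 1 1 1 1; 1 1 1 1 1; 1 1 1 1 1; 0 1 1 1 0];

bw = false(15); bw(5:11, 5:11) = true;    % a filled blob ...
bw(8,8) = false;                          % ... with a one-pixel hole

dilated = conv2(double(bw), nhood, 'same') > 0;                     % dilation: any neighbor set
closed  = conv2(double(dilated), nhood, 'same') == sum(nhood(:));   % erosion: all neighbors set
fprintf('hole before: %d, after: %d\n', bw(8,8), closed(8,8));
```

The hole is filled and the outline of the blob is unchanged, which is the behavior that makes closing useful for consolidating edge fragments into solid regions before the centroid step.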

Centroid

The centroid calculation finds the center of an active area. The design continuously computes the centroid of the marked area in each 31x31 pixel region. It only stores the center coordinates when the detected area is larger than an input parameter. This is a common difference between hardware and software systems: when designing hardware for FPGAs it is often easier to compute continuously but only store the answer when you need it, as opposed to calling functions as-needed in software.

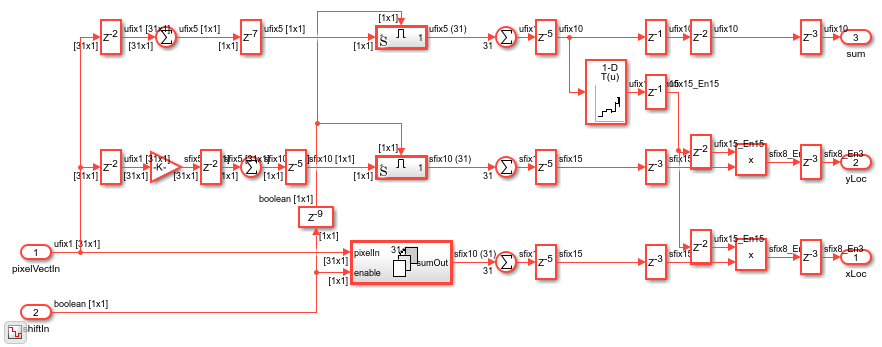

For a centroid calculation, you need to compute three things from the region of the image: the weighted sum of the pixels in the horizontal direction, the weighted sum in the vertical direction, and the overall sum of all the pixels which corresponds to the area of the marked portion of the region. The Line Buffer block selects regions of 31x31 pixels, and returns them one column at a time. The algorithm uses the column to compute vertical weights, and total weights. For the horizontal weights, the design combines the columns to obtain a 31x31 kernel. You can choose the weights depending on what you want "center" to mean. This example uses -15:15 so that the center of the 31x31 region is (0,0) in the computed result.

The Vision HDL Toolbox™ blocks force the output data to zero when the output is not valid, as indicated in the pixelcontrol bus output. While not strictly required, this behavior makes testing and debugging much easier. To accomplish this behavior for the centroid results, the model uses Switch (Simulink) blocks with a Constant (Simulink) block set to 0.

Since you want the center of the detected region to be relative to the overall image coordinate system, add the horizontal and vertical pixel count to the calculated centroid.
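The three sums and the final coordinate offset can be sketched as follows. The window contents and the counter values are hypothetical, and the division is written directly rather than as a hardware-friendly reciprocal.

```matlab
region = false(31);                 % hypothetical 31x31 binary window
region(10:12, 20:22) = true;        % marked pixels: rows 10-12, columns 20-22
w = -15:15;                         % weights that place (0,0) at the window center

area = sum(region(:));              % total number of marked pixels
xSum = sum(region, 1) * w';         % column sums, weighted horizontally
ySum = w * sum(region, 2);          % row sums, weighted vertically
cx = xSum / area;                   % centroid relative to the window center
cy = ySum / area;

hCount = 100; vCount = 60;          % hypothetical frame position of the window center
centroidX = hCount + cx;            % centroid in frame coordinates
centroidY = vCount + cy;
fprintf('area %d, centroid (%g, %g)\n', area, centroidX, centroidY);
```

The area value computed here is the same quantity that the detector later compares against the Area Threshold parameter.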

open_system([modelname '/PotHoleHDL/Centroid31'],'force');

open_system([modelname '/PotHoleHDL/Centroid31/CentroidKernel'],'force');

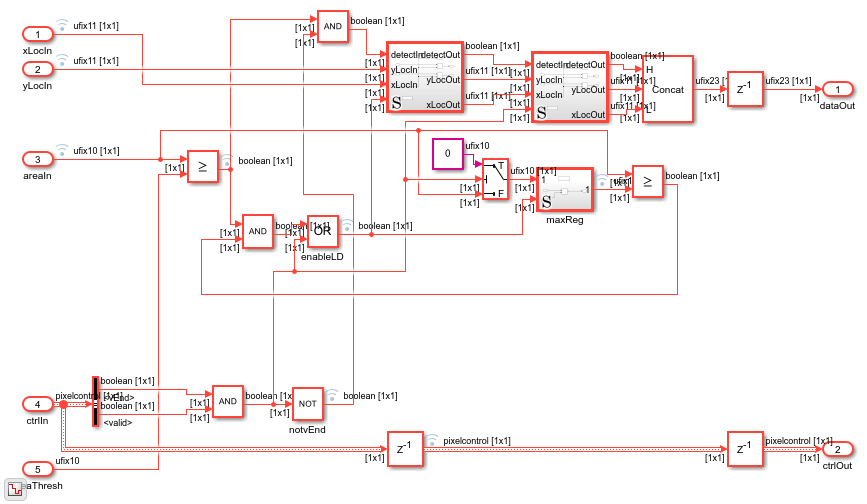

Detect and Hold

The detector operates on the total area sum from the centroid. The detector itself is very simple: compare the centroid area value to the threshold parameter, and find the largest area that is larger than the threshold. The model logic compares a stored area value to the current area value and stores a new area when the input is larger than the currently stored value. By using > or >= you can choose the earliest value over the threshold or the latest value over the threshold. The model stores the latest value because later values are closer to the camera and vehicle. When the detector stores a new winning area value, it also updates the X and Y centroid values that correspond to that area. These coordinates are then passed to the alignment and overlay parts of the subsystem.
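The compare-and-store logic can be sketched as a loop over the area values seen within one frame. The values are hypothetical; the point is the effect of using >= rather than >.

```matlab
areaThreshold = 20;                       % hypothetical Area Threshold value
areas = [10 50 50 30];                    % hypothetical area values within one frame
xs    = [11 22 33 44];                    % centroid X for each area value
ys    = [ 5  6  7  8];                    % centroid Y for each area value

best = struct('area', 0, 'x', 0, 'y', 0, 'valid', false);
for k = 1:numel(areas)
    % >= keeps the latest of equal maxima; > would keep the earliest
    if areas(k) > areaThreshold && areas(k) >= best.area
        best = struct('area', areas(k), 'x', xs(k), 'y', ys(k), 'valid', true);
    end
end
disp(best)
```

With two equal maxima of 50, the stored coordinates are those of the later one, (33, 7). In a streaming design the stored values would also be cleared at the start of each frame.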

To pass the X, Y, and valid indication to the alignment algorithm, pack the values into one 23-bit word. The model unpacks them once they are aligned in time with the input frames for overlay.
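Packing and unpacking can be sketched with bit operations. The example does not state how the 23 bits are divided, so the layout below (1 valid bit, 11 bits for Y, 11 bits for X) is an assumption made only for illustration.

```matlab
% ASSUMED layout, not stated in the example: [valid | Y (11 bits) | X (11 bits)]
x = uint32(155); y = uint32(66); valid = uint32(1);
word = bitor(bitor(bitshift(valid, 22), bitshift(y, 11)), x);   % pack into one word

fieldMask = uint32(2^11 - 1);                          % selects one 11-bit field
xOut     = bitand(word, fieldMask);                    % low 11 bits
yOut     = bitand(bitshift(word, -11), fieldMask);     % next 11 bits
validOut = bitshift(word, -22);                        % top bit
fprintf('word = %d -> x = %d, y = %d, valid = %d\n', word, xOut, yOut, validOut);
```

Sending a single word through the aligner keeps the three values together, so they cannot drift apart in time.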

open_system([modelname '/PotHoleHDL/DetectAndHold'],'force');

Pixel Stream Aligner

The Pixel Stream Aligner block takes the streaming information from the detector and sends it and the original RGB pixel stream to the overlay subsystems. The aligner compensates for the processing delay added by all the previous parts of the detection algorithm, without having to know anything about the latency of those blocks. If you later change a neighborhood size or add more processing, the aligner can compensate. If the total delay exceeds the Maximum number of lines parameter of the Pixel Stream Aligner block, adjust the parameter.

Fiducial Overlay

The fiducial marker is a square reticle represented as a 31-element array of 31-bit fixed-point numbers. This representation is convenient because a single read returns the whole word of overlay pixels for each line.

The diagram shows the overlay pattern by converting the fixed-point data to binary. This pattern can be anything you wish within the 31x31 size in this design.

load fiducialROM31x31.mat
crosshair = bin(fiducialROM);
crosshair(crosshair=='0') = ' ' % change '0' to space for better display

crosshair =

  31×31 char array

    '               1               '
    '               1               '
    '               1               '
    '               1               '
    '    11111111111111111111111    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1                     1    '
    '    1                     1    '
    '    1                     1    '
    '111111111111       111111111111'
    '    1                     1    '
    '    1                     1    '
    '    1                     1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    1          1          1    '
    '    11111111111111111111111    '
    '               1               '
    '               1               '
    '               1               '
    '               1               '

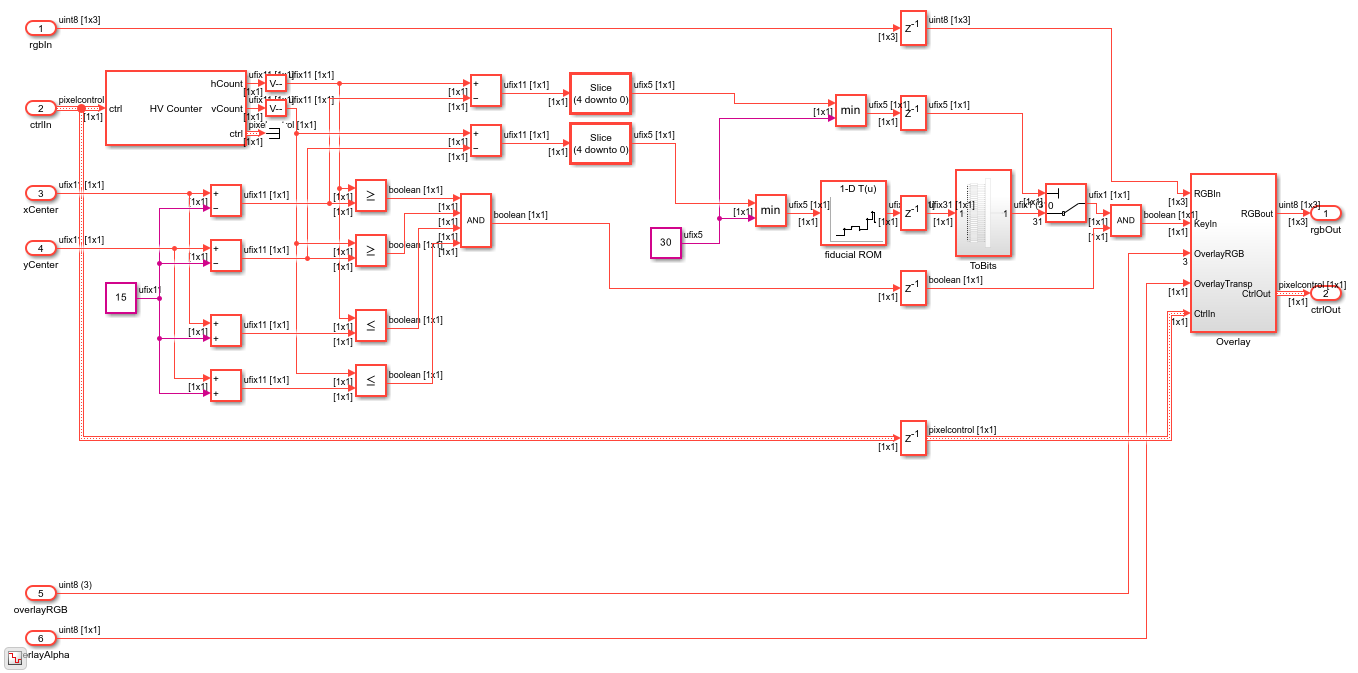

The fiducial overlay subsystem has a horizontal and vertical counter with a set of four comparators that uses the center of the detected area as the center of the region for the marker. The marker data is used as a binary switch that turns on alpha channel overlay. The alpha value is a fixed transparency parameter applied as a gain on the binary Detect signal when it is unpacked, in the ExpandData subsystem.

open_system([modelname '/PotHoleHDL/Fiducial31x31'],'force');

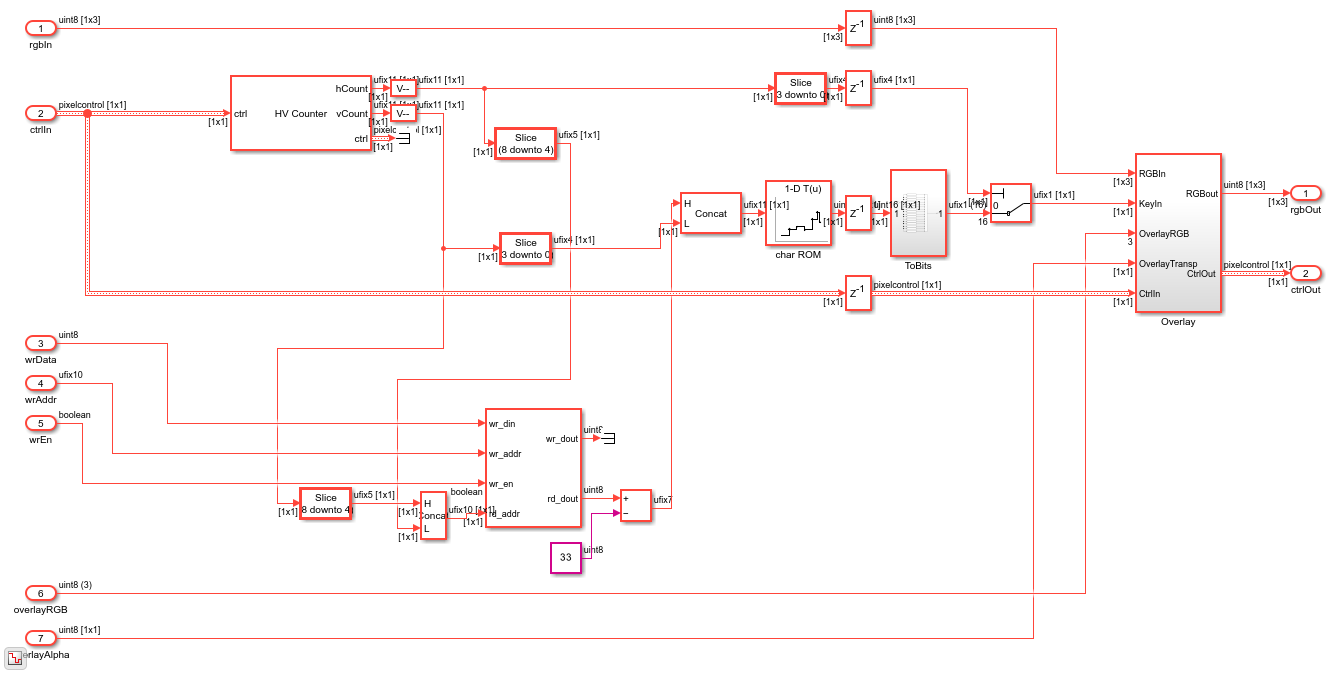
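The window comparison, ROM lookup, and alpha blend can be sketched in software as follows. The pattern is a plain square outline standing in for fiducialROM, and the center, pixel position, colors, and alpha value are all hypothetical.

```matlab
% Hypothetical 31x31 pattern standing in for fiducialROM: a square outline.
pattern = false(31);
pattern([5 27], 5:27) = true;
pattern(5:27, [5 27]) = true;
rom = zeros(31, 1, 'uint32');
for r = 1:31
    rom(r) = uint32(sum(2.^(30:-1:0) .* pattern(r,:)));   % one 31-bit word per line, MSB = leftmost pixel
end

cx = 100; cy = 60;                 % detected center (hypothetical)
h = 89; v = 49;                    % current pixel position from the counters
inWindow = h >= cx-15 && h <= cx+15 && v >= cy-15 && v <= cy+15;   % four comparators
markerOn = false;
if inWindow
    row = v - (cy-15) + 1;         % which ROM word to read
    col = h - (cx-15) + 1;         % which pixel within that word
    markerOn = logical(bitget(rom(row), 32 - col));   % bit 31 holds the leftmost pixel
end

alpha = 0.5;                       % hypothetical fixed transparency
pixel = [40 40 40]; color = [255 0 0];
a = alpha * markerOn;              % the marker bit switches the overlay on or off
out = uint8(a*color + (1 - a)*pixel);
disp(out)
```

Because each ROM word holds a full line of the marker, the design needs only one read per video line inside the window.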

Character Overlay

The character font ROM for the on-screen display stores data in a manner similar to the fiducial ROM described above. Each 16-bit fixed-point number represents 16 consecutive horizontal pixels. The character maps are 16x16.

Since the character data would typically be written by a CPU in ASCII, the simplest way is to store the character data under 8-bit ASCII addresses in a dual-port RAM. The font ROM stores ASCII characters 33 ("!") to 122 ("z"). The design offsets the address by 33.
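The address arithmetic can be checked with a short calculation. This sketch assumes the character maps are stored contiguously, 16 rows per character, which is consistent with the charROM16x16(529:544) range used for the letter B in this example.

```matlab
ch = 'B';
firstChar = 33;                                 % the ROM starts at ASCII 33 ('!')
rowsPerChar = 16;                               % each character map has 16 lines
base = (double(ch) - firstChar) * rowsPerChar;  % zero-based address of the first line
rows = base + (1:rowsPerChar);                  % one-based MATLAB indices
fprintf('%c occupies rows %d:%d\n', ch, rows(1), rows(end));
```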

The font ROM was constructed from a public domain fixed width font with a few edits to improve readability. As in the fiducial marker, the character ROM data is used as a binary switch that turns on alpha channel overlay. The character alpha value is a fixed transparency parameter applied as a gain on the Detect signal when it is unpacked, in the ExpandData subsystem.

To visualize the character B in the font ROM, display it in binary.

load charROM16x16.mat
letterB = bin(charROM16x16(529:544)); % character array
letterB(letterB=='0')=' ' % remove '0' chars for better display

letterB =

16×16 char array

' '

' 111111111 '

' 11111111111 '

' 111 111 '

' 111 111 '

' 111 111 '

' 111 111 '

' 1111111111 '

' 111111111 '

' 111 111 '

' 111 111 '

' 111 111 '

' 111 111 '

' 111 1111 '

' 11111111111 '

' 111111111 '

open_system([modelname '/PotHoleHDL/Overlay32x32'],'force');

Viewing Detector Raw Image

When you work with a complicated algorithm, viewing intermediate steps in the processing can be very helpful for debugging and exploration. In this model, you can set the boolean Show Raw parameter to 1 (true) to display the result of morphological closing of the binary image, with the overlay of the detected results. To convert the binary image for use with the 8-bit RGB overlay, the model multiplies the binary value by 255 and uses that value on all three color channels.
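The binary-to-RGB conversion is a one-line operation in software. The input image here is a hypothetical 2x2 example.

```matlab
bw = logical([1 0; 0 1]);             % hypothetical binary detector image
gray = uint8(bw) * 255;               % 0 stays 0, 1 becomes 255
rawRGB = cat(3, gray, gray, gray);    % same value on all three color channels
disp(size(rawRGB))
```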

HDL Code Generation

To check and generate the HDL code referenced in this example, you must have an HDL Coder™ license.

To generate the HDL code, use the following command.

makehdl('PotHoleHDLDetector/PotHoleHDL')

To generate the test bench, use the following command. Note that test bench generation takes a long time due to the large data size. You may want to reduce the simulation time before generating the test bench.

makehdltb('PotHoleHDLDetector/PotHoleHDL')

The part of this model that you can implement on an FPGA is the part between the Frame To Pixels and Pixels To Frame blocks. That is the subsystem called PotHoleHDL, which includes all the elements of the detector.

Simulation in an HDL Simulator

Now that you have HDL code, you can simulate it in your HDL Simulator. The automatically generated test bench allows you to prove that the Simulink simulation and the HDL simulation match.

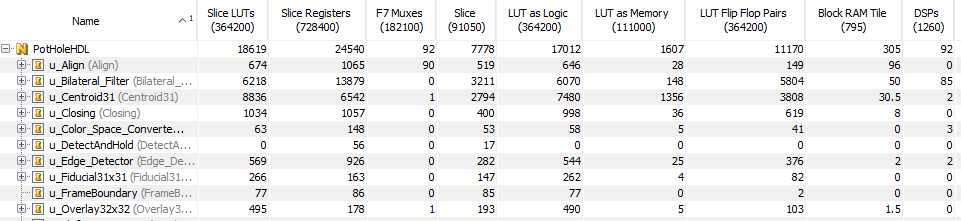

Synthesis for an FPGA

You can also synthesize the generated HDL code in an FPGA synthesis tool, such as AMD® Vivado®. In an AMD Virtex-7 FPGA (xc7v585tffg1157-1), the design achieves a clock rate of over 150 MHz.

The utilization report shows that the bilateral filter, pixel stream aligner, and centroid functions consume most of the resources in this design. The bilateral filter requires the most DSPs. The centroid implementation is quite efficient and uses only two DSPs. Centroid calculation also requires a reciprocal lookup table and so uses a large number of LUTs as memory.

Going Further

This example shows one possible implementation of an algorithm for detecting potholes. You can extend this design in the following ways:

The gradient threshold could be computed from the average brightness using a gray-world model.

The trapezoidal mask block could be made "steerable" by looking at the vehicle wheel position and adjusting the linear fit for the sloping sides of the mask.

The detector could be made more robust by looking at the average brightness of the RGB or intensity image relative to the surrounding pavement since potholes are typically darker in intensity than the surrounding area.

The visual frequency spectrogram of the pothole could also be used to look for specific types of surfaces in potholes.

The detection area threshold value could be computed using average intensity in the trapezoidal roadway region.

Multiple potholes could be detected in one frame by storing the top N responses rather than only the maximum detected response. The fiducial marker subsystem would need to be redesigned slightly to allow for overlapping markers.

Conclusion

This model shows how a pothole detection algorithm can be implemented in an FPGA. Many useful parts of this detector can be reused in other applications, such as the centroid block and the fiducial and character overlay blocks.

References

[1] Koch, Christian, and Ioannis Brilakis. "Pothole detection in asphalt pavement images." Advanced Engineering Informatics 25, no. 3 (2011): 507-15. doi:10.1016/j.aei.2011.01.002.

[2] Omanovic, Samir, Emir Buza, and Alvin Huseinovic. "Pothole Detection with Image Processing and Spectral Clustering." 2nd International Conference on Information Technology and Computer Networks (ICTN '13), Antalya, Turkey. October 2013.

See Also

Functions

imbilatfilt (Image Processing Toolbox) | edge (Image Processing Toolbox) | imclose (Image Processing Toolbox)