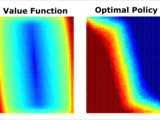

Creates a Markov Decision Process (MDP) model of a pendulum, then finds the optimal swing-up policy.

Takes a single pendulum with a torque actuator and models it as a Markov Decision Process (MDP), using linear barycentric interpolation over a uniform grid. Value iteration is then used to compute the optimal policy, which is displayed as a plot. Finally, a simulation is run to demonstrate how to evaluate the optimal policy.
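The pipeline described above can be sketched in a few dozen lines of Python. This is not a port of the original MATLAB code. The dynamics (unit-mass pendulum, semi-implicit Euler step), the quadratic cost, the three torque levels, the grid size, and every function name (`barycentric`, `build_mdp`, `value_iteration`, `simulate`, `grid_state`) are assumptions made for illustration. Each grid cell is split into two triangles along its diagonal, so a continuous next state becomes a convex combination of three grid points. Those weights serve as the MDP transition probabilities, and value iteration then minimizes the discounted cost.

```python
import math

# Hypothetical parameters chosen for this sketch (not from the original code).
G_OVER_L = 9.81            # gravity / length, unit mass assumed
DT = 0.05                  # duration of one MDP transition [s]
U_MAX = 3.0                # torque limit per unit inertia; below G_OVER_L, so it must swing
ACTIONS = (-U_MAX, 0.0, U_MAX)
N_TH, N_W = 30, 21         # grid points in angle (periodic) and rate (clamped)
W_MAX = 10.0               # rate limit of the grid [rad/s]
GAMMA = 0.98               # discount factor
D_TH = 2 * math.pi / N_TH
D_W = 2 * W_MAX / (N_W - 1)


def wrap(th):
    """Map an angle to [-pi, pi)."""
    return (th + math.pi) % (2 * math.pi) - math.pi


def index(i, j):
    """Flatten grid coordinates; the angle index wraps around."""
    return (i % N_TH) * N_W + j


def barycentric(th, w):
    """Return [(state, weight), ...] for the grid triangle containing (th, w)."""
    x = (wrap(th) + math.pi) / D_TH                   # angle coordinate, index() wraps it
    y = (min(max(w, -W_MAX), W_MAX) + W_MAX) / D_W    # clamped, in [0, N_W - 1]
    i, j = int(x), min(int(y), N_W - 2)
    a, b = x - i, y - j                               # offsets inside the cell
    if a >= b:  # lower triangle: (i, j), (i+1, j), (i+1, j+1)
        return [(index(i, j), 1 - a), (index(i + 1, j), a - b),
                (index(i + 1, j + 1), b)]
    # upper triangle: (i, j), (i, j+1), (i+1, j+1)
    return [(index(i, j), 1 - b), (index(i, j + 1), b - a),
            (index(i + 1, j + 1), a)]


def step(th, w, u):
    """Semi-implicit Euler step; th = 0 hangs down, th = pi is upright."""
    w_next = w + DT * (-G_OVER_L * math.sin(th) + u)
    return wrap(th + DT * w_next), w_next


def cost(th, w, u):
    """Quadratic penalty on distance from upright, rate, and torque."""
    e = wrap(th - math.pi)
    return DT * (e * e + 0.1 * w * w + 0.01 * u * u)


def grid_state(s):
    """Continuous (th, w) at grid point s."""
    return -math.pi + (s // N_W) * D_TH, -W_MAX + (s % N_W) * D_W


def build_mdp():
    """model[s][k] = (cost, [(next_state, prob), ...]) for action ACTIONS[k]."""
    model = []
    for s in range(N_TH * N_W):
        th, w = grid_state(s)
        model.append([(cost(th, w, u), barycentric(*step(th, w, u)))
                      for u in ACTIONS])
    return model


def value_iteration(model, tol=1e-4, max_iter=2000):
    """Minimize discounted cost; return values and the greedy policy of the last sweep."""
    V = [0.0] * len(model)
    for _ in range(max_iter):
        Q = [[c + GAMMA * sum(p * V[t] for t, p in trans) for c, trans in row]
             for row in model]
        V_new = [min(q) for q in Q]
        delta = max(abs(a - b) for a, b in zip(V_new, V))
        V = V_new
        if delta < tol:
            break
    policy = [ACTIONS[q.index(min(q))] for q in Q]
    return V, policy


def simulate(policy, th=0.0, w=0.0, steps=200):
    """Roll out the continuous dynamics, interpolating the tabulated torque."""
    traj = [(th, w)]
    for _ in range(steps):
        u = sum(p * policy[s] for s, p in barycentric(th, w))
        th, w = step(th, w, u)
        traj.append((th, w))
    return traj


if __name__ == "__main__":
    V, policy = value_iteration(build_mdp())
    th_end, w_end = simulate(policy)[-1]
    print(f"final angle {th_end:.2f} rad, rate {w_end:.2f} rad/s")
```

Interpolating the torque during simulation is one way to evaluate a tabulated policy at off-grid states. Picking the action stored at the nearest grid point is a simpler alternative.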

Cite As

Matthew Kelly (2026). Markov Decision Process - Pendulum Control (https://github.com/MatthewPeterKelly/MDP_Pendulum), GitHub. Retrieved .

General Information

- Version 1.4.0.0 (23.3 KB)

MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux

| Version | Release Notes |
|---|---|
| 1.4.0.0 | Changed name. |
| 1.3.0.0 | Updated photo. |
| 1.2.0.0 | Added a photo. |
| 1.1.0.0 | Improved error handling regarding the MEX functions. |
| 1.0.0.0 | |