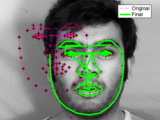

Use active shape models from Cootes et al. to locate faces (or other objects) in images.

Run the Example_FindFace.m script for a walk-through of how to use the code. The repository contains a shape model and a gray-level model trained on images from the data set listed below, as well as a single example face. It also includes code for manually labeling new images and for training new shape and gray-level models, so with suitable training data it can be used to locate objects other than faces. I welcome any feedback you may have. Enjoy!
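
For readers new to the technique, the idea behind a shape model can be sketched in a few lines. The example below is not code from this repository (which is written in MATLAB) and does not reflect its function names. It is a minimal, standard-library Python illustration under simplifying assumptions: shapes are already aligned, each shape is a flat list `[x1, y1, x2, y2, ...]`, only the first mode of variation is kept, and the conventional limit of three standard deviations is applied. All function names and the toy training data are hypothetical. The gray-level model and the iterative image search are omitted.

```python
import math

def mean_shape(shapes):
    """Average each coordinate across all (pre-aligned) training shapes."""
    n = len(shapes)
    return [sum(s[i] for s in shapes) / n for i in range(len(shapes[0]))]

def covariance(shapes, mean):
    """Sample covariance matrix of the flattened shape vectors."""
    n, d = len(shapes), len(mean)
    devs = [[s[i] - mean[i] for i in range(d)] for s in shapes]
    return [[sum(dv[i] * dv[j] for dv in devs) / (n - 1) for j in range(d)]
            for i in range(d)]

def first_mode(cov, iterations=100):
    """Leading eigenvector and eigenvalue of cov via power iteration."""
    d = len(cov)
    v = [float(i + 1) for i in range(d)]  # arbitrary non-zero start vector
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:  # no variation in the training set
            return [0.0] * d, 0.0
        v = [x / norm for x in w]
    cv = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
    eigenvalue = sum(v[i] * cv[i] for i in range(d))  # Rayleigh quotient
    return v, eigenvalue

def constrain(shape, mean, mode, eigenvalue, limit=3.0):
    """Project a candidate shape onto the mode and clamp the shape
    parameter b to +/- limit standard deviations of the training data."""
    b = sum(m * (x - mu) for m, x, mu in zip(mode, shape, mean))
    bound = limit * math.sqrt(eigenvalue)
    b = max(-bound, min(bound, b))
    return [mu + m * b for mu, m in zip(mean, mode)]

# Toy training set: two landmarks, the second one slides along the x axis.
training = [[0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 2.0, 0.0],
            [0.0, 0.0, 3.0, 0.0]]
mean = mean_shape(training)
mode, eigenvalue = first_mode(covariance(training, mean))

# An implausible candidate is pulled back to the edge of the allowed range.
plausible = constrain([0.0, 0.0, 10.0, 0.0], mean, mode, eigenvalue)
```

In this toy case the second landmark of the candidate shape is moved back toward the range seen during training. A full active shape model keeps several modes and alternates this constraint step with a search along gray-level profiles around each landmark.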

Version 2.0 supports the MUCT landmark arrangement:

http://www.milbo.org/muct/

The simple landmark arrangement that I labeled is still supported. The faces I used for manual labeling are available here:

http://robotics.csie.ncku.edu.tw/Databases/FaceDetect_PoseEstimate.htm#Our_Database_

If you have a question or suggestion for the project, please open an Issue on GitHub. I will be much more likely to see your question there.

https://github.com/johnwmillr/ActiveShapeModels/issues

Cite As

John W. Miller (2026). Face detection with Active Shape Models (ASMs) (https://github.com/johnwmillr/ActiveShapeModels), GitHub. Retrieved .

Acknowledgements

Inspired by: Active Shape Model (ASM) and Active Appearance Model (AAM)

General Information

- Version 2.0.1.1 (14.6 MB)

View License on GitHub

MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux

Versions that use the GitHub default branch cannot be downloaded

| Version | Release Notes |
|---|---|
| 2.0.1.1 | Added a note about opening Issues on GitHub. |
| 2.0.1.0 | Updated description. MUCT landmarks now supported. |
| 2.0.0.0 | Version 2.0 supports the MUCT landmark arrangement. |
| 1.0.0.0 | Changed title to "Face detection with Active Shape Models (ASMs)." |