Implementing a Cyber-Physical System with MATLAB and Model-Based Design

By Pieter J. Mosterman, MathWorks, and Justyna Zander, NVIDIA Corporation

When a hurricane, earthquake, or other natural disaster strikes, lives depend on the rapid delivery of supplies and assistance. To prioritize requests for help, assemble a plan, and send help where it’s needed, emergency responders must be able to adapt to changing conditions, complete their missions autonomously, and collaborate with other individuals and teams.

Those capabilities (to adapt, act autonomously, and collaborate) are the hallmarks of cyber-physical systems (CPS). Cyber-physical systems connect the networked computer world with the physical world of sensors, actuators, drones, robots, and other devices. A CPS typically comprises numerous autonomous but networked systems and can reconfigure itself to meet shifting requirements. Smart grids and energy-neutral buildings are examples of CPS in operation today. In the not-distant future, a CPS may be deployed in the aftermath of a natural disaster.

CPS design and implementation present numerous challenges, however. First, the subsystems that make up a CPS operate on a wide range of temporal and spatial scales. An emergency response CPS, for example, encompasses mission planning performed on the scale of kilometers and hours, and control and actuation performed on the scale of millimeters and microseconds (Figure 1). Second, CPS development requires expertise in multiple disciplines and domains. The systems span not only mechanics, electronics, hydraulics, and other physical domains but also controls, networking, communication, and even human social domains.

Using the smart emergency response system (SERS) developed for the SmartAmerica Challenge as an example, this article shows how MATLAB® and Simulink® for technical computing and Model-Based Design address the challenges of CPS development and implementation.

The SERS software is available for download.

How SERS Works

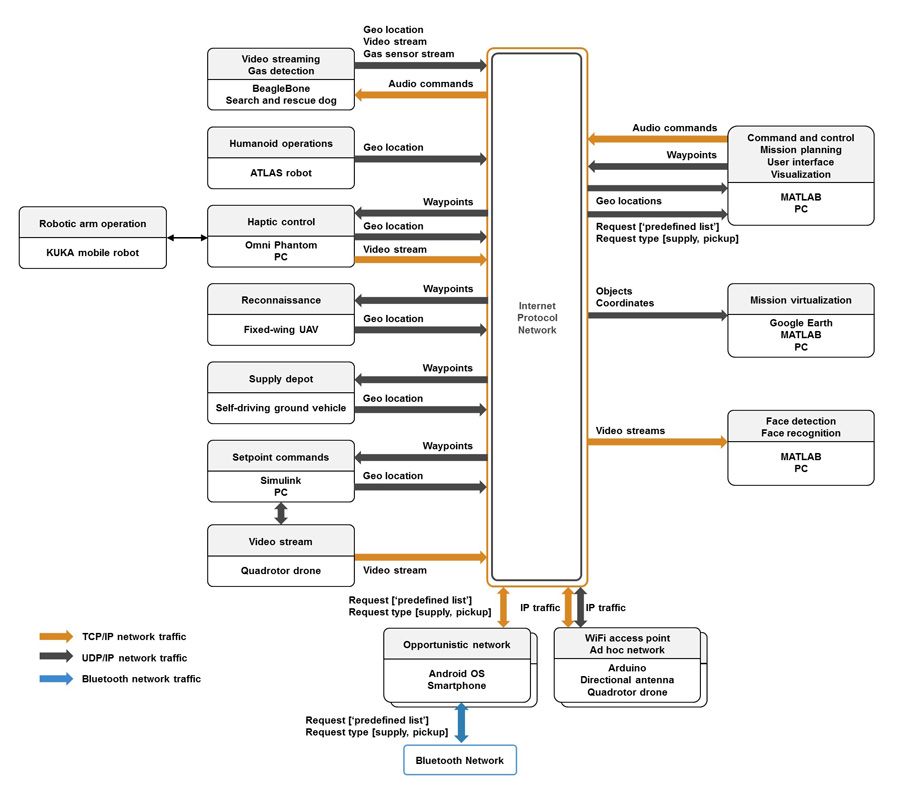

SERS responds to requests for assistance by deploying a fleet of ground vehicles, unmanned aerial vehicles (UAVs), and robots across an uncertain infrastructure. Designed to be open and extensible, the SERS architecture is anchored by mission command and control software (Figure 2 and Figure 3). This software receives information about the operating environment, processes and prioritizes incoming requests, generates a mission plan, dispatches commands to the fleet, and monitors the mission.

SERS Infrastructure and Architecture

The SERS fleet is made up of several autonomous, collaborative components, including:

- Self-driving ground vehicles to set up supply depots

- Delivery drones to carry supplies to the individuals who requested them and to return the supplies to the depot afterwards

- Sensor drones and trained search-and-rescue dogs equipped with sensor harnesses to locate victims and monitor the environment

- Quadrotor drones equipped with wireless routers and antennas to set up a Wi-Fi network in the event of damage to existing communications infrastructure

- A remotely operated KUKA mobile robotic arm and an ATLAS humanoid robot to perform dangerous field activities, such as fixing a gas leak or clearing debris

- Fixed-wing unmanned aerial vehicles (UAVs) to survey roads for blockages caused by structural damage, congestion caused by people fleeing the scene, and other circumstances

In addition, the SERS architecture provides several human-machine interfaces, including:

- A help request app for mobile devices

- A Google Earth interface for visualizing field operations and virtual missions (for teaching and training purposes)

- A video stream interface for analyzing input from cameras on the drones, mobile devices, and canine harnesses

MATLAB Algorithms: Mission Command and Control Optimization

The MATLAB-based mission command and control software lies at the heart of SERS. At startup, the emergency response team provides this software with basic configuration parameters, including the composition of the mission fleet. The configuration also includes shapefiles, a file format for geographic information systems (GIS), containing detailed information about roads and other elements of the infrastructure in the operating environment. The software reads these shapefiles using Mapping Toolbox™.

The mission command and control software processes requests generated by the mobile app. Each request specifies the latitude and longitude of the requestor and the item being requested, such as a thermal blanket, medicine, or a defibrillator.
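As a rough illustration of the request contents described above, the following Python sketch models a single help request and validates its coordinates. It is not the SERS code (which is written in MATLAB); the class name `HelpRequest`, the function `parse_request`, and the message field names `lat`, `lon`, and `item` are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HelpRequest:
    """One help request: where the requestor is and what is needed."""
    latitude: float   # degrees, valid range -90..90
    longitude: float  # degrees, valid range -180..180
    item: str         # e.g. "thermal blanket", "medicine", "defibrillator"

    def __post_init__(self):
        # Reject coordinates that cannot be a point on the globe.
        if not -90.0 <= self.latitude <= 90.0:
            raise ValueError(f"latitude out of range: {self.latitude}")
        if not -180.0 <= self.longitude <= 180.0:
            raise ValueError(f"longitude out of range: {self.longitude}")
        if not self.item.strip():
            raise ValueError("item must not be empty")


def parse_request(message: dict) -> HelpRequest:
    """Build a HelpRequest from a decoded app message (hypothetical field names)."""
    return HelpRequest(
        latitude=float(message["lat"]),
        longitude=float(message["lon"]),
        item=str(message["item"]),
    )
```

For instance, `parse_request({"lat": 37.7749, "lon": -122.4194, "item": "defibrillator"})` yields a validated record that later planning steps could consume.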

The software displays incoming requests on a map using an interface to Google Earth. When the number of accumulated requests passes a previously defined threshold, the software computes an optimal mission plan. To compute the mission plan, the software performs a three-step process:

1. Identifies the best locations to set up the supply depots

2. Calculates the optimal route from the ground vehicles’ current locations to these planned depot locations and then determines waypoints along the available roads for the ground vehicles to follow

3. Matches delivery drones to supplies, and plans the routes that will ensure the fastest pickups and deliveries
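The first two planning steps can be sketched in a few lines of Python. This is a simplified stand-in, not the Optimization Toolbox formulation used in SERS: depot siting is reduced to choosing, from a given list of candidate sites, the one with the smallest total great-circle distance to all requests, and routing is reduced to Dijkstra's shortest-path algorithm over a small road graph. The function names, the candidate-site input, and the graph representation are all assumptions for illustration.

```python
import heapq
import math


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def best_depot(candidates, requests):
    """Simplified depot siting: candidate with the smallest total distance to all requests."""
    return min(candidates, key=lambda site: sum(haversine_km(site, r) for r in requests))


def shortest_route(roads, start, goal):
    """Simplified routing: Dijkstra over a road graph {node: [(neighbor, km), ...]}.

    Returns (total_km, waypoints), or (math.inf, []) if the goal is unreachable.
    """
    queue = [(0.0, start, [start])]
    settled = set()
    while queue:
        dist, node, path = heapq.heappop(queue)
        if node == goal:
            return dist, path
        if node in settled:
            continue
        settled.add(node)
        for neighbor, km in roads.get(node, []):
            if neighbor not in settled:
                heapq.heappush(queue, (dist + km, neighbor, path + [neighbor]))
    return math.inf, []
```

In this sketch the returned node list plays the role of the waypoints a ground vehicle would follow; a blocked road would simply be left out of `roads` before routing.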

Each step presents an optimization problem that is solved using Optimization Toolbox™. Step 3 is the most complex optimization challenge: the optimization algorithm must account for the payload capacity of the drones, the current state of charge of the drone batteries, and the range of each drone given a specific payload weight, as well as the relative priority and nature of each request.
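To make the constraints of the drone-matching step concrete, here is a small Python sketch that exhaustively tries every one-request-per-drone pairing and keeps the feasible pairing with the lowest priority-weighted delivery time. It is only an illustration under stated assumptions: the linear range model in `usable_range_km`, the dictionary field names, and the cost function are invented for this example, and brute force is practical only for very small fleets. SERS itself solves this step with Optimization Toolbox.

```python
from itertools import permutations


def usable_range_km(drone, payload_kg):
    """Hypothetical range model: range shrinks linearly with payload and scales with charge."""
    load_factor = 1.0 - 0.5 * payload_kg / drone["max_payload_kg"]
    return drone["empty_range_km"] * load_factor * drone["charge"]


def feasible(drone, request):
    """A drone can serve a request if it can lift the item and fly the round trip."""
    if request["weight_kg"] > drone["max_payload_kg"]:
        return False
    # Simplification: the whole round trip is checked against the loaded range.
    return 2 * request["distance_km"] <= usable_range_km(drone, request["weight_kg"])


def assign(drones, requests):
    """Return the best {request_id: drone_id} mapping, or None if no pairing is feasible."""
    k = min(len(drones), len(requests))
    # If drones are scarce, only the k highest-priority requests are considered.
    urgent = sorted(requests, key=lambda r: -r["priority"])[:k]
    best_cost, best_plan = float("inf"), None
    for chosen in permutations(drones, k):
        pairs = list(zip(chosen, urgent))
        if not all(feasible(d, r) for d, r in pairs):
            continue
        # Cost: flight time to the requestor, weighted by request priority.
        cost = sum(r["priority"] * r["distance_km"] / d["speed_kmh"] for d, r in pairs)
        if cost < best_cost:
            best_cost = cost
            best_plan = {r["id"]: d["id"] for d, r in pairs}
    return best_plan
```

With one light, fast drone and one heavy-lift drone, a 3 kg defibrillator request would be forced onto the heavy-lift drone by the payload check, leaving the light drone for a lighter item.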

After considering multiple mission alternatives, the MATLAB algorithms arrive at an optimal mission plan that is communicated to the fleet via TCP.
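The article does not describe the message format used on the TCP link, so the following Python sketch assumes one: each mission plan is serialized as JSON and prefixed with a 4-byte big-endian length so the receiver knows where one message ends. The function names and the framing scheme are assumptions, not the SERS protocol.

```python
import json
import socket
import struct


def send_plan(sock, plan):
    """Send one plan as a 4-byte big-endian length followed by UTF-8 encoded JSON."""
    payload = json.dumps(plan).encode("utf-8")
    sock.sendall(struct.pack(">I", len(payload)) + payload)


def _recv_exact(sock, n):
    """Read exactly n bytes; TCP is a byte stream, so a single recv may return fewer."""
    chunks = []
    while n > 0:
        chunk = sock.recv(n)
        if not chunk:
            raise ConnectionError("connection closed mid-message")
        chunks.append(chunk)
        n -= len(chunk)
    return b"".join(chunks)


def recv_plan(sock):
    """Receive one length-prefixed JSON plan and decode it."""
    (length,) = struct.unpack(">I", _recv_exact(sock, 4))
    return json.loads(_recv_exact(sock, length).decode("utf-8"))
```

A sender would typically open the connection with `socket.create_connection((host, port))` and call `send_plan` once per vehicle; the length prefix is one common way to delimit messages on a stream and could be replaced by any other framing the fleet agrees on.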

Model-Based Design: Drone Guidance and Navigation

Three types of rotorcraft drones are employed in the SERS fleet. The first type runs the delivery and pickup sorties as directed by mission control. In the initial demonstration of SERS, these drones and the ground vehicles were simulated using Simulink and Simulink Real-Time™. The second type, designed by University of North Texas SERS team members, is equipped with antennas and Wi-Fi equipment for establishing an ad hoc, airborne network. The third type, a sensor drone, is equipped with high-resolution cameras that can be used to locate victims in need of assistance.

MathWorks SERS team members used Model-Based Design to develop the guidance, navigation, and control software for the sensor drone. They created a dynamic system model of the drone from measured input-output data using System Identification Toolbox™. Using this Simulink model, they designed and synthesized control laws with Simulink Control Design™.
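The idea of fitting a dynamic model to measured input-output data can be shown with a deliberately tiny example. The Python sketch below assumes a first-order discrete-time model, y[k+1] = a·y[k] + b·u[k], estimates a and b by least squares, and then computes a proportional gain that places the closed-loop pole at a chosen location. The model order, the function names, and the control law are assumptions for illustration; they are not the drone model or the controllers built with System Identification Toolbox and Simulink Control Design.

```python
def fit_first_order(u, y):
    """Least-squares estimate of (a, b) in y[k+1] = a*y[k] + b*u[k].

    Expects len(y) == len(u) + 1, i.e. one more output sample than inputs.
    """
    if len(y) != len(u) + 1:
        raise ValueError("need exactly one more output sample than input samples")
    # Accumulate the terms of the 2x2 normal equations.
    syy = sum(y[k] * y[k] for k in range(len(u)))
    suu = sum(uk * uk for uk in u)
    syu = sum(y[k] * u[k] for k in range(len(u)))
    sy1y = sum(y[k + 1] * y[k] for k in range(len(u)))
    sy1u = sum(y[k + 1] * u[k] for k in range(len(u)))
    det = syy * suu - syu * syu
    if det == 0:
        raise ValueError("data is not informative enough to identify both parameters")
    a = (sy1y * suu - sy1u * syu) / det
    b = (syy * sy1u - syu * sy1y) / det
    return a, b


def proportional_gain(a, b, pole):
    """Gain K for u[k] = -K*y[k] so that the closed-loop pole a - b*K equals `pole`."""
    return (a - pole) / b
```

For noise-free data generated with a = 0.9 and b = 0.2, the fit recovers those values up to rounding error, and asking for a closed-loop pole at 0.5 gives a gain of about 2.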

Computer Vision: Automating Face Recognition and Incorporating Humans in the Loop

The high-resolution cameras on the sensor drones and rescue dogs transmit live video streams back to the mission command and control software. The software analyzes the streams to identify faces and matches facial features against an authorized photo database using face recognition algorithms in Computer Vision Toolbox™. Rescue teams can then rapidly notify families when loved ones are found.
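The matching step can be pictured as a nearest-neighbor lookup. The Python sketch below assumes that some upstream stage has already turned each detected face into a numeric feature vector, and it compares that vector with the database entries using cosine similarity. The feature representation, the similarity measure, and the 0.9 threshold are assumptions for this example; the article does not specify which Computer Vision Toolbox algorithms or settings SERS uses.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def match_face(features, database, threshold=0.9):
    """Return the name of the most similar database entry, or None if none reaches the threshold."""
    best_name, best_score = None, threshold
    for name, reference in database.items():
        score = cosine_similarity(features, reference)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name
```

Returning `None` below the threshold matters here: a false match would mean notifying the wrong family, so an uncertain result is better handed to a human reviewer.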

While much of the software and many of the robots, vehicles, and drones that make up SERS operate autonomously, SERS incorporates humans in the loop when appropriate. The delivery and sensing drones, for example, are designed to fly autonomously until they near a rescue worker on the scene. The rescue worker can take control of the drone and fly it to where it needs to go to deliver supplies. The drone does not need to land, but can hover while the rescue worker takes over control with a mobile device.

Demonstrating SERS at the SmartAmerica Challenge Expo

To demonstrate SERS, the team simulated a disaster recovery mission following an earthquake in San Francisco. We imported a shapefile of San Francisco streets (retrieved from the publicly available central clearinghouse for data published by the City and County of San Francisco) and overlaid the data with a map of the city using Mapping Toolbox. We then simulated requests for assistance originating from random points around the city.
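Generating simulated requests of this kind takes only a few lines. The Python sketch below draws request locations uniformly from a rectangular bounding box and picks a random item for each. The bounding-box coordinates are rough values chosen for illustration, not taken from the demonstration, and the function and field names are assumptions.

```python
import random

# Rough bounding box around San Francisco (assumed values, for illustration only).
SF_BOUNDS = {"lat": (37.70, 37.82), "lon": (-122.52, -122.35)}
ITEMS = ["thermal blanket", "medicine", "defibrillator"]


def simulate_requests(count, bounds=SF_BOUNDS, items=ITEMS, seed=None):
    """Return `count` random requests inside the bounding box; a seed makes runs repeatable."""
    rng = random.Random(seed)
    return [
        {
            "lat": rng.uniform(*bounds["lat"]),
            "lon": rng.uniform(*bounds["lon"]),
            "item": rng.choice(items),
        }
        for _ in range(count)
    ]
```

A uniform draw over a rectangle will also place some requests in the water or outside the city limits; a more faithful simulation would reject points that do not fall near a road in the street shapefile.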

Following DevOps (development and operations) principles, we used the same mission command and control environment for operations and for development and testing. The optimized mission plan was dispatched to two simulated ground vehicles and three simulated fixed-wing UAVs, and we tracked their progress visually in real time with Google Earth (Figure 4). We could see the requests appear on the map as they came in throughout the day, along with the path taken by the vehicles and four simulated delivery drones as the mission unfolded. We showed an ATLAS robot and a search-and-rescue dog following their routes to a help request location. In a separate demonstration, we flew the sensor drone in front of a live audience and showed that it could detect individual audience members’ faces. Lastly, we showed that it was possible to set up a Wi-Fi network using the quadrotor drone system designed by the University of North Texas team.

Tests of these various aspects of SERS were all successful, though the technology maturity of SERS is still at the experimental level.

Opportunities for Enhancing SERS

Technologies used in SERS specifically and CPS more generally are rapidly evolving, opening numerous opportunities to add new capabilities. Because SERS is a prototype, there are many areas in which it could be improved, starting with replacing the simulated ground vehicles and aircraft with real versions of the same vehicles. Another straightforward improvement would be to accelerate the optimization algorithms by executing them on multicore processors with Parallel Computing Toolbox™. The SERS source code is available for download. We encourage other organizations to build on this software to advance the practical use of CPS in disaster recovery.

Model-Based Design with MATLAB and Simulink covers the full breadth of technologies employed in CPS, from microprocessors up through mission planning and execution via networked embedded systems. This capability, demonstrated on the SERS project, holds the potential to transform the way that CPS are designed and deployed.

About the SmartAmerica Challenge

The SmartAmerica Challenge was part of the Presidential Innovation Fellows (PIF) program, which paired technologists and innovators with civil servants working at the highest levels of the U.S. government. By bringing together industry, academia, and government, the SmartAmerica Challenge aimed to tackle some of the country’s biggest social and economic challenges while increasing job growth, opening new business opportunities, and enabling socio-economic advances.

Altogether, more than 65 participating companies, government agencies, and academic institutions launched 12 projects, including smart water distribution, life support, transportation, and community safety systems. Projects were demonstrated at a full-day event held in Washington, D.C.

The SERS team included engineers and researchers from MathWorks, BluHaptics, Boeing, MIT, National Instruments, North Carolina State University, University of North Texas, University of Washington, and Worcester Polytechnic Institute.

Acknowledgements

The authors gratefully acknowledge the contributions of the entire SERS core team, including:

- Yosuke Bando, Massachusetts Institute of Technology

- Alper Bozkurt, North Carolina State University

- Andy Chang, National Instruments

- Howard Chizeck, University of Washington

- Daniel Dubois, Massachusetts Institute of Technology

- Shengli Fu, University of North Texas

- Kevin Huang, University of Washington

- Taskin Padir, Worcester Polytechnic Institute

- Jim Paunicka, Boeing

- David Roberts, North Carolina State University

- Fredrik Ryden, BluHaptics

- Yan Wan, University of North Texas

- Konosuke Watanabe, Massachusetts Institute of Technology

And contributions from fellow MathWorks engineers:

- Enes Bilgin

- Aubrey da Cunha

- Poornima Kaniarasu

- Srikanth Parupati

- David Escobar Sanabria

- Kun Zhang

Published 2017 - 93098v00