CREPE

Libraries: Audio Toolbox / Deep Learning

Description

The CREPE block uses a pretrained convolutional neural network to estimate pitch from an audio signal. This block requires Deep Learning Toolbox™.

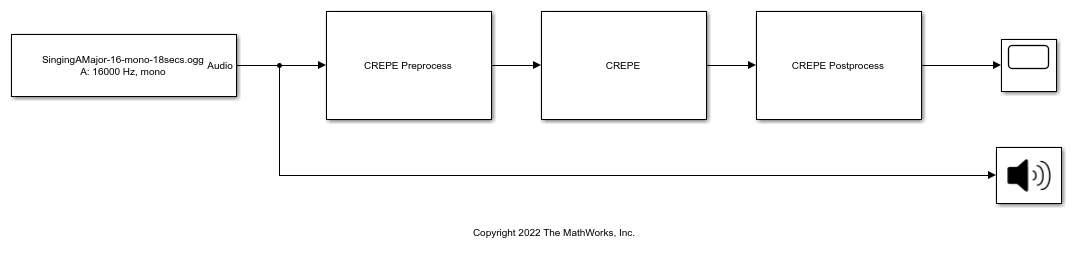

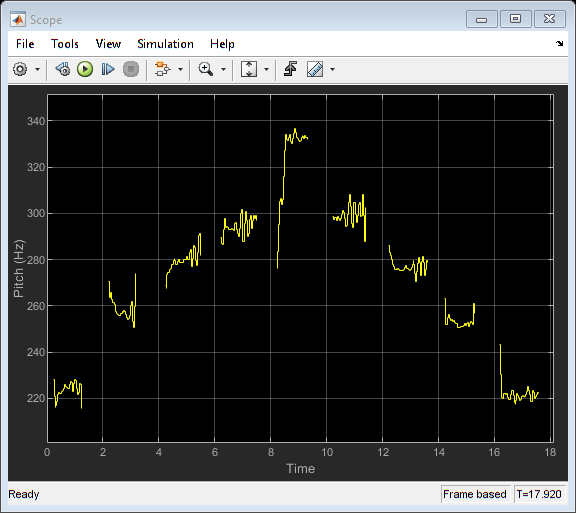
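As a rough illustration of how a CREPE-style network output can be turned into a pitch value, the sketch below decodes a vector of per-bin activations into a frequency in Hz. It follows the general scheme described in [1] (pitch bins spaced on a cents scale, with the estimate taken as an activation-weighted average) and is not the block's actual implementation. The constants `F_REF_HZ`, `NUM_BINS`, `BIN_WIDTH_CENTS`, and `LOWEST_BIN_HZ`, and the function names, are assumptions chosen for illustration.

```python
import math

# Assumed constants, loosely following the description in [1];
# the block's internal values may differ.
F_REF_HZ = 10.0          # reference frequency for the cents scale
NUM_BINS = 360           # number of pitch bins in the network output
BIN_WIDTH_CENTS = 20.0   # spacing between adjacent bins
LOWEST_BIN_HZ = 32.70    # assumed frequency of the first bin (about C1)


def hz_to_cents(freq_hz):
    """Convert a frequency in Hz to cents relative to F_REF_HZ."""
    return 1200.0 * math.log2(freq_hz / F_REF_HZ)


def cents_to_hz(cents):
    """Convert cents relative to F_REF_HZ back to a frequency in Hz."""
    return F_REF_HZ * 2.0 ** (cents / 1200.0)


def bin_centers_cents():
    """Return the center of every pitch bin, in cents."""
    start = hz_to_cents(LOWEST_BIN_HZ)
    return [start + i * BIN_WIDTH_CENTS for i in range(NUM_BINS)]


def decode_pitch_hz(activations):
    """Estimate pitch as the activation-weighted average of bin centers."""
    if len(activations) != NUM_BINS:
        raise ValueError("expected one activation per pitch bin")
    total = sum(activations)
    if total <= 0:
        raise ValueError("activations must have a positive sum")
    centers = bin_centers_cents()
    mean_cents = sum(a * c for a, c in zip(activations, centers)) / total
    return cents_to_hz(mean_cents)
```

Averaging in cents rather than in Hz keeps the estimate on a perceptually uniform scale, so equal activation in two bins yields the pitch midway between them in musical terms.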

Examples

Ports

Input

Output

Parameters

Block Characteristics

| Characteristic | Value |
| --- | --- |
| Data Types | |
| Direct Feedthrough | |
| Multidimensional Signals | |
| Variable-Size Signals | |
| Zero-Crossing Detection | |

References

[1] Kim, Jong Wook, Justin Salamon, Peter Li, and Juan Pablo Bello. “CREPE: A Convolutional Representation for Pitch Estimation.” In 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 161–65. Calgary, AB: IEEE, 2018. https://doi.org/10.1109/ICASSP.2018.8461329.

Extended Capabilities

Version History

Introduced in R2023a