The CREPE network requires you to preprocess your audio signals to generate buffered, overlapped, and normalized audio frames that can be used as input to the network. This example walks through audio preprocessing using crepePreprocess and audio postprocessing with pitch estimation using crepePostprocess. The pitchnn function performs these steps for you.

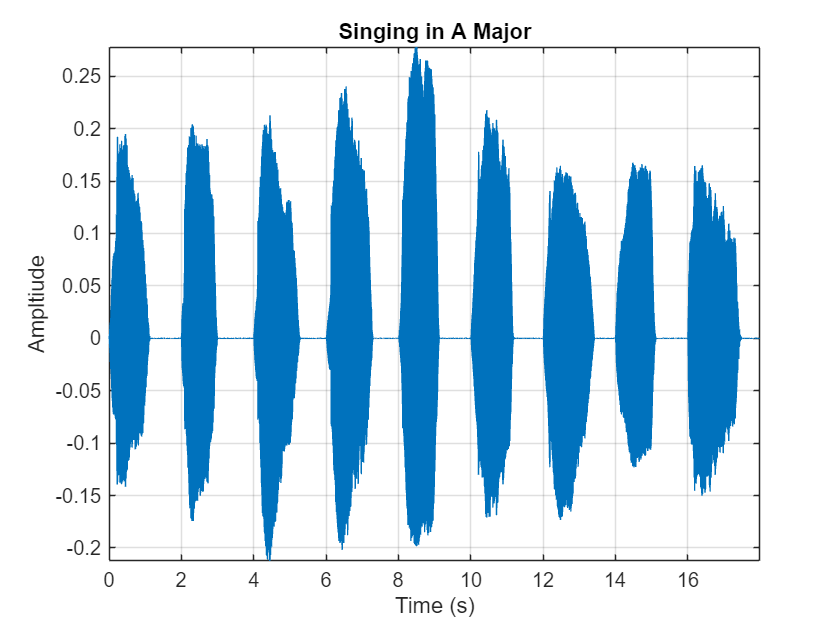

Read in an audio signal for pitch estimation. Visualize and listen to the audio. There are nine vocal utterances in the audio clip.

Use crepePreprocess to partition the audio into frames of 1024 samples with an 85% overlap between consecutive frames. Place the frames along the fourth dimension.
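The framing step can be illustrated with a minimal Python sketch. It is not the crepePreprocess implementation: the function name `frame_and_normalize` is hypothetical, the zero-mean, unit-standard-deviation normalization is an assumption about what "normalized" means here, and any resampling the toolbox function may perform is left out.

```python
import math

def frame_and_normalize(signal, frame_len=1024, overlap_pct=85):
    """Split a 1-D signal into overlapping frames and normalize each frame.

    Hypothetical helper for illustration only.
    """
    overlap = round(frame_len * overlap_pct / 100)  # 870 samples for the defaults
    hop = frame_len - overlap                       # 154 samples between frame starts
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = signal[start:start + frame_len]
        mean = sum(frame) / frame_len
        centered = [s - mean for s in frame]
        std = math.sqrt(sum(c * c for c in centered) / frame_len)
        # Assumption: leave silent frames unscaled to avoid dividing by zero.
        scale = std if std > 0 else 1.0
        frames.append([c / scale for c in centered])
    return frames, hop
```

With these defaults, each new frame starts 154 samples after the previous one, so neighboring frames share 870 of their 1024 samples.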

Create a CREPE network with ModelCapacity set to tiny.

Predict the network activations.

Use crepePostprocess to convert the activations into fundamental frequency (pitch) estimates in Hz. Disable confidence thresholding by setting ConfidenceThreshold to 0.
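To make the postprocessing step concrete, here is a hedged Python sketch of how one activation vector could be turned into a pitch estimate. It follows the bin layout of the open-source CREPE reference model as best recalled (360 bins spaced 20 cents apart, with cents measured relative to 10 Hz). The function name, the nine-bin weighted average, and the NaN convention for rejected frames are assumptions for illustration, and crepePostprocess may differ in detail.

```python
import math

N_BINS = 360
CENTS_PER_BIN = 20.0
CENTS_OFFSET = 1997.3794084376191  # assumed position of bin 0, in cents above 10 Hz

def cents_to_hz(cents):
    """Convert cents relative to 10 Hz into a frequency in Hz."""
    return 10.0 * 2.0 ** (cents / 1200.0)

def activation_to_pitch(activation, confidence_threshold=0.0):
    """Return (f0_hz, confidence) for one 360-element activation vector."""
    peak = max(range(N_BINS), key=lambda i: activation[i])
    confidence = activation[peak]
    if confidence < confidence_threshold:
        return float("nan"), confidence  # estimate rejected as unreliable
    # Weighted average of the bin centers around the peak.
    lo, hi = max(0, peak - 4), min(N_BINS, peak + 5)
    weights = activation[lo:hi]
    total = sum(weights)
    if total == 0:
        return float("nan"), confidence
    cents = [CENTS_OFFSET + CENTS_PER_BIN * i for i in range(lo, hi)]
    avg_cents = sum(w * c for w, c in zip(weights, cents)) / total
    return cents_to_hz(avg_cents), confidence
```

With a threshold of 0, every frame with a nonzero activation yields an estimate, including frames that contain silence or noise. A higher threshold trades coverage for reliability.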

Visualize the pitch estimation over time.

With confidence thresholding disabled, crepePostprocess provides a pitch estimate for every frame. Increase ConfidenceThreshold to 0.8 to keep only the estimates the network is confident about.

Visualize the pitch estimation over time.

Create a new CREPE network with ModelCapacity set to full.

Predict the network activations.

Postprocess the activations with crepePostprocess as before, then visualize the pitch estimation. There are nine primary pitch estimation groupings, each corresponding to one of the nine vocal utterances.

Find the time elements corresponding to the last vocal utterance.

For simplicity, take the most frequently occurring pitch estimate within the utterance group as the fundamental frequency estimate for that timespan. Generate a pure tone with a frequency matching the pitch estimate for the last vocal utterance.
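The selection and synthesis steps above can be sketched in Python as follows. The helper names, the time-window arguments, and the 0.1 Hz quantization applied before counting are all assumptions made for illustration. Real-valued estimates rarely repeat exactly, so some rounding is needed for "most frequent" to be meaningful.

```python
import math
from collections import Counter

def utterance_pitch(times, f0, t_start, t_end, resolution_hz=0.1):
    """Most frequent pitch estimate (Hz) among frames with t_start <= t <= t_end."""
    selected = [f for t, f in zip(times, f0)
                if t_start <= t <= t_end and not math.isnan(f)]
    if not selected:
        return float("nan")
    # Quantize so that nearly equal estimates count as the same value.
    quantized = [round(f / resolution_hz) for f in selected]
    most_common_bin, _ = Counter(quantized).most_common(1)[0]
    return most_common_bin * resolution_hz

def pure_tone(freq_hz, duration_s, fs, amplitude=0.5):
    """Generate a sine wave as a list of samples."""
    n = int(round(duration_s * fs))
    return [amplitude * math.sin(2.0 * math.pi * freq_hz * k / fs)
            for k in range(n)]
```

Taking the mode rather than the mean keeps brief octave errors or pitch glides at the edges of the utterance from pulling the estimate away from the sustained note.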

The value of lastUtteranceEstimate, 217.3 Hz, is closest to the note A3 (220 Hz). Overlay the synthesized tone on the last vocal utterance to audibly compare the two.
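The mapping from a frequency to a note name can be checked with a short Python sketch. It assumes twelve-tone equal temperament with A4 tuned to 440 Hz and the convention that MIDI note 60 is C4. The function name `nearest_note` is hypothetical.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def nearest_note(freq_hz, a4_hz=440.0):
    """Return (note_name, cents_offset) for the equal-tempered note nearest freq_hz."""
    midi = 69.0 + 12.0 * math.log2(freq_hz / a4_hz)  # fractional MIDI note number
    nearest = int(round(midi))
    name = NOTE_NAMES[nearest % 12] + str(nearest // 12 - 1)
    cents_off = 100.0 * (midi - nearest)  # negative means flat
    return name, cents_off
```

Under these assumptions, `nearest_note(217.3)` returns A3 with an offset of roughly -21 cents, meaning the sung pitch sits slightly flat of the 220 Hz reference.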

Call spectrogram to inspect the frequency content of the singing more closely. Use a frame size of 250 samples and an overlap of 225 samples, or 90%. Use 4096 DFT points for the transform. The spectrogram reveals that the vocal recording is actually a set of complex harmonic tones composed of multiple frequencies.
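The effect of these spectrogram settings can be worked out with a small Python sketch. The function name is hypothetical, and the sample rate and signal length passed to it are placeholders, because the recording's actual values are not stated here. The segment count uses the usual short-time Fourier transform formula, floor((N - overlap) / (window - overlap)).

```python
def spectrogram_layout(n_samples, fs, window_len=250, overlap_len=225, n_dft=4096):
    """Summarize the time and frequency grid implied by the spectrogram settings."""
    hop = window_len - overlap_len  # samples between consecutive windows
    if n_samples >= window_len:
        n_segments = (n_samples - overlap_len) // hop
    else:
        n_segments = 0
    return {
        "hop_samples": hop,
        "overlap_fraction": overlap_len / window_len,
        "n_segments": n_segments,
        "bin_spacing_hz": fs / n_dft,          # spacing of the DFT bins
        "window_duration_s": window_len / fs,  # governs true frequency resolution
    }
```

Zero-padding each 250-sample window to 4096 points produces a finely sampled spectrum, but the ability to separate nearby harmonics is still set by the window length. A short window favors time resolution, which suits tracking changes between utterances.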