hac

Heteroscedasticity and autocorrelation consistent covariance estimators

Syntax

Description

[EstCoeffCov,se,coeff] = hac(X,y) returns a robust covariance matrix estimate, and vectors of corrected standard errors and OLS coefficient estimates, from applying ordinary least squares (OLS) on the multiple linear regression models y = Xβ + ε under general forms of heteroscedasticity and autocorrelation in the innovations process ε. y is a vector of response data and X is a matrix of predictor data.
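A minimal sketch of this syntax, assuming simulated data with AR(1) innovations. The sample size, coefficients, and seed are illustrative choices, not values from this page. Display="off" is used here only to suppress the printed summary.

```matlab
rng(1)                                  % reproducible synthetic data (illustrative seed)
T = 100;                                % hypothetical sample size
X = randn(T,2);                         % two predictors, no intercept column
e = filter(1,[1 -0.5],randn(T,1));      % autocorrelated AR(1) innovations
y = 1 + X*[2; -1] + e;                  % response with intercept 1

% hac includes an intercept by default, so three coefficients are estimated
[EstCoeffCov,se,coeff] = hac(X,y,Display="off");
```

With two predictors plus the default intercept, EstCoeffCov is expected to be 3-by-3, and se and coeff are expected to have three elements each.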

[EstCoeffCov,se,coeff] = hac(Mdl) returns a robust covariance matrix estimate, and vectors of corrected standard errors and OLS coefficient estimates from a fitted multiple linear regression model, as returned by fitlm.
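As a sketch of the fitted-model syntax, the following fits a model with fitlm on simulated data and passes the result to hac. The data-generating values are assumptions chosen only for illustration.

```matlab
rng(1)                                  % reproducible synthetic data (illustrative seed)
T = 100;                                % hypothetical sample size
X = randn(T,2);                         % two predictors
y = 1 + X*[2; -1] + randn(T,1);         % linear response with unit-variance noise

Mdl = fitlm(X,y);                       % fitted linear model; fitlm adds the intercept
[EstCoeffCov,se,coeff] = hac(Mdl,Display="off");
```

The OLS coefficients in coeff should match Mdl.Coefficients.Estimate; only the covariance and standard errors are replaced by their robust counterparts.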

[CovTbl,CoeffTbl] = hac(Tbl) returns a table containing the robust covariance matrix estimate, and a table containing the corrected standard errors and OLS coefficient estimates, from a fitted multiple linear regression model of the variables in the input table or timetable.

The response variable in the regression is the last table variable, and all other

variables are the predictor variables. To select a different response variable for the

regression, use the ResponseVariable name-value argument. To select

different predictor variables, use the PredictorNames name-value

argument.

[___] = hac(___,Name=Value) specifies options using one or more name-value arguments in addition to any of the input argument combinations in previous syntaxes. hac returns the output argument combination for the corresponding input arguments.

For example, hac(Tbl,ResponseVariable="GDP",Type="HC") provides

a heteroscedasticity-consistent coefficient covariance estimate of a regression where the

table variable GDP is the response while all other variables are

predictors.
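The table syntax in the example above can be sketched as follows. The variable names CPI and UR and the random data are hypothetical placeholders; only GDP comes from the example text.

```matlab
rng(2)                                  % reproducible synthetic data (illustrative seed)
T = 80;                                 % hypothetical sample size
% Hypothetical table: GDP is the response, CPI and UR are made-up predictors
Tbl = array2table(randn(T,3),VariableNames=["GDP" "CPI" "UR"]);

% GDP is not the last variable, so it must be selected explicitly
[CovTbl,CoeffTbl] = hac(Tbl,ResponseVariable="GDP",Type="HC",Display="off");
```

Because GDP is the first variable rather than the last, omitting ResponseVariable would make UR the response instead.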


Tips

To reduce bias in HAC estimators, prewhiten the input series [2]. The prewhitening procedure tends to increase estimator variance and mean-squared error, but it can improve confidence interval coverage probabilities and reduce the over-rejection of t statistics.
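A hedged sketch of comparing standard errors with and without prewhitening. It assumes the prewhitening option is the Whiten name-value argument, taking the lag order of the prewhitening VAR filter; this section does not name the argument, so check the name-value argument list before relying on it.

```matlab
rng(3)                                  % reproducible synthetic data (illustrative seed)
T = 200;                                % hypothetical sample size
X = randn(T,2);
e = filter(1,[1 -0.7],randn(T,1));      % strongly autocorrelated innovations
y = 1 + X*[2; -1] + e;

[~,seRaw]   = hac(X,y,Display="off");            % no prewhitening
[~,seWhite] = hac(X,y,Whiten=1,Display="off");   % assumed: lag-1 VAR prewhitening filter

disp([seRaw seWhite])                   % side-by-side standard errors
```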

Algorithms

The original White HC estimator, specified by the settings

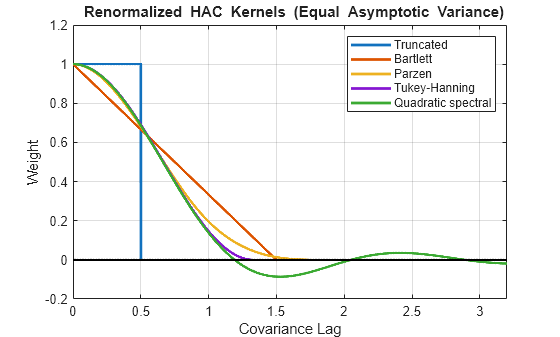

Type="HC",Weights="HC0"is justified asymptotically. The other values of theWeightsname-value argument,"HC1","HC2","HC3", and"HC4", are meant to improve small-sample performance. Specify"HC3"or"HC4"in the presence of influential observations (see [6] and [3]).HAC estimators formed using the truncated kernel might not be positive semidefinite in finite samples. [10] proposes using the Bartlett kernel as a remedy, but the resulting estimator is suboptimal in terms of its rate of consistency. The quadratic spectral kernel achieves an optimal rate of consistency.
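To make the HC weighting concrete, here is a sketch that computes the textbook White (HC0) sandwich estimator and the leverage-adjusted HC3 variant by hand, using only base matrix operations. It illustrates the standard formulas and is not taken from the hac source code, so small numerical differences from hac output are possible. The simulated data are an assumption.

```matlab
rng(0)                                  % reproducible synthetic data (illustrative seed)
n = 50;                                 % small sample, where the weights matter most
X = [ones(n,1) randn(n,2)];             % design matrix with an explicit intercept column
y = X*[1; 2; -1] + abs(X(:,2)).*randn(n,1);   % heteroscedastic innovations

b = X\y;                                % OLS coefficient estimates
e = y - X*b;                            % OLS residuals
XtXinv = inv(X'*X);                     % outer "bread" term of the sandwich
h = sum((X*XtXinv).*X,2);               % leverages: diagonal of the hat matrix

% X'*(X.*w) equals X'*diag(w)*X without forming the n-by-n diagonal matrix
covHC0 = XtXinv*(X'*(X.*e.^2))*XtXinv;            % White estimator: weights e_i^2
covHC3 = XtXinv*(X'*(X.*(e./(1-h)).^2))*XtXinv;   % HC3: weights e_i^2/(1-h_i)^2

seHC0 = sqrt(diag(covHC0));
seHC3 = sqrt(diag(covHC3));
```

Because each leverage lies between 0 and 1, every HC3 weight is at least as large as the matching HC0 weight, so seHC3 is never smaller than seHC0. The gap widens when a few observations have high leverage.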

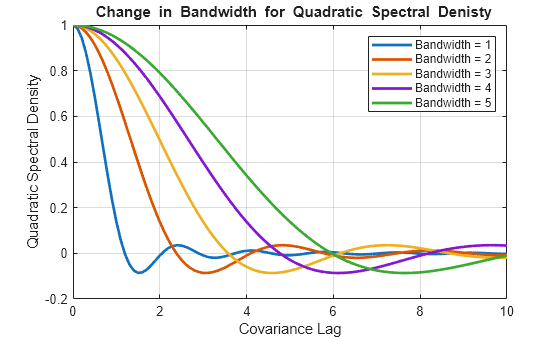

The default estimation method for HAC bandwidth selection, specified by the

Bandwidthname-value argument, is"AR1MLE", which is generally more accurate, but slower, than the AR(1) alternative"AR1OLS". If you specifyBandwidth="ARMA11",hacfits the model using maximum likelihood.Bandwidth selection models might exhibit sensitivity to the relative scale of the input predictors.
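The following sketch compares the two AR(1) bandwidth selection methods named above and then rescales one predictor to probe the scale sensitivity. The data and the factor of 1000 are illustrative assumptions; whether the results differ noticeably depends on the data.

```matlab
rng(4)                                  % reproducible synthetic data (illustrative seed)
T = 150;                                % hypothetical sample size
X = randn(T,2);
e = filter(1,[1 -0.5],randn(T,1));      % AR(1) innovations
y = 1 + X*[2; -1] + e;

[~,seMLE] = hac(X,y,Bandwidth="AR1MLE",Display="off");   % default method
[~,seOLS] = hac(X,y,Bandwidth="AR1OLS",Display="off");   % faster AR(1) alternative

% Rescale the second predictor to check sensitivity to relative scale
Xs = X;
Xs(:,2) = 1000*X(:,2);                  % arbitrary illustrative scale factor
[~,seScaled] = hac(Xs,y,Display="off");

% Undo the rescaling on the affected standard error before comparing
disp([seMLE seOLS [seScaled(1:2); 1000*seScaled(3)]])
```

If the rescaled column differs from the default column after undoing the scale factor, the selected bandwidth changed with the predictor scale, and standardizing the predictors before calling hac may be worth considering.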

References

[1] Andrews, D. W. K. "Heteroskedasticity and Autocorrelation Consistent Covariance Matrix Estimation." Econometrica. Vol. 59, 1991, pp. 817–858.

[2] Andrews, D. W. K., and J. C. Monahan. "An Improved Heteroskedasticity and Autocorrelation Consistent Covariance Matrix Estimator." Econometrica. Vol. 60, 1992, pp. 953–966.

[3] Cribari-Neto, F. "Asymptotic Inference Under Heteroskedasticity of Unknown Form." Computational Statistics & Data Analysis. Vol. 45, 2004, pp. 215–233.

[4] den Haan, W. J., and A. Levin. "A Practitioner's Guide to Robust Covariance Matrix Estimation." In Handbook of Statistics. Edited by G. S. Maddala and C. R. Rao. Amsterdam: Elsevier, 1997.

[5] Frank, A., and A. Asuncion. UCI Machine Learning Repository. Irvine, CA: University of California, School of Information and Computer Science. https://archive.ics.uci.edu/, 2012.

[6] Gallant, A. R. Nonlinear Statistical Models. Hoboken, NJ: John Wiley & Sons, Inc., 1987.

[7] Kutner, M. H., C. J. Nachtsheim, J. Neter, and W. Li. Applied Linear Statistical Models. 5th ed. New York: McGraw-Hill/Irwin, 2005.

[8] Long, J. S., and L. H. Ervin. "Using Heteroscedasticity-Consistent Standard Errors in the Linear Regression Model." The American Statistician. Vol. 54, 2000, pp. 217–224.

[11] Newey, W. K., and K. D. West. "Automatic Lag Selection in Covariance Matrix Estimation." The Review of Economic Studies. Vol. 61, No. 4, 1994, pp. 631–653.

[12] White, H. "A Heteroskedasticity-Consistent Covariance Matrix and a Direct Test for Heteroskedasticity." Econometrica. Vol. 48, 1980, pp. 817–838.

[13] White, H. Asymptotic Theory for Econometricians. New York: Academic Press, 1984.