bfscore

Contour matching score for image segmentation

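Syntax

score = bfscore(prediction,groundTruth)
[score,precision,recall] = bfscore(prediction,groundTruth)
[___] = bfscore(prediction,groundTruth,threshold)

Description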

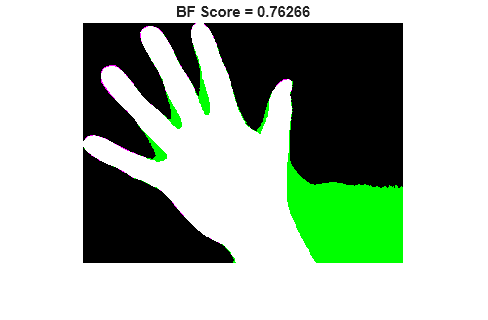

score = bfscore(prediction,groundTruth) computes the BF (Boundary F1) contour matching score between the predicted segmentation in prediction and the true segmentation in groundTruth [1]. prediction and groundTruth can be a pair of logical arrays for binary segmentation, or a pair of label or categorical arrays for multiclass segmentation.
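To make the idea of a contour matching score concrete, here is a minimal Python sketch of a BF score, not the toolbox's implementation. It assumes that a boundary pixel is a foreground pixel with at least one 4-connected background neighbour (pixels outside the image count as background), that distances are Euclidean in pixels, and that a multiclass input is scored one class at a time as a pair of binary masks. All function names (`boundary_points`, `matched_fraction`, `bf_score_binary`, `bf_score_multiclass`, `square`) are hypothetical.

```python
import math


def boundary_points(mask):
    """Return (row, col) pairs of foreground pixels that touch the background.

    Assumption for this sketch: 4-connectivity, and pixels outside the image
    count as background, so foreground pixels on the image edge are boundary.
    """
    rows, cols = len(mask), len(mask[0])
    points = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                if not (0 <= nr < rows and 0 <= nc < cols) or not mask[nr][nc]:
                    points.append((r, c))
                    break
    return points


def matched_fraction(points, others, threshold):
    """Fraction of `points` within `threshold` pixels (Euclidean) of any point in `others`."""
    if not points:
        return 0.0
    hits = sum(
        1
        for (r, c) in points
        if any(math.hypot(r - r2, c - c2) <= threshold for (r2, c2) in others)
    )
    return hits / len(points)


def bf_score_binary(prediction, ground_truth, threshold):
    """Return (score, precision, recall) for a pair of binary masks."""
    pred_boundary = boundary_points(prediction)
    gt_boundary = boundary_points(ground_truth)
    precision = matched_fraction(pred_boundary, gt_boundary, threshold)
    recall = matched_fraction(gt_boundary, pred_boundary, threshold)
    if precision + recall == 0:
        # Convention of this sketch only: no matched boundary at all scores 0.
        return 0.0, precision, recall
    return 2 * precision * recall / (precision + recall), precision, recall


def bf_score_multiclass(prediction, ground_truth, labels, threshold):
    """Score each label separately by turning it into a pair of binary masks."""
    scores = {}
    for label in labels:
        pred_mask = [[v == label for v in row] for row in prediction]
        gt_mask = [[v == label for v in row] for row in ground_truth]
        scores[label] = bf_score_binary(pred_mask, gt_mask, threshold)[0]
    return scores


def square(size, top, left, side):
    """Hypothetical test mask: a filled side x side square in a size x size image."""
    return [[top <= r < top + side and left <= c < left + side for c in range(size)]
            for r in range(size)]


gt = square(7, 1, 1, 4)
pred = square(7, 1, 2, 4)  # same square shifted one pixel to the right
print(bf_score_binary(pred, gt, threshold=0))  # (0.5, 0.5, 0.5)
print(bf_score_binary(pred, gt, threshold=1))  # (1.0, 1.0, 1.0)
```

With a zero tolerance, only the top and bottom edges of the shifted square line up, so half of each boundary matches. A one-pixel tolerance absorbs the shift entirely.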

[score,precision,recall] = bfscore(prediction,groundTruth) also returns the precision and recall values for the prediction image compared to the groundTruth image.
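As a hedged illustration of how precision, recall, and the BF score relate, the Python sketch below assumes the usual Boundary F1 definitions: precision is the share of predicted boundary points that have a ground-truth match, recall is the share of ground-truth boundary points that have a predicted match, and the score is their harmonic mean. The function name `bf_from_counts` and the counts used are hypothetical.

```python
def bf_from_counts(pred_matched, pred_total, gt_matched, gt_total):
    """Turn boundary-match counts into (score, precision, recall)."""
    # precision: share of predicted boundary points with a ground-truth match
    precision = pred_matched / pred_total if pred_total else 0.0
    # recall: share of ground-truth boundary points with a predicted match
    recall = gt_matched / gt_total if gt_total else 0.0
    if precision + recall == 0:
        return 0.0, precision, recall  # sketch convention, not toolbox behavior
    # score: harmonic mean (F1) of precision and recall
    score = 2 * precision * recall / (precision + recall)
    return score, precision, recall


# Hypothetical counts: 90 of 100 predicted boundary points matched,
# 60 of 80 ground-truth boundary points matched.
score, precision, recall = bf_from_counts(90, 100, 60, 80)
print(round(score, 4), precision, recall)  # 0.8182 0.9 0.75
```

The harmonic mean penalizes imbalance: a prediction with precision 1.0 but recall 0.2 scores about 0.33 rather than the arithmetic mean of 0.6, so a clean but incomplete boundary does not score well.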

[___] = bfscore(prediction,groundTruth,threshold) computes the BF score using threshold as the distance error tolerance that decides whether a boundary point has a match.
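The role of the distance tolerance can be sketched in Python as follows. This assumes, for illustration only, that a boundary point counts as matched when its Euclidean distance in pixels to the nearest point on the other boundary is at most the threshold. The helpers `is_matched` and `match_rate` and the two straight edges are hypothetical.

```python
import math


def is_matched(point, boundary, threshold):
    """True if `point` lies within `threshold` pixels (Euclidean) of any boundary point."""
    r, c = point
    return any(math.hypot(r - r2, c - c2) <= threshold for (r2, c2) in boundary)


def match_rate(points, boundary, threshold):
    """Fraction of `points` that have a match on `boundary` under the tolerance."""
    if not points:
        return 0.0
    return sum(is_matched(p, boundary, threshold) for p in points) / len(points)


# Hypothetical boundaries: a predicted edge that runs 2 pixels off a ground-truth edge.
ground_truth_edge = [(5, c) for c in range(10)]
predicted_edge = [(7, c) for c in range(10)]

for t in (1, 2, 3):
    print(t, match_rate(predicted_edge, ground_truth_edge, t))
# 1 0.0
# 2 1.0
# 3 1.0
```

Once the tolerance reaches the 2-pixel offset, every point matches, so a larger threshold makes the score more forgiving of small boundary displacements.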

Examples

Input Arguments

Output Arguments

More About

References

[1] Csurka, G., D. Larlus, and F. Perronnin. "What is a good evaluation measure for semantic segmentation?" Proceedings of the British Machine Vision Conference, 2013, pp. 32.1-32.11.