Minimizing an Expensive Optimization Problem Using Parallel Computing Toolbox

This example shows how to speed up the minimization of an expensive optimization problem using functions in Optimization Toolbox™ and Global Optimization Toolbox. In the first part of the example solve the optimization problem by evaluating functions in a serial fashion, and in the second part of the example, solve the same problem using the parallel for loop (parfor) feature by evaluating functions in parallel. Compare the time taken by the optimization function in both cases.

Expensive Optimization Problem

For the purpose of this example, solve a problem in four variables, where the objective and constraint functions are made artificially expensive by pausing.

function f = expensive_objfun(x) % Simulate an expensive function by pausing. pause(0.1) % Evaluate objective function. f = exp(x(1)) * (4*x(3)^2 + 2*x(4)^2 + 4*x(1)*x(2) + 2*x(2) + 1); end function [ineqnonlin,eqnonlin] = expensive_confun(x) % Simulate an expensive function by pausing. pause(0.1); % Evaluate constraints. ineqnonlin = [1.5 + x(1)*x(2)*x(3) - x(1) - x(2) - x(4); -x(1)*x(2) + x(4) - 10]; % No nonlinear equality constraints: eqnonlin = []; end

Minimizing Using fmincon

Measure the time taken by fmincon in serial so that you can compare it to the parallel time.

startPoint = [-1 1 1 -1]; options = optimoptions("fmincon",Display="iter"); startTime = tic; xsol = fmincon(@expensive_objfun,startPoint,[],[],[],[],[],[],@expensive_confun,options);

First-order Norm of

Iter F-count f(x) Feasibility optimality step

0 5 1.839397e+00 1.500e+00 3.211e+00

1 11 -9.760099e-01 3.708e+00 7.902e-01 2.362e+00

2 16 -1.480976e+00 0.000e+00 8.344e-01 1.069e+00

3 21 -2.601599e+00 0.000e+00 8.390e-01 1.218e+00

4 29 -2.823630e+00 0.000e+00 2.598e+00 1.118e+00

5 34 -3.905338e+00 0.000e+00 1.210e+00 7.302e-01

6 39 -6.212992e+00 3.934e-01 7.372e-01 2.405e+00

7 44 -5.948761e+00 0.000e+00 1.784e+00 1.905e+00

8 49 -6.940062e+00 1.233e-02 7.668e-01 7.553e-01

9 54 -6.973887e+00 0.000e+00 2.549e-01 3.920e-01

10 59 -7.142993e+00 0.000e+00 1.903e-01 4.735e-01

11 64 -7.155325e+00 0.000e+00 1.365e-01 2.626e-01

12 69 -7.179122e+00 0.000e+00 6.336e-02 9.115e-02

13 74 -7.180116e+00 0.000e+00 1.069e-03 4.670e-02

14 79 -7.180409e+00 0.000e+00 7.799e-04 2.815e-03

15 84 -7.180410e+00 0.000e+00 6.607e-06 3.121e-04

Local minimum found that satisfies the constraints.

Optimization completed because the objective function is non-decreasing in

feasible directions, to within the value of the optimality tolerance,

and constraints are satisfied to within the value of the constraint tolerance.

<stopping criteria details>

time_fmincon_sequential = toc(startTime);

fprintf("Serial fmincon optimization takes %g seconds.\n",time_fmincon_sequential);Serial fmincon optimization takes 18.9224 seconds.

Minimizing Using Genetic Algorithm

Since ga usually takes many more function evaluations than fmincon, remove the expensive constraint from this problem and perform unconstrained optimization instead. Pass empty matrices [] for constraints. In addition, limit the maximum number of generations to 15 for ga so that ga can terminate in a reasonable amount of time. Measure the time taken by ga so that you can compare it to the parallel ga evaluation. Note that running ga requires Global Optimization Toolbox.

rng default % for reproducibility try gaAvailable = false; nvar = 4; gaoptions = optimoptions("ga",MaxGenerations=15,Display="iter"); startTime = tic; gasol = ga(@expensive_objfun,nvar,[],[],[],[],[],[],[],gaoptions); time_ga_sequential = toc(startTime); fprintf("Serial ga optimization takes %g seconds.\n",time_ga_sequential); gaAvailable = true; catch ME warning(message("optim:optimdemos:optimparfor:gaNotFound")); end

Single objective optimization:

4 Variables

Options:

CreationFcn: @gacreationuniform

CrossoverFcn: @crossoverscattered

SelectionFcn: @selectionstochunif

MutationFcn: @mutationgaussian

Best Mean Stall

Generation Func-count f(x) f(x) Generations

1 100 -5.546e+05 1.483e+15 0

2 147 -5.581e+17 -1.116e+16 0

3 194 -7.556e+17 6.679e+22 0

4 241 -7.556e+17 -7.195e+16 1

5 288 -9.381e+27 -1.876e+26 0

6 335 -9.673e+27 -7.497e+26 0

7 382 -4.511e+36 -9.403e+34 0

8 429 -5.111e+36 -3.011e+35 0

9 476 -7.671e+36 9.346e+37 0

10 523 -1.52e+43 -3.113e+41 0

11 570 -2.273e+45 -4.67e+43 0

12 617 -2.589e+47 -6.281e+45 0

13 664 -2.589e+47 -1.015e+46 1

14 711 -8.149e+47 -5.855e+46 0

15 758 -9.503e+47 -1.29e+47 0

ga stopped because it exceeded options.MaxGenerations.

Serial ga optimization takes 83.4899 seconds.

Setting Parallel Computing Toolbox

The finite differencing used by the functions in Optimization Toolbox to approximate derivatives is done in parallel using the parfor feature if Parallel Computing Toolbox™ is available and there is a parallel pool of workers. Similarly, ga, gamultiobj, and patternsearch solvers in Global Optimization Toolbox evaluate functions in parallel. To use the parfor feature, use the parpool function to set up the parallel environment. The computer on which this example is published has four cores, so parpool starts four MATLAB® workers. If there is already a parallel pool when you run this example, use that pool; see the documentation for parpool for more information.

if max(size(gcp)) == 0 % parallel pool needed parpool % create the parallel pool end

Minimizing Using Parallel fmincon

To minimize the expensive optimization problem using the parallel fmincon function, you must explicitly indicate that the objective and constraint functions can be evaluated in parallel and that you want fmincon to use its parallel functionality wherever possible. Currently, finite differencing can be done in parallel. Measure the time taken by parallel fmincon so that you can compare it to the serial fmincon run.

options = optimoptions(options,UseParallel=true); startTime = tic; xsol = fmincon(@expensive_objfun,startPoint,[],[],[],[],[],[],@expensive_confun,options);

First-order Norm of

Iter F-count f(x) Feasibility optimality step

0 5 1.839397e+00 1.500e+00 3.211e+00

1 11 -9.760099e-01 3.708e+00 7.902e-01 2.362e+00

2 16 -1.480976e+00 0.000e+00 8.344e-01 1.069e+00

3 21 -2.601599e+00 0.000e+00 8.390e-01 1.218e+00

4 29 -2.823630e+00 0.000e+00 2.598e+00 1.118e+00

5 34 -3.905338e+00 0.000e+00 1.210e+00 7.302e-01

6 39 -6.212992e+00 3.934e-01 7.372e-01 2.405e+00

7 44 -5.948761e+00 0.000e+00 1.784e+00 1.905e+00

8 49 -6.940062e+00 1.233e-02 7.668e-01 7.553e-01

9 54 -6.973887e+00 0.000e+00 2.549e-01 3.920e-01

10 59 -7.142993e+00 0.000e+00 1.903e-01 4.735e-01

11 64 -7.155325e+00 0.000e+00 1.365e-01 2.626e-01

12 69 -7.179122e+00 0.000e+00 6.336e-02 9.115e-02

13 74 -7.180116e+00 0.000e+00 1.069e-03 4.670e-02

14 79 -7.180409e+00 0.000e+00 7.799e-04 2.815e-03

15 84 -7.180410e+00 0.000e+00 6.607e-06 3.121e-04

Local minimum found that satisfies the constraints.

Optimization completed because the objective function is non-decreasing in

feasible directions, to within the value of the optimality tolerance,

and constraints are satisfied to within the value of the constraint tolerance.

<stopping criteria details>

time_fmincon_parallel = toc(startTime);

fprintf("Parallel fmincon optimization takes %g seconds.\n",time_fmincon_parallel);Parallel fmincon optimization takes 10.7073 seconds.

Minimizing Using Parallel Genetic Algorithm

To minimize the expensive optimization problem using the ga function, you must explicitly indicate that the objective function can be evaluated in parallel and that you want ga to use its parallel functionality wherever possible. To use the parallel ga you must ensure that the Vectorized option is set to the default ("off"). Measure the time taken by ga so that you can compare it to the serial ga run. Though this run may be different from the serial one because ga uses a random number generator, the number of expensive function evaluations is the same in both runs. Note that running ga requires Global Optimization Toolbox.

rng default % To get the same evaluations as the previous run if gaAvailable gaoptions = optimoptions(gaoptions,UseParallel=true); startTime = tic; gasol = ga(@expensive_objfun,nvar,[],[],[],[],[],[],[],gaoptions); time_ga_parallel = toc(startTime); fprintf("Parallel ga optimization takes %g seconds.\n",time_ga_parallel); end

Single objective optimization:

4 Variables

Options:

CreationFcn: @gacreationuniform

CrossoverFcn: @crossoverscattered

SelectionFcn: @selectionstochunif

MutationFcn: @mutationgaussian

Best Mean Stall

Generation Func-count f(x) f(x) Generations

1 100 -5.546e+05 1.483e+15 0

2 147 -5.581e+17 -1.116e+16 0

3 194 -7.556e+17 6.679e+22 0

4 241 -7.556e+17 -7.195e+16 1

5 288 -9.381e+27 -1.876e+26 0

6 335 -9.673e+27 -7.497e+26 0

7 382 -4.511e+36 -9.403e+34 0

8 429 -5.111e+36 -3.011e+35 0

9 476 -7.671e+36 9.346e+37 0

10 523 -1.52e+43 -3.113e+41 0

11 570 -2.273e+45 -4.67e+43 0

12 617 -2.589e+47 -6.281e+45 0

13 664 -2.589e+47 -1.015e+46 1

14 711 -8.149e+47 -5.855e+46 0

15 758 -9.503e+47 -1.29e+47 0

ga stopped because it exceeded options.MaxGenerations.

Parallel ga optimization takes 22.4566 seconds.

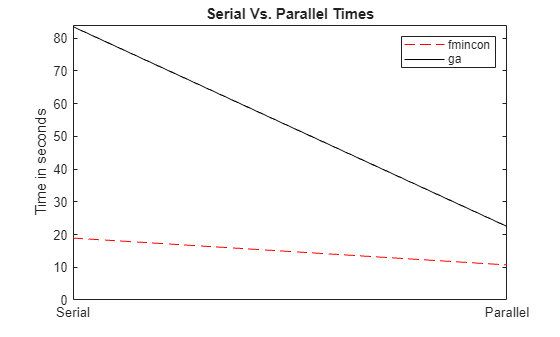

Compare Serial and Parallel Time

X = [time_fmincon_sequential time_fmincon_parallel]; Y = [time_ga_sequential time_ga_parallel]; t = [0 1]; plot(t,X,"r--",t,Y,"k-") ylabel("Time in seconds") legend("fmincon","ga") ax = gca; ax.XTick = [0 1]; ax.XTickLabel = ["Serial" "Parallel"]; axis([0 1 0 ceil(max([X Y]))]) title("Serial Vs. Parallel Times")

Utilizing parallel function evaluation via parfor improved the efficiency of both fmincon and ga. The improvement is typically better for expensive objective and constraint functions.