Kernel Latency

In a deployed application, switching between threads requires a finite amount of time depending on the current state of the thread, embedded processor, and OS. Kernel latency defines the time required for the operating system to respond to a trigger signal, stop execution of any running threads, and start the execution of the thread responsible for the trigger signal.

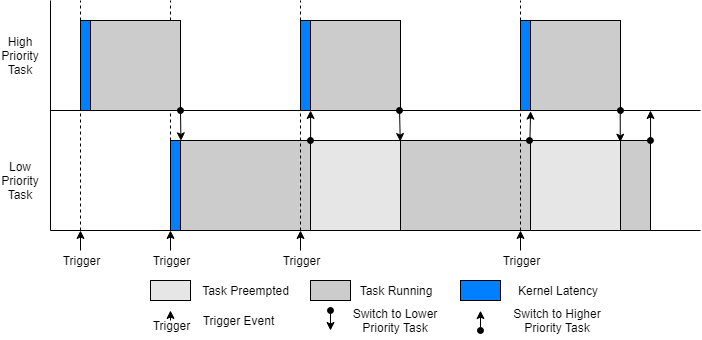

SoC Blockset™ models simulate Kernel latency as a delay at the start of execution of a task the first time the task moves from the waiting to running state. The following diagram shows the execution timing of a high-priority and low-priority task on a system that simulates a single processor core.

Other factors affecting kernel latency, such as context switch times, can be considered negligible compared to other effects and are not modeled in simulation.
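The delay rule described above can be sketched as a one-line calculation. This is a minimal illustration, not the SoC Blockset implementation: the function name and its arguments are hypothetical, and it assumes that the latency is measured from the trigger and that a task that has already waited longer than the latency for the core starts without further delay.

```python
def running_start_time(trigger_time, kernel_latency, core_free_time):
    """Time at which a task first moves from Waiting to Running.

    Hypothetical helper for illustration only.
    trigger_time   : time of the trigger signal, in seconds
    kernel_latency : simulated kernel latency, in seconds
    core_free_time : earliest time the core is free of higher-priority work
    """
    # The task cannot start before the kernel latency has elapsed.
    earliest_start = trigger_time + kernel_latency
    # If the task is still waiting for the core after that point,
    # no additional latency is added when it finally starts.
    return max(earliest_start, core_free_time)
```

With an idle core, a task triggered at time 0 with a latency of 0.002 seconds starts at 0.002 seconds. If the core stays busy until 0.005 seconds, the task starts at 0.005 seconds.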

Note

Kernel latency requires advanced knowledge of the processor specifications and can generally be set to 0 without impact to the simulation.

Effect of Kernel Latency on Task Execution

This example shows the effect of kernel latency on the behavior and timing of two timer-driven tasks in an SoC application.

The following model simulates a software application with two timer-driven tasks. The task characteristics, specified in the Task Manager block, are as follows:

With these timing conditions, the high-priority task preempts the low-priority task. In the model Configuration Parameters dialog box, Hardware Implementation > Simulation settings > Kernel latency is set to 0.002 seconds.

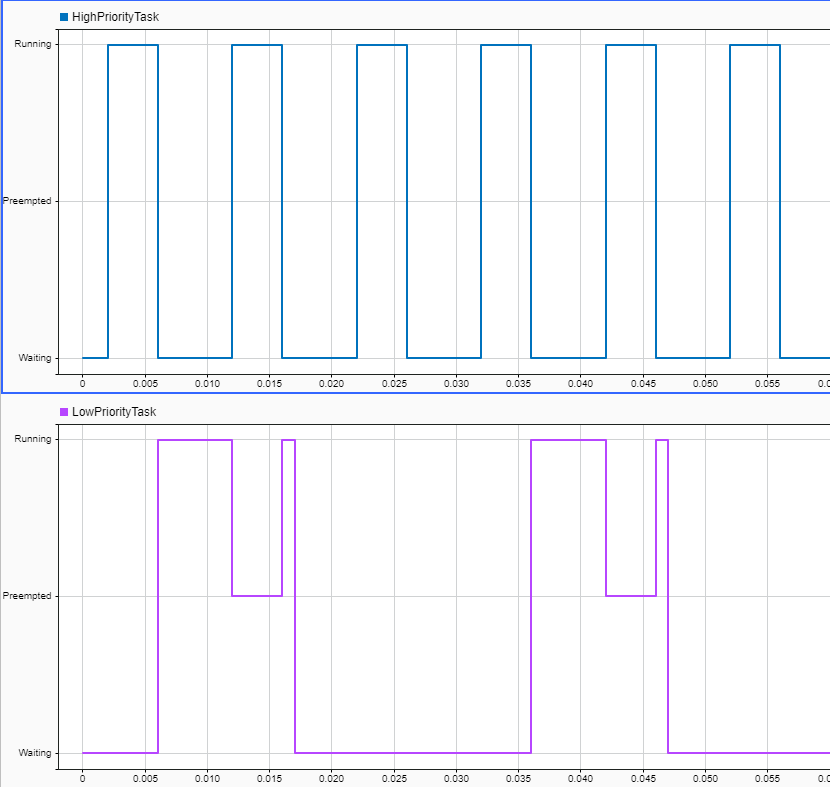

Run the model and open the Simulation Data Inspector. Selecting the two task signals produces the following display.

In the Simulation Data Inspector, note the following:

There is a trigger every 0.01 seconds, and the high-priority task switches from Waiting to Running with a latency of 0.002 seconds.

The low-priority task changes from Waiting to Running without latency, because its waiting period exceeded the kernel latency.

The low-priority task is preempted when the high-priority task executes.

The low-priority task changes from Preempted to Running without latency.
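The four observations can be reproduced with a small stand-alone scheduler sketch. It is an illustration only and does not use or represent SoC Blockset code. The `Task` class, the `simulate` function, and the low-priority period and both task durations are hypothetical values chosen so that preemption occurs. Only the 0.01-second trigger and the 0.002-second kernel latency come from the example. Time is counted in 1 ms ticks, and the sketch assumes every task finishes before its next trigger.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    period: int    # ticks between timer triggers (1 tick = 1 ms)
    duration: int  # ticks of execution needed per trigger
    priority: int  # larger value means higher priority


def simulate(tasks, latency, horizon):
    """Return {task name: [(start_tick, end_tick), ...]} of Running intervals.

    Single core, fixed-priority preemptive scheduling. Kernel latency is
    applied only between a trigger and the first Waiting-to-Running
    transition, never when a preempted task resumes.
    """
    remaining = {t.name: 0 for t in tasks}  # ticks of work left per task
    ready_at = {t.name: 0 for t in tasks}   # trigger tick plus latency
    running = {t.name: [] for t in tasks}
    for now in range(horizon):
        for t in tasks:
            if now % t.period == 0:
                # Timer trigger: the task may start once the latency elapses.
                remaining[t.name] = t.duration
                ready_at[t.name] = now + latency
        ready = [t for t in tasks
                 if remaining[t.name] > 0 and ready_at[t.name] <= now]
        if not ready:
            continue  # core is idle during this tick
        current = max(ready, key=lambda t: t.priority)
        remaining[current.name] -= 1
        spans = running[current.name]
        if spans and spans[-1][1] == now:
            spans[-1] = (spans[-1][0], now + 1)  # extend the current interval
        else:
            spans.append((now, now + 1))         # start or resume the task
    return running


# Hypothetical task set: only the 10 ms trigger and 2 ms latency are from the example.
tasks = [
    Task("HighPriority", period=10, duration=3, priority=2),
    Task("LowPriority", period=20, duration=8, priority=1),
]
timeline = simulate(tasks, latency=2, horizon=20)
for name, spans in timeline.items():
    print(name, spans)
```

Under these assumed values, the high-priority task runs during ticks 2 to 5 and 12 to 15, each time 2 ms after its trigger. The low-priority task starts at tick 5 as soon as the core is free, is preempted at tick 12, and resumes at tick 15 with no added delay.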