iforest

Syntax

Description

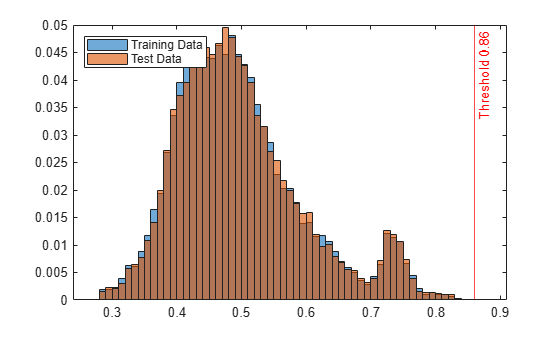

Use the iforest function to fit an isolation forest model for outlier detection and novelty detection.

Outlier detection (detecting anomalies in training data): Use the output argument tf of iforest to identify anomalies in training data.

Novelty detection (detecting anomalies in new data with uncontaminated training data): Create an IsolationForest object by passing uncontaminated training data (data with no outliers) to iforest. Detect anomalies in new data by passing the object and the new data to the object function isanomaly.
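The two workflows above can be sketched as follows. This assumes Statistics and Machine Learning Toolbox (R2021b or later) and uses synthetic standard normal data, which for the novelty-detection part is simply assumed to be free of outliers. The variable names (`Xtrain`, `Xnew`, `forestClean`) are illustrative only, and the far-away point in `Xnew` is not guaranteed to be flagged.

```matlab
% Synthetic training data (assumption): 1000 standard normal points in 2-D.
rng("default")  % for reproducibility
Xtrain = randn(1000,2);

% Outlier detection: treat roughly 1% of the training data as anomalies
% and read the per-observation flags from the tf output of iforest.
[forest,tf,scores] = iforest(Xtrain,ContaminationFraction=0.01);
numTrainAnomalies = sum(tf)

% Novelty detection: fit on data assumed to contain no outliers
% (ContaminationFraction is left at its default), then score new data.
forestClean = iforest(Xtrain);
Xnew = [randn(5,2); 8 8];   % last row lies far from the training cloud
[tfNew,scoresNew] = isanomaly(forestClean,Xnew)
```

Both calls return one logical flag and one anomaly score per row of the data they receive.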

forest = iforest(Tbl) returns an IsolationForest object for predictor data in the table Tbl.

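forest = iforest(___,Name=Value) specifies options using one or more name-value arguments in addition to the input arguments in the previous syntax. For example, specify ContaminationFraction=0.1 to treat 10% of the training observations as anomalies.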
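A minimal sketch of the table syntax combined with a name-value argument, assuming a hypothetical table whose two numeric variables, `Temperature` and `Pressure`, hold synthetic data. The flagged fraction of training rows should land near the requested contamination fraction, although ties in the scores can make it differ slightly.

```matlab
% Hypothetical table of two numeric predictors filled with synthetic data.
rng("default")
Tbl = table(randn(500,1),randn(500,1), ...
    VariableNames=["Temperature","Pressure"]);

% Treat about 10% of the training observations as anomalies.
[forest,tf,scores] = iforest(Tbl,ContaminationFraction=0.1);

% Fraction of training rows flagged as anomalies (close to 0.1).
fractionFlagged = mean(tf)
```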

Examples

Input Arguments

Name-Value Arguments

Output Arguments

More About

Tips

After training a model, you can generate C/C++ code that finds anomalies for new data. Generating C/C++ code requires MATLAB® Coder™. For details, see the Code Generation section of the isanomaly function page and Introduction to Code Generation for Statistics and Machine Learning Functions.
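The following sketch shows one possible code generation workflow. It assumes the usual saveLearnerForCoder / loadLearnerForCoder route applies to IsolationForest models. The entry-point function `detectAnomalies` and the file name `iforestMdl` are hypothetical. The `codegen` call appears only as a comment because it requires MATLAB Coder and a separate entry-point file. The part that runs in plain MATLAB saves the model, reloads it, and calls isanomaly on the reloaded copy.

```matlab
% Train on synthetic data that is assumed to be outlier-free.
rng(0)
Xtrain = randn(500,2);
forest = iforest(Xtrain);

% Save the model in a form that loadLearnerForCoder can reconstruct
% (writes iforestMdl.mat to the current folder).
saveLearnerForCoder(forest,"iforestMdl");

% Hypothetical entry-point function, saved separately as detectAnomalies.m:
%
%   function tf = detectAnomalies(Xnew) %#codegen
%   forest = loadLearnerForCoder("iforestMdl");
%   tf = isanomaly(forest,Xnew);
%   end
%
% With MATLAB Coder installed, a MEX function could then be generated with,
% for example, a variable number of rows and two columns:
%   codegen detectAnomalies -args {coder.typeof(Xtrain,[Inf 2],[1 0])}

% In MATLAB, check that the reloaded model produces the same flags.
reloaded = loadLearnerForCoder("iforestMdl");
Xnew = [randn(5,2); 6 6];
tf1 = isanomaly(forest,Xnew);
tf2 = isanomaly(reloaded,Xnew);
agree = isequal(tf1,tf2)
```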

Algorithms

iforest considers NaN, '' (empty character vector), "" (empty string), <missing>, and <undefined> values in Tbl and NaN values in X to be missing values.
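A small sketch of this behavior on a numeric matrix, using synthetic data (an assumption, not taken from this page). One row has a single NaN, another has two, and the last row is entirely NaN. Per the algorithm description in this section, the partially missing rows still receive ordinary scores, and the all-missing row is expected to score 1.

```matlab
% Synthetic numeric predictors (assumption), with some values set to NaN.
rng("default")
X = randn(200,3);
X(5,2) = NaN;          % one missing value in an otherwise valid row
X(10,[1 3]) = NaN;     % two missing values in another row
X(end+1,:) = NaN;      % a row in which every predictor is missing

[forest,tf,scores] = iforest(X);

% Rows with some valid values still receive a score in [0,1].
partialScores = scores([5 10])
% The all-missing row stays in the root node, so its score should be 1.
allMissingScore = scores(end)
```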

iforest uses observations with missing values to find splits on variables for which these observations have valid values. The function might place these observations in a branch node rather than a leaf node. iforest then computes their anomaly scores by using the distance from the root node to that branch node. The function places an observation with all missing values in the root node, so its anomaly score is 1.
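To see why an observation that never leaves the root node scores 1, recall the anomaly score defined in [1]. This sketch assumes iforest normalizes path lengths the same way. Here h(x) is the path length from the root to the node where x ends up, the expectation is taken over the trees, and n is the number of observations used to grow each tree:

$$
s(x,n) = 2^{-E[h(x)]/c(n)}, \qquad c(n) = 2H(n-1) - \frac{2(n-1)}{n}, \qquad H(i) \approx \ln(i) + 0.5772156649
$$

An observation that stays in the root node of every tree has h(x) = 0, so

$$
s(x,n) = 2^{-0/c(n)} = 2^{0} = 1.
$$

Shorter expected paths therefore push the score toward 1. A path of average length, E[h(x)] = c(n), gives s = 0.5.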

References

[1] Liu, F. T., K. M. Ting, and Z. Zhou. "Isolation Forest," 2008 Eighth IEEE International Conference on Data Mining. Pisa, Italy, 2008, pp. 413-422.

Extended Capabilities

Version History

Introduced in R2021b