bagOfNgrams

Bag-of-n-grams model

Description

A bag-of-n-grams model records the number of times that each n-gram appears in each document of a collection. An n-gram is a collection of n successive words.

bagOfNgrams does not split text into words. To create an array of tokenized documents, see tokenizedDocument.
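As a minimal sketch of the workflow described above (assuming Text Analytics Toolbox is installed; the sample sentences are made up for illustration), text is first tokenized with tokenizedDocument and the result is then passed to bagOfNgrams:

```matlab
% Illustrative input text (not from the original page).
str = ["an example of a short sentence"
       "a second short sentence"];

% bagOfNgrams expects tokenized input, so split the text into words first.
documents = tokenizedDocument(str);

% With no further options, the model counts bigrams (pairs of words).
bag = bagOfNgrams(documents);

% Inspect the model: one row of Ngrams per unique n-gram, and a sparse
% documents-by-n-grams matrix of counts.
ngrams = bag.Ngrams;
counts = full(bag.Counts);
```

Here counts(i,j) is the number of times the n-gram in row j of ngrams appears in document i.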

Creation

Syntax

Description

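bag = bagOfNgrams creates an empty bag-of-n-grams model.

bag = bagOfNgrams(documents) creates a bag-of-n-grams model and counts the bigrams (pairs of words) in documents.

bag = bagOfNgrams(___,'NgramLengths',lengths) counts n-grams of the specified lengths using any of the previous syntaxes.

bag = bagOfNgrams(uniqueNgrams,counts) creates a bag-of-n-grams model using the n-grams in uniqueNgrams and the corresponding frequency counts in counts. If uniqueNgrams contains <missing> values, then the corresponding values in counts are ignored.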
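The creation syntaxes above can be sketched as follows. This is an illustrative example, not taken from the original page: the sentences, n-grams, and count values are made up, and the layout of counts (one row per document, one column per n-gram) is stated in the comments.

```matlab
documents = tokenizedDocument([
    "the quick brown fox"
    "the quick red fox"]);

% Count bigrams and trigrams in the same model by passing a vector of lengths.
bag = bagOfNgrams(documents,'NgramLengths',[2 3]);

% Build a model directly from known n-grams and their counts.
% Each row of uniqueNgrams is one n-gram.
uniqueNgrams = ["the" "quick"
                "quick" "fox"];

% Rows of counts correspond to documents, columns to the rows of uniqueNgrams.
counts = [3 1
          0 2
          1 1];
bag2 = bagOfNgrams(uniqueNgrams,counts);
```

The second form can be useful when n-gram counts were computed elsewhere and only the model object is needed.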

Input Arguments

Properties

Object Functions

encode | Encode documents as matrix of word or n-gram counts |

tfidf | Term Frequency–Inverse Document Frequency (tf-idf) matrix |

topkngrams | Most frequent n-grams |

addDocument | Add documents to bag-of-words or bag-of-n-grams model |

removeDocument | Remove documents from bag-of-words or bag-of-n-grams model |

removeEmptyDocuments | Remove empty documents from tokenized document array, bag-of-words model, or bag-of-n-grams model |

removeNgrams | Remove n-grams from bag-of-n-grams model |

removeInfrequentNgrams | Remove infrequently seen n-grams from bag-of-n-grams model |

join | Combine multiple bag-of-words or bag-of-n-grams models |

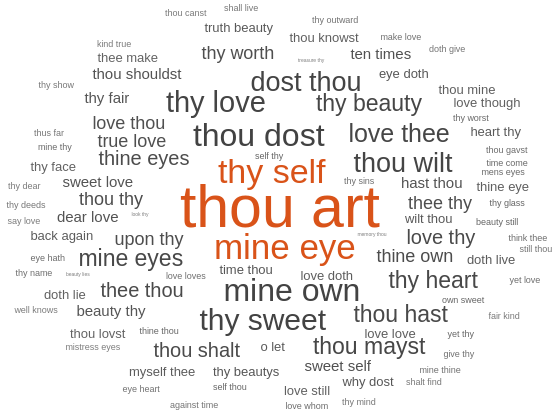

wordcloud | Create word cloud chart from text, bag-of-words model, bag-of-n-grams model, or LDA model |
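A few of the object functions listed above can be combined as in the following sketch. The input sentences are made up for illustration, and the threshold passed to removeInfrequentNgrams is an arbitrary choice:

```matlab
documents = tokenizedDocument([
    "the quick brown fox"
    "the quick red fox"
    "a lazy dog"]);
bag = bagOfNgrams(documents);          % bigram counts

% Table of the most frequent n-grams, sorted by count.
tbl = topkngrams(bag,3);

% Drop n-grams that appear at most once in total, then drop any
% documents that are left without n-grams.
bag = removeInfrequentNgrams(bag,1);
bag = removeEmptyDocuments(bag);

% Encode a new document as a row of counts over the remaining n-grams.
newDocument = tokenizedDocument("the quick dog");
M = encode(bag,newDocument);
```

In this sketch only the bigram "the quick" occurs more than once, so it is the only n-gram that survives the filtering step, and the third document is removed as empty.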

Examples

Version History

Introduced in R2018a

See Also

bagOfWords | addDocument | removeDocument | removeInfrequentNgrams | removeNgrams | removeEmptyDocuments | topkngrams | encode | tfidf | tokenizedDocument