vision.PeopleDetector

(Removed) Detect upright people using HOG features

vision.PeopleDetector has been removed. Use the peopleDetectorACF function to detect people instead.
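As a rough migration sketch, the replacement detector can be used as shown below. This assumes Computer Vision Toolbox is installed and that its sample image visionteam1.jpg is on the path; both are assumptions, not something this page states. The variable names are illustrative only.

```matlab
% Sketch: detect people with the ACF-based replacement detector.
% Assumes Computer Vision Toolbox and its sample image 'visionteam1.jpg'.
I = imread('visionteam1.jpg');

% Load the default pretrained ACF people detector.
detector = peopleDetectorACF;

% Each row of bboxes is [x y width height]; scores has one value per row.
[bboxes, scores] = detect(detector, I);

% Draw each box on a copy of the image, labeled with its score.
annotated = insertObjectAnnotation(I, 'rectangle', bboxes, scores);
```

To inspect the result, display annotated with imshow.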

Description

The people detector object detects people in an input image using the Histogram of Oriented Gradient (HOG) features and a trained Support Vector Machine (SVM) classifier. The object detects unoccluded people in an upright position.

To detect people in an image:

1. Create the vision.PeopleDetector object and set its properties.
2. Call the object with arguments, as if it were a function.

To learn more about how System objects work, see What Are System Objects?

Creation

Syntax

Description

peopleDetector = vision.PeopleDetector creates a people detector object, peopleDetector, that detects upright people in an image.

peopleDetector = vision.PeopleDetector(model) creates a people detector object and sets the ClassificationModel property to model.

peopleDetector = vision.PeopleDetector(Name,Value) sets properties using one or more name-value pairs. For example, peopleDetector = vision.PeopleDetector('ClassificationModel','UprightPeople_128x64').

Properties

Usage

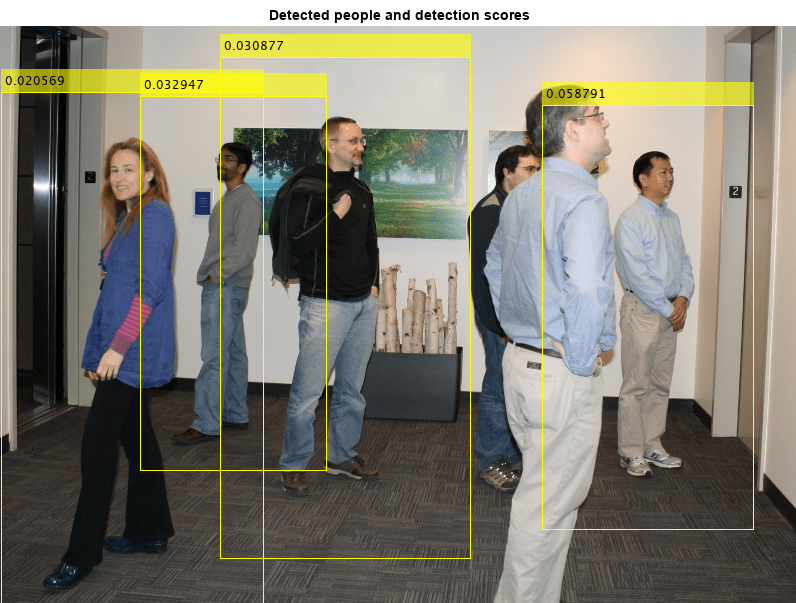

Description

bboxes = peopleDetector(I) detects people in the input image, I, and returns an M-by-4 matrix defining M bounding boxes, where M is the number of detected people. Each row of the output matrix, bboxes, contains a four-element vector, [x y width height], that specifies, in pixels, the upper-left corner and size of a bounding box. When no people are detected, the object returns an empty vector. The input image, I, must be a grayscale or truecolor (RGB) image.
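The [x y width height] layout can be unpacked as in the following minimal sketch. The bboxes values here are made up for illustration and do not come from a real detection. The inclusive bottom-right corner assumes that a box of width w starting at column x covers columns x through x+w-1, which is a common convention but is not stated on this page.

```matlab
% Hypothetical detector output: two boxes as [x y width height] rows.
bboxes = [10 20 64 128; 200 40 48 96];

% Number of detected people is the number of rows.
% size(...,1) also returns 0 for an empty result.
numPeople = size(bboxes, 1);

% Upper-left corner and box area in pixels.
topLeft = bboxes(:, 1:2);
areas = bboxes(:, 3) .* bboxes(:, 4);

% Inclusive bottom-right pixel, assuming the box spans x..x+w-1, y..y+h-1.
bottomRight = bboxes(:, 1:2) + bboxes(:, 3:4) - 1;
```

Checking size(bboxes, 1) before indexing avoids errors when no people are detected.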

[bboxes,scores] = peopleDetector(I) additionally returns a confidence value for the detections.
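One possible use of the confidence values is to keep only the stronger detections and rank them. The sketch below uses made-up bboxes and scores in place of real detector output, and the cutoff minScore is a hypothetical value chosen for illustration, not a recommended setting.

```matlab
% Hypothetical output standing in for [bboxes, scores] = peopleDetector(I).
bboxes = [10 20 64 128; 200 40 48 96; 300 60 48 96];
scores = [0.9; 0.2; 0.6];

% Hypothetical cutoff; tune it for your own data.
minScore = 0.5;

% Keep the detections whose score meets the cutoff.
keep = scores >= minScore;
strongBoxes = bboxes(keep, :);
strongScores = scores(keep);

% Rank the remaining detections from most to least confident.
[sortedScores, order] = sort(strongScores, 'descend');
sortedBoxes = strongBoxes(order, :);
```

Logical indexing keeps each box paired with its score, so the rows of sortedBoxes and sortedScores stay aligned.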

Input Arguments

Output Arguments

Object Functions

To use an object function, specify the System object™ as the first input argument. For example, to release system resources of a System object named obj, use this syntax:

release(obj)

Examples

References

[1] Dalal, N., and B. Triggs. “Histograms of Oriented Gradients for Human Detection.” Proceedings of IEEE Conference on Computer Vision and Pattern Recognition, June 2005, pp. 886-893.