Prompts Matter

I was in a meeting the other day and a coworker shared a smiley face they created using the AI Chat Playground. The image looked something like this:

And I suspect the prompt they used was something like this:

"Create a smiley face"

I imagine this output wasn't what my coworker had expected, so they were left thinking that this was as good as it gets without manually editing the code, and that the AI Chat Playground couldn't do any better.

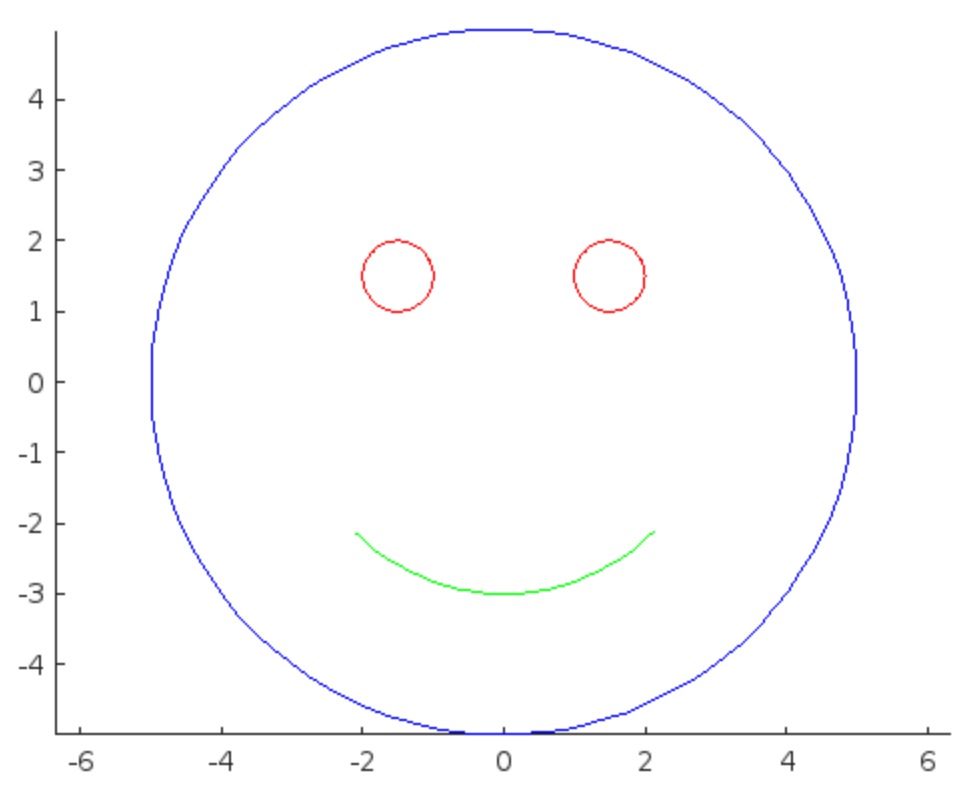

I thought I could get a better result using the Playground so I tried a more detailed prompt using a multi-step technique like this:

"Follow these instructions:

- Create code that plots a circle

- Create two smaller circles as eyes within the first circle

- Create an arc that looks like a smile in the lower part of the first circle"

The output of this prompt was better in my opinion.
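
The code the Playground generated isn't reproduced here, so purely as an illustration, here is a minimal sketch of what those three steps could translate to. It assumes Python and plain SVG output, neither of which comes from the Playground, and the function name `smiley_svg` and all the proportions are hypothetical.

```python
import math


def smiley_svg(size: int = 200) -> str:
    """Return an SVG smiley face, built in the same three steps as the prompt."""
    cx = cy = size / 2   # centre of the face
    r = size * 0.45      # face radius, leaving a small margin (arbitrary choice)

    # Step 1: the outer circle.
    face = (
        f'<circle cx="{cx:.1f}" cy="{cy:.1f}" r="{r:.1f}" '
        'fill="gold" stroke="black"/>'
    )

    # Step 2: two smaller circles as eyes, inside the upper half of the face.
    eye_r = r * 0.1
    eyes = [
        f'<circle cx="{cx + dx:.1f}" cy="{cy - r * 0.3:.1f}" '
        f'r="{eye_r:.1f}" fill="black"/>'
        for dx in (-r * 0.35, r * 0.35)
    ]

    # Step 3: an arc in the lower part of the face.
    # SVG's y axis points down, so angles from 30 to 150 degrees
    # sweep through the bottom of the circle and curve upward at the ends.
    smile_r = r * 0.6
    points = [
        (cx + smile_r * math.cos(math.radians(a)),
         cy + smile_r * math.sin(math.radians(a)))
        for a in range(30, 151, 5)
    ]
    smile = '<polyline points="{}" fill="none" stroke="black" stroke-width="3"/>'.format(
        " ".join(f"{x:.1f},{y:.1f}" for x, y in points)
    )

    body = "\n  ".join([face, *eyes, smile])
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" height="{size}">\n'
        f"  {body}\n</svg>"
    )
```

Writing the returned string to a file such as `smiley.svg` and opening it in a browser shows the face. Each step of the prompt maps to one clearly separated piece of code, which may be part of why the step-by-step wording helps.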

Both of these prompts are 'zero-shot' prompts, meaning they give the model no worked examples to imitate. They are also single-turn: the expectation is a good result from just one query, as opposed to a back-and-forth chat session working towards a desired outcome.

I wonder how many attempts people make before they decide they can't get anything more from the AI/LLM. There are times I'll send dozens of chat queries if I feel like I'm getting close to my goal, while other times I'll try just one or two. One thing I always find useful is seeing how others interact with AI models, which is what inspired me to share this.

Does anyone have examples of techniques that work well? I find multi-step instructions often produce good results.

1 Comment

I did some experiments. In my experience, a single clear and detailed prompt generally works better than multiple simple prompts. Furthermore, asking the AI to change existing code typically doesn't work very well. A good use case might therefore be to have the AI help you write some code, which you then modify to fit your needs.
