Deep Learning INT8 Quantization

Calibrate, validate, and deploy quantized pretrained series deep learning networks

Increase throughput, reduce resource utilization, and deploy larger networks onto smaller target boards by quantizing your deep learning networks.

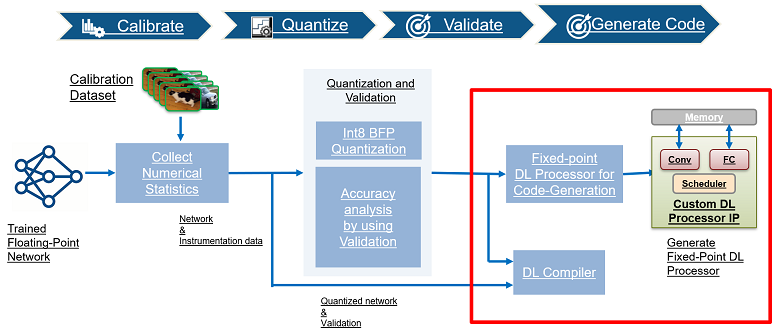

After calibrating your pretrained series network by collecting instrumentation data, quantize your series network and validate the accuracy of your quantized network. Once the quantized network has been validated, generate code for and deploy the quantized network.
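The calibrate, quantize, and validate steps described above can be illustrated with a small, self-contained sketch. This is a conceptual example in plain Python, not the toolbox workflow: it assumes symmetric per-tensor INT8 quantization, and every function name and data value in it (`calibrate`, `make_scale`, `quantize`, `dequantize`, `validate`, and the sample batches) is hypothetical and chosen only for illustration.

```python
# Conceptual sketch only: symmetric per-tensor INT8 quantization in plain Python.
# All names and data here are hypothetical; none come from Deep Learning HDL Toolbox.

def calibrate(batches):
    """Collect the dynamic range (largest absolute value) seen in calibration data."""
    return max(abs(x) for batch in batches for x in batch)

def make_scale(max_abs, num_bits=8):
    """Map the observed range onto the signed integer range [-127, 127]."""
    qmax = 2 ** (num_bits - 1) - 1
    return max_abs / qmax if max_abs > 0 else 1.0

def quantize(values, scale, num_bits=8):
    """Round each value to the nearest integer step and clip to the INT8 range."""
    qmax = 2 ** (num_bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]

def dequantize(q_values, scale):
    """Convert integer codes back to approximate real values."""
    return [q * scale for q in q_values]

def validate(values, scale):
    """Return the worst-case absolute error introduced by quantization."""
    restored = dequantize(quantize(values, scale), scale)
    return max(abs(a - b) for a, b in zip(values, restored))

# Made-up calibration and validation data for the illustration.
calibration_batches = [[0.10, -0.52, 0.33], [1.27, -0.98, 0.05]]
validation_values = [0.25, -0.75, 1.00, -1.20]

max_abs = calibrate(calibration_batches)          # step 1: collect dynamic range
scale = make_scale(max_abs)                       # step 2: derive the INT8 scale
worst_error = validate(validation_values, scale)  # step 3: check accuracy loss
```

Values that fall inside the calibrated range are reproduced to within half a quantization step, while values outside it are clipped. This is why calibration data should be representative of the inputs the deployed network will see.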

Topics

Get Started

- Supported Networks, Boards, and Tools

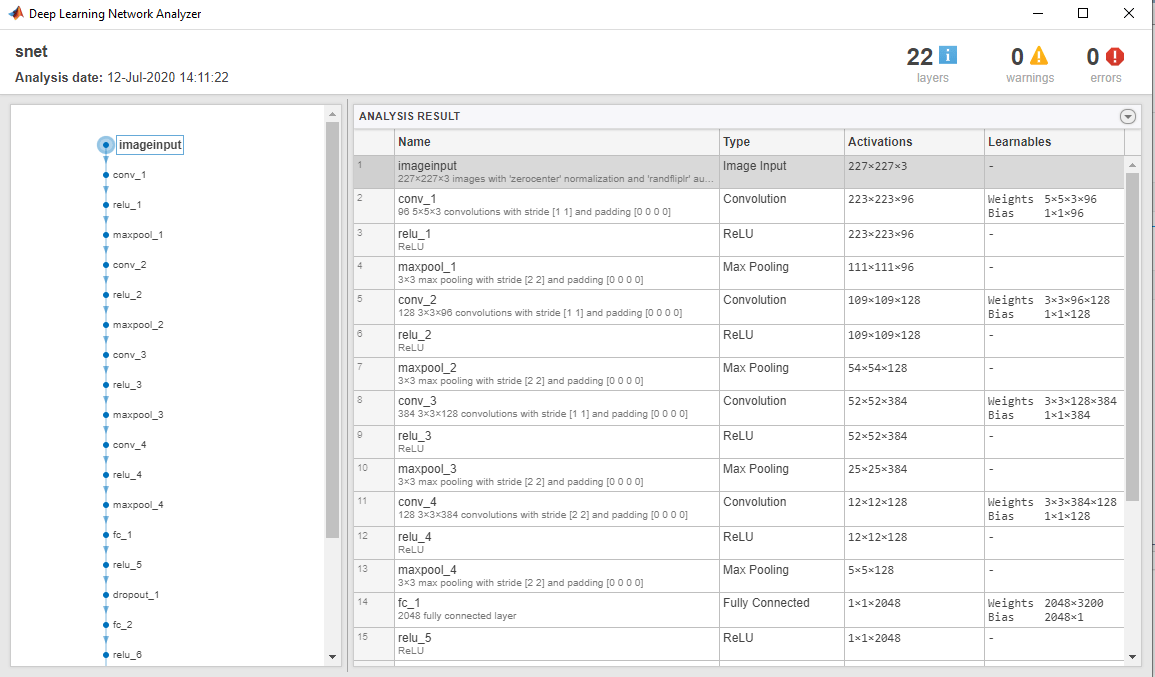

Pretrained deep learning networks and network layers for which code can be generated by Deep Learning HDL Toolbox™.

- Quantization of Deep Neural Networks

Learn about deep learning quantization tools and workflows.

Quantization Workflow

- Quantization Workflow System Requirements

See what products are required for the quantization of deep neural networks.

- Calibration

Simulate your pretrained series network and collect the dynamic range of weights and biases.

- Validation

Quantize and validate your pretrained series deep learning network.

- Code Generation and Deployment

Generate code and deploy your quantized pretrained series deep learning network.