Get Started with Deep Network Designer

This example shows how to create a simple recurrent neural network for deep learning sequence classification using Deep Network Designer.

To train a deep neural network to classify sequence data, you can use an LSTM network. An LSTM network lets you input sequence data into a network and make predictions based on the individual time steps of the sequence data.
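To see how an LSTM processes a sequence one time step at a time, here is a minimal base-MATLAB sketch of an LSTM forward pass over a single sequence. It is illustrative only:

- The weights are random.
- The sizes (3 channels, 8 hidden units, 50 time steps) are arbitrary.
- Stacking the gates in the order input, forget, candidate, output is an assumption of this sketch, not a description of the lstmLayer internals.

```matlab
% Minimal LSTM forward pass over one sequence, using only base MATLAB.
% Assumptions: random weights, illustrative sizes, and gates stacked in the
% order input, forget, candidate, output inside W, R, and b.
rng(0)
numChannels = 3;
numHidden = 8;
numTimeSteps = 50;

x = randn(numTimeSteps,numChannels);      % one sequence: time steps by channels
W = 0.1*randn(4*numHidden,numChannels);   % input weights for the four gates
R = 0.1*randn(4*numHidden,numHidden);     % recurrent weights
b = zeros(4*numHidden,1);                 % biases

sigmoid = @(z) 1./(1 + exp(-z));
h = zeros(numHidden,1);                   % hidden state
c = zeros(numHidden,1);                   % cell state
for t = 1:numTimeSteps
    z = W*x(t,:)' + R*h + b;              % gate pre-activations at step t
    inGate     = sigmoid(z(1:numHidden));
    forgetGate = sigmoid(z(numHidden+1:2*numHidden));
    candidate  = tanh(z(2*numHidden+1:3*numHidden));
    outGate    = sigmoid(z(3*numHidden+1:end));
    c = forgetGate.*c + inGate.*candidate;   % update the cell state
    h = outGate.*tanh(c);                    % update the hidden state
end

% With a last-time-step output, only the final hidden state is passed on
% to the classification layers.
hLast = h;
```

Because only the final hidden state is kept, sequences of any length map to a vector of fixed size. This is what allows one label per sequence.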

Load Sequence Data

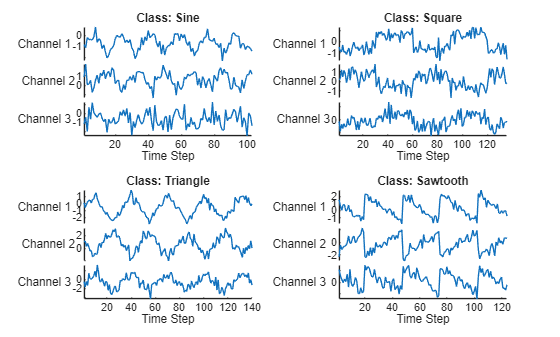

Load the example data from WaveformData. To access this data, open the example as a live script. This data contains waveforms from four classes: sine, square, triangle, and sawtooth. This example trains an LSTM neural network to recognize the type of waveform given time series data. Each sequence has three channels and varies in length.
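If you want to try the later steps without the example files, the following sketch builds a small hypothetical stand-in instead of loading WaveformData. It uses the layout that the rest of the example relies on:

- data is a cell array whose elements are numTimeSteps-by-3 matrices.
- labels is a categorical vector.

The waveform formulas, the period, and the sequence lengths are illustrative assumptions. This is not the contents of the WaveformData file.

```matlab
% Hypothetical stand-in for WaveformData (not the real file): a cell array of
% numTimeSteps-by-numChannels sequences and a categorical label vector.
rng(0)                                       % reproducible lengths and phases
numObservations = 8;
numChannels = 3;
waveTypes = ["Sine" "Square" "Triangle" "Sawtooth"];

data = cell(numObservations,1);
labelStrings = strings(numObservations,1);
for n = 1:numObservations
    numTimeSteps = randi([50 150]);          % sequences vary in length
    t = (0:numTimeSteps-1)';
    % Period of 25 time steps, with a random phase offset per channel.
    phase = 2*pi*t/25 + 2*pi*rand(1,numChannels);
    waveType = waveTypes(mod(n-1,4) + 1);    % cycle through the four classes
    switch waveType
        case "Sine"
            x = sin(phase);
        case "Square"
            x = sign(sin(phase));
        case "Triangle"
            x = (2/pi)*asin(sin(phase));
        case "Sawtooth"
            x = 2*mod(phase/(2*pi),1) - 1;
    end
    data{n} = x;
    labelStrings(n) = waveType;
end
labels = categorical(labelStrings);
```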

load WaveformData

Visualize some of the sequences in a plot.

numChannels = size(data{1},2);   % each sequence is numTimeSteps-by-numChannels
classNames = categories(labels);

figure
tiledlayout(2,2)
for i = 1:4
    nexttile
    stackedplot(data{i},DisplayLabels="Channel "+string(1:numChannels))
    xlabel("Time Step")
    title("Class: " + string(labels(i)))
end

Partition the data into a training set containing 80% of the data and validation and test sets each containing 10% of the data. To partition the data, use the trainingPartitions function. To access this function, open the example as a live script.

numObservations = numel(data);
[idxTrain,idxValidation,idxTest] = trainingPartitions(numObservations,[0.8 0.1 0.1]);

XTrain = data(idxTrain);
TTrain = labels(idxTrain);

XValidation = data(idxValidation);
TValidation = labels(idxValidation);

XTest = data(idxTest);
TTest = labels(idxTest);
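The trainingPartitions helper ships with the example, so its exact implementation is not shown here. This hypothetical sketch gives a rough idea of what such a split involves. It shuffles the observation indices and cuts them at the cumulative 80/10/10 boundaries, using only base MATLAB and a made-up data set size of 100.

```matlab
% Minimal sketch of an 80/10/10 random split, assuming only base MATLAB.
% This is a hypothetical stand-in, not the shipped trainingPartitions helper.
numObservations = 100;                 % hypothetical data set size
splits = [0.8 0.1 0.1];                % training, validation, test fractions

rng(0)                                 % reproducible shuffle
idx = randperm(numObservations);       % shuffled observation indices
edges = [0 round(cumsum(splits)*numObservations)];
edges(end) = numObservations;          % guard against rounding drift

idxTrain = idx(edges(1)+1:edges(2));
idxValidation = idx(edges(2)+1:edges(3));
idxTest = idx(edges(3)+1:edges(4));
```

Shuffling before splitting ensures that each partition draws from all classes rather than from one contiguous block of the data.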

Define Network Architecture

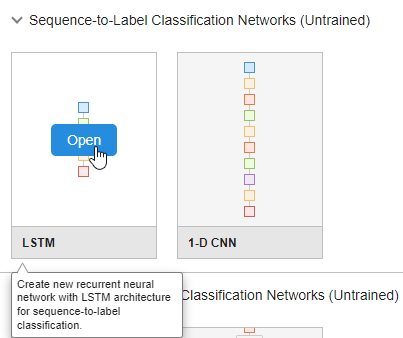

To build the network, use the Deep Network Designer app.

deepNetworkDesigner

To create a sequence network, in the Sequence-to-Label Classification Networks (Untrained) section, click LSTM. Doing so opens a prebuilt network suitable for sequence-to-label classification problems.

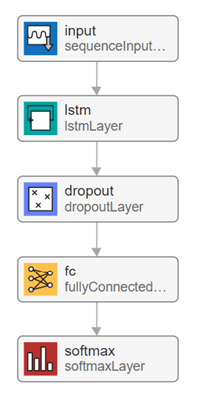

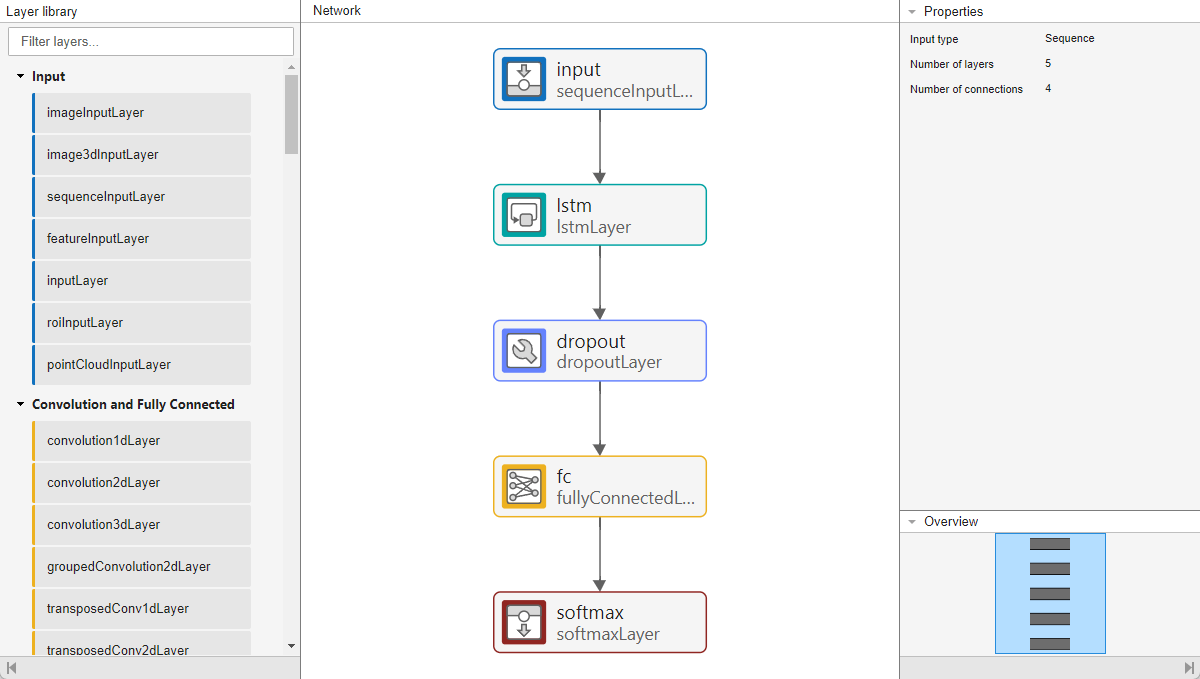

Deep Network Designer displays the prebuilt network.

To adapt this sequence network to the Waveform data set, change the input size of the first layer and the output size of the fully connected layer.

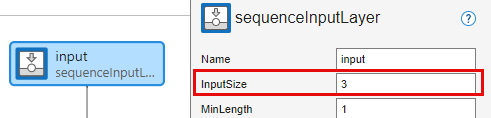

Select the sequence input layer, input, and set InputSize to 3 to match the number of channels.

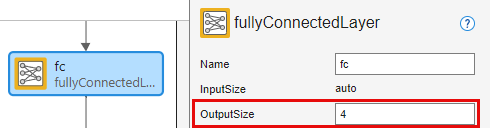

Select the fully connected layer, fc, and set OutputSize to 4 to match the number of classes.

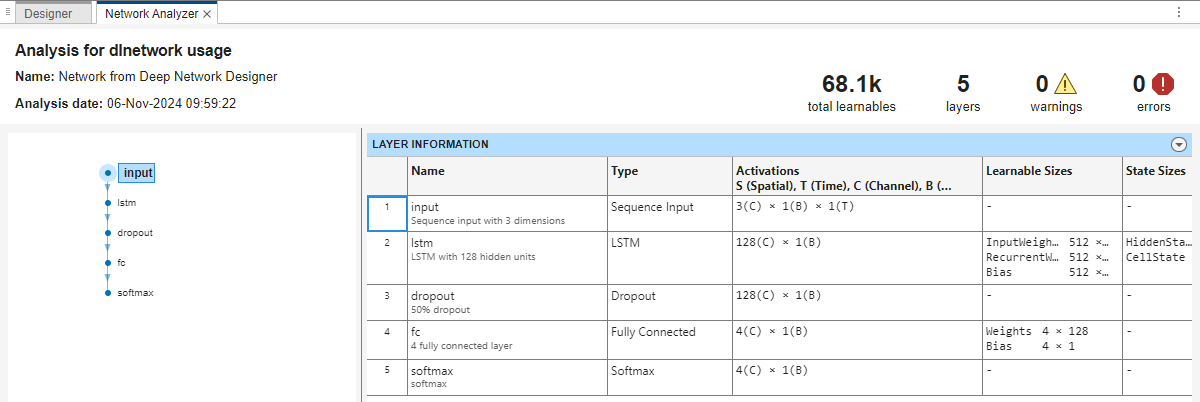
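If you prefer the command line, the edited network can be sketched programmatically (requires Deep Learning Toolbox). This sketch assumes the prebuilt template has four layers:

- a sequence input layer
- an LSTM layer that outputs only the last time step
- a fully connected layer
- a softmax layer

The 128 hidden units and the layer names other than input and fc are assumptions, so compare them with what Deep Network Designer shows. The last lines are a quick forward-pass check: one dummy sequence should produce four class scores.

```matlab
% Sketch of the edited network built at the command line instead of in the app.
% Assumption: 128 hidden units in the LSTM layer; check the value in the app.
numChannels = 3;
numClasses = 4;
numHiddenUnits = 128;   % assumed; match the prebuilt template

layers = [
    sequenceInputLayer(numChannels,Name="input")
    lstmLayer(numHiddenUnits,OutputMode="last",Name="lstm")
    fullyConnectedLayer(numClasses,Name="fc")
    softmaxLayer(Name="softmax")];
net = dlnetwork(layers);

% Forward-pass check: one dummy sequence with 20 time steps
% (format: channel, time, batch) should yield 4 scores that sum to 1.
X = dlarray(rand(numChannels,20,1),"CTB");
scores = predict(net,X);
```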

To check that the network is ready for training, click Analyze. The Deep Learning Network Analyzer reports no errors or warnings, so the network is ready for training.

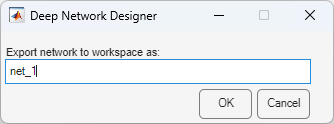

To export the network, click Export and then click OK. The app saves the network in the variable net_1.

Specify Training Options

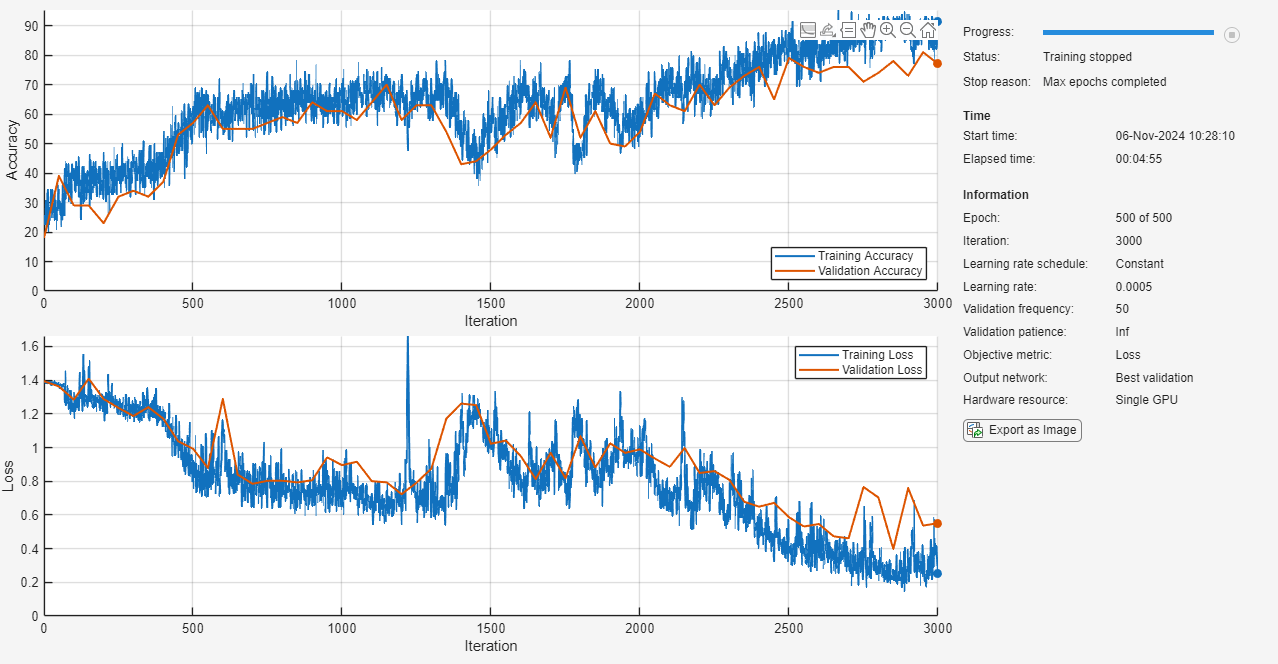

Specify the training options. Choosing among the options requires empirical analysis.

options = trainingOptions("adam", ...
    MaxEpochs=500, ...
    InitialLearnRate=0.0005, ...
    GradientThreshold=1, ...
    ValidationData={XValidation,TValidation}, ...
    Shuffle="every-epoch", ...
    Plots="training-progress", ...
    Metrics="accuracy", ...
    Verbose=false);
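GradientThreshold=1 limits how large a single update step can be. With the default gradient threshold method, "l2norm", any learnable parameter's gradient whose L2 norm exceeds the threshold is rescaled so that its norm equals the threshold. The sketch below applies that rule by hand to one hypothetical gradient vector.

```matlab
% Sketch of L2-norm gradient clipping, the idea behind GradientThreshold=1.
% Assumes the "l2norm" method applied to a single parameter's gradient.
gradientThreshold = 1;
g = [3; 4];                            % hypothetical gradient with L2 norm 5

gNorm = norm(g);
if gNorm > gradientThreshold
    g = g*(gradientThreshold/gNorm);   % rescale to the threshold, same direction
end
```

The direction of the gradient is unchanged; only its length is capped. This helps keep LSTM training stable when gradients occasionally become very large.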

Train Neural Network

Train the neural network using the trainnet function. Because the aim is classification, specify cross-entropy loss.

net = trainnet(XTrain,TTrain,net_1,"crossentropy",options);
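The "crossentropy" loss compares the softmax scores with one-hot encoded targets, penalizing a low score for the true class. Here is a minimal sketch for two hypothetical observations. It assumes classes along rows, observations along columns, and averaging over the number of observations.

```matlab
% Sketch of cross-entropy loss for one-hot targets, averaged over observations.
% Layout assumption: rows are classes, columns are observations.
Y = [0.7 0.1
     0.1 0.6
     0.1 0.2
     0.1 0.1];          % hypothetical softmax scores for two observations
T = [1 0
     0 1
     0 0
     0 0];              % one-hot targets: class 1, then class 2

% Only the log score of the true class contributes for each observation.
loss = -sum(T.*log(Y),"all")/size(Y,2);
```

Here the loss equals -(log(0.7) + log(0.6))/2.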

Test Neural Network

To test the neural network, classify the test data and calculate the classification accuracy.

Make predictions using the minibatchpredict function and convert the scores to labels using the scores2label function.

scores = minibatchpredict(net,XTest);
YTest = scores2label(scores,classNames);
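Conceptually, converting scores to labels picks the highest-scoring class for each observation. This base-MATLAB sketch shows that idea. The scores, the class order, and the layout (one row per observation, one column per class) are hypothetical. In the example itself, scores2label remains the function to use.

```matlab
% Sketch of scores-to-labels conversion by taking the top-scoring class.
% Assumes one row per observation and one column per class, ordered as classNames.
classNames = {'Sawtooth';'Sine';'Square';'Triangle'};   % hypothetical order
scores = [0.1 0.7 0.1 0.1
          0.6 0.2 0.1 0.1];

[~,idx] = max(scores,[],2);                  % index of the top class per row
YPred = categorical(classNames(idx),classNames);
```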

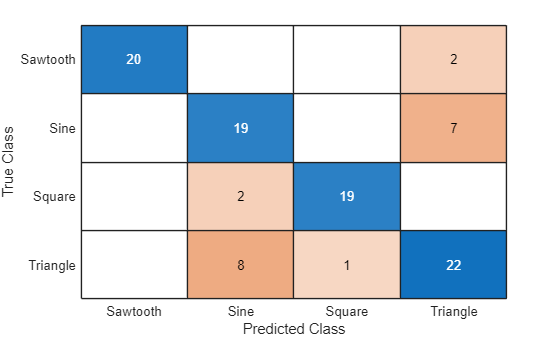

Calculate the classification accuracy. The accuracy is the fraction of test observations whose predicted label matches the true label.

acc = mean(YTest == TTest)

acc = 0.8000

Visualize the predictions in a confusion chart.

figure
confusionchart(TTest,YTest)
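A confusion chart displays counts of true classes (rows) against predicted classes (columns). The accuracy is the sum of the diagonal divided by the total count. This sketch computes those counts with accumarray for a handful of hypothetical labels. It illustrates the idea and is not a replacement for confusionchart.

```matlab
% Sketch of the counts behind a confusion chart, using only base MATLAB.
% Rows are true classes and columns are predicted classes (hypothetical labels).
classNames = ["Sine" "Square" "Triangle" "Sawtooth"];
TTest = categorical(["Sine" "Sine" "Square" "Triangle" "Sawtooth"],classNames);
YTest = categorical(["Sine" "Square" "Square" "Triangle" "Sine"],classNames);

trueIdx = double(TTest);                % category index of each true label
predIdx = double(YTest);                % category index of each prediction
numClasses = numel(classNames);
C = accumarray([trueIdx(:) predIdx(:)],1,[numClasses numClasses]);

acc = sum(diag(C))/sum(C,"all");        % correct predictions / all predictions
```

The off-diagonal entries show which classes the network confuses, for example square waves predicted for sine waves.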

To improve the performance of this network, you can try padding the data or using a different network architecture. For more information, see Sequence Classification Using Deep Learning.
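One padding approach applies when the LSTM layer outputs only the last time step, as assumed for this sequence-to-label template. In that case, padding appended to the end of shorter sequences is the last thing the layer sees. Padding on the left keeps the real data at the final time steps instead. trainingOptions accepts SequencePaddingDirection="left" for this. The sketch below shows the idea by hand on two hypothetical sequences.

```matlab
% Sketch of left-padding variable-length sequences to a common length.
% Zeros go before the data so the real values occupy the final time steps.
seqs = {ones(3,3); 2*ones(5,3)};        % hypothetical numTimeSteps-by-3 sequences
maxLen = max(cellfun(@(x) size(x,1),seqs));

padded = cell(size(seqs));
for n = 1:numel(seqs)
    numPad = maxLen - size(seqs{n},1);
    padded{n} = [zeros(numPad,size(seqs{n},2)); seqs{n}];   % pad at the start
end
```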