Projection

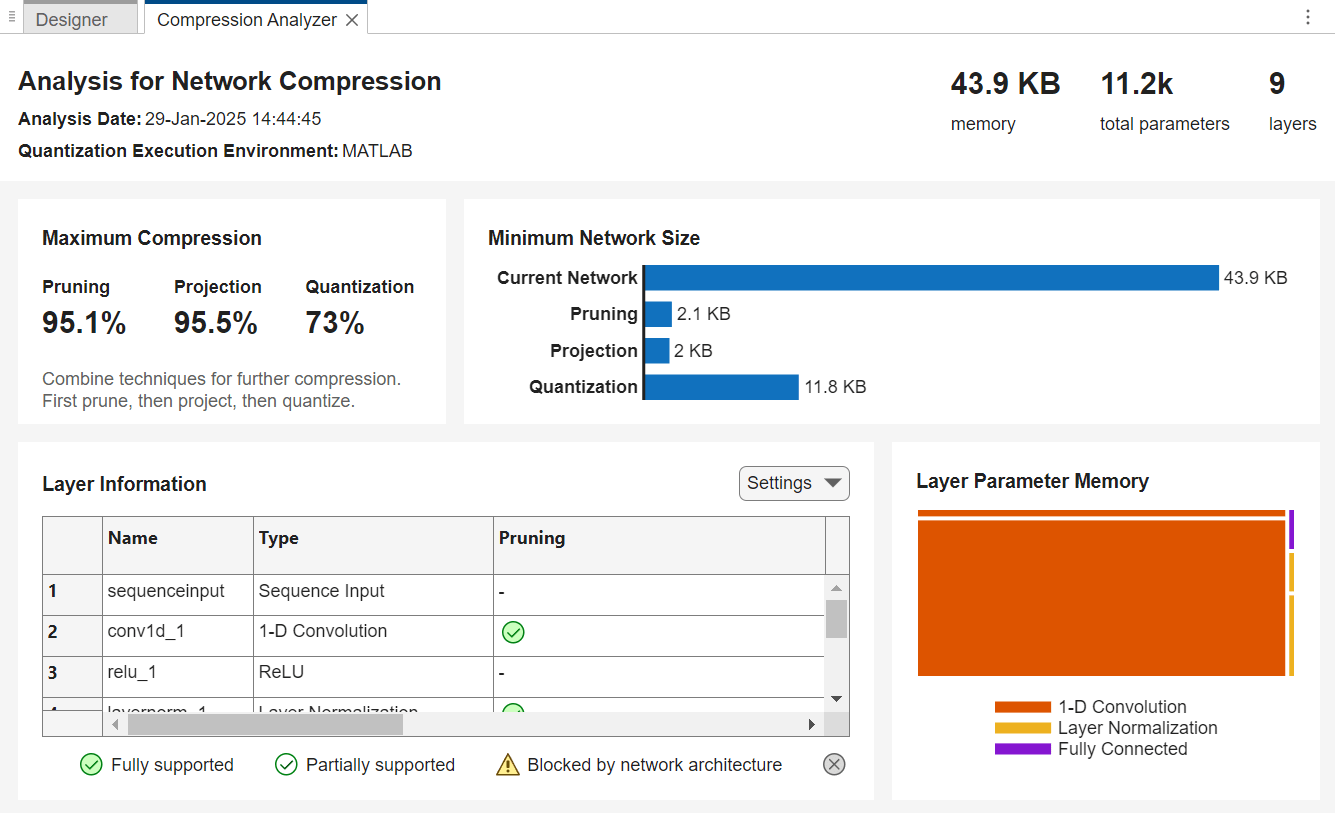

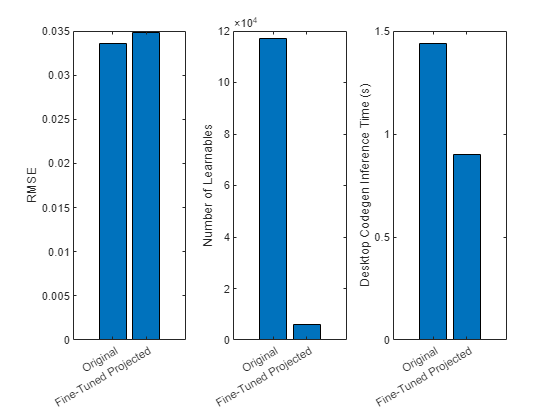

Projection compresses a network in two steps: it performs principal component analysis (PCA) on the layer activations, using a data set representative of the training data, and then applies linear projections to the layer learnable parameters. Forward passes of a projected deep neural network are typically faster when you deploy the network to embedded hardware using library-free C/C++ code generation.
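As a rough illustration of the idea for a single fully connected layer, the following NumPy sketch runs PCA on the layer's input activations and replaces the weight matrix with two smaller factors. It is not the toolbox implementation: the function name `project_dense_layer`, the explained-variance threshold, and the mean-centering step are assumptions made for this example only.

```python
import numpy as np

def project_dense_layer(W, b, X, explained_variance=0.95):
    """Sketch: project a dense layer y = W @ x + b onto the principal
    subspace of its input activations.

    W: (out, in) weights, b: (out,) bias,
    X: (n_samples, in) activations from representative data.
    Returns W_down (k, in), W_up (out, k), and an adjusted bias, so that
    y is approximated by W_up @ (W_down @ x) + b_new.
    """
    # PCA of the activations (centering is an assumption of this sketch)
    mu = X.mean(axis=0)
    Xc = X - mu
    cov = Xc.T @ Xc / (X.shape[0] - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)      # ascending eigenvalues
    order = np.argsort(eigvals)[::-1]           # reorder to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]

    # Keep the fewest components that reach the requested variance
    ratio = np.cumsum(eigvals) / np.sum(eigvals)
    k = min(int(np.searchsorted(ratio, explained_variance) + 1), len(eigvals))
    Q = eigvecs[:, :k]                          # (in, k) orthonormal basis

    # Linear projection of the learnable parameters
    W_down = Q.T                                # (k, in)
    W_up = W @ Q                                # (out, k)
    # Fold the mean back in so the output is exact for inputs in the subspace
    b_new = b + W @ mu - W_up @ (W_down @ mu)
    return W_down, W_up, b_new

# Demo on synthetic activations that lie close to a 4-dimensional subspace
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 4)) @ rng.normal(size=(4, 32)) + 1.0
W = rng.normal(size=(16, 32))
b = rng.normal(size=16)

W_down, W_up, b_new = project_dense_layer(W, b, X, explained_variance=0.99)
print("original parameters: ", W.size + b.size)
print("projected parameters:", W_down.size + W_up.size + b_new.size)
```

In this sketch the weight count changes from `out * in` to `k * (in + out)`, so the factorization only saves memory when the number of retained components `k` is small relative to the layer width.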

For a detailed overview of the compression techniques available in Deep Learning Toolbox™ Model Compression Library, see Reduce Memory Footprint of Deep Neural Networks.

Functions

| Name | Description |
| --- | --- |
| compressNetworkUsingProjection | Compress neural network using projection (Since R2022b) |
| neuronPCA | Principal component analysis of neuron activations (Since R2022b) |
| unpackProjectedLayers | Unpack projected layers of neural network (Since R2023b) |
| ProjectedLayer | Compressed neural network layer using projection (Since R2023b) |
| gruProjectedLayer | Gated recurrent unit (GRU) projected layer for recurrent neural network (RNN) (Since R2023b) |
| lstmProjectedLayer | Long short-term memory (LSTM) projected layer for recurrent neural network (RNN) (Since R2022b) |

Topics

- Compress Neural Network Using Projection

  Compress a neural network using projection and principal component analysis (PCA).

- Train Smaller Neural Network Using Knowledge Distillation

  Reduce the memory footprint of a deep neural network using knowledge distillation. (Since R2023b)