Assess Simulation and Compare Output Data

Functional testing requires assessing simulation behavior and comparing simulation output to expected output. For example, you can:

Analyze signal behavior in a time interval after an event.

Compare two variables during simulation.

Compare timeseries data to a baseline.

Find peaks in timeseries data, and compare the peaks to a pattern.

This topic provides an overview to help you author assessments for your application, with links to more detailed examples of each assessment.

You can include assessments in a test case, a model, or a test harness.

In a test case, you can:

Compare simulation output to baseline data.

Compare the output of two simulations.

Post-process simulation output using a custom script.

Assess temporal properties using logical and temporal assessments. If a test case contains one or more defined assessments and their associated symbols, you can use the API or the Test Manager to list them and get information about them, copy them to another test case, or remove them from the test case. For information on using the API, see sltest.testmanager.Assessment, sltest.testmanager.AssessmentSymbol, and sltest.testmanager.TestCase. For the Test Manager, see Assess Temporal Logic by Using Temporal Assessments.

In a test harness or model, you can:

Verify logical conditions at run time using a verify statement, which returns a pass, fail, or untested result for each time step.

Use assert statements to stop simulation on a failure.

Use blocks from the Model Verification or Simulink® Design Verifier™ library.

Compare Simulation Data to Baseline Data or Another Simulation

Baseline criteria are tolerances for simulation data compared to baseline data. Equivalence criteria are tolerances for two sets of simulation data, each from a different simulation. You can set tolerances for numeric, enumerated, or logical data.

Numeric tolerances are absolute or relative; time tolerances are leading or lagging. For numeric data, you can specify absolute, relative, leading, or lagging tolerance. For enumerated or logical data, you can specify leading or lagging tolerance. Results outside the tolerances fail. For more information, see Set Signal Tolerances.
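To make the absolute and relative tolerances concrete, the following is a minimal sketch of a pointwise check in plain MATLAB. It assumes the pass rule abs(sim - baseline) <= max(absTol, relTol*abs(baseline)), where the larger of the two tolerances applies at each sample; the signals, absTol, and relTol are hypothetical values, and the sketch does not model leading or lagging time tolerances.

```matlab
% Sketch: pointwise absolute/relative tolerance check against a baseline.
% ASSUMPTION: a sample passes when
%   abs(sim - baseline) <= max(absTol, relTol * abs(baseline)).
% All data values below are hypothetical.
t        = 0:0.1:0.5;                        % common time vector
baseline = [0 1.00 2.00 3.00 4.00 5.00];     % hypothetical baseline signal
sim      = [0 1.01 2.05 3.00 4.30 5.02];     % hypothetical simulation output
absTol   = 0.02;
relTol   = 0.01;                             % 1 percent

tol  = max(absTol, relTol .* abs(baseline)); % per-sample tolerance band
err  = abs(sim - baseline);                  % per-sample deviation
pass = err <= tol;                           % logical pass/fail per sample

fprintf('%d of %d samples within tolerance\n', nnz(pass), numel(pass));
failTimes = t(~pass)                         % times of samples outside the band
```

Here the samples at t = 0.2 and t = 0.4 fail, because their deviations exceed both the absolute tolerance and the relative tolerance at those points.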

Specify the baseline data and tolerances in the Test Manager Baseline Criteria or Equivalence Criteria section. Results appear in the Results and Artifacts pane. The comparison plot displays the data and differences.

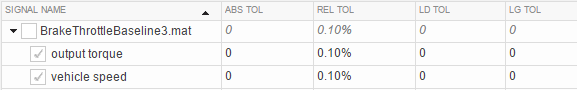

This graphic shows an example of baseline criteria that set a relative tolerance for the output torque and vehicle speed signals.

Post-Process Results With a Custom Script

A custom criteria script lets you analyze simulation data with specialized functions. For example, you could find peaks in timeseries data using Curve Fitting Toolbox™ functions. A custom criteria script is MATLAB® code that runs after the simulation and uses the MATLAB Unit Test framework.
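As an illustration of finding peaks and comparing them to a pattern, here is a minimal sketch. It swaps in islocalmax (core MATLAB, R2017b and later) in place of Curve Fitting Toolbox functions, uses a hypothetical sine wave as stand-in data, and treats expectedPeakTimes and the 0.02 s tolerance as hypothetical values. Because the sketch runs outside the Test Manager, it creates its own test object with matlab.unittest.TestCase.forInteractiveUse.

```matlab
% Sketch: find peaks in timeseries data and compare them to an expected pattern.
% ASSUMPTIONS: the signal is hypothetical stand-in data; islocalmax replaces
% Curve Fitting Toolbox functions; expectedPeakTimes and the tolerance are
% illustrative values.
t = (0:0.01:2)';
y = sin(2*pi*t);                        % stand-in for a logged output signal

isPeak    = islocalmax(y);              % logical mask of local maxima
peakTimes = t(isPeak);
peakVals  = y(isPeak);

expectedPeakTimes = [0.25; 1.25];       % hypothetical expected pattern

% Standalone test object; inside a custom criteria script, use the
% 'test' object that the script already receives.
test = matlab.unittest.TestCase.forInteractiveUse;
test.verifyNumElements(peakTimes, numel(expectedPeakTimes), ...
    'unexpected number of peaks');
test.verifyEqual(peakTimes, expectedPeakTimes, 'AbsTol', 0.02);
```

Checking the number of peaks first gives a clearer diagnostic than the element-wise comparison alone when a peak is missing or extra.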

Write a custom criteria script in the Test Manager Custom Criteria section of the test case. Custom criteria results appear in the Results and Artifacts pane. Results are shown for individual MATLAB Unit Test qualifications. For more information, see Process Test Results with Custom Scripts.

This simple custom criteria script verifies that the value of slope is greater than 0.

```matlab
% A simple custom criteria
% (slope must already be computed earlier in the script)
test.verifyGreaterThan(slope,0,'slope must be greater than 0')
```
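The criterion above assumes that slope already exists. One way it could be computed is a first-order least-squares fit with polyfit, sketched below. The t and y vectors are hypothetical stand-ins for data that a real script would extract from the simulation output, and forInteractiveUse supplies a test object so that the lines run at the command line.

```matlab
% Sketch: compute 'slope' from time/value data, then apply the criterion.
% ASSUMPTION: t and y are hypothetical; a real custom criteria script
% would take them from the simulation output.
t = (0:0.1:1)';
y = 2*t + 0.5;                         % hypothetical rising signal

p     = polyfit(t, y, 1);              % linear fit: y ~ p(1)*t + p(2)
slope = p(1);                          % fitted slope

% Standalone test object for trying this outside the Test Manager.
test = matlab.unittest.TestCase.forInteractiveUse;
test.verifyGreaterThan(slope, 0, 'slope must be greater than 0')
```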

Run-Time Assessments

verify Statements

For run-time assessments, use verify statements in a Test Assessment or Test Sequence block. A verify statement evaluates a logical expression and returns a pass, fail, or untested result for each simulation time step. verify statements can include temporal and conditional syntax. A failure does not stop simulation.

For information about using verify statements in your model, see Verify Model Simulation by Using when Decomposition and verify.
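To show how the three results can arise, here is a minimal plain-MATLAB model of a verify statement guarded by a condition. It is not Test Sequence syntax: it only assumes that a step where the verify is not evaluated counts as untested, and the enabled mask, the speed signal, and the 0.5 limit are hypothetical.

```matlab
% Sketch: per-time-step pass/fail/untested results of a guarded verify.
% ASSUMPTION: plain-MATLAB model of the result semantics, not Test Sequence
% syntax; the signals and the 0.5 limit are hypothetical.
t       = 0:0.1:0.6;
enabled = logical([0 1 1 1 0 0 1]);     % hypothetical condition guarding the verify
speed   = [0.0 0.2 0.4 0.7 0.9 0.3 0.1];

results = repmat("untested", size(t));  % steps where verify is not evaluated
cond    = speed <= 0.5;                 % the expression being verified
results(enabled &  cond) = "pass";
results(enabled & ~cond) = "fail";      % a fail does not stop later steps from being evaluated
disp([string(t); results])
```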

assert Statements

Like verify statements, assert statements evaluate logical expressions, but if an assertion fails, simulation stops. To make results easier to interpret, add an optional message. For more information, see assert.
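The contrast with verify can be sketched in plain MATLAB by stepping through hypothetical data and using MATLAB's own assert, which throws an error on a false condition, as a stand-in for a failed assert statement. This is an illustration of the stop-on-failure behavior under those assumptions, not Test Sequence syntax.

```matlab
% Sketch: model assert semantics, where the first failing step stops the run.
% ASSUMPTION: plain-MATLAB illustration, not Test Sequence syntax;
% the signal values and the 0.5 limit are hypothetical.
t        = 0:0.1:0.6;
speed    = [0.0 0.2 0.4 0.7 0.9 0.3 0.1];
lastStep = numel(t);                  % stays at the final step if nothing fails
try
    for k = 1:numel(t)
        % MATLAB's assert throws an error when the condition is false,
        % halting the loop the way a failed assert halts simulation.
        assert(speed(k) <= 0.5, 'speed exceeded 0.5 at t = %g', t(k));
    end
catch failure
    lastStep = k;                     % step at which the run stopped
    disp(failure.message)             % the message makes the failure easier to interpret
end
```

Unlike the verify model, steps after the first failure are never evaluated.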

Model Verification Blocks

Use blocks from the Simulink Model Verification library or the Simulink Design Verifier library to assess signals in your model or test harness. The pass, fail, or untested results from each block appear in the Test Manager. For more information, see Examine Model Verification Results by Using Simulation Data Inspector.

Note

No Model Verification library block, including the Assertion block, produces verification results when used in a For Each subsystem. Use a Test Sequence block with verify statements instead.

Logical and Temporal Assessments

Logical and temporal assessments evaluate temporal properties such as model timing and event ordering over logged data. Use temporal assessments for additional system verification after the simulation is complete. Temporal assessments are associated with test cases in the Test Manager. Author temporal assessments by using the Logical and Temporal Assessments Editor. See Assess Temporal Logic by Using Temporal Assessments for more information.
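To illustrate the kind of property a temporal assessment expresses, here is a hand-written check of a bounded-response requirement over logged data: whenever trigger rises, response must become true within maxDelay seconds. This sketch does not reflect how the Logical and Temporal Assessments Editor evaluates assessments; the signals and the 0.3 s bound are hypothetical.

```matlab
% Sketch: bounded-response check over logged data.
% "Whenever trigger rises, response must become true within maxDelay seconds."
% ASSUMPTION: hand-written illustration with hypothetical signals; not the
% implementation behind the Logical and Temporal Assessments Editor.
t        = (0:0.1:1.5)';
trigger  = (t > 0.15 & t < 0.35) | (t > 0.95 & t < 1.05);  % two trigger events
response = t > 0.35 & t < 0.55;                             % responds only to the first
maxDelay = 0.3;

riseIdx = find(diff([false; trigger]) == 1);   % rising edges of trigger
ok = false(size(riseIdx));
for k = 1:numel(riseIdx)
    t0       = t(riseIdx(k));
    inWindow = t >= t0 & t <= t0 + maxDelay + 1e-9;  % small margin for round-off in t
    ok(k)    = any(response(inWindow));
end
disp(table(t(riseIdx), ok, 'VariableNames', {'TriggerTime', 'Pass'}))
```

The first trigger event passes because response becomes true 0.2 s later; the second fails because response never follows it within the window.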

Temporal assessment evaluation results appear in the Results and Artifacts pane. Use the Expression Tree to investigate results in detail. If you have a Requirements Toolbox™ license, you can establish traceability between requirements and temporal assessments by creating requirement links. See Link to Requirements for more information.