Framework for Ensemble Learning

Using various methods, you can meld results from many weak learners into one high-quality ensemble predictor. These methods closely follow the same syntax, so you can try different methods with minor changes in your commands.

You can create an ensemble for classification by using fitcensemble or for regression by using fitrensemble.

To train an ensemble for classification using fitcensemble, use this syntax.

ens = fitcensemble(X,Y,Name,Value)

Xis the matrix of data. Each row contains one observation, and each column contains one predictor variable.Yis the vector of responses, with the same number of observations as the rows inX.Name,Valuespecify additional options using one or more name-value pair arguments. For example, you can specify the ensemble aggregation method with the'Method'argument, the number of ensemble learning cycles with the'NumLearningCycles'argument, and the type of weak learners with the'Learners'argument. For a complete list of name-value pair arguments, see thefitcensemblefunction page.

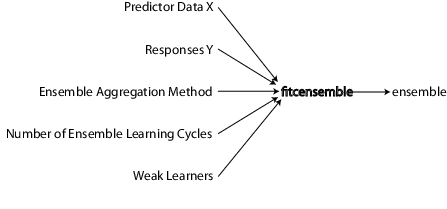

This figure shows the information you need to create a classification ensemble.

Similarly, you can train an ensemble for regression by using fitrensemble, which follows the same syntax as fitcensemble. For details on the input arguments and name-value pair arguments, see the fitrensemble function page.

For all classification or nonlinear regression problems, follow these steps to create an ensemble:

Prepare the Predictor Data

All supervised learning methods start with predictor data, usually called X in this documentation. X can be stored in a matrix or a table. Each row of X represents one observation, and each column of X represents one variable or predictor.

Prepare the Response Data

You can use a wide variety of data types for the response data.

For regression ensembles,

Ymust be a numeric vector with the same number of elements as the number of rows ofX.For classification ensembles,

Ycan be a numeric vector, categorical vector, character array, string array, cell array of character vectors, or logical vector.For example, suppose your response data consists of three observations in the following order:

true,false,true. You could expressYas:[1;0;1](numeric vector)categorical({'true','false','true'})(categorical vector)[true;false;true](logical vector)['true ';'false';'true '](character array, padded with spaces so each row has the same length)["true","false","true"](string array){'true','false','true'}(cell array of character vectors)

Use whichever data type is most convenient. Because you cannot represent missing values with logical entries, do not use logical entries when you have missing values in

Y.

fitcensemble and fitrensemble ignore missing values in Y when creating an ensemble. This table contains the method of including missing entries.

| Data Type | Missing Entry |

|---|---|

| Numeric vector | NaN |

| Categorical vector | <undefined> |

| Character array | Row of spaces |

| String array | <missing> or "" |

| Cell array of character vectors | '' |

| Logical vector | (not possible to represent) |

Choose an Applicable Ensemble Aggregation Method

To create classification and regression ensembles with fitcensemble and fitrensemble, respectively, choose appropriate algorithms from this list.

For classification with two classes:

'AdaBoostM1'— Adaptive boosting'LogitBoost'— Adaptive logistic regression'GentleBoost'— Gentle adaptive boosting'RobustBoost'— Robust boosting (requires Optimization Toolbox™)'LPBoost'— Linear programming boosting (requires Optimization Toolbox)'TotalBoost'— Totally corrective boosting (requires Optimization Toolbox)'RUSBoost'— Random undersampling boosting'Subspace'— Random subspace'Bag'— Bootstrap aggregation (bagging)

For classification with three or more classes:

'AdaBoostM2'— Adaptive boosting'LPBoost'— Linear programming boosting (requires Optimization Toolbox)'TotalBoost'— Totally corrective boosting (requires Optimization Toolbox)'RUSBoost'— Random undersampling boosting'Subspace'— Random subspace'Bag'— Bootstrap aggregation (bagging)

For regression:

'LSBoost'— Least-squares boosting'Bag'— Bootstrap aggregation (bagging)

For descriptions of the various algorithms, see Ensemble Algorithms.

See Suggestions for Choosing an Appropriate Ensemble Algorithm.

This table lists characteristics of the various algorithms. In the table titles:

Imbalance — Good for imbalanced data (one class has many more observations than the other)

Stop — Algorithm self-terminates

Sparse — Requires fewer weak learners than other ensemble algorithms

| Algorithm | Regression | Binary Classification | Multiclass Classification | Class Imbalance | Stop | Sparse |

|---|---|---|---|---|---|---|

Bag | × | × | × | |||

AdaBoostM1 | × | |||||

AdaBoostM2 | × | |||||

LogitBoost | × | |||||

GentleBoost | × | |||||

RobustBoost | × | |||||

LPBoost | × | × | × | × | ||

TotalBoost | × | × | × | × | ||

RUSBoost | × | × | × | |||

LSBoost | × | |||||

Subspace | × | × |

RobustBoost, LPBoost, and TotalBoost require an Optimization Toolbox license. Try TotalBoost before LPBoost, as TotalBoost can be more robust.

Suggestions for Choosing an Appropriate Ensemble Algorithm

Regression — Your choices are

LSBoostorBag. See General Characteristics of Ensemble Algorithms for the main differences between boosting and bagging.Binary Classification — Try

AdaBoostM1first, with these modifications:Data Characteristic Recommended Algorithm Many predictors SubspaceSkewed data (many more observations of one class) RUSBoostLabel noise (some training data has the wrong class) RobustBoostMany observations Avoid LPBoostandTotalBoostMulticlass Classification — Try

AdaBoostM2first, with these modifications:Data Characteristic Recommended Algorithm Many predictors SubspaceSkewed data (many more observations of one class) RUSBoostMany observations Avoid LPBoostandTotalBoost

For details of the algorithms, see Ensemble Algorithms.

General Characteristics of Ensemble Algorithms

Boostalgorithms generally use very shallow trees. This construction uses relatively little time or memory. However, for effective predictions, boosted trees might need more ensemble members than bagged trees. Therefore it is not always clear which class of algorithms is superior.Baggenerally constructs deep trees. This construction is both time consuming and memory-intensive. This also leads to relatively slow predictions.Bagcan estimate the generalization error without additional cross validation. SeeoobLoss.Except for

Subspace, all boosting and bagging algorithms are based on decision tree learners.Subspacecan use either discriminant analysis or k-nearest neighbor learners.

For details of the characteristics of individual ensemble members, see Characteristics of Classification Algorithms.

Set the Number of Ensemble Members

Choosing the size of an ensemble involves balancing speed and accuracy.

Larger ensembles take longer to train and to generate predictions.

Some ensemble algorithms can become overtrained (inaccurate) when too large.

To set an appropriate size, consider starting with several dozen to several hundred members in an ensemble, training the ensemble, and then checking the ensemble quality, as in Test Ensemble Quality. If it appears that you need more members, add them using the resume method (classification) or the resume method (regression). Repeat until adding more members does not improve ensemble quality.

Tip

For classification, the LPBoost and TotalBoost algorithms are self-terminating, meaning you do not have to investigate the appropriate ensemble size. Try setting NumLearningCycles to 500. The algorithms usually terminate with fewer members.

Prepare the Weak Learners

Currently the weak learner types are:

'Discriminant'(recommended forSubspaceensemble)'KNN'(only forSubspaceensemble)'Tree'(for any ensemble exceptSubspace)

There are two ways to set the weak learner type in an ensemble.

To create an ensemble with default weak learner options, specify the value of the

'Learners'name-value pair argument as the character vector or string scalar of the weak learner name. For example:ens = fitcensemble(X,Y,'Method','Subspace', ... 'NumLearningCycles',50,'Learners','KNN'); % or ens = fitrensemble(X,Y,'Method','Bag', ... 'NumLearningCycles',50,'Learners','Tree');

To create an ensemble with nondefault weak learner options, create a nondefault weak learner using the appropriate

templatemethod.For example, if you have missing data, and want to use classification trees with surrogate splits for better accuracy:

templ = templateTree('Surrogate','all'); ens = fitcensemble(X,Y,'Method','AdaBoostM2', ... 'NumLearningCycles',50,'Learners',templ);To grow trees with leaves containing a number of observations that is at least 10% of the sample size:

templ = templateTree('MinLeafSize',size(X,1)/10); ens = fitcensemble(X,Y,'Method','AdaBoostM2', ... 'NumLearningCycles',50,'Learners',templ);Alternatively, choose the maximal number of splits per tree:

templ = templateTree('MaxNumSplits',4); ens = fitcensemble(X,Y,'Method','AdaBoostM2', ... 'NumLearningCycles',50,'Learners',templ);You can also use nondefault weak learners in

fitrensemble.

While you can give fitcensemble and fitrensemble a cell array of learner templates, the most common usage is to give just one weak learner template.

For examples using a template, see Handle Imbalanced Data or Unequal Misclassification Costs in Classification Ensembles and Surrogate Splits.

Decision trees can handle NaN values in X. Such values are called “missing”. If you have some missing values in a row of X, a decision tree finds optimal splits using nonmissing values only. If an entire row consists of NaN, fitcensemble and fitrensemble ignore that row. If you have data with a large fraction of missing values in X, use surrogate decision splits. For examples of surrogate splits, see Handle Imbalanced Data or Unequal Misclassification Costs in Classification Ensembles and Surrogate Splits.

Common Settings for Tree Weak Learners

The depth of a weak learner tree makes a difference for training time, memory usage, and predictive accuracy. You control the depth these parameters:

MaxNumSplits— The maximal number of branch node splits isMaxNumSplitsper tree. Set large values ofMaxNumSplitsto get deep trees. The default for bagging issize(X,1) - 1. The default for boosting is1.MinLeafSize— Each leaf has at leastMinLeafSizeobservations. Set small values ofMinLeafSizeto get deep trees. The default for classification is1and5for regression.MinParentSize— Each branch node in the tree has at leastMinParentSizeobservations. Set small values ofMinParentSizeto get deep trees. The default for classification is2and10for regression.

If you supply both

MinParentSizeandMinLeafSize, the learner uses the setting that gives larger leaves (shallower trees):MinParent = max(MinParent,2*MinLeaf)If you additionally supply

MaxNumSplits, then the software splits a tree until one of the three splitting criteria is satisfied.Surrogate— Grow decision trees with surrogate splits whenSurrogateis'on'. Use surrogate splits when your data has missing values.Note

Surrogate splits cause slower training and use more memory.

PredictorSelection—fitcensemble,fitrensemble, andTreeBaggergrow trees using the standard CART algorithm [1] by default. If the predictor variables are heterogeneous or there are predictors having many levels and other having few levels, then standard CART tends to select predictors having many levels as split predictors. For split-predictor selection that is robust to the number of levels that the predictors have, consider specifying'curvature'or'interaction-curvature'. These specifications conduct chi-square tests of association between each predictor and the response or each pair of predictors and the response, respectively. The predictor that yields the minimal p-value is the split predictor for a particular node. For more details, see Choose Split Predictor Selection Technique.Note

When boosting decision trees, selecting split predictors using the curvature or interaction tests is not recommended.

Call fitcensemble or fitrensemble

The syntaxes for fitcensemble and fitrensemble are identical. For fitrensemble, the syntax is:

ens = fitrensemble(X,Y,Name,Value)

Xis the matrix of data. Each row contains one observation, and each column contains one predictor variable.Yis the responses, with the same number of observations as rows inX.Name,Valuespecify additional options using one or more name-value pair arguments. For example, you can specify the ensemble aggregation method with the'Method'argument, the number of ensemble learning cycles with the'NumLearningCycles'argument, and the type of weak learners with the'Learners'argument. For a complete list of name-value pair arguments, see thefitrensemblefunction page.

The result of fitrensemble and fitcensemble is an ensemble object, suitable for making predictions on new data. For a basic example of creating a regression ensemble, see Train Regression Ensemble. For a basic example of creating a classification ensemble, see Train Classification Ensemble.

Where to Set Name-Value Pairs

There are several name-value pairs you can pass to fitcensemble or fitrensemble, and several that apply to the weak learners (templateDiscriminant, templateKNN, and templateTree). To determine which name-value pair argument is appropriate, the ensemble or the weak learner:

Use template name-value pairs to control the characteristics of the weak learners.

Use

fitcensembleorfitrensemblename-value pair arguments to control the ensemble as a whole, either for algorithms or for structure.

For example, for an ensemble of boosted classification trees with each tree deeper than the default, set the templateTree name-value pair arguments MinLeafSize and MinParentSize to smaller values than the defaults. Or, MaxNumSplits to a larger value than the defaults. The trees are then leafier (deeper).

To name the predictors in a classification ensemble (part of the structure of the ensemble), use the PredictorNames name-value pair in fitcensemble.

References

[1] Breiman, L., J. H. Friedman, R. A. Olshen, and C. J. Stone. Classification and Regression Trees. Boca Raton, FL: Chapman & Hall, 1984.

See Also

fitcensemble | fitrensemble | oobLoss | resume | resume | templateDiscriminant | templateKNN | templateTree