Generating Pseudorandom Numbers

Pseudorandom numbers are generated by deterministic algorithms. They are "random" in the sense that, on average, they pass statistical tests regarding their distribution and correlation. They differ from true random numbers in that they are generated by an algorithm, rather than a truly random process.
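The deterministic nature of these algorithms can be seen by resetting the generator to the same seed twice. This is a minimal sketch; the seed value 42 is an arbitrary choice made for illustration.

```matlab
% Seeding the generator with the same value reproduces the same sequence.
rng(42)              % Seed chosen arbitrarily for illustration
a = rand(1,5);
rng(42)              % Reset the generator to the same seed
b = rand(1,5);
isequal(a,b)         % The two sequences are identical
```

Because the sequence depends only on the generator state, setting the seed is what makes simulation results reproducible.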

Random number generators (RNGs) like those in MATLAB® are algorithms for generating pseudorandom numbers with a specified distribution.

For more information on the GUI for generating random numbers from supported distributions, see randtool.

For more complex probability distributions, you can use the methods described in Representing Sampling Distributions Using Markov Chain Samplers.

Common Pseudorandom Number Generation Methods

Methods for generating pseudorandom numbers usually start with uniform random numbers, such as those that the MATLAB rand function produces. The methods described in this section detail how to produce random numbers from other distributions.

Direct Methods

Direct methods directly use the definition of the distribution.

For example, consider binomial random numbers. A binomial random number is the number of heads in $N$ tosses of a coin with probability $p$ of heads on any single toss. If you generate $N$ uniform random numbers on the interval (0,1) and count the number less than $p$, then the count is a binomial random number with parameters $N$ and $p$.

This function is a simple implementation of a binomial RNG using the direct approach:

    function X = directbinornd(N,p,m,n)
        X = zeros(m,n); % Preallocate memory
        for i = 1:m*n
            u = rand(N,1);
            X(i) = sum(u < p);
        end
    end

For example:

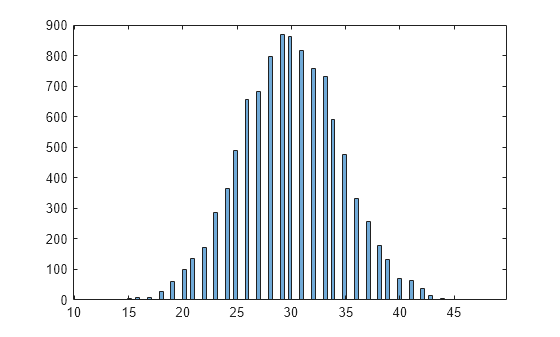

    rng('default') % For reproducibility
    X = directbinornd(100,0.3,1e4,1);
    histogram(X,101)

The binornd function uses a modified direct method, based on the definition of a binomial random variable as the sum of Bernoulli random variables.
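The loop in the direct method can also be written without an explicit loop. The following is a sketch of one possible vectorization, not the implementation that binornd uses; the variable names and parameter values are chosen here for illustration.

```matlab
rng('default')                    % For reproducibility
N = 100; p = 0.3; m = 1e4; n = 1; % Example parameters (assumed values)
U = rand(N,m*n);                  % One column of N uniforms per output value
X = reshape(sum(U < p,1),m,n);    % Count the uniforms below p in each column
mean(X(:))                        % Should be close to N*p = 30
```

This trades memory for speed, because it stores all N*m*n uniform numbers at once.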

You can easily convert the previous method to a random number generator for the Poisson distribution with parameter $\lambda$. The Poisson Distribution is the limiting case of the binomial distribution as $N$ approaches infinity, $p$ approaches zero, and $Np$ is held fixed at $\lambda$. To generate Poisson random numbers, create a version of the previous generator that inputs $\lambda$ rather than $N$ and $p$, and internally sets $N$ to some large number and $p$ to $\lambda/N$.
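A minimal sketch of such a converted generator follows. The function name directpoissrnd and the internal choice N = 1000 are assumptions made for illustration; a larger N gives a closer approximation at a higher cost.

```matlab
rng('default')                 % For reproducibility
lambda = 4;                    % Example Poisson parameter
X = directpoissrnd(lambda,1e3,1);
[mean(X) var(X)]               % Both should be close to lambda

function X = directpoissrnd(lambda,m,n)
% Hypothetical helper: approximates Poisson(lambda) by Binomial(N,lambda/N).
    N = 1000;                  % Assumed "large" number of trials
    p = lambda/N;              % Keeps N*p fixed at lambda
    X = zeros(m,n);            % Preallocate memory
    for i = 1:m*n
        X(i) = sum(rand(N,1) < p);
    end
end
```

The sample mean and variance should nearly agree, as expected for a Poisson distribution.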

The poissrnd function actually uses two direct methods:

- A waiting time method for small values of $\lambda$
- A method due to Ahrens and Dieter for larger values of $\lambda$
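To illustrate the idea behind a waiting time method, the sketch below counts how many exponential interarrival times with mean 1/lambda fit into one unit of time. It is a textbook formulation written for illustration and is not taken from the poissrnd source; the parameter values are assumptions.

```matlab
rng('default')                     % For reproducibility
lambda = 3;                        % Example Poisson parameter
m = 1e4;
X = zeros(m,1);                    % Preallocate memory
for i = 1:m
    t = -log(rand)/lambda;         % Time of the first arrival
    k = 0;
    while t <= 1                   % Count arrivals within one unit of time
        k = k + 1;
        t = t - log(rand)/lambda;  % Add the next exponential waiting time
    end
    X(i) = k;
end
mean(X)                            % Should be close to lambda
```

The expected number of loop iterations grows with lambda, which is one reason a different method is preferable for large values.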

Inversion Methods

Inversion methods are based on the observation that continuous cumulative distribution functions (cdfs) range uniformly over the interval (0,1). If $u$ is a uniform random number on (0,1), then using $X = F^{-1}(u)$ generates a random number $X$ from a continuous distribution with specified cdf $F$.
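The reasoning can be written out in one line, assuming that the cdf $F$ is continuous and strictly increasing so that $F^{-1}$ exists, and that $u$ is uniform on (0,1):

```latex
P(X \le x) = P\left(F^{-1}(u) \le x\right) = P\left(u \le F(x)\right) = F(x)
```

The last equality holds because $P(u \le t) = t$ for any $t$ in (0,1), so $X$ has cdf $F$.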

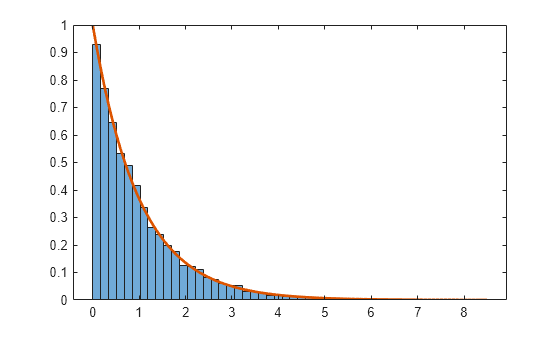

For example, the following code generates random numbers from a specific Exponential Distribution using the inverse cdf and the MATLAB® uniform random number generator rand:

    rng('default') % For reproducibility
    mu = 1;
    X = expinv(rand(1e4,1),mu);

Compare the distribution of the generated random numbers to the pdf of the specified exponential.

    numbins = 50;
    h = histogram(X,numbins,'Normalization','pdf');
    hold on
    x = linspace(h.BinEdges(1),h.BinEdges(end));
    y = exppdf(x,mu);
    plot(x,y,'LineWidth',2)
    hold off
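For the exponential distribution, the inverse cdf has a closed form, so the inversion step can be written out explicitly. The sketch below compares expinv with that formula, which follows from solving $u = 1 - e^{-x/\mu}$ for $x$; the variable names X1 and X2 are chosen here for illustration.

```matlab
rng('default')              % For reproducibility
mu = 1;
u = rand(1e4,1);
X1 = expinv(u,mu);          % Inverse cdf from the toolbox
X2 = -mu*log(1 - u);        % Closed-form inverse of F(x) = 1 - exp(-x/mu)
max(abs(X1 - X2))           % Differences are at the level of rounding error
```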

Inversion methods also work for discrete distributions. To generate a random number $X$ from a discrete distribution with probability mass vector $P(X = x_i) = p_i$ where $x_0 < x_1 < x_2 < \dots$, generate a uniform random number $u$ on (0,1) and then set $X = x_i$ if $F(x_{i-1}) < u < F(x_i)$.

For example, the following function implements an inversion method for a discrete distribution with probability mass vector $p$:

    function X = discreteinvrnd(p,m,n)
        X = zeros(m,n); % Preallocate memory
        for i = 1:m*n
            u = rand;
            I = find(u < cumsum(p));
            X(i) = min(I);
        end
    end

Use the function to generate random numbers from any discrete distribution.

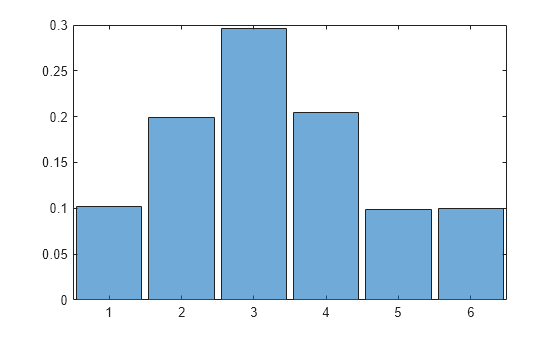

    p = [0.1 0.2 0.3 0.2 0.1 0.1]; % Probability mass function (pmf) values
    X = discreteinvrnd(p,1e4,1);

Alternatively, you can use the discretize function to generate discrete random numbers.

    X = discretize(rand(1e4,1),[0 cumsum(p)]);

Plot the histogram of the generated random numbers, and confirm that the distribution follows the specified pmf values.

    histogram(categorical(X),'Normalization','probability')
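A numerical check can complement the visual comparison. The following sketch tabulates the empirical frequencies next to the specified pmf values; the variable names edges and freq, and the step that pins the last bin edge to 1, are additions made here for illustration.

```matlab
rng('default')                          % For reproducibility
p = [0.1 0.2 0.3 0.2 0.1 0.1];          % Probability mass function (pmf) values
edges = [0 cumsum(p)];
edges(end) = 1;                         % Guard against rounding in the cumulative sum
X = discretize(rand(1e4,1),edges);
freq = accumarray(X,1,[numel(p) 1])'/numel(X); % Empirical frequency of each value
[p; freq]                               % Rows should agree to about two decimals
```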

Acceptance-Rejection Methods

The functional form of some distributions makes it difficult or time-consuming to generate random numbers using direct or inversion methods. Acceptance-rejection methods provide an alternative in these cases.

Acceptance-rejection methods begin with uniform random numbers, but require an additional random number generator. If your goal is to generate a random number from a continuous distribution with pdf $f$, acceptance-rejection methods first generate a random number from a continuous distribution with pdf $g$ satisfying $f(x) \le c\,g(x)$ for some $c$ and all $x$.

A continuous acceptance-rejection RNG proceeds as follows:

1. Chooses a density $g$.

2. Finds a constant $c$ such that $f(x)/g(x) \le c$ for all $x$.

3. Generates a uniform random number $u$.

4. Generates a random number $v$ from $g$.

5. If $c\,u \le f(v)/g(v)$, accepts and returns $v$. Otherwise, rejects $v$ and goes to step 3.

For efficiency, a "cheap" method is necessary for generating random numbers from $g$, and the scalar $c$ should be small. The expected number of iterations to produce a single random number is $c$.
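The expected iteration count follows from the probability of accepting a candidate in one pass. As a short derivation, with $v$ drawn from the proposal pdf $g$, $u$ uniform on (0,1), and $f(x) \le c\,g(x)$ for all $x$:

```latex
P(\text{accept}) = P\left(c\,u \le \frac{f(v)}{g(v)}\right)
                 = \int \frac{f(v)}{c\,g(v)}\, g(v)\, dv
                 = \frac{1}{c} \int f(v)\, dv
                 = \frac{1}{c}
```

The number of iterations until the first acceptance is therefore geometric with success probability $1/c$, which has mean $c$.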

The following function implements an acceptance-rejection method for generating random numbers from pdf $f$ given $f$, $g$, the RNG grnd for $g$, and the constant $c$:

    function X = accrejrnd(f,g,grnd,c,m,n)
        X = zeros(m,n); % Preallocate memory
        for i = 1:m*n
            accept = false;
            while accept == false
                u = rand();
                v = grnd();
                if c*u <= f(v)/g(v)
                    X(i) = v;
                    accept = true;
                end
            end
        end
    end

For example, the function $f(x) = x e^{-x^2/2}$ satisfies the conditions for a pdf on $[0,\infty)$ (nonnegative and integrates to 1). The exponential pdf with mean 1, $g(x) = e^{-x}$, dominates $f$ when scaled by a constant $c$ greater than about 2.2. Thus, you can use rand and exprnd to generate random numbers from $f$:

    f = @(x)x.*exp(-(x.^2)/2);
    g = @(x)exp(-x);
    grnd = @()exprnd(1);
    rng('default') % For reproducibility
    X = accrejrnd(f,g,grnd,2.2,1e4,1);
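The smallest valid constant for this pair of densities can be estimated by maximizing the ratio of the target pdf to the proposal pdf on a grid. This is a rough numerical sketch; the grid range [0,10] and the names cmin and idx are assumptions made for illustration.

```matlab
f = @(x)x.*exp(-(x.^2)/2);        % Target pdf
g = @(x)exp(-x);                  % Proposal pdf (exponential with mean 1)
x = linspace(0,10,1e5);           % Grid assumed wide enough to contain the maximum
[cmin,idx] = max(f(x)./g(x));     % Largest ratio on the grid
cmin                              % Approximately 2.204
x(idx)                            % Approximately 1.618
```

The maximum is marginally above 2.2, so the constant 2.2 used in the example is a close approximation to the bound, and a value such as 2.21 would satisfy it strictly.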

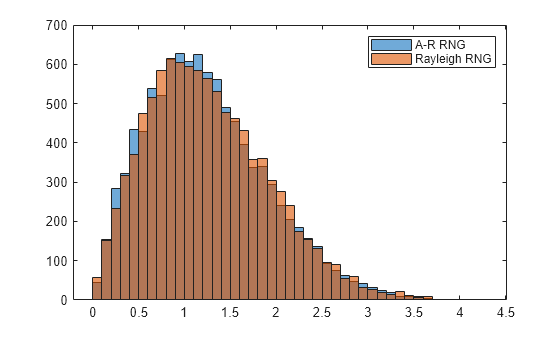

The pdf $f$ is actually a Rayleigh Distribution with scale parameter 1. This example compares the distribution of random numbers generated by the acceptance-rejection method with those generated by raylrnd:

    Y = raylrnd(1,1e4,1);
    histogram(X)
    hold on
    histogram(Y)
    legend('A-R RNG','Rayleigh RNG')

The raylrnd function uses a transformation method, expressing a Rayleigh random variable in terms of a chi-square random variable, which you compute using randn.
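As an illustration of the transformation idea, a Rayleigh random variable with parameter b can be written as b times the square root of a chi-square random variable with two degrees of freedom, which is the sum of two squared standard normal numbers. The sketch below is written for illustration and is not necessarily how raylrnd is coded internally.

```matlab
rng('default')                 % For reproducibility
b = 1;                         % Rayleigh parameter (example value)
Z = randn(1e4,2);              % Two independent standard normals per sample
X = b*sqrt(sum(Z.^2,2));       % Square root of a chi-square variable with 2 dof
mean(X)                        % Theoretical mean is b*sqrt(pi/2), about 1.2533
```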

Acceptance-rejection methods also work for discrete distributions. In this case, the goal is to generate random numbers from a distribution with probability mass $P_p(X = i) = p_i$, assuming that you have a method for generating random numbers from a distribution with probability mass $P_q(X = i) = q_i$. The RNG proceeds as follows:

1. Chooses a density $P_q$.

2. Finds a constant $c$ such that $p_i/q_i \le c$ for all $i$.

3. Generates a uniform random number $u$.

4. Generates a random number $v$ from $P_q$.

5. If $c\,u \le p_v/q_v$, accepts and returns $v$. Otherwise, rejects $v$ and goes to step 3.
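The discrete procedure can be sketched in a few lines. In this example the target pmf p is chosen for illustration, and the proposal distribution is assumed to be discrete uniform on 1 through 6, sampled with randi; the variable names are also assumptions.

```matlab
rng('default')                       % For reproducibility
p = [0.1 0.2 0.3 0.2 0.1 0.1];       % Target pmf (example values)
q = ones(1,6)/6;                     % Proposal pmf: discrete uniform on 1..6
c = max(p./q);                       % Smallest constant with p(i)/q(i) <= c
m = 1e4;
X = zeros(m,1);                      % Preallocate memory
for i = 1:m
    accept = false;
    while ~accept
        u = rand;                    % Uniform random number
        v = randi(6);                % Candidate drawn from the proposal pmf
        if c*u <= p(v)/q(v)
            X(i) = v;                % Accept the candidate
            accept = true;
        end
    end
end
freq = accumarray(X,1,[6 1])'/m;     % Empirical frequencies
[p; freq]                            % Rows should agree to about two decimals
```

With these values c is about 1.8, so on average slightly fewer than two candidates are drawn per accepted number.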