alexnet

(Not recommended) AlexNet convolutional neural network

alexnet is not recommended. Use the imagePretrainedNetwork function instead and specify the "alexnet" model. For more information, see Version History.
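The recommended replacement can be sketched as follows. This is a minimal example, assuming MATLAB R2024a or later (where imagePretrainedNetwork, minibatchpredict, and scores2label are available), the AlexNet support package, and imresize on your path. It classifies the peppers.png sample image that ships with MATLAB.

```matlab
% Sketch: classify an image with the recommended imagePretrainedNetwork API.
% Assumes R2024a or later and the AlexNet support package.
[net, classNames] = imagePretrainedNetwork("alexnet");

% Resize the sample image to the network input size (227-by-227).
inputSize = net.Layers(1).InputSize;
I = imread("peppers.png");
X = single(imresize(I, inputSize(1:2)));

% The returned dlnetwork outputs class scores; convert them to a label.
scores = minibatchpredict(net, X);
label = scores2label(scores, classNames)
```

Unlike alexnet, which returns a SeriesNetwork that you can pass to classify, imagePretrainedNetwork returns a dlnetwork, so the label comes from scores2label rather than from the network itself.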

Description

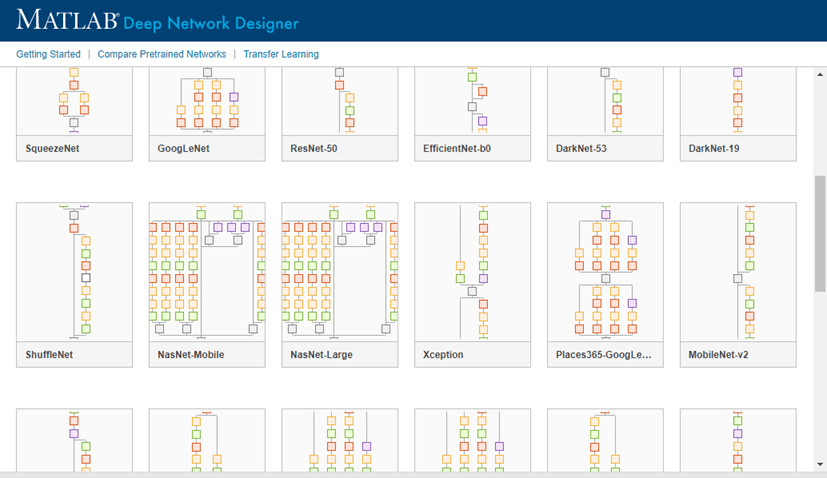

AlexNet is a convolutional neural network that is 8 layers deep. You can load a pretrained version of the network trained on more than a million images from the ImageNet database [1]. The pretrained network can classify images into 1000 object categories, such as keyboard, mouse, pencil, and many animals. As a result, the network has learned rich feature representations for a wide range of images. The network has an image input size of 227-by-227. For more pretrained networks in MATLAB®, see Pretrained Deep Neural Networks.
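To confirm the input size and the number of categories described above, you can inspect the first and last layers of the pretrained network. A minimal sketch, assuming the AlexNet support package is installed:

```matlab
% Load the pretrained network (a SeriesNetwork).
net = alexnet;

% The image input layer defines the expected input size.
inputSize = net.Layers(1).InputSize      % 227-by-227 RGB images

% The classification output layer stores the ImageNet category names.
classNames = net.Layers(end).Classes;
numClasses = numel(classNames)
classNames(1:5)                           % first few category names
```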

For a free hands-on introduction to practical deep learning methods, see Deep Learning Onramp.

net = alexnet returns an AlexNet network trained on the ImageNet data set.

This function requires the Deep Learning Toolbox™ Model for AlexNet Network support package. If this support package is not installed, the function provides a download link. Alternatively, see Deep Learning Toolbox Model for AlexNet Network.


net = alexnet('Weights','imagenet') returns an AlexNet network trained on the ImageNet data set. This syntax is equivalent to net = alexnet.
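The 'imagenet' option loads the same pretrained weights as calling alexnet with no arguments. A quick sketch that compares the first convolution layer from both calls, assuming the support package is installed and that layer 2 is the first convolution layer (conv1), as in the AlexNet layer order:

```matlab
% Load the network with both syntaxes.
net1 = alexnet;
net2 = alexnet('Weights','imagenet');

% Layer 2 is the first convolution layer (conv1) in AlexNet.
conv1a = net1.Layers(2);
conv1b = net2.Layers(2);

% Both calls should return identical learned parameters.
sameWeights = isequal(conv1a.Weights, conv1b.Weights) && ...
              isequal(conv1a.Bias, conv1b.Bias)
```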

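layers = alexnet('Weights','none') returns the untrained AlexNet network architecture. The untrained model does not require the support package.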
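A minimal sketch of working with the untrained architecture. The layers carry no learned parameters until you train them (for example, with trainNetwork), and analyzeNetwork opens an interactive view of the layers and their activation sizes.

```matlab
% Return only the layer architecture; no pretrained weights are loaded.
layers = alexnet('Weights','none');

% Untrained convolution layers have empty learnable parameters.
untrained = isempty(layers(2).Weights)

% Open an interactive summary of the architecture.
analyzeNetwork(layers)
```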

Examples
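Classify an image using the alexnet syntax. A minimal sketch, assuming the support package is installed and imresize is available; peppers.png is a sample image included with MATLAB.

```matlab
% Load the pretrained network and read its input size (227-by-227-by-3).
net = alexnet;
inputSize = net.Layers(1).InputSize;

% Read a sample image and resize it to match the input layer.
I = imread("peppers.png");
I = imresize(I, inputSize(1:2));

% classify returns the predicted label and the scores for all classes.
[label, scores] = classify(net, I);

% Show the image with its predicted label and top score.
imshow(I)
title(string(label) + ", " + num2str(100*max(scores), 3) + "%")
```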

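Output Arguments

net: Pretrained AlexNet convolutional neural network, returned as a SeriesNetwork object.

layers: Untrained AlexNet convolutional neural network architecture, returned as a Layer array.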


References

[1] ImageNet. http://www.image-net.org.

[2] Russakovsky, O., Deng, J., Su, H., et al. "ImageNet Large Scale Visual Recognition Challenge." International Journal of Computer Vision (IJCV). Vol. 115, Issue 3, 2015, pp. 211–252.

[3] Krizhevsky, Alex, Ilya Sutskever, and Geoffrey E. Hinton. "ImageNet Classification with Deep Convolutional Neural Networks." Communications of the ACM 60, no. 6 (May 24, 2017): 84–90. https://doi.org/10.1145/3065386.

[4] BVLC AlexNet Model. https://github.com/BVLC/caffe/tree/master/models/bvlc_alexnet